Install apache-Flink

# pip install apache-Flink -i https://pypi.tuna.tsinghua.edu.cn/simple/

Looking in indexes: https://pypi.tuna.tsinghua.edu.cn/simple/

Collecting apache-Flink

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/7f/a3/ad50270f0f9b7738922a170c47ec18061224a5afa0864e8749d27b1d5501/apache_flink-1.20.0-cp38-cp38-manylinux1_x86_64.whl (6.8 MB)

|████████████████████████████████| 6.8 MB 2.3 MB/s eta 0:00:01

Collecting avro-python3!=1.9.2,>=1.8.1

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/cc/97/7a6970380ca8db9139a3cc0b0e3e0dd3e4bc584fb3644e1d06e71e1a55f0/avro-python3-1.10.2.tar.gz (38 kB)

Collecting ruamel.yaml>=0.18.4

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/73/67/8ece580cc363331d9a53055130f86b096bf16e38156e33b1d3014fffda6b/ruamel.yaml-0.18.6-py3-none-any.whl (117 kB)

|████████████████████████████████| 117 kB 10.1 MB/s eta 0:00:01

Requirement already satisfied: python-dateutil<3,>=2.8.0 in /usr/local/lib/python3.8/dist-packages (from apache-Flink) (2.8.2)

Collecting numpy>=1.22.4

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/98/5d/5738903efe0ecb73e51eb44feafba32bdba2081263d40c5043568ff60faf/numpy-1.24.4-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (17.3 MB)

|████████████████████████████████| 17.3 MB 2.3 MB/s eta 0:00:01

Requirement already satisfied: pytz>=2018.3 in /usr/local/lib/python3.8/dist-packages (from apache-Flink) (2022.2.1)

Collecting pemja==0.4.1; platform_system != "Windows"

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/23/4c/422c99d5d2b823309888714185dc4467a935a25fb1e265c604855d0ea4b9/pemja-0.4.1-cp38-cp38-manylinux1_x86_64.whl (325 kB)

|████████████████████████████████| 325 kB 9.8 MB/s eta 0:00:01

Collecting py4j==0.10.9.7

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/10/30/a58b32568f1623aaad7db22aa9eafc4c6c194b429ff35bdc55ca2726da47/py4j-0.10.9.7-py2.py3-none-any.whl (200 kB)

|████████████████████████████████| 200 kB 10.0 MB/s eta 0:00:01

Collecting protobuf>=3.19.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/c0/be/bac52549cab1aaab112d380b3f2a80a348ba7083a80bf4ff4be4fb5a6729/protobuf-5.28.1-cp38-abi3-manylinux2014_x86_64.whl (316 kB)

|████████████████████████████████| 316 kB 10.0 MB/s eta 0:00:01

Collecting apache-beam<2.49.0,>=2.43.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/9d/1b/59d9717241170b707fbdd82fa74a676260e7fa03fecfa7fafd58f0c178e1/apache_beam-2.48.0-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (14.8 MB)

|████████████████████████████████| 14.8 MB 2.2 MB/s eta 0:00:01

Collecting requests>=2.26.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/f9/9b/335f9764261e915ed497fcdeb11df5dfd6f7bf257d4a6a2a686d80da4d54/requests-2.32.3-py3-none-any.whl (64 kB)

|████████████████████████████████| 64 kB 8.1 MB/s eta 0:00:01

Collecting cloudpickle>=2.2.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/96/43/dae06432d0c4b1dc9e9149ad37b4ca8384cf6eb7700cd9215b177b914f0a/cloudpickle-3.0.0-py3-none-any.whl (20 kB)

Collecting apache-flink-libraries<1.20.1,>=1.20.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/1f/31/80cfa34b6a53a5c19d66faf3e7d29cb0689a69c128a381e584863eb9669a/apache-flink-libraries-1.20.0.tar.gz (231.5 MB)

|████████████████████████████████| 231.5 MB 84 kB/s eta 0:00:01

Collecting fastavro!=1.8.0,>=1.1.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/3a/6a/1998c619c6e59a35d0a5df49681f0cdfb6dbbeabb0d24d26e938143c655f/fastavro-1.9.7-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (3.2 MB)

|████████████████████████████████| 3.2 MB 450 kB/s eta 0:00:01

Collecting pyarrow>=5.0.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/3f/08/bc497130789833de09e345e3ce4647e3ce86517c4f70f2144f0367ca378b/pyarrow-17.0.0-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (40.0 MB)

|████████████████████████████████| 40.0 MB 1.3 MB/s eta 0:00:01

Collecting pandas>=1.3.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/f8/7f/5b047effafbdd34e52c9e2d7e44f729a0655efafb22198c45cf692cdc157/pandas-2.0.3-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (12.4 MB)

|████████████████████████████████| 12.4 MB 1.2 MB/s eta 0:00:01

Collecting httplib2>=0.19.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/a8/6c/d2fbdaaa5959339d53ba38e94c123e4e84b8fbc4b84beb0e70d7c1608486/httplib2-0.22.0-py3-none-any.whl (96 kB)

|████████████████████████████████| 96 kB 2.9 MB/s eta 0:00:01

Collecting ruamel.yaml.clib>=0.2.7; platform_python_implementation == "CPython" and python_version < "3.13"

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/22/fa/b2a8fd49c92693e9b9b6b11eef4c2a8aedaca2b521ab3e020aa4778efc23/ruamel.yaml.clib-0.2.8-cp38-cp38-manylinux_2_5_x86_64.manylinux1_x86_64.whl (596 kB)

|████████████████████████████████| 596 kB 2.6 MB/s eta 0:00:01

Requirement already satisfied: six>=1.5 in /usr/lib/python3/dist-packages (from python-dateutil<3,>=2.8.0->apache-Flink) (1.14.0)

Collecting find-libpython

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/1d/89/6b4624122d5c61a86e8aebcebd377866338b705ce4f115c45b046dc09b99/find_libpython-0.4.0-py3-none-any.whl (8.7 kB)

Collecting proto-plus<2,>=1.7.1

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/7c/6f/db31f0711c0402aa477257205ce7d29e86a75cb52cd19f7afb585f75cda0/proto_plus-1.24.0-py3-none-any.whl (50 kB)

|████████████████████████████████| 50 kB 3.5 MB/s eta 0:00:01

Collecting grpcio!=1.48.0,<2,>=1.33.1

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/ae/44/f8975d2719dbf58d4a036f936b6c2adeddc7d2a10c2f7ca6ea87ab4c5086/grpcio-1.66.1-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (5.8 MB)

|████████████████████████████████| 5.8 MB 1.3 MB/s eta 0:00:01

Collecting fasteners<1.0,>=0.3

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/61/bf/fd60001b3abc5222d8eaa4a204cd8c0ae78e75adc688f33ce4bf25b7fafa/fasteners-0.19-py3-none-any.whl (18 kB)

Collecting crcmod<2.0,>=1.7

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/6b/b0/e595ce2a2527e169c3bcd6c33d2473c1918e0b7f6826a043ca1245dd4e5b/crcmod-1.7.tar.gz (89 kB)

|████████████████████████████████| 89 kB 5.2 MB/s eta 0:00:01

Requirement already satisfied: typing-extensions>=3.7.0 in /usr/local/lib/python3.8/dist-packages (from apache-beam<2.49.0,>=2.43.0->apache-Flink) (4.3.0)

Collecting pydot<2,>=1.2.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/ea/76/75b1bb82e9bad3e3d656556eaa353d8cd17c4254393b08ec9786ac8ed273/pydot-1.4.2-py2.py3-none-any.whl (21 kB)

Requirement already satisfied: regex>=2020.6.8 in /usr/local/lib/python3.8/dist-packages (from apache-beam<2.49.0,>=2.43.0->apache-Flink) (2022.10.31)

Collecting hdfs<3.0.0,>=2.1.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/29/c7/1be559eb10cb7cac0d26373f18656c8037553619ddd4098e50b04ea8b4ab/hdfs-2.7.3.tar.gz (43 kB)

|████████████████████████████████| 43 kB 5.2 MB/s eta 0:00:01

Collecting orjson<4.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/25/13/a66f4873ed57832aab57dd8b49c91c4c22b35fb1fa0d1dce3bf8928f2fe0/orjson-3.10.7-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (141 kB)

|████████████████████████████████| 141 kB 4.8 MB/s eta 0:00:01

Collecting objsize<0.7.0,>=0.6.1

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/ab/37/e5765c22a491e1cd23fb83059f73e478a2c45a464b2d61c98ef5a8d0681c/objsize-0.6.1-py3-none-any.whl (9.3 kB)

Collecting zstandard<1,>=0.18.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/1c/4b/be9f3f9ed33ff4d5e578cf167c16ac1d8542232d5e4831c49b615b5918a6/zstandard-0.23.0-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (5.4 MB)

|████████████████████████████████| 5.4 MB 1.3 MB/s eta 0:00:01

Collecting pymongo<5.0.0,>=3.8.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/2a/72/77445354da27437534ee674faf55a2ef4bfc6ed9b28cbe743d6e7e4c2c61/pymongo-4.8.0-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (685 kB)

|████████████████████████████████| 685 kB 5.0 MB/s eta 0:00:01

Collecting dill<0.3.2,>=0.3.1.1

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/c7/11/345f3173809cea7f1a193bfbf02403fff250a3360e0e118a1630985e547d/dill-0.3.1.1.tar.gz (151 kB)

|████████████████████████████████| 151 kB 4.9 MB/s eta 0:00:01

Requirement already satisfied: idna<4,>=2.5 in /usr/lib/python3/dist-packages (from requests>=2.26.0->apache-Flink) (2.8)

Requirement already satisfied: urllib3<3,>=1.21.1 in /usr/lib/python3/dist-packages (from requests>=2.26.0->apache-Flink) (1.25.8)

Requirement already satisfied: certifi>=2017.4.17 in /usr/lib/python3/dist-packages (from requests>=2.26.0->apache-Flink) (2019.11.28)

Collecting charset-normalizer<4,>=2

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/3d/09/d82fe4a34c5f0585f9ea1df090e2a71eb9bb1e469723053e1ee9f57c16f3/charset_normalizer-3.3.2-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (141 kB)

|████████████████████████████████| 141 kB 4.9 MB/s eta 0:00:01

Collecting tzdata>=2022.1

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/65/58/f9c9e6be752e9fcb8b6a0ee9fb87e6e7a1f6bcab2cdc73f02bb7ba91ada0/tzdata-2024.1-py2.py3-none-any.whl (345 kB)

|████████████████████████████████| 345 kB 4.9 MB/s eta 0:00:01

Requirement already satisfied: pyparsing!=3.0.0,!=3.0.1,!=3.0.2,!=3.0.3,<4,>=2.4.2; python_version > "3.0" in /usr/local/lib/python3.8/dist-packages (from httplib2>=0.19.0->apache-Flink) (3.0.9)

Collecting docopt

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/a2/55/8f8cab2afd404cf578136ef2cc5dfb50baa1761b68c9da1fb1e4eed343c9/docopt-0.6.2.tar.gz (25 kB)

Collecting dnspython<3.0.0,>=1.16.0

Downloading https://pypi.tuna.tsinghua.edu.cn/packages/87/a1/8c5287991ddb8d3e4662f71356d9656d91ab3a36618c3dd11b280df0d255/dnspython-2.6.1-py3-none-any.whl (307 kB)

|████████████████████████████████| 307 kB 4.9 MB/s eta 0:00:01

Building wheels for collected packages: avro-python3, apache-flink-libraries, crcmod, hdfs, dill, docopt

Building wheel for avro-python3 (setup.py) ... done

Created wheel for avro-python3: filename=avro_python3-1.10.2-py3-none-any.whl size=44009 sha256=b7630bedfef2c8bd38772ef6e0f6f62520cfc6ee7fbc554f7b1b7ae64eb2229e

Stored in directory: /root/.cache/pip/wheels/6b/28/4f/3f68740c0cd12549e2ba9fcfad15913841346bc927af36903e

Building wheel for apache-flink-libraries (setup.py) ... done

Created wheel for apache-flink-libraries: filename=apache_flink_libraries-1.20.0-py2.py3-none-any.whl size=231628008 sha256=2a433e291e2192fa73efb6780bb4753f8c9ecddf7a33036afd02583a42bbc424

Stored in directory: /root/.cache/pip/wheels/1d/6c/ea/da3305a119e44581bd4c0c0e92a376fd0615426227a071894d

Building wheel for crcmod (setup.py) ... done

Created wheel for crcmod: filename=crcmod-1.7-cp38-cp38-linux_x86_64.whl size=35981 sha256=60feb55c2aec53cb53b2c76013f7b37680467cb7c24e3e9e63e2ddcca37960c7

Stored in directory: /root/.cache/pip/wheels/ee/bf/82/ac509f3b383e310a168c1da020cbc62d98c03a6c7c74babc63

Building wheel for hdfs (setup.py) ... done

Created wheel for hdfs: filename=hdfs-2.7.3-py3-none-any.whl size=34321 sha256=52a3f2b630c182293adaa98f1d54c73d6543155a6c8b536b723ba81bcd2c0c7c

Stored in directory: /root/.cache/pip/wheels/71/d9/87/dc2129ee8e18b4b82cfc6be6dbba6f5b7091a45cfa53b5855d

Building wheel for dill (setup.py) ... done

Created wheel for dill: filename=dill-0.3.1.1-py3-none-any.whl size=78530 sha256=8cb55478bc96ba4978d75fcc54972b21f8334d990691267a53e684c2c89eb1e9

Stored in directory: /root/.cache/pip/wheels/8b/d5/ad/893b2f2db5de6f4c281a9d16abeb0618f5249620f845a857b4

Building wheel for docopt (setup.py) ... done

Created wheel for docopt: filename=docopt-0.6.2-py2.py3-none-any.whl size=13704 sha256=bf6de70865de15338a3da5fb54a47de343b677743fa989a2289bbf6557330136

Stored in directory: /root/.cache/pip/wheels/93/51/4b/6e0f7cba524fbe1e9e973f4bc9be5f3ab1e38346d7d63505f4

Successfully built avro-python3 apache-flink-libraries crcmod hdfs dill docopt

ERROR: apache-beam 2.48.0 has requirement cloudpickle~=2.2.1, but you'll have cloudpickle 3.0.0 which is incompatible.

ERROR: apache-beam 2.48.0 has requirement protobuf<4.24.0,>=3.20.3, but you'll have protobuf 5.28.1 which is incompatible.

ERROR: apache-beam 2.48.0 has requirement pyarrow<12.0.0,>=3.0.0, but you'll have pyarrow 17.0.0 which is incompatible.

Installing collected packages: avro-python3, ruamel.yaml.clib, ruamel.yaml, numpy, find-libpython, pemja, py4j, protobuf, cloudpickle, proto-plus, grpcio, fastavro, fasteners, crcmod, charset-normalizer, requests, pyarrow, pydot, docopt, hdfs, orjson, objsize, zstandard, dnspython, pymongo, httplib2, dill, apache-beam, apache-flink-libraries, tzdata, pandas, apache-Flink

Attempting uninstall: numpy

Found existing installation: numpy 1.21.6

Uninstalling numpy-1.21.6:

Successfully uninstalled numpy-1.21.6

Attempting uninstall: requests

Found existing installation: requests 2.22.0

Not uninstalling requests at /usr/lib/python3/dist-packages, outside environment /usr

Can't uninstall 'requests'. No files were found to uninstall.

Attempting uninstall: dill

Found existing installation: dill 0.3.7

Uninstalling dill-0.3.7:

Successfully uninstalled dill-0.3.7

Attempting uninstall: pandas

Found existing installation: pandas 1.2.0

Uninstalling pandas-1.2.0:

Successfully uninstalled pandas-1.2.0

Successfully installed apache-Flink-1.20.0 apache-beam-2.48.0 apache-flink-libraries-1.20.0 avro-python3-1.10.2 charset-normalizer-3.3.2 cloudpickle-3.0.0 crcmod-1.7 dill-0.3.1.1 dnspython-2.6.1 docopt-0.6.2 fastavro-1.9.7 fasteners-0.19 find-libpython-0.4.0 grpcio-1.66.1 hdfs-2.7.3 httplib2-0.22.0 numpy-1.24.4 objsize-0.6.1 orjson-3.10.7 pandas-2.0.3 pemja-0.4.1 proto-plus-1.24.0 protobuf-5.28.1 py4j-0.10.9.7 pyarrow-17.0.0 pydot-1.4.2 pymongo-4.8.0 requests-2.32.3 ruamel.yaml-0.18.6 ruamel.yaml.clib-0.2.8 tzdata-2024.1 zstandard-0.23.0
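The three ERROR lines in the log above say that apache-beam 2.48.0 expects narrower version ranges for cloudpickle, protobuf and pyarrow than the versions pip actually installed. Running `pip check` reports such conflicts directly. As a rough sketch of the same idea in Python, the helper names below (`parse`, `out_of_range`, `installed_versions`) are made up for illustration, the ranges are copied by hand from those ERROR lines, and the version parsing is deliberately simplified:

```python
from importlib import metadata

# Ranges copied from the ERROR lines in the pip log (apache-beam 2.48.0).
# Each entry is (inclusive lower bound, exclusive upper bound).
# cloudpickle~=2.2.1 is read here as >=2.2.1 and <2.3.
BEAM_RANGES = {
    "cloudpickle": ((2, 2, 1), (2, 3)),
    "protobuf": ((3, 20, 3), (4, 24, 0)),
    "pyarrow": ((3, 0, 0), (12, 0, 0)),
}


def parse(version):
    """Turn '5.28.1' into (5, 28, 1); non-numeric parts are dropped (a simplification)."""
    return tuple(int(part) for part in version.split(".") if part.isdigit())


def out_of_range(installed):
    """Return the package names whose version lies outside [low, high)."""
    bad = []
    for name, (low, high) in BEAM_RANGES.items():
        version = installed.get(name)
        if version is not None and not (low <= parse(version) < high):
            bad.append(name)
    return bad


def installed_versions(names):
    """Look up installed versions; packages that are not installed are skipped."""
    found = {}
    for name in names:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            pass
    return found
```

Calling `out_of_range(installed_versions(BEAM_RANGES))` in the environment from the log should list the packages that apache-beam complains about. Whether these mismatches matter for a given job is a separate question that the log does not answer.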

Installing with pip also brings in Flink itself.

# find / -name flink 2>/dev/null

/usr/local/lib/python3.8/dist-packages/apache_beam/examples/flink

/usr/local/lib/python3.8/dist-packages/apache_beam/io/flink

/usr/local/lib/python3.8/dist-packages/pyflink/bin/flink

/usr/local/lib/python3.8/dist-packages/pyflink/bin/flink is the flink executable.
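Scanning the whole filesystem with find works, but the same path can also be derived from the installed pyflink package. The sketch below assumes the layout seen in the find output (a bin/flink file inside the pyflink package directory). The function names are hypothetical.

```python
import importlib.util
from pathlib import Path


def flink_bin_from_package(package_dir):
    """Return the bin/flink path under a pyflink package directory."""
    return Path(package_dir) / "bin" / "flink"


def find_flink_bin():
    """Locate bin/flink via the importable pyflink package, or None if it is absent."""
    spec = importlib.util.find_spec("pyflink")
    if spec is None or not spec.submodule_search_locations:
        return None  # pyflink is not installed for this interpreter
    package_dir = list(spec.submodule_search_locations)[0]
    candidate = flink_bin_from_package(package_dir)
    return candidate if candidate.exists() else None
```

`find_flink_bin()` returns None when pyflink is not importable, so the caller can fall back to the find command.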

# /usr/local/lib/python3.8/dist-packages/pyflink/bin/flink run -h

Action "run" compiles and runs a program.

Syntax: run [OPTIONS] <jar-file> <arguments>

"run" action options:

-c,--class <classname> Class with the program entry point ("main()" method). Only needed if the JAR file does not specify the class in its manifest.

-C,--classpath <url> Adds a URL to each user code classloader on all nodes in the cluster. The paths must specify a protocol (e.g. file://) and be accessible on all nodes (e.g. by means of a NFS share). You can use this option multiple times for specifying more than one URL. The protocol must be supported by the {@link java.net.URLClassLoader}.

-cm,--claimMode <arg> Defines how should we restore from the given savepoint. Supported options: [claim - claim ownership of the savepoint and delete once it is subsumed, no_claim (default) - do not claim ownership, the first checkpoint will not reuse any files from the restored one, legacy (deprecated) - the old behaviour, do not assume ownership of the savepoint files, but can reuse some shared files.

-d,--detached If present, runs the job in detached mode

-n,--allowNonRestoredState Allow to skip savepoint state that cannot be restored. You need to allow this if you removed an operator from your program that was part of the program when the savepoint was triggered.

-p,--parallelism <parallelism> The parallelism with which to run the program. Optional flag to override the default value specified in the configuration.

-py,--python <pythonFile> Python script with the program entry point. The dependent resources can be configured with the `--pyFiles` option.

-pyarch,--pyArchives <arg> Add python archive files for job. The archive files will be extracted to the working directory of python UDF worker. For each archive file, a target directory be specified. If the target directory name is specified, the archive file will be extracted to a directory with the specified name. Otherwise, the archive file will be extracted to a directory with the same name of the archive file. The files uploaded via this option are accessible via relative path. '#' could be used as the separator of the archive file path and the target directory name. Comma (',') could be used as the separator to specify multiple archive files. This option can be used to upload the virtual environment, the data files used in Python UDF (e.g., --pyArchives file:///tmp/py37.zip,file:///tmp/data.zip#data --pyExecutable py37.zip/py37/bin/python). The data files could be accessed in Python UDF, e.g.: f = open('data/data.txt', 'r').

-pyclientexec,--pyClientExecutable <arg> The path of the Python interpreter used to launch the Python process when submitting the Python jobs via "flink run" or compiling the Java/Scala jobs containing Python UDFs.

-pyexec,--pyExecutable <arg> Specify the path of the python interpreter used to execute the python UDF worker (e.g.: --pyExecutable /usr/local/bin/python3). The python UDF worker depends on Python 3.8+, Apache Beam (version == 2.43.0), Pip (version >= 20.3) and SetupTools (version >= 37.0.0). Please ensure that the specified environment meets the above requirements.

-pyfs,--pyFiles <pythonFiles> Attach custom files for job. The standard resource file suffixes such as .py/.egg/.zip/.whl or directory are all supported. These files will be added to the PYTHONPATH of both the local client and the remote python UDF worker. Files suffixed with .zip will be extracted and added to PYTHONPATH. Comma (',') could be used as the separator to specify multiple files (e.g., --pyFiles file:///tmp/myresource.zip,hdfs:///$namenode_address/myresource2.zip).

-pym,--pyModule <pythonModule> Python module with the program entry point. This option must be used in conjunction with `--pyFiles`.

-pypath,--pyPythonPath <arg> Specify the path of the python installation in worker nodes.(e.g.: --pyPythonPath /python/lib64/python3.7/).User can specify multiple paths using the separator ":".

-pyreq,--pyRequirements <arg> Specify a requirements.txt file which defines the third-party dependencies. These dependencies will be installed and added to the PYTHONPATH of the python UDF worker. A directory which contains the installation packages of these dependencies could be specified optionally. Use '#' as the separator if the optional parameter exists (e.g., --pyRequirements file:///tmp/requirements.txt#file:///tmp/cached_dir).

-rm,--restoreMode <arg> This option is deprecated, please use 'claimMode' instead.

-s,--fromSavepoint <savepointPath> Path to a savepoint to restore the job from (for example hdfs:///flink/savepoint-1537).

-sae,--shutdownOnAttachedExit If the job is submitted in attached mode, perform a best-effort cluster shutdown when the CLI is terminated abruptly, e.g., in response to a user interrupt, such as typing Ctrl + C.

Options for Generic CLI mode:

-D <property=value> Allows specifying multiple generic configuration options. The available options can be found at https://nightlies.apache.org/flink/flink-docs-stable/ops/config.html

-e,--executor <arg> DEPRECATED: Please use the -t option instead which is also available with the "Application Mode". The name of the executor to be used for executing the given job, which is equivalent to the "execution.target" config option. The currently available executors are: "remote", "local", "kubernetes-session", "yarn-per-job" (deprecated), "yarn-session".

-t,--target <arg> The deployment target for the given application, which is equivalent to the "execution.target" config option. For the "run" action the currently available targets are: "remote", "local", "kubernetes-session", "yarn-per-job" (deprecated), "yarn-session". For the "run-application" action the currently available targets are: "kubernetes-application".

Options for yarn-cluster mode:

-m,--jobmanager <arg> Set to yarn-cluster to use YARN execution mode.

-yid,--yarnapplicationId <arg> Attach to running YARN session

-z,--zookeeperNamespace <arg> Namespace to create the Zookeeper sub-paths for high availability mode

Options for default mode:

-D <property=value> Allows specifying multiple generic configuration options. The available options can be found at https://nightlies.apache.org/flink/flink-docs-stable/ops/config.html

-m,--jobmanager <arg> Address of the JobManager to which to connect. Use this flag to connect to a different JobManager than the one specified in the configuration. Attention: This option is respected only if the high-availability configuration is NONE.

-z,--zookeeperNamespace <arg> Namespace to create the Zookeeper sub-paths for high availability mode

Submit a job with flink run

!cd /usr/local/lib/python3.8/dist-packages/pyflink/ && ./bin/flink run \

--jobmanager <<jobmanagerHost>>:8081 \

--python examples/table/word_count.py
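If the submission needs to be scripted rather than typed into a notebook cell, the same command can be assembled as an argument list and handed to subprocess. This is only a sketch: the function names are hypothetical, and the JobManager address is a made-up placeholder standing in for <<jobmanagerHost>>:8081. The only flags used are --jobmanager and --python, both of which appear in the help output above.

```python
import shlex
import subprocess


def build_flink_run_cmd(flink_bin, jobmanager, script):
    """Assemble the argument list for `flink run` with a Python entry point."""
    return [flink_bin, "run", "--jobmanager", jobmanager, "--python", script]


def submit(cmd, cwd):
    """Run the command from cwd; raises CalledProcessError on a non-zero exit."""
    return subprocess.run(cmd, cwd=cwd, check=True)


cmd = build_flink_run_cmd(
    "./bin/flink",
    "jobmanager.example.internal:8081",  # hypothetical address, replace with your own
    "examples/table/word_count.py",
)
# Print the shell-quoted form for inspection before actually submitting.
print(shlex.join(cmd))
```

Calling `submit(cmd, cwd="/usr/local/lib/python3.8/dist-packages/pyflink")` would then run it from the same directory as the notebook command. Passing a list instead of a shell string avoids quoting problems in the script path.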

If your execution environment has no python command, for example because only python3 is installed, the submission fails with: Cannot run program "python": error=2, No such file or directory

org.apache.flink.client.program.ProgramAbortException: java.io.IOException: Cannot run program "python": error=2, No such file or directory

at org.apache.flink.client.python.PythonDriver.main(PythonDriver.java:134)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.flink.client.program.PackagedProgram.callMainMethod(PackagedProgram.java:356)

at org.apache.flink.client.program.PackagedProgram.invokeInteractiveModeForExecution(PackagedProgram.java:223)

at org.apache.flink.client.ClientUtils.executeProgram(ClientUtils.java:113)

at org.apache.flink.client.cli.CliFrontend.executeProgram(CliFrontend.java:1026)

at org.apache.flink.client.cli.CliFrontend.run(CliFrontend.java:247)

at org.apache.flink.client.cli.CliFrontend.parseAndRun(CliFrontend.java:1270)

at org.apache.flink.client.cli.CliFrontend.lambda$mainInternal$10(CliFrontend.java:1367)

at org.apache.flink.runtime.security.contexts.NoOpSecurityContext.runSecured(NoOpSecurityContext.java:28)

at org.apache.flink.client.cli.CliFrontend.mainInternal(CliFrontend.java:1367)

at org.apache.flink.client.cli.CliFrontend.main(CliFrontend.java:1335)

Caused by: java.io.IOException: Cannot run program "python": error=2, No such file or directory

at java.lang.ProcessBuilder.start(ProcessBuilder.java:1048)

at org.apache.flink.client.python.PythonEnvUtils.startPythonProcess(PythonEnvUtils.java:378)

at org.apache.flink.client.python.PythonEnvUtils.launchPy4jPythonClient(PythonEnvUtils.java:492)

at org.apache.flink.client.python.PythonDriver.main(PythonDriver.java:92)

... 14 more

Caused by: java.io.IOException: error=2, No such file or directory

at java.lang.UNIXProcess.forkAndExec(Native Method)

at java.lang.UNIXProcess.<init>(UNIXProcess.java:247)

at java.lang.ProcessImpl.start(ProcessImpl.java:134)

at java.lang.ProcessBuilder.start(ProcessBuilder.java:1029)

... 17 more

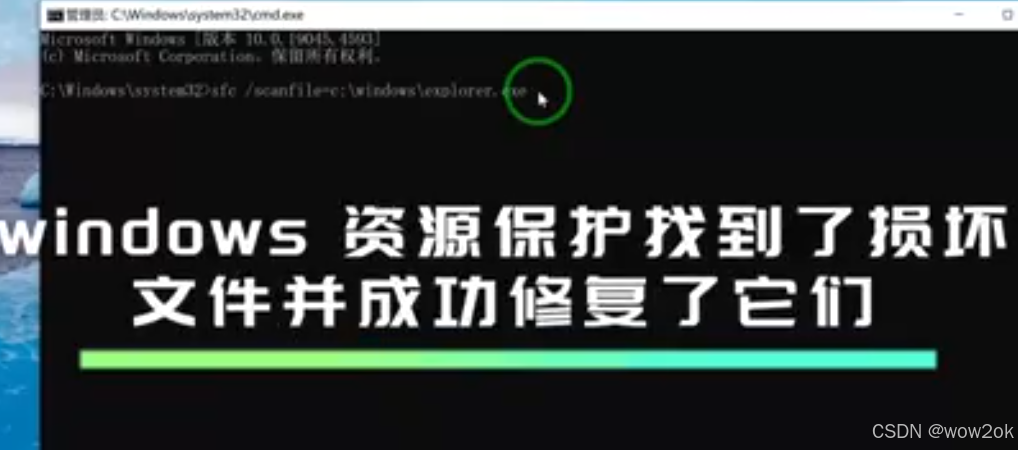

A small trick fixes this: create a symlink named python that points to the existing python3.

!ln -s /usr/bin/python3 /usr/bin/python

Run the command again, and the job is submitted to the Flink cluster.
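If creating a symlink under /usr/bin is not an option, the help output above lists -pyclientexec,--pyClientExecutable, described as the interpreter used to launch the Python process when submitting via "flink run". Going by that description, passing an explicit interpreter path should avoid the lookup of a bare python command, though this post only tested the symlink route. A minimal sketch, with hypothetical helper names:

```python
import shutil
import sys


def pick_client_python(which=shutil.which):
    """Return the first interpreter found on PATH, preferring `python`."""
    for name in ("python", "python3"):
        path = which(name)
        if path:
            return path
    return sys.executable  # fall back to the interpreter running this script


def with_client_python(cmd, interpreter):
    """Insert --pyClientExecutable right after the `run` action in an argument list."""
    idx = cmd.index("run") + 1
    return cmd[:idx] + ["--pyClientExecutable", interpreter] + cmd[idx:]
```

For example, `with_client_python(["./bin/flink", "run", "--python", "examples/table/word_count.py"], pick_client_python())` yields an argument list that names the interpreter explicitly. The `which` parameter exists only so the lookup can be swapped out in tests.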