- This post is a study log from the 365天深度学习训练营 (365-Day Deep Learning Training Camp)

- Original author: K同学啊

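● Difficulty: consolidating the basics

● Language: Python 3, TensorFlow 2

Requirements:

1. Build the VGG-16 network architecture yourself

2. Call the official VGG-16 implementation

Stretch goals (optional):

1. Reach 100% accuracy on the validation set

2. Draw the VGG-16 architecture diagram in PowerPoint (a skill you need when publishing papers)

Exploration (fairly difficult):

1. Make the model lighter without hurting accuracy

○ The VGG16 built here currently has 134,276,932 total params

My environment:

● Operating system: Ubuntu 22.04

● Language environment: Python 3.8.10

● Development environment: Jupyter Notebook

● Deep learning framework: tensorflow-gpu 2.9.0

● GPU: RTX 3090 (24GB) × 1

● Dataset: coffee bean dataset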

I. Preliminaries

- Set up the GPU (skip this step if you are running on the CPU)

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")

- Import the data

from tensorflow import keras

from tensorflow.keras import layers,models

import numpy as np

import matplotlib.pyplot as plt

import os,PIL,pathlib

data_dir = "./T7/data/"

data_dir = pathlib.Path(data_dir)

image_count = len(list(data_dir.glob('*/*.png')))

print("Total number of images:", image_count)

Output:

Total number of images: 1200
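A per-class count is a quick way to check that the dataset is balanced before it is split. The sketch below assumes the same layout as above (one sub-directory per class, with PNG files inside). The helper name `count_images_per_class` is made up for this illustration and is not part of the original code.

```python
import pathlib
from collections import Counter


def count_images_per_class(root, pattern="*/*.png"):
    """Count images per class, using each file's parent directory name as the label."""
    root = pathlib.Path(root)
    return Counter(path.parent.name for path in root.glob(pattern))


# Usage with the directory from this post (assumed to exist on disk):
# for name, n in sorted(count_images_per_class("./T7/data/").items()):
#     print(name, n)
```

If the classes turn out to be very uneven, plain accuracy becomes a less reliable metric and is worth reading with that in mind.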

II. Data preprocessing

- Load the data

Use the image_dataset_from_directory method to load the images on disk into a tf.data.Dataset.

batch_size = 32

img_height = 224

img_width = 224

"""

For a detailed introduction to image_dataset_from_directory(), see: https://mtyjkh.blog.csdn.net/article/details/117018789

"""

train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="training",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Output:

Found 1200 files belonging to 4 classes.

Using 960 files for training.

"""

For a detailed introduction to image_dataset_from_directory(), see: https://mtyjkh.blog.csdn.net/article/details/117018789

"""

val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="validation",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Output:

Found 1200 files belonging to 4 classes.

Using 240 files for validation.
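The split sizes and the number of batches per epoch follow directly from the arguments above. The short sketch below redoes that arithmetic in plain Python. It is only a sanity check, not part of the data pipeline, and the variable names are chosen for this illustration.

```python
import math

total_images = 1200        # "Found 1200 files" in the output above
validation_split = 0.2
batch_size = 32

val_count = int(total_images * validation_split)
train_count = total_images - val_count

# The last batch may be smaller than batch_size, hence the ceiling.
train_steps = math.ceil(train_count / batch_size)
val_steps = math.ceil(val_count / batch_size)

print(train_count, val_count)   # 960 240
print(train_steps, val_steps)   # 30 8
```

The 30 training steps per epoch match the `30/30` progress counter in the training log later in this post.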

We can print the dataset's labels through class_names. The labels correspond to the directory names in alphabetical order.

class_names = train_ds.class_names

print(class_names)

Output:

['Dark', 'Green', 'Light', 'Medium']

- Visualize the data

plt.figure(figsize=(10, 4))  # figure is 10 wide and 4 high
for images, labels in train_ds.take(1):
    for i in range(10):
        ax = plt.subplot(2, 5, i + 1)
        plt.imshow(images[i].numpy().astype("uint8"))
        plt.title(class_names[labels[i]])
        plt.axis("off")

Output: [Figure: a 2 × 5 grid of ten training images, each titled with its class name]

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

Output:

(32, 224, 224, 3)

(32,)

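- Configure the dataset

● shuffle(): shuffles the data. For a detailed introduction to this function, see: https://zhuanlan.zhihu.com/p/42417456

● prefetch(): prefetches data so that loading overlaps with training. My previous two posts explain it in detail.

● cache(): caches the dataset in memory to speed up later epochs.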

AUTOTUNE = tf.data.AUTOTUNE

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)

val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

normalization_layer = layers.Rescaling(1./255)  # replaces the older layers.experimental.preprocessing.Rescaling alias

train_ds = train_ds.map(lambda x, y: (normalization_layer(x), y))

val_ds = val_ds.map(lambda x, y: (normalization_layer(x), y))

image_batch, labels_batch = next(iter(val_ds))

first_image = image_batch[0]

# Inspect the normalized data

print(np.min(first_image), np.max(first_image))

Output:

0.0 1.0
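Rescaling by 1/255 is a plain element-wise multiplication that maps pixel values from [0, 255] into [0, 1]. The NumPy sketch below shows the same operation on a tiny made-up array that stands in for an image. It does not use the Keras layer itself.

```python
import numpy as np

# A made-up 2 x 2 "image" covering the full 0..255 pixel range.
pixels = np.array([[0, 64], [128, 255]], dtype=np.float32)

# Same arithmetic as a rescaling layer with scale = 1/255.
scaled = pixels * (1.0 / 255)

print(scaled.min(), scaled.max())
```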

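III. Building the VGG-16 network

Use either the official model or the custom model: pick one and comment out the other.

Pros and cons of VGG:

● Pros

The VGG architecture is very simple: the whole network uses the same convolution kernel size (3x3) and the same max-pooling size (2x2).

● Cons

1) Training takes a long time and hyperparameter tuning is difficult.

2) The model needs a lot of storage, which makes deployment harder. For example, the VGG-16 weight file is over 500 MB, which makes it a poor fit for embedded systems.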
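The "over 500 MB" figure can be estimated from the parameter count alone. The sketch below uses the 134,276,932 total params reported for the 4-class model in this post and assumes that every weight is stored as a 32-bit float. File-format overhead is ignored.

```python
total_params = 134_276_932   # "Total params" of the 4-class VGG16 in this post
bytes_per_param = 4          # assumption: float32 weights

size_bytes = total_params * bytes_per_param
size_mib = size_bytes / 2**20

print(f"{size_bytes:,} bytes, about {size_mib:.0f} MiB")
```

Under the same assumption, storing the weights in 16-bit floats would halve this estimate.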

- Official model

I will cover calling the official model in later posts. The rest of this section focuses on VGG-16 itself.

# model = tf.keras.applications.VGG16(weights='imagenet')

# model.summary()

- Custom model

from tensorflow.keras import layers, models, Input

from tensorflow.keras.models import Model

from tensorflow.keras.layers import Conv2D, MaxPooling2D, Dense, Flatten, Dropout

def VGG16(nb_classes, input_shape):
    input_tensor = Input(shape=input_shape)

    # 1st block
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv1')(input_tensor)
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block1_pool')(x)

    # 2nd block
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv1')(x)
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block2_pool')(x)

    # 3rd block
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv1')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv2')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block3_pool')(x)

    # 4th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block4_pool')(x)

    # 5th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block5_pool')(x)

    # fully connected layers
    x = Flatten()(x)
    x = Dense(4096, activation='relu', name='fc1')(x)
    x = Dense(4096, activation='relu', name='fc2')(x)
    output_tensor = Dense(nb_classes, activation='softmax', name='predictions')(x)

    model = Model(input_tensor, output_tensor)
    return model

# Keras expects input_shape as (height, width, channels)
model = VGG16(len(class_names), (img_height, img_width, 3))
model.summary()

Output:

Model: "model"
_________________________________________________________________
 Layer (type)                 Output Shape              Param #
=================================================================
 input_1 (InputLayer)         [(None, 224, 224, 3)]     0
 block1_conv1 (Conv2D)        (None, 224, 224, 64)      1792
 block1_conv2 (Conv2D)        (None, 224, 224, 64)      36928
 block1_pool (MaxPooling2D)   (None, 112, 112, 64)      0
 block2_conv1 (Conv2D)        (None, 112, 112, 128)     73856
 block2_conv2 (Conv2D)        (None, 112, 112, 128)     147584
 block2_pool (MaxPooling2D)   (None, 56, 56, 128)       0
 block3_conv1 (Conv2D)        (None, 56, 56, 256)       295168
 block3_conv2 (Conv2D)        (None, 56, 56, 256)       590080
 block3_conv3 (Conv2D)        (None, 56, 56, 256)       590080
 block3_pool (MaxPooling2D)   (None, 28, 28, 256)       0
 block4_conv1 (Conv2D)        (None, 28, 28, 512)       1180160
 block4_conv2 (Conv2D)        (None, 28, 28, 512)       2359808
 block4_conv3 (Conv2D)        (None, 28, 28, 512)       2359808
 block4_pool (MaxPooling2D)   (None, 14, 14, 512)       0
 block5_conv1 (Conv2D)        (None, 14, 14, 512)       2359808
 block5_conv2 (Conv2D)        (None, 14, 14, 512)       2359808
 block5_conv3 (Conv2D)        (None, 14, 14, 512)       2359808
 block5_pool (MaxPooling2D)   (None, 7, 7, 512)         0
 flatten (Flatten)            (None, 25088)             0
 fc1 (Dense)                  (None, 4096)              102764544
 fc2 (Dense)                  (None, 4096)              16781312
 predictions (Dense)          (None, 4)                 16388
=================================================================
Total params: 134,276,932
Trainable params: 134,276,932
Non-trainable params: 0
_________________________________________________________________
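The Param # column can be reproduced by hand. A convolution layer with a k × k kernel has (k · k · c_in + 1) · c_out parameters, and a dense layer has (n_in + 1) · n_out, where the +1 is the bias. The sketch below recomputes the totals from the layer shapes in the summary. The helper names `conv_params` and `dense_params` are made up for this illustration.

```python
def conv_params(kernel, c_in, c_out):
    """Weights plus one bias per output channel of a kernel x kernel convolution."""
    return (kernel * kernel * c_in + 1) * c_out


def dense_params(n_in, n_out):
    """Weights plus one bias per output unit of a fully connected layer."""
    return (n_in + 1) * n_out


# (input channels, output channels) of the 13 convolution layers, in order.
conv_channels = [
    (3, 64), (64, 64),
    (64, 128), (128, 128),
    (128, 256), (256, 256), (256, 256),
    (256, 512), (512, 512), (512, 512),
    (512, 512), (512, 512), (512, 512),
]
# (inputs, outputs) of fc1, fc2 and predictions (4 classes).
dense_sizes = [(7 * 7 * 512, 4096), (4096, 4096), (4096, 4)]

conv_total = sum(conv_params(3, c_in, c_out) for c_in, c_out in conv_channels)
dense_total = sum(dense_params(n_in, n_out) for n_in, n_out in dense_sizes)
total = conv_total + dense_total

print(conv_total, dense_total, total)   # 14714688 119562244 134276932
print(f"dense share: {dense_total / total:.1%}")
```

Roughly 89% of the parameters sit in the three fully connected layers, with fc1 alone holding 102,764,544. This makes the dense head the natural place to look when trying to slim the model down.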

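- Network architecture diagram

For background on convolution, see: https://mtyjkh.blog.csdn.net/article/details/114278995

Structure:

● 13 convolutional layers, named blockX_convX.

● 3 fully connected layers, named fcX and predictions.

● 5 pooling layers, named blockX_pool.

VGG-16 contains 16 layers with weights (13 convolutional layers and 3 fully connected layers), hence the name VGG-16. The pooling layers have no parameters and are not counted.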
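The layer count and the feature-map sizes can be traced with a few lines of arithmetic. The sketch below assumes the layout described above: 3x3 convolutions with padding='same' that keep height and width, and a 2x2 max pooling with stride 2 at the end of each block. The variable names are chosen for this illustration.

```python
size = 224                      # input height and width
convs_per_block = [2, 2, 3, 3, 3]
filters_per_block = [64, 128, 256, 512, 512]

channels = 3
for filters in filters_per_block:
    channels = filters          # 'same' convolutions change only the channel count
    size //= 2                  # 2x2 pooling with stride 2 halves height and width
    print(size, channels)

flatten_units = size * size * channels
weight_layers = sum(convs_per_block) + 3   # 13 conv layers + 3 fully connected layers

print(flatten_units, weight_layers)   # 25088 16
```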

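IV. Compiling

Before training, the model needs a few more settings. These are added in the compile step:

● Loss function (loss): measures how far the model's predictions are from the true labels during training. Training tries to minimize it.

● Optimizer (optimizer): decides how the model is updated based on the data it sees and its loss function.

● Metrics (metrics): used to monitor the training and testing steps. The example below uses accuracy, the fraction of images that are classified correctly.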

# Set the initial learning rate
initial_learning_rate = 1e-4

lr_schedule = tf.keras.optimizers.schedules.ExponentialDecay(
    initial_learning_rate,
    decay_steps=30,    # Note: this counts steps (batches), not epochs
    decay_rate=0.92,   # each decay multiplies lr by decay_rate
    staircase=True)

# Set the optimizer
opt = tf.keras.optimizers.Adam(learning_rate=lr_schedule)  # the original code used learning_rate=initial_learning_rate

model.compile(optimizer=opt,
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=False),
              metrics=['accuracy'])
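With staircase=True, the schedule computes initial_learning_rate · decay_rate^⌊step / decay_steps⌋. Since one epoch here is 30 steps and decay_steps is also 30, the learning rate drops once per epoch. The plain-Python sketch below mirrors that formula for illustration. The function `staircase_lr` is a stand-in written for this post, not a TensorFlow API.

```python
def staircase_lr(step, initial_lr=1e-4, decay_steps=30, decay_rate=0.92):
    """Learning rate at a given optimizer step under staircase exponential decay."""
    return initial_lr * decay_rate ** (step // decay_steps)


steps_per_epoch = 30   # 960 training images / batch size 32
for epoch in (1, 2, 10, 20):
    first_step = (epoch - 1) * steps_per_epoch
    print(f"epoch {epoch:2d}: lr = {staircase_lr(first_step):.3e}")
```

By the last of the 20 epochs the learning rate has fallen to about a fifth of its initial value.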

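Notes on SparseCategoricalCrossentropy:

The from_logits parameter:

● Boolean, default False.

● When True, the function assumes the predictions are raw logits that have not passed through an activation function. If the model's last layer does not use softmax (that is, it returns logits), set from_logits to True.

● When False, the function assumes the predictions are already a probability distribution produced by softmax.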
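The two settings describe the same loss computed from different inputs, so the flag has to match the model's last layer. The sketch below re-implements sparse categorical cross-entropy for a single sample in plain Python to show this. The logits are made-up values and the function names are chosen for this illustration, so this is not the Keras implementation.

```python
import math


def softmax(logits):
    m = max(logits)                       # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sparse_ce_from_probs(probs, label):
    """Loss when predictions are probabilities (the from_logits=False case)."""
    return -math.log(probs[label])


def sparse_ce_from_logits(logits, label):
    """Loss when predictions are raw logits (the from_logits=True case)."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


logits = [2.0, 0.5, -1.0, 0.1]   # made-up scores for the 4 classes
label = 0

loss_probs = sparse_ce_from_probs(softmax(logits), label)
loss_logits = sparse_ce_from_logits(logits, label)
# Mismatch: treating probabilities as logits applies softmax a second time.
loss_mismatch = sparse_ce_from_logits(softmax(logits), label)

print(loss_probs, loss_logits, loss_mismatch)
print(math.log(4))   # loss of a uniform guess over 4 classes
```

The first two values agree, while the mismatched call gives a different and misleading loss. The last line prints ln 4 ≈ 1.386, the loss of a uniform guess over four classes, which is close to the loss reported for the first epoch in the training log.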

V. Training the model

Note: as networks grow more complex they demand more compute. Training on a CPU takes a long time, so use a GPU whenever possible.

epochs = 20

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=epochs
)

Output:

Epoch 1/20

30/30 [==============================] - 9s 140ms/step - loss: 1.3883 - accuracy: 0.2531 - val_loss: 1.3882 - val_accuracy: 0.2125

Epoch 2/20

30/30 [==============================] - 4s 120ms/step - loss: 1.1535 - accuracy: 0.3802 - val_loss: 0.9791 - val_accuracy: 0.5667

Epoch 3/20

30/30 [==============================] - 4s 120ms/step - loss: 0.7472 - accuracy: 0.5979 - val_loss: 0.6218 - val_accuracy: 0.6792

Epoch 4/20

30/30 [==============================] - 4s 121ms/step - loss: 0.7028 - accuracy: 0.6052 - val_loss: 0.7372 - val_accuracy: 0.5292

Epoch 5/20

30/30 [==============================] - 4s 120ms/step - loss: 0.6016 - accuracy: 0.6625 - val_loss: 0.5931 - val_accuracy: 0.6042

Epoch 6/20

30/30 [==============================] - 4s 120ms/step - loss: 0.4775 - accuracy: 0.7375 - val_loss: 0.4818 - val_accuracy: 0.7333

Epoch 7/20

30/30 [==============================] - 4s 120ms/step - loss: 0.4483 - accuracy: 0.7458 - val_loss: 0.4824 - val_accuracy: 0.6417

Epoch 8/20

30/30 [==============================] - 4s 120ms/step - loss: 0.4527 - accuracy: 0.7594 - val_loss: 0.4967 - val_accuracy: 0.7583

Epoch 9/20

30/30 [==============================] - 4s 121ms/step - loss: 0.3483 - accuracy: 0.8240 - val_loss: 0.3754 - val_accuracy: 0.8458

Epoch 10/20

30/30 [==============================] - 4s 121ms/step - loss: 0.2360 - accuracy: 0.8990 - val_loss: 0.3246 - val_accuracy: 0.8542

Epoch 11/20

30/30 [==============================] - 4s 121ms/step - loss: 0.1915 - accuracy: 0.9365 - val_loss: 0.3291 - val_accuracy: 0.9000

Epoch 12/20

30/30 [==============================] - 4s 121ms/step - loss: 0.1395 - accuracy: 0.9438 - val_loss: 0.0954 - val_accuracy: 0.9750

Epoch 13/20

30/30 [==============================] - 4s 121ms/step - loss: 0.0680 - accuracy: 0.9792 - val_loss: 0.0786 - val_accuracy: 0.9750

Epoch 14/20

30/30 [==============================] - 4s 121ms/step - loss: 0.0350 - accuracy: 0.9844 - val_loss: 0.0948 - val_accuracy: 0.9750

Epoch 15/20

30/30 [==============================] - 4s 121ms/step - loss: 0.0271 - accuracy: 0.9917 - val_loss: 0.0724 - val_accuracy: 0.9833

Epoch 16/20

30/30 [==============================] - 4s 121ms/step - loss: 0.0232 - accuracy: 0.9896 - val_loss: 0.0800 - val_accuracy: 0.9750

Epoch 17/20

30/30 [==============================] - 4s 121ms/step - loss: 0.0180 - accuracy: 0.9937 - val_loss: 0.1339 - val_accuracy: 0.9667

Epoch 18/20

30/30 [==============================] - 4s 121ms/step - loss: 0.0174 - accuracy: 0.9958 - val_loss: 0.0783 - val_accuracy: 0.9750

Epoch 19/20

30/30 [==============================] - 4s 121ms/step - loss: 0.0235 - accuracy: 0.9927 - val_loss: 0.0816 - val_accuracy: 0.9708

Epoch 20/20

30/30 [==============================] - 4s 121ms/step - loss: 0.0310 - accuracy: 0.9875 - val_loss: 0.0688 - val_accuracy: 0.9833

VI. Visualizing the results

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

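Output: [Figure: training and validation accuracy (left) and training and validation loss (right) over the 20 epochs]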

VII. Supplementary notes on convolution

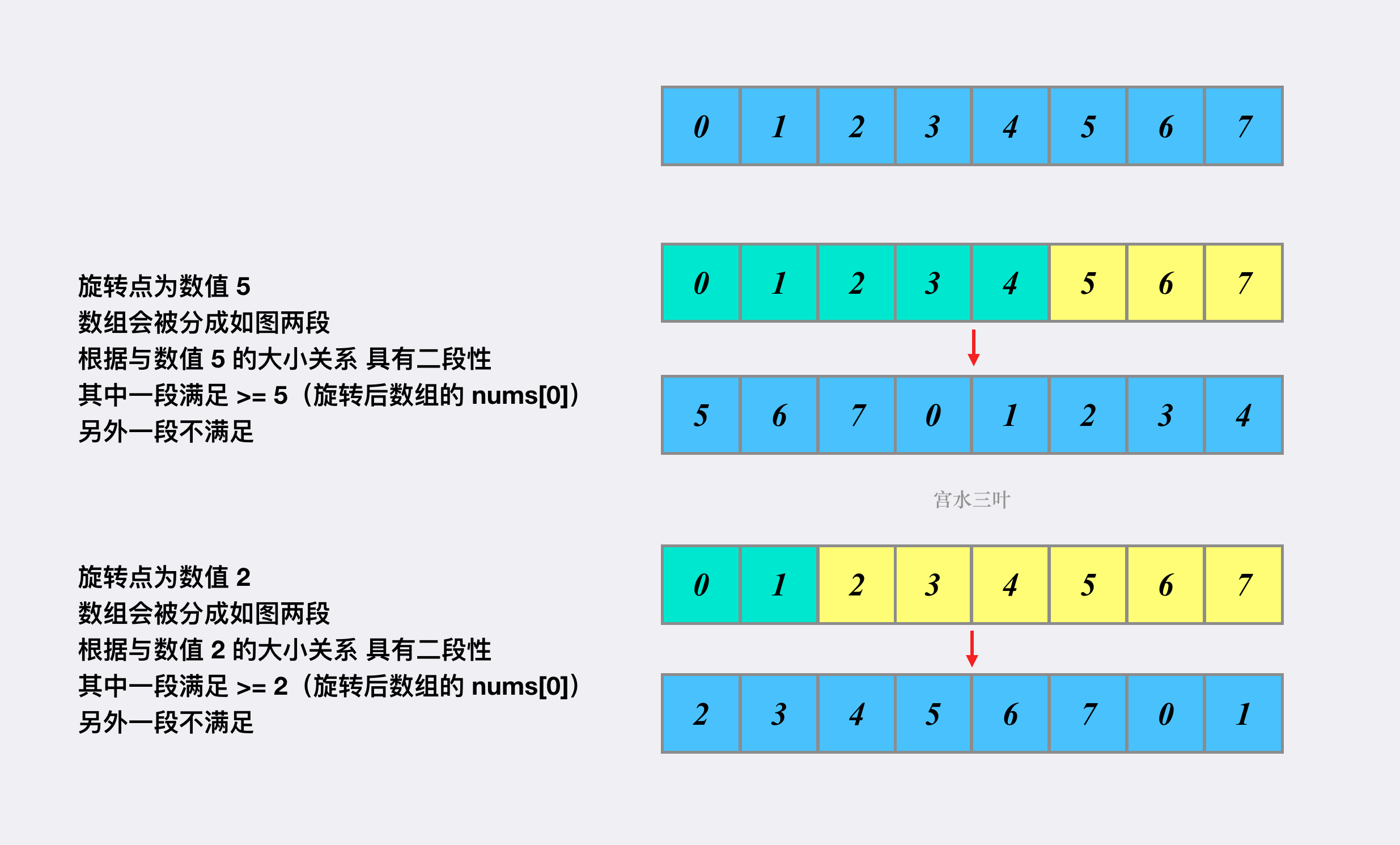

1. Computing a convolution

Take a 4 × 4 input matrix I and a 3 × 3 convolution kernel K:

With a stride of 1, the output size is (4 − 3 + 1) × (4 − 3 + 1) = 2 × 2.
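To make the 4 × 4 by 3 × 3 case concrete, the sketch below slides a kernel over an input with plain Python loops and no padding. The matrix values are made up for this illustration and `conv2d_valid` is a hypothetical helper name. As in deep-learning frameworks, the kernel is not flipped.

```python
def conv2d_valid(image, kernel, stride=1):
    """Naive 2-D convolution (cross-correlation) of square inputs without padding."""
    k = len(kernel)
    out_size = (len(image) - k) // stride + 1
    output = []
    for r in range(out_size):
        row = []
        for c in range(out_size):
            acc = 0
            for i in range(k):
                for j in range(k):
                    acc += image[r * stride + i][c * stride + j] * kernel[i][j]
            row.append(acc)
        output.append(row)
    return output


I = [[1, 2, 3, 4],
     [5, 6, 7, 8],
     [9, 10, 11, 12],
     [13, 14, 15, 16]]          # made-up 4 x 4 input
K = [[1, 0, -1],
     [1, 0, -1],
     [1, 0, -1]]                # made-up 3 x 3 kernel

out = conv2d_valid(I, K)
print(out)   # [[-6, -6], [-6, -6]]
```

The result is a 2 × 2 matrix, as the size calculation above predicts.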

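General formula:

Input image matrix I: w × w

Convolution kernel K: k × k

Stride S: s

Padding: p

The output image size is o × o, where o = ⌊(w − k + 2p) / s⌋ + 1

[Figure: convolution with stride 2, a 3 × 3 kernel, and p = 0]

When the convolution is called with padding='same', p is adjusted automatically so that, with a stride of 1, o = w. In other words, the output keeps the input's height and width. For example, a 28 × 28 × 1 input gives a 28 × 28 × 1 output when a single filter is used.

[Figure: convolution with stride 1, a 3 × 3 kernel, and padding='same']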

2. Examples:

For a 7 × 7 input and a 3 × 3 kernel with no padding and a stride of 1, o = (7 − 3)/1 + 1 = 5, so the output size is 5 × 5.

For a 7 × 7 input and a 3 × 3 kernel with no padding and a stride of 2, o = (7 − 3)/2 + 1 = 3, so the output size is 3 × 3.
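The examples above are instances of the standard output-size relation o = ⌊(w − k + 2p) / s⌋ + 1. The sketch below wraps it in a small function and checks the cases from this section. The names `conv_output_size` and `same_output_size` are made up for this illustration.

```python
import math


def conv_output_size(w, k, s=1, p=0):
    """Output width for input width w, kernel k, stride s and padding p per side."""
    return (w - k + 2 * p) // s + 1


def same_output_size(w, s=1):
    """Output width under padding='same': the input width divided by the stride, rounded up."""
    return math.ceil(w / s)


print(conv_output_size(4, 3))          # 2
print(conv_output_size(7, 3, s=1))     # 5
print(conv_output_size(7, 3, s=2))     # 3
print(conv_output_size(28, 3, p=1))    # 28, one pixel of padding keeps the size
print(same_output_size(224))           # 224
```

For a 3 × 3 kernel with stride 1, a padding of one pixel per side is enough to keep the size, which is why the 224 × 224 feature maps in VGG-16 only shrink at the pooling layers.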