前提条件

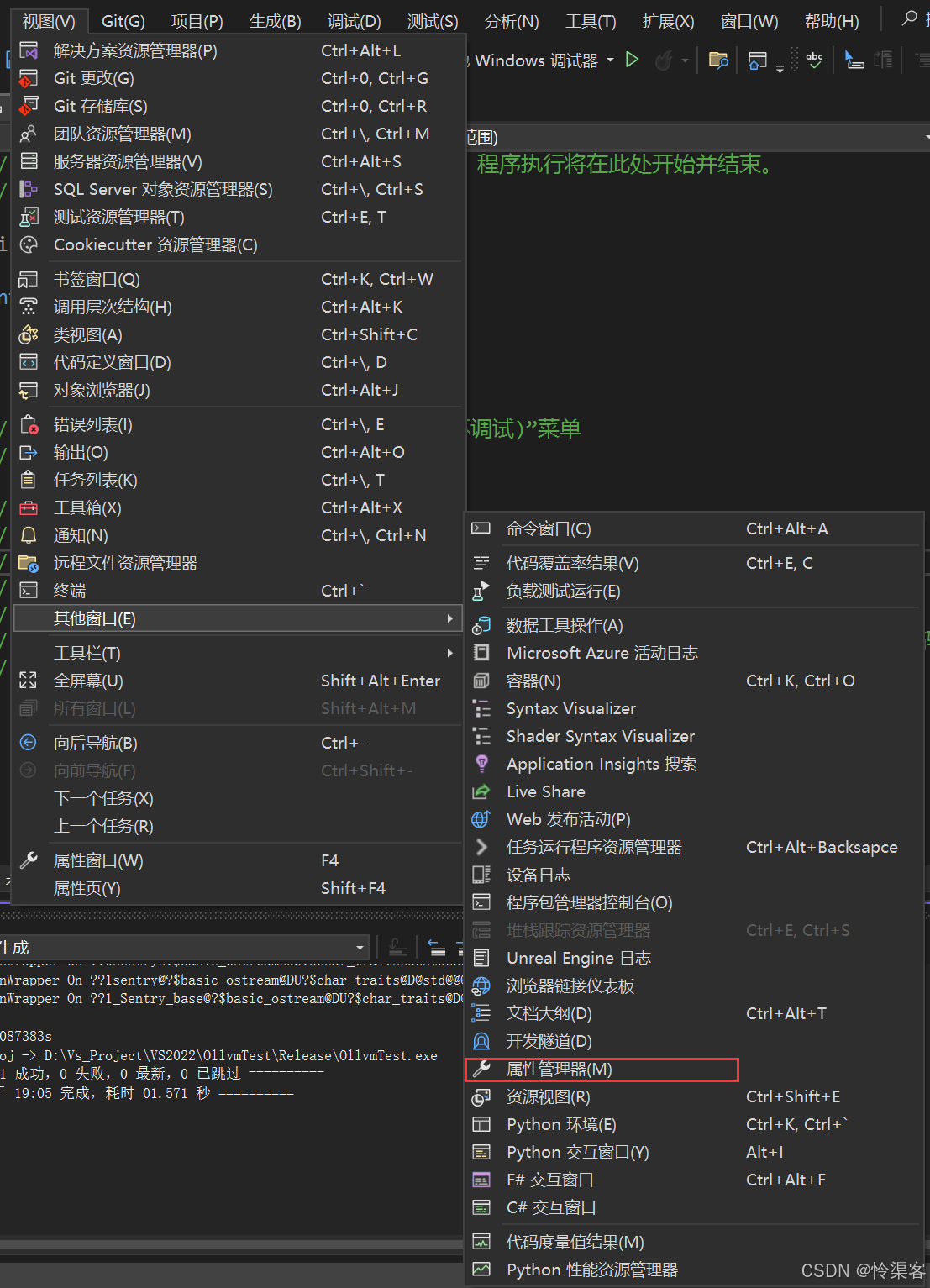

根据不同的操作系统,安装好显卡驱动,并能正常识别出来显卡,比如如下截图:
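The same check can be done from the command line. The following is a minimal sketch, assuming the driver ships the `nvidia-smi` tool; the `NVIDIA_SMI` variable and the function name are hypothetical and exist only so the check can be pointed at a different binary.

```shell
# Sketch: confirm the driver is installed and can see at least one GPU.
# NVIDIA_SMI is a hypothetical override; by default the real nvidia-smi is used.
gpu_driver_ready() {
    smi="${NVIDIA_SMI:-nvidia-smi}"
    if ! command -v "$smi" >/dev/null 2>&1; then
        echo "nvidia-smi not found"
        return 1
    fi
    # "nvidia-smi -L" lists the GPUs that the driver can see.
    if ! "$smi" -L >/dev/null 2>&1; then
        echo "driver not responding"
        return 1
    fi
    echo "GPU driver OK"
}

gpu_driver_ready || echo "install or repair the GPU driver before continuing"
```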

GPU container creation flow

containerd --> containerd-shim --> nvidia-container-runtime --> nvidia-container-runtime-hook --> libnvidia-container --> runc --> container-process

GPU driver installation
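The commands in this section pair each CUDA version with a specific driver installer. A minimal sketch of that mapping as a shell helper follows; the function name `driver_for_cuda` is hypothetical, and the file names are the ones used in this guide.

```shell
# Sketch: map a CUDA version to the driver installer used in this guide.
driver_for_cuda() {
    case "$1" in
        10.1) echo "NVIDIA-Linux-x86_64-430.50.run" ;;
        10.2) echo "NVIDIA-Linux-x86_64-440.100.run" ;;
        11)   echo "NVIDIA-Linux-x86_64-450.66.run" ;;
        11.4) echo "NVIDIA-Linux-x86_64-470.57.02.run" ;;
        *)    echo "unsupported CUDA version: $1" >&2; return 1 ;;
    esac
}

driver_for_cuda 11.4
```

The printed file name can then be appended to the download URL prefix used by the `wget` commands in this section.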

# Ubuntu

apt-get update

apt-get install gcc make

## cuda10.1

wget -c https://ops-software-binary-1255440668.cos.ap-chengdu.myqcloud.com/nvidia/NVIDIA-Linux-x86_64-430.50.run

bash NVIDIA-Linux-x86_64-430.50.run

## cuda10.2

wget -c https://ops-software-binary-1255440668.cos.ap-chengdu.myqcloud.com/nvidia/NVIDIA-Linux-x86_64-440.100.run

bash NVIDIA-Linux-x86_64-440.100.run

## cuda11

wget -c https://ops-software-binary-1255440668.cos.ap-chengdu.myqcloud.com/nvidia/NVIDIA-Linux-x86_64-450.66.run

bash NVIDIA-Linux-x86_64-450.66.run

## cuda11.4

wget -c https://ops-software-binary-1255440668.cos.ap-chengdu.myqcloud.com/nvidia/NVIDIA-Linux-x86_64-470.57.02.run

bash NVIDIA-Linux-x86_64-470.57.02.run

Install the NVIDIA runtime

https://nvidia.github.io/nvidia-container-runtime/

# Ubuntu online installation

curl -s -L https://nvidia.github.io/nvidia-docker/gpgkey | sudo apt-key add -

cat > /etc/apt/sources.list.d/nvidia-docker.list <<'EOF'

deb https://nvidia.github.io/libnvidia-container/ubuntu16.04/$(ARCH) /

deb https://nvidia.github.io/nvidia-container-runtime/ubuntu16.04/$(ARCH) /

deb https://nvidia.github.io/nvidia-docker/ubuntu16.04/$(ARCH) /

EOF

apt-get update

apt-get install nvidia-container-runtime
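After the package is installed (on either distribution), the binaries that take part in the container creation flow should be on `PATH`. The sketch below reports any that are missing. The function name is hypothetical, and the binary names are assumptions based on common package layouts (`nvidia-container-cli` is the command-line tool that ships with libnvidia-container); they may differ between package versions.

```shell
# Sketch: print every name from the argument list that is not on PATH.
missing_binaries() {
    for bin in "$@"; do
        command -v "$bin" >/dev/null 2>&1 || echo "missing: $bin"
    done
}

# Assumed binary names; adjust them to what your packages actually install.
missing_binaries containerd runc nvidia-container-runtime nvidia-container-cli
```

No output means every listed binary was found.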

# CentOS online installation

distribution=$(. /etc/os-release;echo $ID$VERSION_ID)

curl -s -L https://nvidia.github.io/nvidia-docker/$distribution/nvidia-docker.repo | sudo tee /etc/yum.repos.d/nvidia-docker.repo

DIST=$(sed -n 's/releasever=//p' /etc/yum.conf)

DIST=${DIST:-$(. /etc/os-release; echo $VERSION_ID)}

sudo rpm -e gpg-pubkey-f796ecb0

sudo gpg --homedir /var/lib/yum/repos/$(uname -m)/$DIST/nvidia-docker/gpgdir --delete-key f796ecb0

sudo yum makecache

yum -y install nvidia-container-runtime

Configure docker/containerd

# Docker configuration

cat /etc/docker/daemon.json

{

"registry-mirrors": [

"https://wlzfs4t4.mirror.aliyuncs.com"

],

"max-concurrent-downloads": 10,

"log-driver": "json-file",

"log-level": "warn",

"log-opts": {

"max-size": "10m",

"max-file": "3"

},

"data-root": "/data/var/lib/docker",

"bip": "169.254.31.1/24",

"default-runtime": "nvidia",

"runtimes": {

"nvidia": {

"path": "/usr/bin/nvidia-container-runtime",

"runtimeArgs": []

}

}

}

systemctl restart docker
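Before or after the restart, it can help to confirm that the file really sets the default runtime. The following is a line-oriented sketch, not a JSON parser: it assumes the `"default-runtime"` key and its value sit on one line, as in the file above. The function name is hypothetical.

```shell
# Sketch: print the value of "default-runtime" from a daemon.json-style file.
# Assumes the key and its value are on the same line.
daemon_json_default_runtime() {
    sed -n 's/.*"default-runtime"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1"
}

if [ -f /etc/docker/daemon.json ]; then
    daemon_json_default_runtime /etc/docker/daemon.json
fi
```

Once Docker is running again, `docker info --format '{{.DefaultRuntime}}'` should report the same value from the daemon's side.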

# containerd configuration

cat /etc/containerd/config.toml

# Adjust everything else to your own needs; only the GPU-related settings are shown here.

[plugins]

[plugins."io.containerd.grpc.v1.cri"]

[plugins."io.containerd.grpc.v1.cri".containerd]

#------------------- begin modification -------------------

default_runtime_name = "nvidia"

#------------------- end modification -------------------

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes]

#------------------- begin addition -------------------

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.nvidia]

privileged_without_host_devices = false

runtime_engine = ""

runtime_root = ""

runtime_type = "io.containerd.runc.v2"

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.nvidia.options]

BinaryName = "/usr/bin/nvidia-container-runtime"

#------------------- end addition -------------------

systemctl restart containerd.service

Option 1: use the official NVIDIA plugin

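[Allocates by number of GPUs; each GPU is used exclusively by one container]

Example of requesting GPU resources in an application YAML:

resources:
  limits:
    nvidia.com/gpu: '1'
  requests:
    nvidia.com/gpu: '1'

Here, 1 means that one GPU card is requested.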
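To see the fragment in context, the sketch below prints a complete Pod manifest that requests a given number of GPUs. The function name, the Pod name, and the container image tag are assumptions chosen for illustration; any image that contains `nvidia-smi` would do. For extended resources such as `nvidia.com/gpu`, setting only `limits` is enough, because the request defaults to the limit.

```shell
# Sketch: print a Pod manifest that requests N whole GPUs.
# The image tag below is an assumption; substitute one available in your registry.
gpu_pod_manifest() {
    name="$1"
    gpus="${2:-1}"
    cat <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: ${name}
spec:
  restartPolicy: Never
  containers:
    - name: cuda
      image: nvidia/cuda:11.4.3-base-ubuntu20.04
      command: ["nvidia-smi"]
      resources:
        limits:
          nvidia.com/gpu: '${gpus}'
EOF
}

gpu_pod_manifest gpu-smoke-test 1
```

The output can be piped to `kubectl apply -f -` once the device plugin described in this section is running.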

Enabling GPU support in Kubernetes

# cat nvidia-device-plugin.yaml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: nvidia-device-plugin-daemonset
  namespace: kube-system
spec:
  selector:
    matchLabels:
      name: nvidia-device-plugin-ds
  updateStrategy:
    type: RollingUpdate
  template:
    metadata:
      labels:
        name: nvidia-device-plugin-ds
    spec:
      tolerations:
      - key: nvidia.com/gpu
        operator: Exists
        effect: NoSchedule
      # Mark this pod as a critical add-on; when enabled, the critical add-on
      # scheduler reserves resources for critical add-on pods so that they can
      # be rescheduled after a failure.
      # See https://kubernetes.io/docs/tasks/administer-cluster/guaranteed-scheduling-critical-addon-pods/
      priorityClassName: "system-node-critical"
      containers:
      - image: ycloudhub.com/middleware/nvidia-gpu-device-plugin:v0.12.3
        name: nvidia-device-plugin-ctr
        env:
          - name: FAIL_ON_INIT_ERROR
            value: "false"
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            drop: ["ALL"]
        volumeMounts:
        - name: device-plugin
          mountPath: /var/lib/kubelet/device-plugins
      volumes:
      - name: device-plugin
        hostPath:
          path: /var/lib/kubelet/device-plugins

# Apply the YAML file and verify

kubectl apply -f nvidia-device-plugin.yaml

kubectl get po -n kube-system | grep nvidia

kubectl describe nodes ycloud

......

Capacity:

cpu: 32

ephemeral-storage: 458291312Ki

hugepages-1Gi: 0

hugepages-2Mi: 0

memory: 131661096Ki

nvidia.com/gpu: 2

pods: 110

Allocatable:

cpu: 32

ephemeral-storage: 422361272440

hugepages-1Gi: 0

hugepages-2Mi: 0

memory: 131558696Ki

nvidia.com/gpu: 2

pods: 110

......

Option 2: use a third-party plugin

[Allocates by GPU memory size; GPUs are shared between containers]
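Because allocation is by memory, the number of Pods that can share one card follows from simple integer division. The sketch below illustrates that arithmetic only; the function name and the 16 GiB card size are assumptions, and the calculation says nothing about whether the workloads actually stay within the memory they request.

```shell
# Sketch: how many Pods with the same memory request fit on one GPU.
# Both arguments are whole numbers in the same unit (for example GiB).
pods_per_gpu() {
    gpu_mem="$1"
    request="$2"
    if [ "$request" -le 0 ]; then
        echo "request must be positive" >&2
        return 1
    fi
    echo $(( gpu_mem / request ))
}

# A hypothetical 16 GiB card with 3 GiB requests.
pods_per_gpu 16 3   # prints 5
```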

# Official Alibaba Cloud repository: https://github.com/AliyunContainerService/gpushare-device-plugin/

resources:
  limits:
    aliyun.com/gpu-mem: '3'
  requests:
    aliyun.com/gpu-mem: '3'

# Here, 3 is the amount of GPU memory requested, in GiB.

Install the gpushare-scheduler-extender plugin

Reference: https://github.com/AliyunContainerService/gpushare-scheduler-extender/blob/master/docs/install.md

1. Modify the kube-scheduler configuration

# Create /etc/kubernetes/scheduler-policy-config.json

{

"kind": "Policy",

"apiVersion": "v1",

"extenders": [

{

"urlPrefix": "http://127.0.0.1:32766/gpushare-scheduler",

"filterVerb": "filter",

"bindVerb": "bind",

"enableHttps": false,

"nodeCacheCapable": true,

"managedResources": [

{

"name": "aliyun.com/gpu-mem",

"ignoredByScheduler": false

}

],

"ignorable": false

}

]

}

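# Edit /etc/systemd/system/kube-scheduler.service and add the --policy-config-file flag (kube-scheduler removed this flag in Kubernetes v1.23, so this step applies to older releases)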

cat /etc/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/usr/local/bin/kube-scheduler \

--address=127.0.0.1 \

--master=http://127.0.0.1:8080 \

--leader-elect=true \

--v=2 \

--policy-config-file=/etc/kubernetes/scheduler-policy-config.json

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

# Restart the service

systemctl daemon-reload

systemctl restart kube-scheduler.service
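If the scheduler does not pick up the extender, a first thing to check is whether the unit file really carries the flag. The following is a minimal sketch that prints the path passed to `--policy-config-file`; the function name is hypothetical, and the unit file path is the one used in this guide.

```shell
# Sketch: print the path given to --policy-config-file in a systemd unit file.
# Prints nothing when the flag is absent.
scheduler_policy_file() {
    sed -n 's/.*--policy-config-file=\([^[:space:]]*\).*/\1/p' "$1"
}

unit=/etc/systemd/system/kube-scheduler.service
if [ -f "$unit" ]; then
    scheduler_policy_file "$unit"
fi
```

An empty result means the flag was not added, so the scheduler never reads the policy file.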

2. Deploy gpushare-schd-extender

curl -O https://raw.githubusercontent.com/AliyunContainerService/gpushare-scheduler-extender/master/config/gpushare-schd-extender.yaml

kubectl apply -f gpushare-schd-extender.yaml

3. Deploy the device plugin

# Add the label "gpushare=true" to the node

kubectl label node <target_node> gpushare=true

For example:

kubectl label node mynode gpushare=true

# Deploy the device plugin

wget https://raw.githubusercontent.com/AliyunContainerService/gpushare-device-plugin/master/device-plugin-rbac.yaml

kubectl apply -f device-plugin-rbac.yaml

wget https://raw.githubusercontent.com/AliyunContainerService/gpushare-device-plugin/master/device-plugin-ds.yaml

kubectl apply -f device-plugin-ds.yaml

4. Install the kubectl-inspect-gpushare plugin to view GPU usage

cd /usr/bin/

wget https://github.com/AliyunContainerService/gpushare-device-plugin/releases/download/v0.3.0/kubectl-inspect-gpushare

chmod u+x /usr/bin/kubectl-inspect-gpushare
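kubectl discovers plugins by file name: an executable on `PATH` named `kubectl-<a>-<b>` is invoked as `kubectl <a> <b>`. The sketch below only illustrates that naming rule for the binary installed above; the function name is hypothetical.

```shell
# Sketch: derive the kubectl invocation from a plugin file name.
# Dashes in the file name separate the words of the subcommand.
plugin_to_subcommand() {
    echo "$1" | sed 's/-/ /g'
}

plugin_to_subcommand kubectl-inspect-gpushare   # prints: kubectl inspect gpushare
```

Running `kubectl inspect gpushare` on a machine with cluster access then invokes the plugin.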