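This article comes from the WeChat public account “程序员学长” and is shared for academic purposes only; it will be removed on request in case of infringement.

Original article: “快速学会一个算法模型,LSTM” (Learn an Algorithm Model Quickly: LSTM)

Today's algorithm model is LSTM.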

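LSTM (Long Short-Term Memory) is a special type of recurrent neural network (RNN) designed to address the long-term dependency problem that traditional RNNs face when processing sequence data.

The key feature of an LSTM is its ability to maintain a cell state, which acts as a memory unit that can carry information across long sequences. This lets an LSTM selectively remember or forget information over time, which makes it well suited to tasks where context and long-range dependencies matter.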

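Core components of an LSTM

An LSTM cell consists of the following main parts: the cell state, the forget gate, the input gate, and the output gate.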
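To make the role of each part concrete, here is a minimal NumPy sketch of a single LSTM time step in its standard textbook form. The function name `lstm_step`, the stacked weight layout (forget, input, candidate, output), and the random toy weights are assumptions made for illustration; this is not how Keras organizes its weights internally.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM time step (illustrative sketch).

    x_t: (n_features,), h_prev and c_prev: (n_hidden,)
    W: (4 * n_hidden, n_features), U: (4 * n_hidden, n_hidden), b: (4 * n_hidden,)
    Assumed row order of the stacked weights: forget, input, candidate, output.
    """
    n_hidden = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b
    f = sigmoid(z[0:n_hidden])                  # forget gate: how much old memory to keep
    i = sigmoid(z[n_hidden:2 * n_hidden])       # input gate: how much new information to write
    g = np.tanh(z[2 * n_hidden:3 * n_hidden])   # candidate values for the cell state
    o = sigmoid(z[3 * n_hidden:4 * n_hidden])   # output gate: how much memory to expose
    c_t = f * c_prev + i * g                    # updated cell state (long-term memory)
    h_t = o * np.tanh(c_t)                      # updated hidden state (output of the cell)
    return h_t, c_t

# Run the cell over a short random sequence with toy weights
rng = np.random.default_rng(0)
n_features, n_hidden, n_steps = 3, 4, 5
W = rng.normal(scale=0.1, size=(4 * n_hidden, n_features))
U = rng.normal(scale=0.1, size=(4 * n_hidden, n_hidden))
b = np.zeros(4 * n_hidden)
h = np.zeros(n_hidden)
c = np.zeros(n_hidden)
for x_t in rng.normal(size=(n_steps, n_features)):
    h, c = lstm_step(x_t, h, c, W, U, b)
print(h.shape, c.shape)
```

The cell state `c_t` is only ever scaled by the forget gate and incremented by the gated candidate, which is what allows information to survive across many time steps.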

Case study

Loading the dataset

    import numpy as np
    import pandas as pd
    import matplotlib.pyplot as plt
    from time import time

    from sklearn.metrics import mean_squared_error as mse
    # Import everything from tensorflow.keras so that models, layers and
    # callbacks all come from the same Keras implementation
    from tensorflow.keras.models import Sequential, load_model
    from tensorflow.keras.layers import LSTM, Dense, InputLayer
    from tensorflow.keras.callbacks import ModelCheckpoint, EarlyStopping
    from tensorflow.keras.losses import MeanSquaredError
    from tensorflow.keras.metrics import RootMeanSquaredError
    from tensorflow.keras.optimizers import Adam

    # Load the data and index it by timestamp
    path_data = r'filter_pt_data.csv'
    df = pd.read_csv(path_data)
    df.dropna(inplace=True)
    df['dt'] = pd.to_datetime(df['dt'])
    df.set_index('dt', inplace=True)

    # Encode the position within the year as a sine/cosine pair
    df['Seconds'] = df.index.map(pd.Timestamp.timestamp)
    year_secs = 60 * 60 * 24 * 365  # Number of seconds in a year
    df['year_signal_sin'] = np.sin(df['Seconds'] * (2 * np.pi / year_secs))
    df['year_signal_cos'] = np.cos(df['Seconds'] * (2 * np.pi / year_secs))
    df.drop(columns=['Seconds'], inplace=True)

Preparing the data sequences

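LSTM models are designed to process sequences of data points, so the data has to be converted into that format.

The approach is to recast the forecasting problem as a supervised learning problem. In this setup, the input (X) contains the previous n data points, and the output (y) is the target value at the following time step.

To illustrate the idea, suppose we are working with a dataset that has three features ("a", "b", and "c") and we want to predict feature "a". Each input sequence then contains three time steps, meaning we look at the feature values at three consecutive points in time.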
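The windowing described above can be sketched on a toy array. The numbers and the names `X_toy` and `y_toy` are made up for illustration; the columns stand for features "a", "b", and "c", with "a" in column 0 as the prediction target.

```python
import numpy as np

# Toy data: 6 time steps, 3 features in column order a, b, c
data = np.array([
    [1, 10, 100],
    [2, 20, 200],
    [3, 30, 300],
    [4, 40, 400],
    [5, 50, 500],
    [6, 60, 600],
])
n_steps = 3

# Each input is a window of n_steps consecutive rows with all features
X_toy = np.stack([data[i:i + n_steps] for i in range(len(data) - n_steps)])
# Each label is feature "a" at the time step right after its window
y_toy = data[n_steps:, 0]

print(X_toy.shape)   # (3, 3, 3): samples, time steps, features
print(X_toy[0])      # rows 0..2 of the toy data
print(y_toy)         # [4 5 6]
```

The first window covers rows 0 to 2 and is paired with the value of "a" in row 3, the second covers rows 1 to 3 and is paired with row 4, and so on.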

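    def create_sequences_unistep(data, n_steps):
        data_t = data.to_numpy()
        X = []
        y = []
        for i in range(len(data_t) - n_steps):
            # Input: n_steps consecutive rows with all features
            row = [a for a in data_t[i:i + n_steps]]
            X.append(row)
            # Label: first column of the row right after the window
            label = data_t[i + n_steps][0]
            y.append(label)
        return np.array(X), np.array(y)

Creating the model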

    def train_model(X, y, X_val, y_val, n_steps, n_preds=1):
        n_features = X.shape[2]

        # Create LSTM model: two stacked LSTM layers and a linear output layer
        model = Sequential()
        model.add(InputLayer((n_steps, n_features)))
        model.add(LSTM(4, return_sequences=True))
        model.add(LSTM(5))
        model.add(Dense(n_preds, activation='linear'))

        # Compile model
        model.compile(loss=MeanSquaredError(),
                      optimizer=Adam(learning_rate=0.0001),
                      metrics=[RootMeanSquaredError()])
        model.summary()

        # Save the model with the lowest validation loss
        checkpoint_filepath = 'cps/best_model.h5'
        model_checkpoint_callback = ModelCheckpoint(
            filepath=checkpoint_filepath,
            monitor='val_loss',  # Monitor validation loss
            mode='min',  # Save the model with the minimum validation loss
            save_best_only=True)

        # Stop training if validation loss does not improve for 50 epochs
        early_stopping_callback = EarlyStopping(
            monitor='val_loss',
            patience=50,
            mode='min',
            verbose=1,
            restore_best_weights=True)  # Restore the best weights when training stops

        # Fit model (at most 500 epochs)
        t_start = time()
        history = model.fit(X, y,
                            verbose=2,
                            epochs=500,
                            validation_data=(X_val, y_val),
                            callbacks=[model_checkpoint_callback, early_stopping_callback])
        t_end = time()
        print('Time to train model: {} s'.format(round(t_end - t_start, 2)))

        # Plot loss evolution
        plt.figure()
        plt.plot(history.history['loss'], label='loss')
        plt.plot(history.history['val_loss'], label='val_loss')
        plt.title('Training and Validation Loss')
        plt.xlabel('Epochs')
        plt.ylabel('Loss')
        plt.legend()
        plt.show()

        # Load the best model from the checkpoint file
        del model
        model = load_model(checkpoint_filepath)
        return model

Training the model

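First, we generate the sequences with the function implemented earlier. We allocate 500 values for training and 50 values for validation, and set the "n_steps" parameter to 5.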

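    def preprocess_input(X, mean, std):
        # Work on a float copy so the caller's array (and any overlapping
        # slices of it) are not modified in place
        X = X.astype(float)
        # Only the first feature (the target) is standardized
        X[:, :, 0] = (X[:, :, 0] - mean) / std
        return X

    def preprocess_output(y, mean, std):
        y = (y - mean) / std
        return y

    def postprocess_output(y, mean, std):
        # Inverse of preprocess_output: back to the original scale
        y = (y * std) + mean
        return y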
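The scaling helpers above amount to standardizing with a reference mean and standard deviation and then inverting that transform. Below is a small round-trip check on made-up numbers, assuming the statistics are taken from the training portion only; the names `train`, `later`, `mean_train`, and `std_train` are hypothetical.

```python
import numpy as np

values = np.array([10.0, 12.0, 14.0, 16.0, 30.0, 32.0])
train, later = values[:4], values[4:]

# Statistics come from the training portion only
mean_train = train.mean()
std_train = train.std()  # np.std defaults to the population standard deviation

# Apply the same transform to both portions
train_scaled = (train - mean_train) / std_train
later_scaled = (later - mean_train) / std_train

# Inverting the transform recovers the original values
restored = later_scaled * std_train + mean_train

print(train_scaled.mean(), train_scaled.std())
print(np.allclose(restored, later))
```

Reusing the training statistics for later data keeps information from the validation and test periods out of the preprocessing step.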

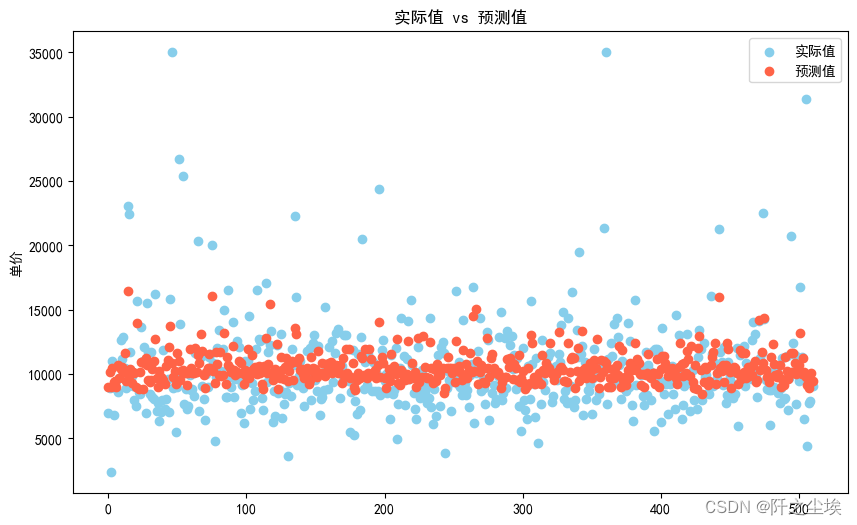

    def plot_predictions_unistep(model, X_test, y_test, mean_ref, std_ref):
        preds = model.predict(X_test).flatten().tolist()
        # Convert predictions and targets back to the original scale
        preds = [postprocess_output(i, mean_ref, std_ref) for i in preds]
        y_t = [postprocess_output(i, mean_ref, std_ref) for i in y_test.tolist()]
        # Compute the error with both series on the original scale
        er = mse(y_t, preds)
        plt.figure(figsize=(12, 8))
        plt.plot(y_t, label='Actual values')
        plt.plot(preds, label='Predictions', alpha=.7)
        plt.legend()
        plt.title('MSE = {}'.format(er))
        plt.show()
        return preds

    n_steps = 5

    X, y = create_sequences_unistep(df, n_steps)

    # Prepare train, validation and test data (chronological split)
    nr_vals_train = 500
    nr_vals_validation = 50
    X_train = X[:nr_vals_train]
    y_train = y[:nr_vals_train]
    X_val = X[nr_vals_train: nr_vals_train + nr_vals_validation]
    y_val = y[nr_vals_train: nr_vals_train + nr_vals_validation]
    # The test data starts after the validation block so the two do not overlap
    X_test = X[nr_vals_train + nr_vals_validation:]
    y_test = y[nr_vals_train + nr_vals_validation:]
    print('X train shape: {}'.format(X_train.shape))
    print('y train shape: {}'.format(y_train.shape))
    print('X validation shape: {}'.format(X_val.shape))
    print('y validation shape: {}'.format(y_val.shape))

    # Standardize the target feature with training statistics -> mean 0 and std 1
    mean_ref = np.mean(X_train[:, :, 0])
    std_ref = np.std(X_train[:, :, 0])

    # Scale X's
    X_train = preprocess_input(X_train, mean_ref, std_ref)
    X_val = preprocess_input(X_val, mean_ref, std_ref)
    X_test = preprocess_input(X_test, mean_ref, std_ref)

    # Scale y's
    y_train = preprocess_output(y_train, mean_ref, std_ref)
    y_val = preprocess_output(y_val, mean_ref, std_ref)
    y_test = preprocess_output(y_test, mean_ref, std_ref)

    model = train_model(X_train, y_train, X_val, y_val, n_steps)

    # Plot predictions on the training set
    plot_predictions_unistep(model, X_train, y_train, mean_ref, std_ref)
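When reading the MSE shown in the plot title, it helps to remember that the value depends on the scale of the data. The sketch below uses made-up numbers and hypothetical values for `mean_ref` and `std_ref` to show how the error on the standardized scale relates to the error in original units.

```python
import numpy as np

mean_ref, std_ref = 20.0, 5.0  # hypothetical reference statistics
y_true = np.array([18.0, 21.0, 25.0, 30.0])  # made-up actual values
y_pred = np.array([19.0, 20.0, 27.0, 28.0])  # made-up predictions

# The same series on the standardized scale
y_true_scaled = (y_true - mean_ref) / std_ref
y_pred_scaled = (y_pred - mean_ref) / std_ref

mse_original = np.mean((y_true - y_pred) ** 2)
mse_scaled = np.mean((y_true_scaled - y_pred_scaled) ** 2)

print(mse_original)               # error in original units squared
print(mse_scaled * std_ref ** 2)  # the scaled error converted back
```

The two printed values agree because standardization divides every error by `std_ref`, so the MSE shrinks by a factor of `std_ref ** 2`. Comparing a series on one scale with a series on the other would give a number that has no such interpretation.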
