Contents

- Preface

- I. Epoch, batch size, and iteration

- II. Example

- 1. Notes

- 2. Code example

- Summary

Preface

This post covers the basics of loading a dataset in PyTorch with Dataset and DataLoader.

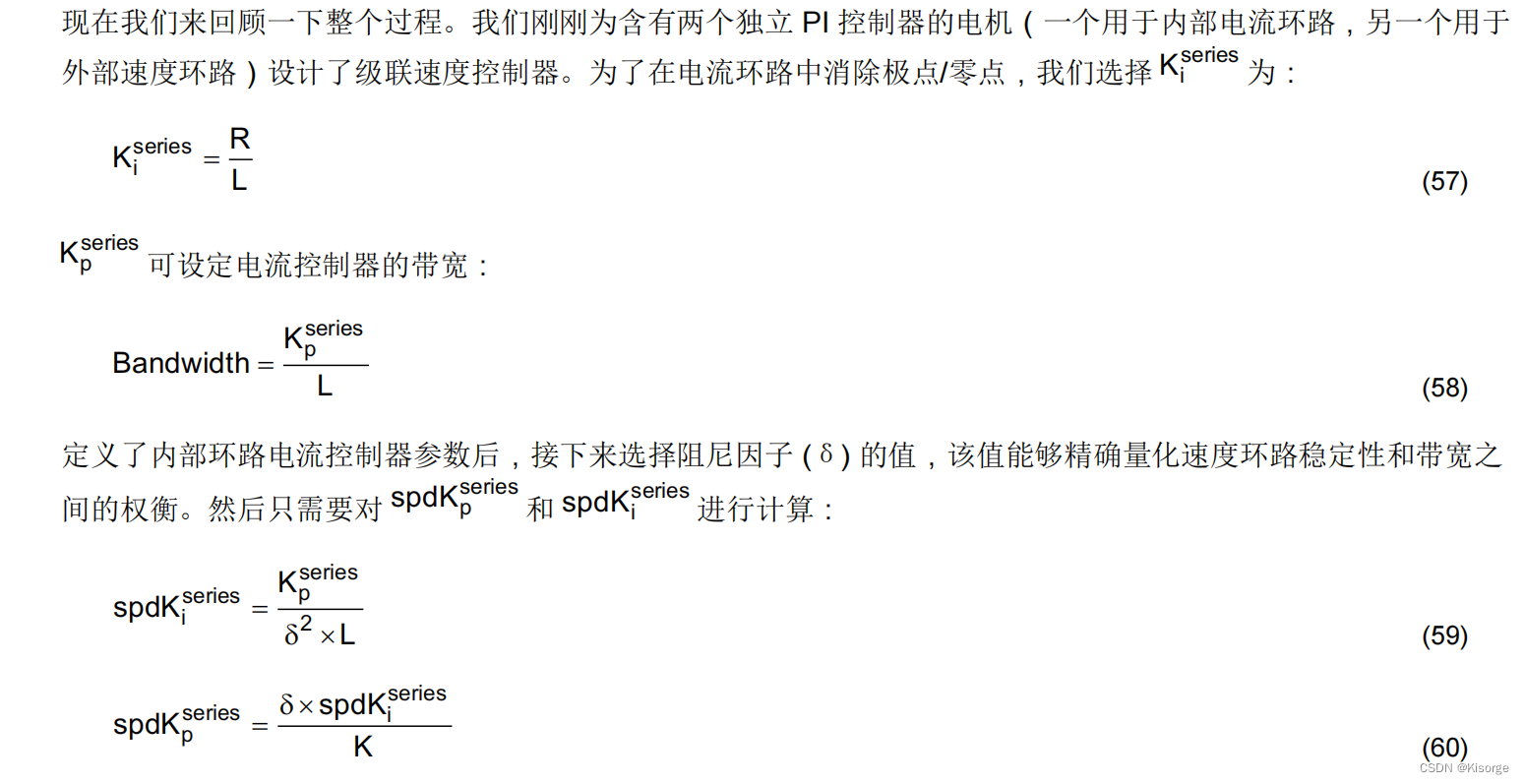

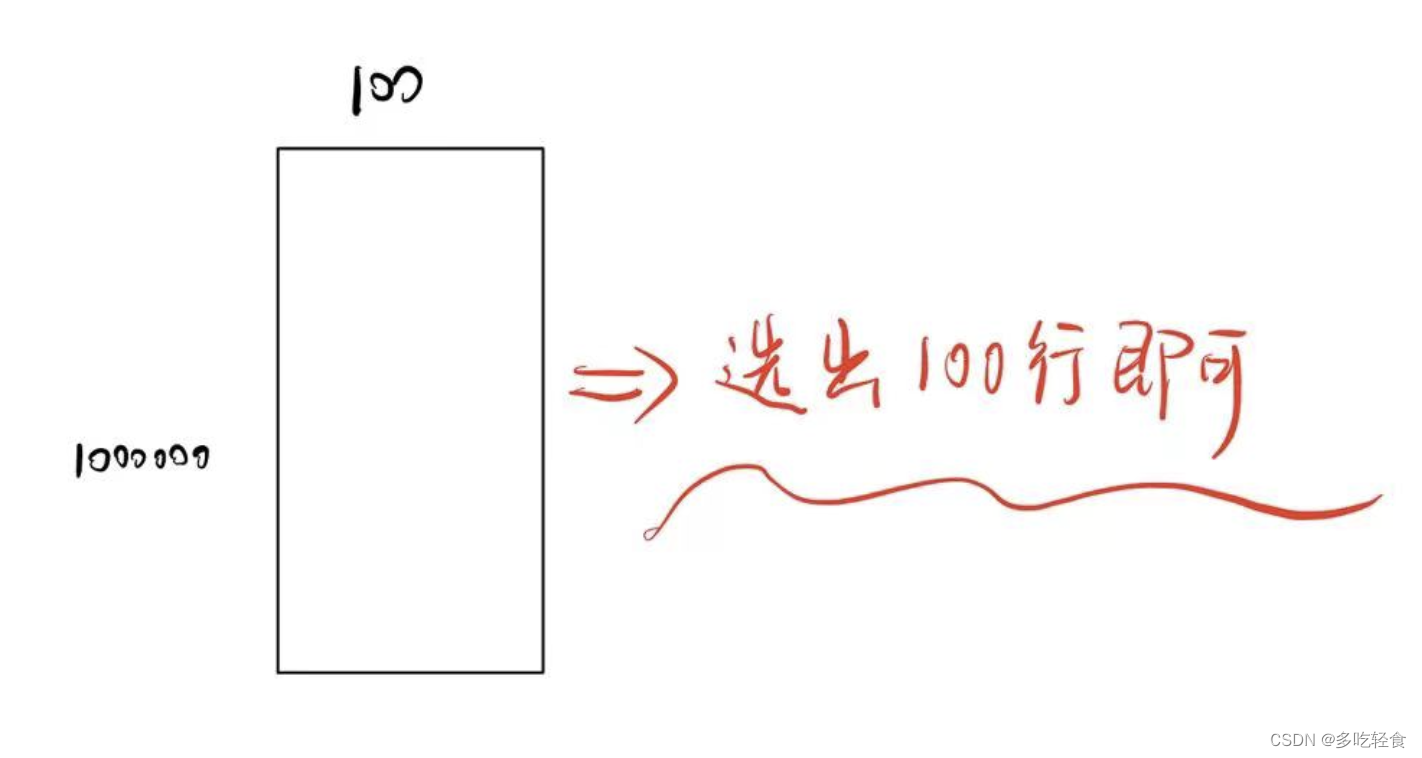

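I. Epoch, batch size, and iteration

epoch: one forward pass and one backward pass over all of the training samples

batch size: the number of samples drawn for each mini-batch

iteration: one parameter update on one mini-batch; the number of iterations per epoch is the total number of samples divided by the batch size (rounded up if the last batch is smaller)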
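The relationship between the three terms is plain arithmetic, so a small sketch may help. The helper name `iterations_per_epoch` and the sample counts below are made up for illustration; the `drop_last` flag mirrors the DataLoader option of the same name.

```python
import math

def iterations_per_epoch(num_samples: int, batch_size: int, drop_last: bool = False) -> int:
    """Number of mini-batches (iterations) needed to see every sample once."""
    if drop_last:
        return num_samples // batch_size        # discard the final partial batch
    return math.ceil(num_samples / batch_size)  # keep the final partial batch

# Hypothetical numbers, chosen only for illustration.
num_samples, batch_size, num_epochs = 1000, 32, 100

per_epoch = iterations_per_epoch(num_samples, batch_size)
print(per_epoch)               # 32 iterations: 31 full batches plus one batch of 8
print(per_epoch * num_epochs)  # 3200 parameter updates over 100 epochs
```

With `drop_last=True` the same numbers give 31 iterations per epoch, because the trailing 8 samples are skipped.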

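II. Example

1. Notes

1. Dataset is an abstract class. It is not meant to be instantiated directly; you subclass it and implement __getitem__ and __len__.

2. DataLoader loads the data from a Dataset in mini-batches: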

train_loader = DataLoader(dataset=dataset, batch_size=32, shuffle=True, num_workers=2)

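dataset: the dataset to load from

batch_size: the number of samples in each batch

shuffle: whether to shuffle the samples before batching, so that each epoch sees them in a different random order

num_workers: the number of worker subprocesses used to load data in parallel (0 loads in the main process)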
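To see these parameters in action without a data file, here is a minimal sketch. `ToyDataset` and its random contents are hypothetical stand-ins, and `num_workers=0` is used so the snippet runs in the main process on any platform.

```python
import torch
from torch.utils.data import Dataset, DataLoader

class ToyDataset(Dataset):
    """Hypothetical in-memory dataset: 10 samples, 8 features, 1 binary label."""

    def __init__(self, num_samples: int = 10):
        self.x_data = torch.randn(num_samples, 8)
        self.y_data = torch.randint(0, 2, (num_samples, 1)).float()

    def __getitem__(self, index):
        # Return one (features, label) pair; DataLoader stacks these into batches.
        return self.x_data[index], self.y_data[index]

    def __len__(self):
        return self.x_data.shape[0]

dataset = ToyDataset()
# num_workers=0 loads in the main process, so no __main__ guard is needed here.
loader = DataLoader(dataset=dataset, batch_size=4, shuffle=True, num_workers=0)

batch_shapes = [(tuple(x.shape), tuple(y.shape)) for x, y in loader]
print(len(loader))   # 3 batches: 4 + 4 + 2 samples
print(batch_shapes)
```

The last batch holds only the 2 leftover samples. Passing `drop_last=True` to DataLoader would discard it instead.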

2. Code example

The code is as follows (example):

import torch

import numpy as np

from torch.utils.data import Dataset

from torch.utils.data import DataLoader

import matplotlib.pyplot as plt

# prepare dataset

class DiabetesDataset(Dataset):

def __init__(self, filepath):

xy = np.loadtxt(filepath, delimiter=',', dtype=np.float32)

self.len = xy.shape[0] # shape(多少行,多少列)

self.x_data = torch.from_numpy(xy[:, :-1])

self.y_data = torch.from_numpy(xy[:, [-1]])

def __getitem__(self, index):

return self.x_data[index], self.y_data[index]

def __len__(self):

return self.len

dataset = DiabetesDataset('diabetes.csv')

train_loader = DataLoader(dataset=dataset, batch_size=32, shuffle=True, num_workers=2) # num_workers 多线程

# design model using class

class Model(torch.nn.Module):

def __init__(self):

super(Model, self).__init__()

self.linear1 = torch.nn.Linear(8, 6)

self.linear2 = torch.nn.Linear(6, 4)

self.linear3 = torch.nn.Linear(4, 1)

self.sigmoid = torch.nn.Sigmoid()

def forward(self, x):

x = self.sigmoid(self.linear1(x))

x = self.sigmoid(self.linear2(x))

x = self.sigmoid(self.linear3(x))

return x

model = Model()

# construct loss and optimizer

criterion = torch.nn.BCELoss(reduction='mean')

optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

# training cycle forward, backward, update

if __name__ == '__main__':

epoch_list = []

loss_list = []

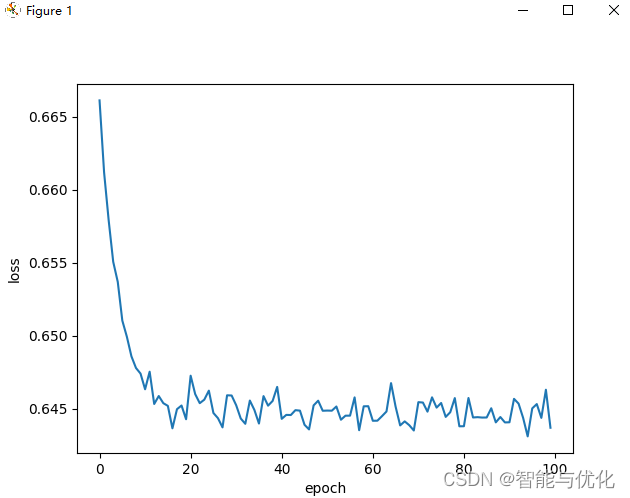

for epoch in range(100):

epoch_list.append(epoch)

loss_temp = 0

for i, data in enumerate(train_loader, 0): # train_loader 是先shuffle后mini_batch

inputs, labels = data

y_pred = model(inputs)

loss = criterion(y_pred, labels)

loss_temp += loss.item()

print(epoch, i, loss.item())

optimizer.zero_grad()

loss.backward()

optimizer.step()

loss_list.append(loss_temp)

plt.plot(epoch_list, loss_list)

plt.ylabel('loss')

plt.xlabel('epoch')

plt.show()

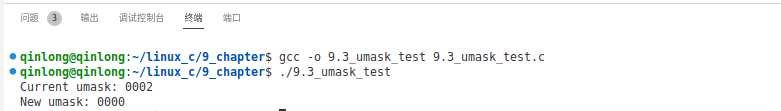

得到如下结果:
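One detail in DiabetesDataset that is easy to miss is the difference between `xy[:, [-1]]` and `xy[:, -1]`. The sketch below uses a tiny made-up CSV (not the real diabetes.csv) to show the resulting shapes. Keeping the label column 2-D matters because the model outputs a tensor of shape (batch, 1) and BCELoss expects the prediction and the target to have the same shape.

```python
import io
import numpy as np

# Hypothetical stand-in for diabetes.csv: 3 rows, 8 feature columns, 1 label column.
csv_text = (
    "0.1,0.2,0.3,0.4,0.5,0.6,0.7,0.8,1\n"
    "0.2,0.3,0.4,0.5,0.6,0.7,0.8,0.9,0\n"
    "0.3,0.4,0.5,0.6,0.7,0.8,0.9,1.0,1\n"
)
xy = np.loadtxt(io.StringIO(csv_text), delimiter=',', dtype=np.float32)

features = xy[:, :-1]    # every column except the last
labels_2d = xy[:, [-1]]  # indexing with a list keeps the column axis
labels_1d = xy[:, -1]    # indexing with an integer drops it

print(features.shape)   # (3, 8)
print(labels_2d.shape)  # (3, 1)
print(labels_1d.shape)  # (3,)
```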

Summary

PyTorch Study Notes 7: loading datasets