import aiofiles

import aiohttp

import asyncio

import requests

from lxml import etree

from aiohttp import TCPConnector

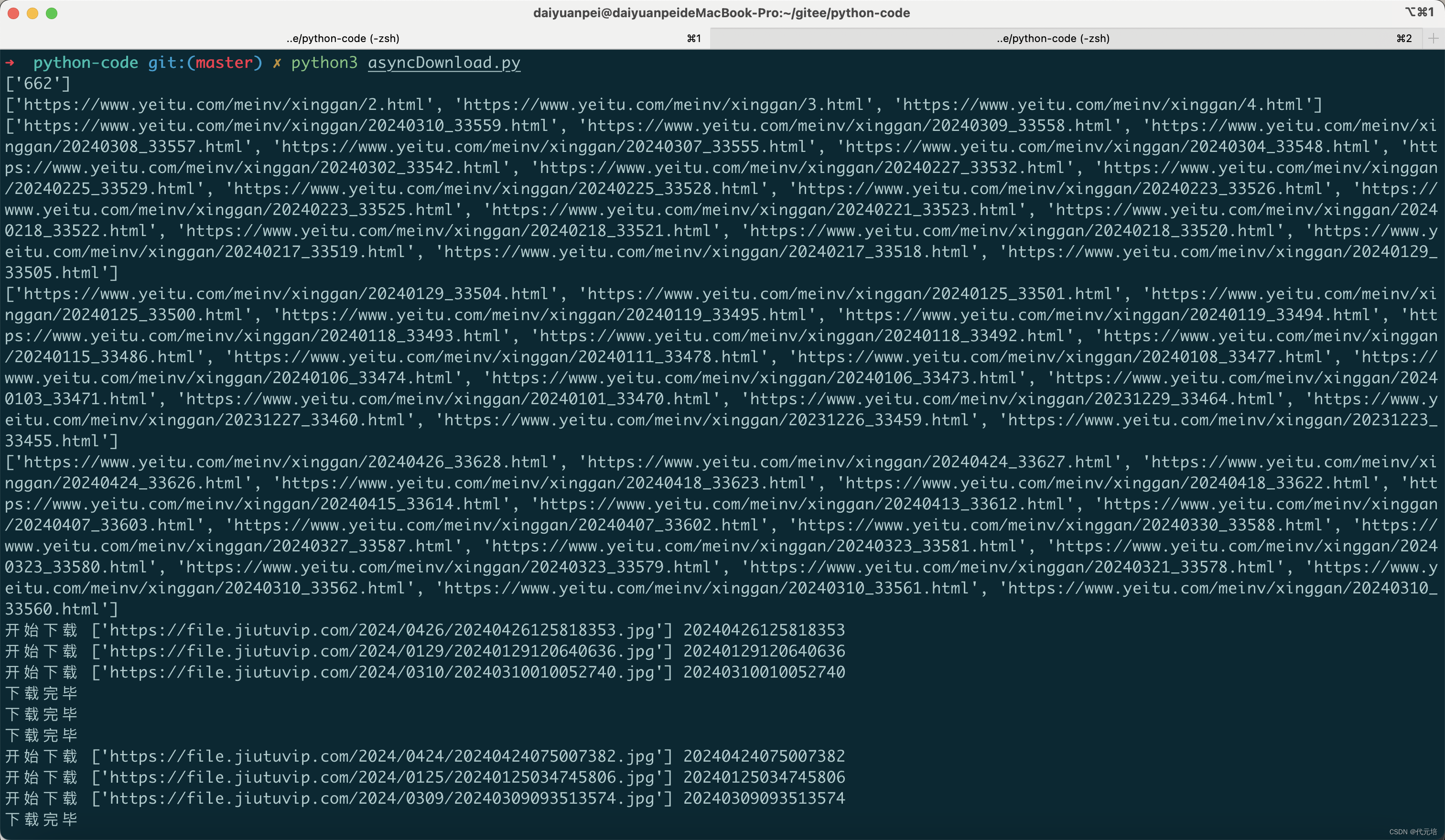

class Spider:

def __init__(self, value):

# 起始url

self.start_url = value

# 下载单个图片

@staticmethod

async def download_one(url):

name = url[0].split("/")[-1][:-4]

print("开始下载", url, name)

headers = {

'Host': 'file.jiutuvip.com',

'User-Agent': 'Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, '

'like Gecko) Chrome/124.0.0.0 Mobile Safari/537.36',

'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.8',

'Accept-Language': 'zh-CN,zh;q=0.9',

'Accept-Encoding': 'gzip, deflate, br, zstd',

'Connection': 'keep-alive',

'Upgrade-Insecure-Requests': '1',

'Sec-Fetch-Dest': 'document',

'Sec-Fetch-Mode': 'navigate',

'Sec-Fetch-Site': 'none',

'Sec-Fetch-User': '?1',

'TE': 'trailers'

}

# 发送网络请求

async with aiohttp.ClientSession(connector=TCPConnector(ssl=False)) as session:

async with session.get(url=url[0], headers=headers) as resp: # 相当于 requests.get(url=url[0], headers=head)

# await resp.text() => resp.text

content = await resp.content.read() # => resp.content

# 写入文件

async with aiofiles.open('./imgs/' + name + '.webp', "wb") as f:

await f.write(content)

print("下载完毕")

# 获取图片的url

async def download(self, href_list):

for href in href_list:

async with aiohttp.ClientSession(connector=TCPConnector(ssl=False)) as session:

async with session.get(url=href) as child_res:

html = await child_res.text()

child_tree = etree.HTML(html)

src = child_tree.xpath("//div[@class='article-body cate-6']/a/img/@src") # 选手图片地址 url 列表

await self.download_one(src)

# 获取图片详情url

async def get_img_url(self, html_url):

async with aiohttp.ClientSession(connector=TCPConnector(ssl=False)) as session:

async with session.get(url=html_url) as resp:

html = await resp.text()

tree = etree.HTML(html)

href_list = tree.xpath("//div[@class='uk-container']/ul/li/a/@href") # 选手详情页 url 列表

print(href_list)

await self.download(href_list)

# 页面总页数

@staticmethod

def get_html_url(url):

page = 2

response = requests.get(url=url)

response.encoding = "utf-8"

tree = etree.HTML(response.text)

total_page = tree.xpath("//*[@class='pages']/a[12]/text()") # 页面总页数

print(total_page)

html_url_list = []

while page <= 4: # int(total_page[0]) # 只取第 2、3、4 页

next_url = f"https://www.yeitu.com/meinv/xinggan/{page}.html"

html_url_list.append(next_url)

page += 1

print(html_url_list)

return html_url_list

async def main(self):

# 拿到每页url列表

html_url_list = self.get_html_url(url=self.start_url) # url列表

tasks = []

for html_url in html_url_list:

t = asyncio.create_task(self.get_img_url(html_url)) # 创建任务

tasks.append(t)

await asyncio.wait(tasks)

if __name__ == '__main__':

url = "https://www.yeitu.com/meinv/xinggan/"

sp = Spider(url)

# loop = asyncio.get_event_loop()

loop = asyncio.new_event_loop()

asyncio.set_event_loop(loop)

loop.run_until_complete(sp.main())

![[Windows] 植物大战僵尸杂交版](https://img-blog.csdnimg.cn/img_convert/079456a2ecbcdb04ca5a8ae9164f8ac7.jpeg)