Paper: [2307.10181] Community-Aware Transformer for Autism Prediction in fMRI Connectome (arxiv.org)

Code: GitHub - ubc-tea/Com-BrainTF: The official PyTorch implementation of the paper "Community-Aware Transformer for Autism Prediction in fMRI Connectome", accepted by MICCAI 2023

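The English is typed entirely by hand! It summarizes and paraphrases the original paper. Spelling and grammar mistakes are hard to avoid, so corrections in the comments are welcome! This post leans towards personal notes, so read with caution!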

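1. TL;DR

1.1. Thoughts

(1)Whoa, the paper opens by calling autism a lifelong disorder. I looked it up and it really is: depression can be cured, but autism mostly cannot. Why? That is surprising. I have not met anyone with autism yet

1.2. Paper summary figure

2. Section-by-section close reading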

2.1. Abstract

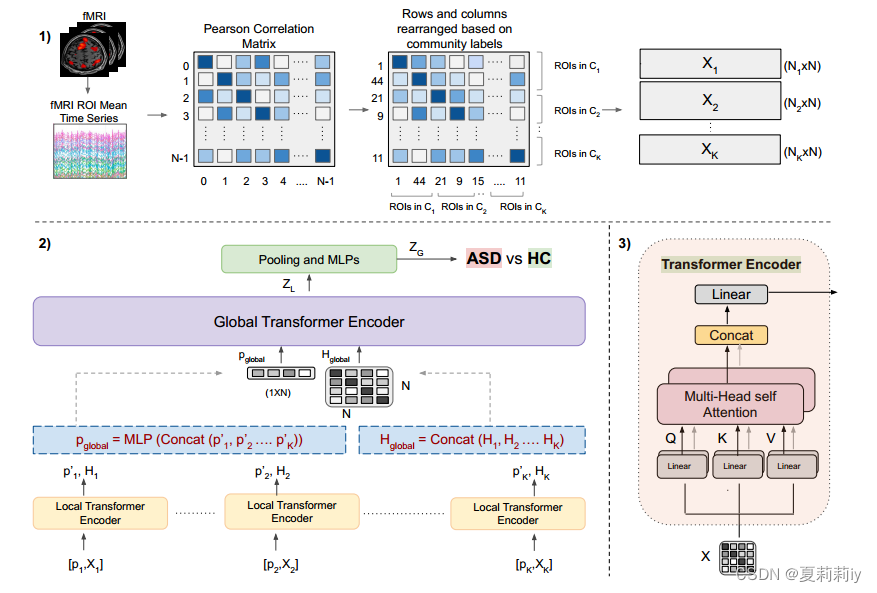

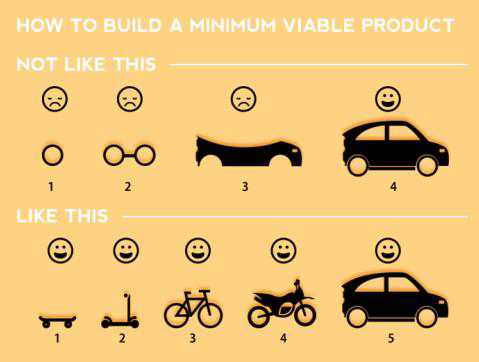

①Treating every ROI equally overlooks the community structure among ROIs. The authors therefore propose the Com-BrainTF model, which learns both local (within-community) and global (between-community) representations

②They share the parameters across communities but give each community its own specific token

2.2. Introduction

①ASD patients show abnormalities in the default mode network (DMN) and are affected by significant changes in the dorsal attention network (DAN) and the DMN

②Com-BrainTF is a hierarchical transformer: a local transformer learns community-specific embeddings, and a global transformer aggregates information over the whole brain

③Sharing the local transformer parameters can avoid over-parameterization
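A back-of-the-envelope count shows why sharing helps. The sketch below assumes a standard transformer encoder layer (multi-head attention, a two-layer feed-forward block and two layer norms, all with biases). `d_model`, `d_ff` and `num_communities` are hypothetical values chosen for illustration, not the paper's configuration.

```python
def encoder_layer_params(d_model, d_ff):
    """Parameter count of one standard transformer encoder layer (with biases)."""
    attention = 4 * d_model * d_model + 4 * d_model      # Q, K, V and output projections
    feed_forward = 2 * d_model * d_ff + d_ff + d_model   # two linear layers
    layer_norms = 2 * 2 * d_model                        # scale and shift, two norms
    return attention + feed_forward + layer_norms

# Hypothetical sizes, for illustration only
d_model, d_ff, num_communities = 200, 1024, 8

per_encoder = encoder_layer_params(d_model, d_ff)

# Option A: one independent local transformer per community
separate = num_communities * per_encoder

# Option B: one shared local transformer plus one prompt token per community
shared = per_encoder + num_communities * d_model

print(f"separate: {separate:,} parameters")
print(f"shared:   {shared:,} parameters")
```

With these assumed sizes the shared design only adds one `d_model`-sized vector per community on top of a single encoder, so the count stays close to that of one encoder.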

2.3. Method

2.3.1. Overview

(1)Problem Definition

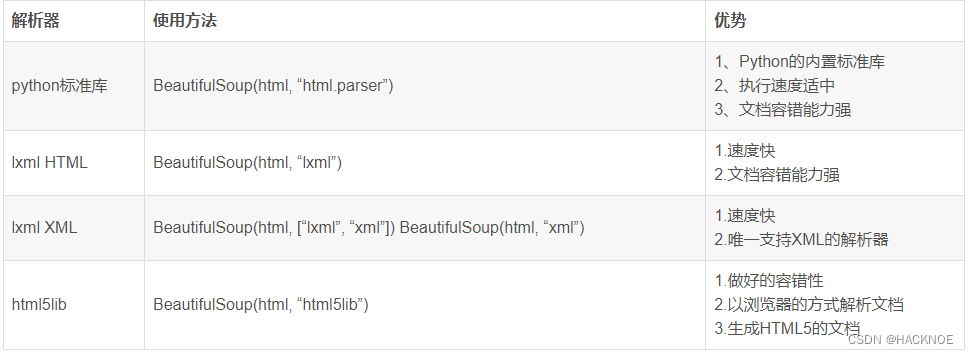

①They use Pearson correlation coefficients to obtain the functional connectivity (FC) matrices

②They then divide the ROIs into $K$ communities

③The model learns node embeddings from these inputs

④Next, a pooling layer followed by MLPs predicts the labels
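The first two steps of this list, building an FC matrix with Pearson correlation and grouping the ROIs into communities, can be sketched in plain Python. The signals and community labels below are made up; in practice the labels would come from an atlas-to-network mapping. Keeping all columns in each community block is an assumption of this sketch.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length, non-constant series."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def functional_connectivity(timeseries):
    """timeseries: N ROI signals of T samples each -> N x N correlation matrix."""
    n = len(timeseries)
    return [[pearson(timeseries[i], timeseries[j]) for j in range(n)]
            for i in range(n)]

def group_by_community(fc, labels):
    """Split the rows of the FC matrix by community label.

    labels[i] is the community index of ROI i. Every block keeps all N
    columns, so a community with N_k ROIs gives an N_k x N block.
    """
    groups = {}
    for roi, label in enumerate(labels):
        groups.setdefault(label, []).append(fc[roi])
    return [groups[k] for k in sorted(groups)]

# Toy signals for 4 hypothetical ROIs (values invented for illustration)
roi_signals = [
    [1.0, 2.0, 3.0, 4.0],
    [2.0, 4.0, 6.0, 8.0],   # perfectly correlated with ROI 0
    [4.0, 3.0, 2.0, 1.0],   # perfectly anti-correlated with ROI 0
    [1.0, 3.0, 2.0, 4.0],
]
fc = functional_connectivity(roi_signals)

labels = [0, 0, 1, 1]       # hypothetical community assignment
blocks = group_by_community(fc, labels)
```

Each entry of `blocks` is the input for one community, which is the form a per-community transformer would consume.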

(2)Overview of our Pipeline

①Their local-global transformer architecture consists of a local transformer, a global transformer and a pooling layer

②The overall framework

2.3.2. Local-global transformer encoder

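①With the input FC, the learned node feature matrix is computed by concatenating the attention heads and projecting the result: $H = \left(\Vert_{m=1}^{M} h^m\right) W_O$

②In the transformer encoder module, each head is scaled dot-product attention, $h^m = \mathrm{softmax}\left(\frac{Q^m (K^m)^\top}{\sqrt{d_k}}\right) V^m$, where $Q^m$, $K^m$ and $V^m$ are linear projections of the input and $M$ is the number of heads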
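The encoder's attention step follows the standard multi-head pattern, which a few lines of plain Python can illustrate. This is a minimal sketch with toy weights and a row-vector convention (`Q = X W_Q`), not the authors' implementation; all matrices below are invented.

```python
import math

def matmul(a, b):
    """Multiply an n x m matrix by an m x p matrix (lists of lists)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(row):
    m = max(row)                       # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention_head(x, w_q, w_k, w_v):
    """One head: softmax(Q K^T / sqrt(d_k)) V. Returns (output, attention weights)."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]            # transpose of K
    scores = matmul(q, k_t)
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

def multi_head(x, heads, w_o):
    """Concatenate all head outputs along the feature axis, then project with W_O."""
    outputs = [attention_head(x, *h)[0] for h in heads]
    concat = [sum((out[i] for out in outputs), []) for i in range(len(x))]
    return matmul(concat, w_o)

# 3 tokens with 2 features each, M = 2 heads with d_k = 1 (toy values)
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
head_a = ([[1.0], [0.0]], [[1.0], [0.0]], [[1.0], [0.0]])   # reads feature 0
head_b = ([[0.0], [1.0]], [[0.0], [1.0]], [[0.0], [1.0]])   # reads feature 1
w_o = [[1.0, 0.0], [0.0, 1.0]]                              # identity projection

h = multi_head(x, [head_a, head_b], w_o)
_, attn = attention_head(x, *head_a)
```

Each row of `attn` sums to 1, so every output row is a weighted average of the value vectors.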

(1)Local Transformer

①They apply the same local transformer to every community's input, but give each community its own unique learnable token
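The sharing scheme can be sketched as one encoder function reused for all communities, with a different token attached to each input. This sketch assumes the token is prepended to the community's rows and read back from the first output position. The parameter-free self-attention is only a stand-in for the real shared encoder, and all values are invented.

```python
import math

def self_attention(tokens):
    """Parameter-free self-attention (Q = K = V = tokens); stand-in for the shared encoder."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        out.append([sum(e / total * v[j] for e, v in zip(exps, tokens))
                    for j in range(d)])
    return out

def local_transformer(prompt, community_rows):
    """Prepend the community's token, encode, then split the output again."""
    encoded = self_attention([prompt] + community_rows)
    return encoded[0], encoded[1:]      # updated token, node features

# Two hypothetical communities with 3 features per ROI
communities = [
    [[1.0, 0.2, 0.1], [0.2, 1.0, 0.3]],   # community 0: 2 ROIs
    [[0.1, 0.3, 1.0]],                    # community 1: 1 ROI
]
# One token per community; these would be learnable parameters in a real model
prompts = [[0.5, 0.0, 0.0], [0.0, 0.0, 0.5]]

# The same function (shared parameters) handles every community
results = [local_transformer(p, rows) for p, rows in zip(prompts, communities)]
```

Only `prompts` differs between communities here, which mirrors the idea of shared weights plus community-specific tokens.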

(2)Global Transformer

①The global transformer takes the outputs of the local transformers and fuses them into whole-brain node embeddings

2.3.3. Graph Readout Layer

①They aggregate the node embeddings with OCRead.

②The graph-level embedding is calculated by $Z_G = A^\top Z_L$, where $Z_L$ denotes the learned node embeddings and $A$ is a learnable assignment matrix computed by the OCRead layer

③Afterwards, $Z_G$ is flattened and fed into an MLP for the final prediction

④Loss: CrossEntropy (CE) loss
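The readout, flatten, classify and loss steps can be traced on a toy example. This sketch uses a generic soft-assignment pooling (row-wise softmax over given scores), not the actual OCRead computation, and a single linear layer stands in for the MLP. All numbers are invented.

```python
import math

def softmax(row):
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def readout(node_emb, assign_logits):
    """Graph-level embedding A^T Z, with A (N x C) the row-wise softmax of assign_logits."""
    a = [softmax(r) for r in assign_logits]
    n, d, c = len(node_emb), len(node_emb[0]), len(a[0])
    return [[sum(a[i][k] * node_emb[i][j] for i in range(n)) for j in range(d)]
            for k in range(c)]

def cross_entropy(logits, target):
    """Cross-entropy of one sample: -log softmax(logits)[target]."""
    return -math.log(softmax(logits)[target])

node_emb = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # 3 nodes, 2 features
assign_logits = [[0.0, 0.0]] * 3                  # uniform assignment to 2 clusters

graph_emb = readout(node_emb, assign_logits)      # 2 x 2 graph-level embedding
flat = [v for row in graph_emb for v in row]      # flattened classifier input

# Stand-in for the MLP: one linear layer with made-up weights, 2 classes
weights = [[0.5, -0.5, 0.5, -0.5], [-0.5, 0.5, -0.5, 0.5]]
class_logits = [sum(w * x for w, x in zip(row, flat)) for row in weights]
loss = cross_entropy(class_logits, target=1)
```

With uniform assignments every cluster receives the same average of the nodes, so the two class logits tie and the loss equals `log(2)`, the value for an uninformative two-class prediction.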

2.4. Experiments

2.4.1. Datasets and Experimental Settings

(1)ABIDE

(2)Experimental Settings

2.4.2. Quantitative and Qualitative Results

2.4.3. Ablation studies

(1)Input: node features vs. class tokens of local transformers

(2)Output: Cross Entropy loss on the learned node features vs. prompt token

2.5. Conclusion

2.6. Supplementary Materials

2.6.1. Variations on the Number of Prompts

2.6.2. Attention Scores of ASD vs. HC in Comparison between Com-BrainTF (ours) and BNT (baseline)

2.6.3. Decoded Functional Group Differences of ASD vs. HC

3. Supplementary knowledge

4. Reference List

Bannadabhavi A. et al. (2023) 'Community-Aware Transformer for Autism Prediction in fMRI Connectome', 26th International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI 2023), doi: https://doi.org/10.48550/arXiv.2307.10181
