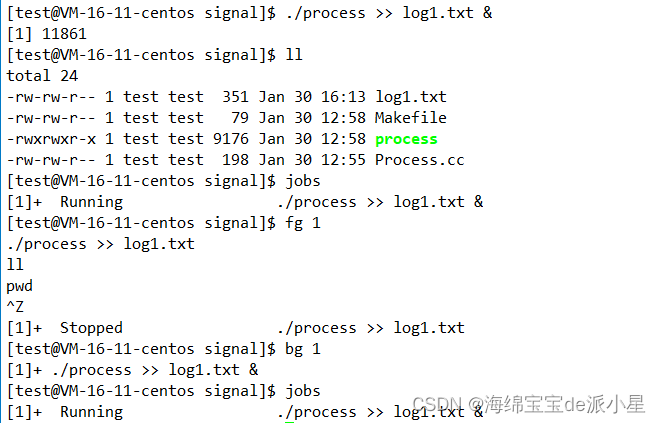

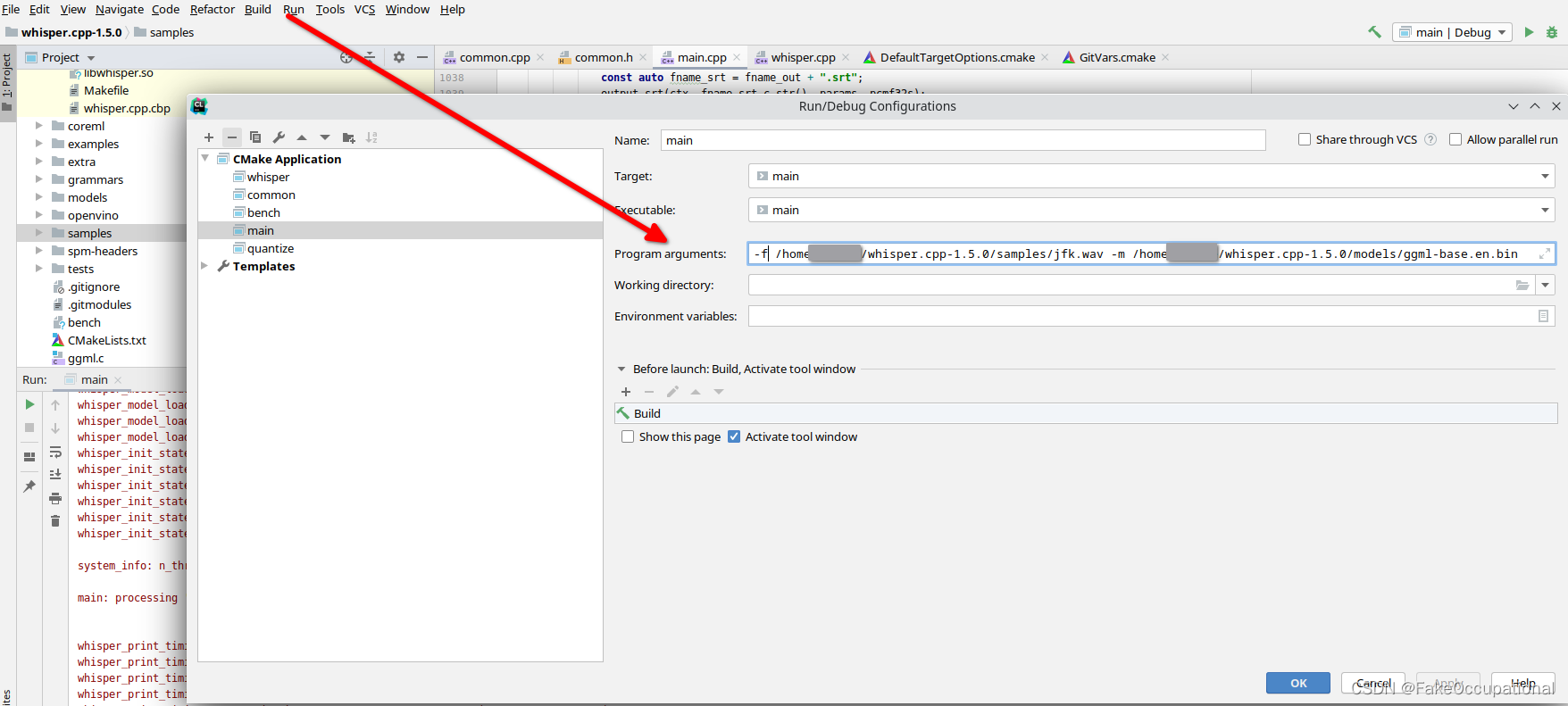

Parameter settings

/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug/bin/main

options:

-h, --help [default] show this help message and exit

-t N, --threads N [4 ] number of threads to use during computation

-p N, --processors N [1 ] number of processors to use during computation

-ot N, --offset-t N [0 ] time offset in milliseconds

-on N, --offset-n N [0 ] segment index offset

-d N, --duration N [0 ] duration of audio to process in milliseconds

-mc N, --max-context N [-1 ] maximum number of text context tokens to store

-ml N, --max-len N [0 ] maximum segment length in characters

-sow, --split-on-word [false ] split on word rather than on token

-bo N, --best-of N [5 ] number of best candidates to keep

-bs N, --beam-size N [5 ] beam size for beam search

-wt N, --word-thold N [0.01 ] word timestamp probability threshold

-et N, --entropy-thold N [2.40 ] entropy threshold for decoder fail

-lpt N, --logprob-thold N [-1.00 ] log probability threshold for decoder fail

-debug, --debug-mode [false ] enable debug mode (eg. dump log_mel)

-tr, --translate [false ] translate from source language to english

-di, --diarize [false ] stereo audio diarization

-tdrz, --tinydiarize [false ] enable tinydiarize (requires a tdrz model)

-nf, --no-fallback [false ] do not use temperature fallback while decoding

-otxt, --output-txt [false ] output result in a text file

-ovtt, --output-vtt [false ] output result in a vtt file

-osrt, --output-srt [false ] output result in a srt file

-olrc, --output-lrc [false ] output result in a lrc file

-owts, --output-words [false ] output script for generating karaoke video

-fp, --font-path [/System/Library/Fonts/Supplemental/Courier New Bold.ttf] path to a monospace font for karaoke video

-ocsv, --output-csv [false ] output result in a CSV file

-oj, --output-json [false ] output result in a JSON file

-ojf, --output-json-full [false ] include more information in the JSON file

-of FNAME, --output-file FNAME [ ] output file path (without file extension)

-ps, --print-special [false ] print special tokens

-pc, --print-colors [false ] print colors

-pp, --print-progress [false ] print progress

-nt, --no-timestamps [false ] do not print timestamps

-l LANG, --language LANG [en ] spoken language ('auto' for auto-detect)

-dl, --detect-language [false ] exit after automatically detecting language

--prompt PROMPT [ ] initial prompt

-m FNAME, --model FNAME [models/ggml-base.en.bin] model path

-f FNAME, --file FNAME [ ] input WAV file path

-oved D, --ov-e-device DNAME [CPU ] the OpenVINO device used for encode inference

-ls, --log-score [false ] log best decoder scores of tokens

-ng, --no-gpu [false ] disable GPU

Debug settings

Project dependencies and CMakeLists.txt

set(TARGET main)

add_executable(${TARGET} main.cpp)

include(DefaultTargetOptions)

target_link_libraries(${TARGET} PRIVATE common whisper ${CMAKE_THREAD_LIBS_INIT})

#include "common.h"

#include "whisper.h"

#include <cmath>

#include <fstream>

#include <cstdio>

#include <string>

#include <thread>

#include <vector>

#include <cstring>

main

int main(int argc, char ** argv) {

// 1. Parse the arguments

whisper_params params;

// Parse the command-line arguments and store the result in params

if (whisper_params_parse(argc, argv, params) == false) {… }

// Check whether the list of input file names is empty

if (params.fname_inp.empty()) {… }// std::vector<std::string> fname_inp = {};

// Check whether the language argument is valid

if (params.language != "auto" && whisper_lang_id(params.language.c_str()) == -1) {… }

// Check the two diarization flags: if both are set, run the error-handling code

if (params.diarize && params.tinydiarize) {… }

// whisper init

struct whisper_context_params cparams;

cparams.use_gpu = params.use_gpu;

// 2. Initialize the whisper context from the given model file and parameters

struct whisper_context * ctx = whisper_init_from_file_with_params(params.model.c_str(), cparams);

if (ctx == nullptr) {

fprintf(stderr, "error: failed to initialize whisper context\n");

return 3;

}

// initialize openvino encoder. this has no effect on whisper.cpp builds that don't have OpenVINO configured


whisper_ctx_init_openvino_encoder(ctx, nullptr, params.openvino_encode_device.c_str(), nullptr);

// 3. Loop over the list of input files

for (int f = 0; f < (int) params.fname_inp.size(); ++f) {… }

whisper_print_timings(ctx); // print the timing information collected in the whisper context

whisper_free(ctx); // release the resources held by the whisper context

return 0;

}

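1. Parse the arguments

- Omitted.

2. Initialize the whisper context from the given model file and parameters

- See "webassembly003 whisper.cpp main project, part 2: configuration from the given model file and parameters".

3. Loop over the list of input files

3.1 Parse the parameters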

const auto fname_inp = params.fname_inp[f]; // "/home/***/whisper.cpp-1.5.0/samples/jfk.wav"

const auto fname_out = f < (int) params.fname_out.size() && !params.fname_out[f].empty() ? params.fname_out[f] : params.fname_inp[f]; // "/home/***/whisper.cpp-1.5.0/samples/jfk.wav"
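The fallback used for `fname_out` can be read in isolation: take the user-supplied output name for this index if there is a non-empty one, otherwise reuse the input path. The following is a minimal sketch of that rule; `pick_output_base` is a hypothetical helper written for illustration and is not part of whisper.cpp.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical helper (not part of whisper.cpp): returns the output base name
// for the f-th input, falling back to the input path when no non-empty
// output name was supplied for that index.
std::string pick_output_base(const std::vector<std::string> & inputs,
                             const std::vector<std::string> & outputs,
                             int f) {
    assert(f >= 0 && f < (int) inputs.size());
    if (f < (int) outputs.size() && !outputs[f].empty()) {
        return outputs[f];
    }
    return inputs[f];
}
```

With two inputs and a single `-of` value, the first file would use the given name and the second would fall back to its own path.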

3.2 Read the audio according to the parameters

std::vector<float> pcmf32; // mono: a single channel of 32-bit float samples (PCM = pulse-code modulation)

std::vector<std::vector<float>> pcmf32s; // stereo: two channels (left and right)

// read_wav is defined in common.cpp. A leading :: with no namespace name in front of a call refers to the global namespace.

if (!::read_wav(fname_inp, pcmf32, pcmf32s, params.diarize)) { // read_wav returns false on failure, so this block runs when the WAV file could not be read

fprintf(stderr, "error: failed to read WAV file '%s'\n", fname_inp.c_str());

continue;

}
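To make the roles of `pcmf32` and `pcmf32s` concrete, here is a rough sketch of the sample conversion step only. It assumes interleaved 16-bit PCM input, scaling to the range [-1, 1), and a stereo downmix by averaging the two channels. `pcm_buffers` and `convert_pcm16` are hypothetical names; the real `read_wav` in common.cpp also parses the WAV header and should be consulted for the exact behaviour.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical container mirroring the two buffers used in main.cpp.
struct pcm_buffers {
    std::vector<float>              mono;   // plays the role of pcmf32
    std::vector<std::vector<float>> stereo; // plays the role of pcmf32s
};

// Converts interleaved 16-bit PCM to float. The stereo buffer is filled only
// when the caller asks for it and the input really has two channels.
pcm_buffers convert_pcm16(const std::vector<int16_t> & pcm16, int channels, bool want_stereo) {
    assert(channels == 1 || channels == 2);
    pcm_buffers out;
    const size_t n = pcm16.size() / channels; // samples per channel
    out.mono.resize(n);
    for (size_t i = 0; i < n; ++i) {
        if (channels == 1) {
            out.mono[i] = float(pcm16[i]) / 32768.0f;
        } else {
            // average of left and right, then scale: (l + r) / 2 / 32768
            out.mono[i] = float(pcm16[2*i] + pcm16[2*i + 1]) / 65536.0f;
        }
    }
    if (want_stereo && channels == 2) {
        out.stereo.assign(2, std::vector<float>(n));
        for (size_t i = 0; i < n; ++i) {
            out.stereo[0][i] = float(pcm16[2*i])     / 32768.0f; // left
            out.stereo[1][i] = float(pcm16[2*i + 1]) / 32768.0f; // right
        }
    }
    return out;
}
```

The separate per-channel buffers matter only for `--diarize`, which compares the two channels to guess the speaker.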

3.3 Print information

// print system information

{

fprintf(stderr, "\n");

fprintf(stderr, "system_info: n_threads = %d / %d | %s\n",

params.n_threads*params.n_processors, std::thread::hardware_concurrency(), whisper_print_system_info());

}

// print some info about the processing

{

fprintf(stderr, "\n");

if (!whisper_is_multilingual(ctx)) {

if (params.language != "en" || params.translate) {

params.language = "en";

params.translate = false;

fprintf(stderr, "%s: WARNING: model is not multilingual, ignoring language and translation options\n", __func__);

}

}

if (params.detect_language) {

params.language = "auto";

}

fprintf(stderr, "%s: processing '%s' (%d samples, %.1f sec), %d threads, %d processors, %d beams + best of %d, lang = %s, task = %s, %stimestamps = %d ...\n",

__func__, fname_inp.c_str(), int(pcmf32.size()), float(pcmf32.size())/WHISPER_SAMPLE_RATE,

params.n_threads, params.n_processors, params.beam_size, params.best_of,

params.language.c_str(),

params.translate ? "translate" : "transcribe",

params.tinydiarize ? "tdrz = 1, " : "",

params.no_timestamps ? 0 : 1);

fprintf(stderr, "\n");

}
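Two small pieces of logic are buried in this printing code: the language options are overridden for English-only models, and the audio length is derived from the sample count. The sketch below restates both in isolation. `lang_options`, `normalize_lang_options` and `duration_sec` are hypothetical names; the value 16000 is `WHISPER_SAMPLE_RATE` from whisper.h.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Hypothetical, trimmed-down stand-in for the whisper_params fields used here.
struct lang_options {
    std::string language        = "en";
    bool        translate       = false;
    bool        detect_language = false;
};

// Applies the same two rules as the block above.
// Returns true when the options had to be overridden for an English-only model.
bool normalize_lang_options(lang_options & o, bool model_is_multilingual) {
    bool overridden = false;
    if (!model_is_multilingual && (o.language != "en" || o.translate)) {
        o.language  = "en";
        o.translate = false;
        overridden  = true;
    }
    if (o.detect_language) {
        o.language = "auto"; // detection always starts from "auto"
    }
    return overridden;
}

// Audio length in seconds for a given number of mono samples.
float duration_sec(size_t n_samples, int sample_rate = 16000) {
    return float(n_samples) / sample_rate;
}
```

For example, 176000 samples at 16 kHz correspond to 11.0 seconds of audio.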

3.4 Run the inference

3.4.1 Set up the parameters

whisper_full_params wparams = whisper_full_default_params(WHISPER_SAMPLING_GREEDY);

wparams.strategy = params.beam_size > 1 ? WHISPER_SAMPLING_BEAM_SEARCH : WHISPER_SAMPLING_GREEDY;

wparams.print_realtime = false;

wparams.print_progress = params.print_progress;

wparams.print_timestamps = !params.no_timestamps;

wparams.print_special = params.print_special;

wparams.translate = params.translate;

wparams.language = params.language.c_str();

wparams.detect_language = params.detect_language;

wparams.n_threads = params.n_threads;

wparams.n_max_text_ctx = params.max_context >= 0 ? params.max_context : wparams.n_max_text_ctx;

wparams.offset_ms = params.offset_t_ms;

wparams.duration_ms = params.duration_ms;

wparams.token_timestamps = params.output_wts || params.output_jsn_full || params.max_len > 0;

wparams.thold_pt = params.word_thold;

wparams.max_len = params.output_wts && params.max_len == 0 ? 60 : params.max_len;

wparams.split_on_word = params.split_on_word;

wparams.speed_up = params.speed_up;

wparams.debug_mode = params.debug_mode;

wparams.tdrz_enable = params.tinydiarize; // [TDRZ]

wparams.initial_prompt = params.prompt.c_str();

wparams.greedy.best_of = params.best_of;

wparams.beam_search.beam_size = params.beam_size;

wparams.temperature_inc = params.no_fallback ? 0.0f : wparams.temperature_inc;

wparams.entropy_thold = params.entropy_thold;

wparams.logprob_thold = params.logprob_thold;

whisper_print_user_data user_data = { &params, &pcmf32s, 0 };

// this callback is called on each new segment

if (!wparams.print_realtime) {

wparams.new_segment_callback = whisper_print_segment_callback;

wparams.new_segment_callback_user_data = &user_data;

}

if (wparams.print_progress) {

wparams.progress_callback = whisper_print_progress_callback;

wparams.progress_callback_user_data = &user_data;

}
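Most of the assignments above copy a CLI option straight into `wparams`, but four of them derive a value. The following sketch isolates those four rules so they can be checked by hand. `cli_opts`, `sampling`, `derived_opts` and `derive_opts` are hypothetical names, and the library default for the text context is passed in as an argument rather than assumed.

```cpp
#include <cassert>

// Hypothetical subset of the CLI options consulted by the derived fields.
struct cli_opts {
    int  beam_size       = 5;
    int  max_len         = 0;
    int  max_context     = -1;
    bool output_wts      = false;
    bool output_jsn_full = false;
};

enum class sampling { greedy, beam_search };

struct derived_opts {
    sampling strategy;
    bool     token_timestamps;
    int      max_len;
    int      n_max_text_ctx;
};

// Mirrors the conditional assignments in the listing above.
derived_opts derive_opts(const cli_opts & p, int default_text_ctx) {
    assert(default_text_ctx >= 0);
    derived_opts d;
    // beam search is used whenever more than one beam is requested
    d.strategy         = p.beam_size > 1 ? sampling::beam_search : sampling::greedy;
    // token-level timestamps are needed for karaoke output, full JSON, or length-limited segments
    d.token_timestamps = p.output_wts || p.output_jsn_full || p.max_len > 0;
    // karaoke output without an explicit limit falls back to 60 characters
    d.max_len          = (p.output_wts && p.max_len == 0) ? 60 : p.max_len;
    // a negative max_context means "keep the library default"
    d.n_max_text_ctx   = p.max_context >= 0 ? p.max_context : default_text_ctx;
    return d;
}
```

Note that with the default `-bs 5` shown in the option list, the first rule selects beam search even though `wparams` was initialised with the greedy defaults.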

whisper_print_segment_callback: the callback that fetches the inference result of each new segment and prints it

void whisper_print_segment_callback(struct whisper_context * ctx, struct whisper_state * /*state*/, int n_new, void * user_data) {

const auto & params = *((whisper_print_user_data *) user_data)->params;

const auto & pcmf32s = *((whisper_print_user_data *) user_data)->pcmf32s;

const int n_segments = whisper_full_n_segments(ctx);

std::string speaker = "";

int64_t t0 = 0;

int64_t t1 = 0;

// print the last n_new segments

const int s0 = n_segments - n_new;

if (s0 == 0) {

printf("\n");

}

for (int i = s0; i < n_segments; i++) {

if (!params.no_timestamps || params.diarize) {

t0 = whisper_full_get_segment_t0(ctx, i);

t1 = whisper_full_get_segment_t1(ctx, i);

}

if (!params.no_timestamps) {

printf("[%s --> %s] ", to_timestamp(t0).c_str(), to_timestamp(t1).c_str());

}

if (params.diarize && pcmf32s.size() == 2) {

speaker = estimate_diarization_speaker(pcmf32s, t0, t1);

}

if (params.print_colors) {

for (int j = 0; j < whisper_full_n_tokens(ctx, i); ++j) {

if (params.print_special == false) {

const whisper_token id = whisper_full_get_token_id(ctx, i, j);

if (id >= whisper_token_eot(ctx)) {

continue;

}

}

const char * text = whisper_full_get_token_text(ctx, i, j);

const float p = whisper_full_get_token_p (ctx, i, j);

const int col = std::max(0, std::min((int) k_colors.size() - 1, (int) (std::pow(p, 3)*float(k_colors.size()))));

printf("%s%s%s%s", speaker.c_str(), k_colors[col].c_str(), text, "\033[0m");

}

} else {

const char * text = whisper_full_get_segment_text(ctx, i);

printf("%s%s", speaker.c_str(), text);

}

if (params.tinydiarize) {

if (whisper_full_get_segment_speaker_turn_next(ctx, i)) {

printf("%s", params.tdrz_speaker_turn.c_str());

}

}

// with timestamps or speakers: each segment on new line

if (!params.no_timestamps || params.diarize) {

printf("\n");

}

fflush(stdout);

}

}
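The callback relies on `to_timestamp`, which is not shown in this walkthrough. Below is a sketch of what such a formatter could look like, assuming the segment times `t0` and `t1` are expressed in units of 10 ms. `format_timestamp` is a hypothetical name chosen to avoid suggesting that this is the exact helper from main.cpp.

```cpp
#include <cassert>
#include <cstdint>
#include <cstdio>
#include <string>

// Formats a time given in 10 ms units as hh:mm:ss.mmm
// (or hh:mm:ss,mmm when comma is true, as used by SRT files).
std::string format_timestamp(int64_t t, bool comma = false) {
    assert(t >= 0);
    int64_t msec = t * 10;
    const int64_t hr = msec / (1000 * 60 * 60);
    msec -= hr * (1000 * 60 * 60);
    const int64_t min = msec / (1000 * 60);
    msec -= min * (1000 * 60);
    const int64_t sec = msec / 1000;
    msec -= sec * 1000;

    char buf[32];
    std::snprintf(buf, sizeof(buf), "%02d:%02d:%02d%s%03d",
                  (int) hr, (int) min, (int) sec, comma ? "," : ".", (int) msec);
    return std::string(buf);
}
```

Under that assumption, a segment ending at `t1 = 1100` is printed as `00:00:11.000`.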

3.4.2 Set up the abort callbacks

// examples for abort mechanism

// in examples below, we do not abort the processing, but we could if the flag is set to true

// the callback is called before every encoder run - if it returns false, the processing is aborted

{

static bool is_aborted = false; // NOTE: this should be atomic to avoid data race

wparams.encoder_begin_callback = [](struct whisper_context * /*ctx*/, struct whisper_state * /*state*/, void * user_data) {

bool is_aborted = *(bool*)user_data;

return !is_aborted;

};

wparams.encoder_begin_callback_user_data = &is_aborted;

}

// the callback is called before every computation - if it returns true, the computation is aborted

{

static bool is_aborted = false; // NOTE: this should be atomic to avoid data race

wparams.abort_callback = [](void * user_data) {

bool is_aborted = *(bool*)user_data;

return is_aborted;

};

wparams.abort_callback_user_data = &is_aborted;

}
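Both notes in the code above say the flag "should be atomic". A minimal sketch of that variant follows, using `std::atomic<bool>` behind the same `void *` user-data pointer. The alias `abort_fn` and the two functions are hypothetical and simplified: the real encoder callback also receives the context and state pointers, which are left out here.

```cpp
#include <atomic>
#include <cassert>

// Assumed shape of the abort callback: a plain function pointer plus user data.
using abort_fn = bool (*)(void * user_data);

// Abort convention: returning true stops the computation.
bool abort_requested(void * user_data) {
    const auto * flag = static_cast<const std::atomic<bool> *>(user_data);
    return flag->load();
}

// Encoder-begin convention is inverted: returning false stops the processing.
bool encoder_may_begin(void * user_data) {
    return !abort_requested(user_data);
}
```

Another thread (for example a signal handler thread or a UI thread) could then call `flag.store(true)` on the shared flag without a data race, and the next callback invocation would observe it.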

3.4.3 Process the audio with whisper_full_parallel

if (whisper_full_parallel(ctx, wparams, pcmf32.data(), pcmf32.size(), params.n_processors) != 0) {

fprintf(stderr, "%s: failed to process audio\n", argv[0]);

return 10;

}

whisper_full_parallel

int whisper_full_parallel(

struct whisper_context * ctx,

struct whisper_full_params params,

const float * samples,

int n_samples,

int n_processors) {

if (n_processors == 1) {

return whisper_full(ctx, params, samples, n_samples);

}

// omitted

}

whisper_full

int whisper_full(

struct whisper_context * ctx,

struct whisper_full_params params,

const float * samples,

int n_samples) {

return whisper_full_with_state(ctx, ctx->state, params, samples, n_samples);

}

whisper_full_with_state (the core inference code, about 800 lines)

// Not examined in detail yet; to be revisited when time allows
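Before reading the full listing, it may help to look at two small calculations from it on their own: the list of fallback temperatures and the seek window measured in 10 ms frames. The sketch below restates them as free functions. The names `temperature_schedule`, `seek_window`, `compute_seek_window` and `too_short` are hypothetical, the `speed_up` special case is left out, and the numbers in the usage note are illustrative values rather than library defaults.

```cpp
#include <cassert>
#include <vector>

// Builds [ t0, t0 + inc, t0 + 2*inc, ... ] while the value stays below 1.0 + 1e-6,
// or just { t0 } when no fallback increment is configured.
std::vector<float> temperature_schedule(float t0, float inc) {
    std::vector<float> temps;
    if (inc > 0.0f) {
        for (float t = t0; t < 1.0f + 1e-6f; t += inc) {
            temps.push_back(t);
        }
    } else {
        temps.push_back(t0);
    }
    return temps;
}

// Seek positions are counted in frames of 10 ms.
struct seek_window {
    int start;
    int end;
};

// n_len is the total number of frames available for the audio.
seek_window compute_seek_window(int offset_ms, int duration_ms, int n_len) {
    assert(offset_ms >= 0 && duration_ms >= 0);
    const int start = offset_ms / 10;
    const int end   = duration_ms == 0 ? n_len : start + duration_ms / 10;
    return { start, end };
}

// Windows shorter than 1.0 s (100 frames) are skipped entirely.
bool too_short(const seek_window & w) {
    return w.end < w.start + 100;
}
```

For instance, a start temperature of 0.0 with an increment of 0.2 gives six temperatures to try, and passing `--no-fallback` (increment 0) leaves only the start temperature. An offset of 2000 ms with a duration of 500 ms gives the window [200, 250), which is below the 100-frame minimum and would not be processed.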

int whisper_full_with_state(

struct whisper_context * ctx,

struct whisper_state * state,

struct whisper_full_params params,

const float * samples,

int n_samples) {

// clear old results

auto & result_all = state->result_all;

result_all.clear();

if (n_samples > 0) {

// compute log mel spectrogram

if (params.speed_up) {

// TODO: Replace PV with more advanced algorithm

WHISPER_LOG_ERROR("%s: failed to compute log mel spectrogram\n", __func__);

return -1;

} else {

if (whisper_pcm_to_mel_with_state(ctx, state, samples, n_samples, params.n_threads) != 0) {

WHISPER_LOG_ERROR("%s: failed to compute log mel spectrogram\n", __func__);

return -2;

}

}

}

// auto-detect language if not specified

if (params.language == nullptr || strlen(params.language) == 0 || strcmp(params.language, "auto") == 0 || params.detect_language) {

std::vector<float> probs(whisper_lang_max_id() + 1, 0.0f);

const auto lang_id = whisper_lang_auto_detect_with_state(ctx, state, 0, params.n_threads, probs.data());

if (lang_id < 0) {

WHISPER_LOG_ERROR("%s: failed to auto-detect language\n", __func__);

return -3;

}

state->lang_id = lang_id;

params.language = whisper_lang_str(lang_id);

WHISPER_LOG_INFO("%s: auto-detected language: %s (p = %f)\n", __func__, params.language, probs[whisper_lang_id(params.language)]);

if (params.detect_language) {

return 0;

}

}

if (params.token_timestamps) {

state->t_beg = 0;

state->t_last = 0;

state->tid_last = 0;

if (n_samples > 0) {

state->energy = get_signal_energy(samples, n_samples, 32);

}

}

const int seek_start = params.offset_ms/10;

const int seek_end = params.duration_ms == 0 ? whisper_n_len_from_state(state) : seek_start + params.duration_ms/10;

// if length of spectrogram is less than 1.0s (100 frames), then return

// basically don't process anything that is less than 1.0s

// see issue #39: https://github.com/ggerganov/whisper.cpp/issues/39

if (seek_end < seek_start + (params.speed_up ? 50 : 100)) {

return 0;

}

// a set of temperatures to use

// [ t0, t0 + delta, t0 + 2*delta, ..., < 1.0f + 1e-6f ]

std::vector<float> temperatures;

if (params.temperature_inc > 0.0f) {

for (float t = params.temperature; t < 1.0f + 1e-6f; t += params.temperature_inc) {

temperatures.push_back(t);

}

} else {

temperatures.push_back(params.temperature);

}

// initialize the decoders

int n_decoders = 1;

switch (params.strategy) {

case WHISPER_SAMPLING_GREEDY:

{

n_decoders = params.greedy.best_of;

} break;

case WHISPER_SAMPLING_BEAM_SEARCH:

{

n_decoders = std::max(params.greedy.best_of, params.beam_search.beam_size);

} break;

};

n_decoders = std::max(1, n_decoders);

if (n_decoders > WHISPER_MAX_DECODERS) {

WHISPER_LOG_ERROR("%s: too many decoders requested (%d), max = %d\n", __func__, n_decoders, WHISPER_MAX_DECODERS);

return -4;

}

// TAGS: WHISPER_DECODER_INIT

for (int j = 1; j < n_decoders; j++) {

auto & decoder = state->decoders[j];

decoder.sequence.tokens.reserve(state->decoders[0].sequence.tokens.capacity());

decoder.probs.resize (ctx->vocab.n_vocab);

decoder.logits.resize (ctx->vocab.n_vocab);

decoder.logprobs.resize(ctx->vocab.n_vocab);

decoder.logits_id.reserve(ctx->model.hparams.n_vocab);

decoder.rng = std::mt19937(0);

}

// the accumulated text context so far

auto & prompt_past = state->prompt_past;

if (params.no_context) {

prompt_past.clear();

}

// prepare prompt

{

std::vector<whisper_token> prompt_tokens;

// initial prompt

if (!params.prompt_tokens && params.initial_prompt) {

prompt_tokens.resize(1024);

prompt_tokens.resize(whisper_tokenize(ctx, params.initial_prompt, prompt_tokens.data(), prompt_tokens.size()));

params.prompt_tokens = prompt_tokens.data();

params.prompt_n_tokens = prompt_tokens.size();

}

// prepend the prompt tokens to the prompt_past

if (params.prompt_tokens && params.prompt_n_tokens > 0) {

// parse tokens from the pointer

for (int i = 0; i < params.prompt_n_tokens; i++) {

prompt_past.push_back(params.prompt_tokens[i]);

}

std::rotate(prompt_past.begin(), prompt_past.end() - params.prompt_n_tokens, prompt_past.end());

}

}

// overwrite audio_ctx, max allowed is hparams.n_audio_ctx

if (params.audio_ctx > whisper_n_audio_ctx(ctx)) {

WHISPER_LOG_ERROR("%s: audio_ctx is larger than the maximum allowed (%d > %d)\n", __func__, params.audio_ctx, whisper_n_audio_ctx(ctx));

return -5;

}

state->exp_n_audio_ctx = params.audio_ctx;

// these tokens determine the task that will be performed

std::vector<whisper_token> prompt_init = { whisper_token_sot(ctx), };

if (whisper_is_multilingual(ctx)) {

const int lang_id = whisper_lang_id(params.language);

state->lang_id = lang_id;

prompt_init.push_back(whisper_token_lang(ctx, lang_id));

if (params.translate) {

prompt_init.push_back(whisper_token_translate(ctx));

} else {

prompt_init.push_back(whisper_token_transcribe(ctx));

}

}

// distilled models require the "no_timestamps" token

{

const bool is_distil = ctx->model.hparams.n_text_layer == 2;

if (is_distil && !params.no_timestamps) {

WHISPER_LOG_WARN("%s: using distilled model - forcing no_timestamps\n", __func__);

params.no_timestamps = true;

}

}

if (params.no_timestamps) {

prompt_init.push_back(whisper_token_not(ctx));

}

int seek = seek_start;

std::vector<whisper_token> prompt;

prompt.reserve(whisper_n_text_ctx(ctx));

struct beam_candidate {

int decoder_idx;

int seek_delta;

bool has_ts;

whisper_sequence sequence;

whisper_grammar grammar;

};

std::vector<std::vector<beam_candidate>> bc_per_dec(n_decoders);

std::vector<beam_candidate> beam_candidates;

// main loop

while (true) {

if (params.progress_callback) {

const int progress_cur = (100*(seek - seek_start))/(seek_end - seek_start);

params.progress_callback(

ctx, ctx->state, progress_cur, params.progress_callback_user_data);

}

// if only 1 second is left, then stop

if (seek + 100 >= seek_end) {

break;

}

if (params.encoder_begin_callback) {

if (params.encoder_begin_callback(ctx, state, params.encoder_begin_callback_user_data) == false) {

WHISPER_LOG_ERROR("%s: encoder_begin_callback returned false - aborting\n", __func__);

break;

}

}

// encode audio features starting at offset seek

if (!whisper_encode_internal(*ctx, *state, seek, params.n_threads, params.abort_callback, params.abort_callback_user_data)) {

WHISPER_LOG_ERROR("%s: failed to encode\n", __func__);

return -6;

}

// if there is a very short audio segment left to process, we remove any past prompt since it tends

// to confuse the decoder and often make it repeat or hallucinate stuff

if (seek > seek_start && seek + 500 >= seek_end) {

prompt_past.clear();

}

int best_decoder_id = 0;

for (int it = 0; it < (int) temperatures.size(); ++it) {

const float t_cur = temperatures[it];

int n_decoders_cur = 1;

switch (params.strategy) {

case whisper_sampling_strategy::WHISPER_SAMPLING_GREEDY:

{

if (t_cur > 0.0f) {

n_decoders_cur = params.greedy.best_of;

}

} break;

case whisper_sampling_strategy::WHISPER_SAMPLING_BEAM_SEARCH:

{

if (t_cur > 0.0f) {

n_decoders_cur = params.greedy.best_of;

} else {

n_decoders_cur = params.beam_search.beam_size;

}

} break;

};

n_decoders_cur = std::max(1, n_decoders_cur);

WHISPER_PRINT_DEBUG("\n%s: strategy = %d, decoding with %d decoders, temperature = %.2f\n", __func__, params.strategy, n_decoders_cur, t_cur);

// TAGS: WHISPER_DECODER_INIT

for (int j = 0; j < n_decoders_cur; ++j) {

auto & decoder = state->decoders[j];

decoder.sequence.tokens.clear();

decoder.sequence.result_len = 0;

decoder.sequence.sum_logprobs_all = 0.0;

decoder.sequence.sum_logprobs = -INFINITY;

decoder.sequence.avg_logprobs = -INFINITY;

decoder.sequence.entropy = 0.0;

decoder.sequence.score = -INFINITY;

decoder.seek_delta = 100*WHISPER_CHUNK_SIZE;

decoder.failed = false;

decoder.completed = false;

decoder.has_ts = false;

if (params.grammar_rules != nullptr) {

decoder.grammar = whisper_grammar_init(params.grammar_rules, params.n_grammar_rules, params.i_start_rule);

} else {

decoder.grammar = {};

}

}

// init prompt and kv cache for the current iteration

// TODO: do not recompute the prompt if it is the same as previous time

{

prompt.clear();

// if we have already generated some text, use it as a prompt to condition the next generation

if (!prompt_past.empty() && t_cur < 0.5f && params.n_max_text_ctx > 0) {

int n_take = std::min(std::min(params.n_max_text_ctx, whisper_n_text_ctx(ctx)/2), int(prompt_past.size()));

prompt = { whisper_token_prev(ctx) };

prompt.insert(prompt.begin() + 1, prompt_past.end() - n_take, prompt_past.end());

}

// init new transcription with sot, language (opt) and task tokens

prompt.insert(prompt.end(), prompt_init.begin(), prompt_init.end());

// print the prompt

WHISPER_PRINT_DEBUG("\n\n");

for (int i = 0; i < (int) prompt.size(); i++) {

WHISPER_PRINT_DEBUG("%s: prompt[%d] = %s\n", __func__, i, ctx->vocab.id_to_token.at(prompt[i]).c_str());

}

WHISPER_PRINT_DEBUG("\n\n");

whisper_kv_cache_clear(state->kv_self);

whisper_batch_prep_legacy(state->batch, prompt.data(), prompt.size(), 0, 0);

if (!whisper_decode_internal(*ctx, *state, state->batch, params.n_threads, params.abort_callback, params.abort_callback_user_data)) {

WHISPER_LOG_ERROR("%s: failed to decode\n", __func__);

return -7;

}

{

const int64_t t_start_sample_us = ggml_time_us();

state->decoders[0].i_batch = prompt.size() - 1;

whisper_process_logits(*ctx, *state, state->decoders[0], params, t_cur);

for (int j = 1; j < n_decoders_cur; ++j) {

auto & decoder = state->decoders[j];

whisper_kv_cache_seq_cp(state->kv_self, 0, j, -1, -1);

memcpy(decoder.probs.data(), state->decoders[0].probs.data(), decoder.probs.size()*sizeof(decoder.probs[0]));

memcpy(decoder.logits.data(), state->decoders[0].logits.data(), decoder.logits.size()*sizeof(decoder.logits[0]));

memcpy(decoder.logprobs.data(), state->decoders[0].logprobs.data(), decoder.logprobs.size()*sizeof(decoder.logprobs[0]));

}

state->t_sample_us += ggml_time_us() - t_start_sample_us;

}

}

for (int i = 0, n_max = whisper_n_text_ctx(ctx)/2 - 4; i < n_max; ++i) {

const int64_t t_start_sample_us = ggml_time_us();

if (params.strategy == whisper_sampling_strategy::WHISPER_SAMPLING_BEAM_SEARCH) {

for (auto & bc : bc_per_dec) {

bc.clear();

}

}

// sampling

// TODO: avoid memory allocations, optimize, avoid threads?

{

std::atomic<int> j_cur(0);

auto process = [&]() {

while (true) {

const int j = j_cur.fetch_add(1);

if (j >= n_decoders_cur) {

break;

}

auto & decoder = state->decoders[j];

if (decoder.completed || decoder.failed) {

continue;

}

switch (params.strategy) {

case whisper_sampling_strategy::WHISPER_SAMPLING_GREEDY:

{

if (t_cur < 1e-6f) {

decoder.sequence.tokens.push_back(whisper_sample_token(*ctx, decoder, true));

} else {

decoder.sequence.tokens.push_back(whisper_sample_token(*ctx, decoder, false));

}

decoder.sequence.sum_logprobs_all += decoder.sequence.tokens.back().plog;

} break;

case whisper_sampling_strategy::WHISPER_SAMPLING_BEAM_SEARCH:

{

const auto tokens_new = whisper_sample_token_topk(*ctx, decoder, params.beam_search.beam_size);

for (const auto & token : tokens_new) {

bc_per_dec[j].push_back({ j, decoder.seek_delta, decoder.has_ts, decoder.sequence, decoder.grammar, });

bc_per_dec[j].back().sequence.tokens.push_back(token);

bc_per_dec[j].back().sequence.sum_logprobs_all += token.plog;

}

} break;

};

}

};

const int n_threads = std::min(params.n_threads, n_decoders_cur);

if (n_threads == 1) {

process();

} else {

std::vector<std::thread> threads(n_threads - 1);

for (int t = 0; t < n_threads - 1; ++t) {

threads[t] = std::thread(process);

}

process();

for (int t = 0; t < n_threads - 1; ++t) {

threads[t].join();

}

}

}

beam_candidates.clear();

for (const auto & bc : bc_per_dec) {

beam_candidates.insert(beam_candidates.end(), bc.begin(), bc.end());

if (!bc.empty()) {

state->n_sample += 1;

}

}

// for beam-search, choose the top candidates and update the KV caches

if (params.strategy == whisper_sampling_strategy::WHISPER_SAMPLING_BEAM_SEARCH) {

std::sort(

beam_candidates.begin(),

beam_candidates.end(),

[](const beam_candidate & a, const beam_candidate & b) {

return a.sequence.sum_logprobs_all > b.sequence.sum_logprobs_all;

});

uint32_t cur_c = 0;

for (int j = 0; j < n_decoders_cur; ++j) {

auto & decoder = state->decoders[j];

if (decoder.completed || decoder.failed) {

continue;

}

if (cur_c >= beam_candidates.size()) {

cur_c = 0;

}

auto & cur = beam_candidates[cur_c++];

while (beam_candidates.size() > cur_c && beam_candidates[cur_c].sequence.sum_logprobs_all == cur.sequence.sum_logprobs_all && i > 0) {

++cur_c;

}

decoder.seek_delta = cur.seek_delta;

decoder.has_ts = cur.has_ts;

decoder.sequence = cur.sequence;

decoder.grammar = cur.grammar;

whisper_kv_cache_seq_cp(state->kv_self, cur.decoder_idx, WHISPER_MAX_DECODERS + j, -1, -1);

WHISPER_PRINT_DEBUG("%s: beam search: decoder %d: from decoder %d: token = %10s, plog = %8.5f, sum_logprobs = %8.5f\n",

__func__, j, cur.decoder_idx, ctx->vocab.id_to_token.at(decoder.sequence.tokens.back().id).c_str(), decoder.sequence.tokens.back().plog, decoder.sequence.sum_logprobs_all);

}

for (int j = 0; j < n_decoders_cur; ++j) {

auto & decoder = state->decoders[j];

if (decoder.completed || decoder.failed) {

continue;

}

whisper_kv_cache_seq_rm(state->kv_self, j, -1, -1);

whisper_kv_cache_seq_cp(state->kv_self, WHISPER_MAX_DECODERS + j, j, -1, -1);

whisper_kv_cache_seq_rm(state->kv_self, WHISPER_MAX_DECODERS + j, -1, -1);

}

}

// update the decoder state

// - check if the sequence is completed

// - check if the sequence is failed

// - update sliding window based on timestamp tokens

for (int j = 0; j < n_decoders_cur; ++j) {

auto & decoder = state->decoders[j];

if (decoder.completed || decoder.failed) {

continue;

}

auto & has_ts = decoder.has_ts;

auto & failed = decoder.failed;

auto & completed = decoder.completed;

auto & seek_delta = decoder.seek_delta;

auto & result_len = decoder.sequence.result_len;

{

const auto & token = decoder.sequence.tokens.back();

// timestamp token - update sliding window

if (token.id > whisper_token_beg(ctx)) {

const int seek_delta_new = 2*(token.id - whisper_token_beg(ctx));

// do not allow to go back in time

if (has_ts && seek_delta > seek_delta_new && result_len < i) {

failed = true; // TODO: maybe this is not a failure ?

continue;

}

seek_delta = seek_delta_new;

result_len = i + 1;

has_ts = true;

}

whisper_grammar_accept_token(*ctx, decoder.grammar, token.id);

#ifdef WHISPER_DEBUG

{

const auto tt = token.pt > 0.10 ? ctx->vocab.id_to_token.at(token.tid) : "[?]";

WHISPER_PRINT_DEBUG("%s: id = %3d, decoder = %d, token = %6d, p = %6.3f, ts = %10s, %6.3f, result_len = %4d '%s'\n",

__func__, i, j, token.id, token.p, tt.c_str(), token.pt, result_len, ctx->vocab.id_to_token.at(token.id).c_str());

}

#endif

// end of segment

if (token.id == whisper_token_eot(ctx) || // end of text token

(params.max_tokens > 0 && i >= params.max_tokens) || // max tokens per segment reached

(has_ts && seek + seek_delta + 100 >= seek_end) // end of audio reached

) {

if (result_len == 0) {

if (seek + seek_delta + 100 >= seek_end) {

result_len = i + 1;

} else {

failed = true;

continue;

}

}

if (params.single_segment) {

result_len = i + 1;

seek_delta = 100*WHISPER_CHUNK_SIZE;

}

completed = true;

continue;

}

// TESTS: if no tensors are loaded, it means we are running tests

if (ctx->model.n_loaded == 0) {

seek_delta = 100*WHISPER_CHUNK_SIZE;

completed = true;

continue;

}

}

// sometimes, the decoding can get stuck in a repetition loop

// this is an attempt to mitigate such cases - we flag the decoding as failed and use a fallback strategy

if (i == n_max - 1 && (result_len == 0 || seek_delta < 100*WHISPER_CHUNK_SIZE/2)) {

failed = true;

continue;

}

}

// check if all decoders have finished (i.e. completed or failed)

{

bool completed_all = true;

for (int j = 0; j < n_decoders_cur; ++j) {

auto & decoder = state->decoders[j];

if (decoder.completed || decoder.failed) {

continue;

}

completed_all = false;

}

if (completed_all) {

break;

}

}

state->t_sample_us += ggml_time_us() - t_start_sample_us;

// obtain logits for the next token

{

auto & batch = state->batch;

batch.n_tokens = 0;

const int n_past = prompt.size() + i;

for (int j = 0; j < n_decoders_cur; ++j) {

auto & decoder = state->decoders[j];

if (decoder.failed || decoder.completed) {

continue;

}

//WHISPER_PRINT_DEBUG("%s: decoder %d: token %d, seek_delta %d\n", __func__, j, decoder.sequence.tokens.back().id, decoder.seek_delta);

decoder.i_batch = batch.n_tokens;

batch.token [batch.n_tokens] = decoder.sequence.tokens.back().id;

batch.pos [batch.n_tokens] = n_past;

batch.n_seq_id[batch.n_tokens] = 1;

batch.seq_id [batch.n_tokens][0] = j;

batch.logits [batch.n_tokens] = 1;

batch.n_tokens++;

}

assert(batch.n_tokens > 0);

if (!whisper_decode_internal(*ctx, *state, state->batch, params.n_threads, params.abort_callback, params.abort_callback_user_data)) {

WHISPER_LOG_ERROR("%s: failed to decode\n", __func__);

return -8;

}

const int64_t t_start_sample_us = ggml_time_us();

// TODO: avoid memory allocations, optimize, avoid threads?

{

std::atomic<int> j_cur(0);

auto process = [&]() {

while (true) {

const int j = j_cur.fetch_add(1);

if (j >= n_decoders_cur) {

break;

}

auto & decoder = state->decoders[j];

if (decoder.failed || decoder.completed) {

continue;

}

whisper_process_logits(*ctx, *state, decoder, params, t_cur);

}

};

const int n_threads = std::min(params.n_threads, n_decoders_cur);

if (n_threads == 1) {

process();

} else {

std::vector<std::thread> threads(n_threads - 1);

for (int t = 0; t < n_threads - 1; ++t) {

threads[t] = std::thread(process);

}

process();

for (int t = 0; t < n_threads - 1; ++t) {

threads[t].join();

}

}

}

state->t_sample_us += ggml_time_us() - t_start_sample_us;

}

}

// rank the resulting sequences and select the best one

{

double best_score = -INFINITY;

for (int j = 0; j < n_decoders_cur; ++j) {

auto & decoder = state->decoders[j];

if (decoder.failed) {

continue;

}

decoder.sequence.tokens.resize(decoder.sequence.result_len);

whisper_sequence_score(params, decoder.sequence);

WHISPER_PRINT_DEBUG("%s: decoder %2d: score = %8.5f, result_len = %3d, avg_logprobs = %8.5f, entropy = %8.5f\n",

__func__, j, decoder.sequence.score, decoder.sequence.result_len, decoder.sequence.avg_logprobs, decoder.sequence.entropy);

if (decoder.sequence.result_len > 32 && decoder.sequence.entropy < params.entropy_thold) {

WHISPER_PRINT_DEBUG("%s: decoder %2d: failed due to entropy %8.5f < %8.5f\n",

__func__, j, decoder.sequence.entropy, params.entropy_thold);

decoder.failed = true;

state->n_fail_h++;

continue;

}

if (best_score < decoder.sequence.score) {

best_score = decoder.sequence.score;

best_decoder_id = j;

}

}

WHISPER_PRINT_DEBUG("%s: best decoder = %d\n", __func__, best_decoder_id);

}

// was the decoding successful for the current temperature?

// do fallback only if:

// - we are not at the last temperature

// - we are not at the end of the audio (3 sec)

if (it != (int) temperatures.size() - 1 &&

seek_end - seek > 10*WHISPER_CHUNK_SIZE) {

bool success = true;

const auto & decoder = state->decoders[best_decoder_id];

if (decoder.failed || decoder.sequence.avg_logprobs < params.logprob_thold) {

success = false;

state->n_fail_p++;

}

if (success) {

//for (auto & token : ctx->decoders[best_decoder_id].sequence.tokens) {

// WHISPER_PRINT_DEBUG("%s: token = %d, p = %6.3f, pt = %6.3f, ts = %s, str = %s\n", __func__, token.id, token.p, token.pt, ctx->vocab.id_to_token.at(token.tid).c_str(), ctx->vocab.id_to_token.at(token.id).c_str());

//}

break;

}

}

WHISPER_PRINT_DEBUG("\n%s: failed to decode with temperature = %.2f\n", __func__, t_cur);

}

// output results through a user-provided callback

{

const auto & best_decoder = state->decoders[best_decoder_id];

const auto seek_delta = best_decoder.seek_delta;

const auto result_len = best_decoder.sequence.result_len;

const auto & tokens_cur = best_decoder.sequence.tokens;

//WHISPER_PRINT_DEBUG("prompt_init.size() = %d, prompt.size() = %d, result_len = %d, seek_delta = %d\n", prompt_init.size(), prompt.size(), result_len, seek_delta);

// update prompt_past

prompt_past.clear();

if (prompt.front() == whisper_token_prev(ctx)) {

prompt_past.insert(prompt_past.end(), prompt.begin() + 1, prompt.end() - prompt_init.size());

}

for (int i = 0; i < result_len; ++i) {

prompt_past.push_back(tokens_cur[i].id);

}

if (!tokens_cur.empty() && ctx->model.n_loaded > 0) {

int i0 = 0;

auto t0 = seek + 2*(tokens_cur.front().tid - whisper_token_beg(ctx));

std::string text;

bool speaker_turn_next = false;

for (int i = 0; i < (int) tokens_cur.size(); i++) {

//printf("%s: %18s %6.3f %18s %6.3f\n", __func__,

// ctx->vocab.id_to_token[tokens_cur[i].id].c_str(), tokens_cur[i].p,

// ctx->vocab.id_to_token[tokens_cur[i].tid].c_str(), tokens_cur[i].pt);

if (params.print_special || tokens_cur[i].id < whisper_token_eot(ctx)) {

text += whisper_token_to_str(ctx, tokens_cur[i].id);

}

// [TDRZ] record if speaker turn was predicted after current segment

if (params.tdrz_enable && tokens_cur[i].id == whisper_token_solm(ctx)) {

speaker_turn_next = true;

}

if (tokens_cur[i].id > whisper_token_beg(ctx) && !params.single_segment) {

const auto t1 = seek + 2*(tokens_cur[i].tid - whisper_token_beg(ctx));

if (!text.empty()) {

const auto tt0 = params.speed_up ? 2*t0 : t0;

const auto tt1 = params.speed_up ? 2*t1 : t1;

if (params.print_realtime) {

if (params.print_timestamps) {

printf("[%s --> %s] %s\n", to_timestamp(tt0).c_str(), to_timestamp(tt1).c_str(), text.c_str());

} else {

printf("%s", text.c_str());

fflush(stdout);

}

}

//printf("tt0 = %d, tt1 = %d, text = %s, token = %s, token_id = %d, tid = %d\n", tt0, tt1, text.c_str(), ctx->vocab.id_to_token[tokens_cur[i].id].c_str(), tokens_cur[i].id, tokens_cur[i].tid);

result_all.push_back({ tt0, tt1, text, {}, speaker_turn_next });

for (int j = i0; j <= i; j++) {

result_all.back().tokens.push_back(tokens_cur[j]);

}

int n_new = 1;

if (params.token_timestamps) {

whisper_exp_compute_token_level_timestamps(

*ctx, *state, result_all.size() - 1, params.thold_pt, params.thold_ptsum);

if (params.max_len > 0) {

n_new = whisper_wrap_segment(*ctx, *state, params.max_len, params.split_on_word);

}

}

if (params.new_segment_callback) {

params.new_segment_callback(ctx, state, n_new, params.new_segment_callback_user_data);

}

}

text = "";

while (i < (int) tokens_cur.size() && tokens_cur[i].id > whisper_token_beg(ctx)) {

i++;

}

i--;

t0 = t1;

i0 = i + 1;

speaker_turn_next = false;

}

}

if (!text.empty()) {

const auto t1 = seek + seek_delta;

const auto tt0 = params.speed_up ? 2*t0 : t0;

const auto tt1 = params.speed_up ? 2*t1 : t1;

if (params.print_realtime) {

if (params.print_timestamps) {

printf("[%s --> %s] %s\n", to_timestamp(tt0).c_str(), to_timestamp(tt1).c_str(), text.c_str());

} else {

printf("%s", text.c_str());

fflush(stdout);

}

}

result_all.push_back({ tt0, tt1, text, {} , speaker_turn_next });

for (int j = i0; j < (int) tokens_cur.size(); j++) {

result_all.back().tokens.push_back(tokens_cur[j]);

}

int n_new = 1;

if (params.token_timestamps) {

whisper_exp_compute_token_level_timestamps(

*ctx, *state, result_all.size() - 1, params.thold_pt, params.thold_ptsum);

if (params.max_len > 0) {

n_new = whisper_wrap_segment(*ctx, *state, params.max_len, params.split_on_word);

}

}

if (params.new_segment_callback) {

params.new_segment_callback(ctx, state, n_new, params.new_segment_callback_user_data);

}

}

}

// update audio window

seek += seek_delta;

WHISPER_PRINT_DEBUG("seek = %d, seek_delta = %d\n", seek, seek_delta);

}

}

return 0;

}
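Two steps in the listing above are easy to miss: the decision to retry at the next temperature, and the conversion of timestamp tokens into segment times. The following is a minimal sketch of both, not the library's implementation. It assumes that `seek` and the segment times are counted in 10 ms units and that one timestamp-token step is 20 ms, which is what the factor 2 in `seek + 2*(tid - whisper_token_beg(ctx))` suggests. `SequenceStats`, `needs_fallback`, `token_time` and `format_timestamp` are hypothetical names used only for illustration. The real code also skips the fallback at the last temperature and near the end of the audio.

```cpp
#include <cassert>
#include <cstdint>
#include <cstdio>
#include <string>

// Hypothetical, simplified stand-in for the per-decoder statistics in the listing.
struct SequenceStats {
    int   result_len;    // number of decoded tokens
    float entropy;       // entropy of the decoded sequence
    float avg_logprobs;  // average log probability of the tokens
};

// Mirrors the two threshold checks: a long sequence with low entropy is treated
// as a failure (typically repetition), and a low average log probability also
// asks for a retry at the next temperature.
bool needs_fallback(const SequenceStats & s, float entropy_thold, float logprob_thold) {
    const bool failed_entropy = s.result_len > 32 && s.entropy < entropy_thold;
    const bool failed_logprob = s.avg_logprobs < logprob_thold;
    return failed_entropy || failed_logprob;
}

// Converts a timestamp token id into a time in 10 ms units (assumption: one
// token step is 20 ms, hence the factor 2). token_beg is the id of the first
// timestamp token and is passed in, because it depends on the model vocabulary.
int64_t token_time(int64_t seek, int tid, int token_beg) {
    return seek + 2 * static_cast<int64_t>(tid - token_beg);
}

// Formats a time given in 10 ms units as hh:mm:ss.mmm.
std::string format_timestamp(int64_t t) {
    int64_t msec = t * 10;
    const int64_t hr = msec / (1000 * 60 * 60);
    msec -= hr * (1000 * 60 * 60);
    const int64_t min = msec / (1000 * 60);
    msec -= min * (1000 * 60);
    const int64_t sec = msec / 1000;
    msec -= sec * 1000;

    char buf[32];
    std::snprintf(buf, sizeof(buf), "%02d:%02d:%02d.%03d",
                  static_cast<int>(hr), static_cast<int>(min),
                  static_cast<int>(sec), static_cast<int>(msec));
    return std::string(buf);
}
```

With the default thresholds from the help output (`--entropy-thold 2.40`, `--logprob-thold -1.00`), a 40-token sequence with entropy 2.0 would be retried. For a hypothetical `token_beg` of 1000, `format_timestamp(token_time(3000, 1050, 1000))` gives `00:00:31.000`.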

3.5 output stuff

// output stuff

{

printf("\n");

// output to text file

if (params.output_txt) {

const auto fname_txt = fname_out + ".txt";

output_txt(ctx, fname_txt.c_str(), params, pcmf32s);

}

// output to VTT file

if (params.output_vtt) {

const auto fname_vtt = fname_out + ".vtt";

output_vtt(ctx, fname_vtt.c_str(), params, pcmf32s);

}

// output to SRT file

if (params.output_srt) {

const auto fname_srt = fname_out + ".srt";

output_srt(ctx, fname_srt.c_str(), params, pcmf32s);

}

// output to WTS file

if (params.output_wts) {

const auto fname_wts = fname_out + ".wts";

output_wts(ctx, fname_wts.c_str(), fname_inp.c_str(), params, float(pcmf32.size() + 1000)/WHISPER_SAMPLE_RATE, pcmf32s);

}

// output to CSV file

if (params.output_csv) {

const auto fname_csv = fname_out + ".csv";

output_csv(ctx, fname_csv.c_str(), params, pcmf32s);

}

// output to JSON file

if (params.output_jsn) {

const auto fname_jsn = fname_out + ".json";

output_json(ctx, fname_jsn.c_str(), params, pcmf32s, params.output_jsn_full);

}

// output to LRC file

if (params.output_lrc) {

const auto fname_lrc = fname_out + ".lrc";

output_lrc(ctx, fname_lrc.c_str(), params, pcmf32s);

}

// output to score file

if (params.log_score) {

const auto fname_score = fname_out + ".score.txt";

output_score(ctx, fname_score.c_str(), params, pcmf32s);

}

}
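Every branch in the block above follows the same pattern: check a flag, append an extension to `fname_out`, and call the matching writer. A table-driven sketch of the naming part could look as follows. `OutputFlags` and `output_file_names` are hypothetical names; only the flag names and the extensions come from the listing, and the writer calls are left out.

```cpp
#include <cassert>
#include <string>
#include <utility>
#include <vector>

// Hypothetical subset of the parameters, using the flag names from the listing.
struct OutputFlags {
    bool output_txt = false;
    bool output_vtt = false;
    bool output_srt = false;
    bool output_wts = false;
    bool output_csv = false;
    bool output_jsn = false;
    bool output_lrc = false;
    bool log_score  = false;
};

// Returns the file names that would be written, in the same order as the
// if-blocks in the listing.
std::vector<std::string> output_file_names(const std::string & fname_out, const OutputFlags & f) {
    const std::vector<std::pair<bool, const char *>> table = {
        { f.output_txt, ".txt"       },
        { f.output_vtt, ".vtt"       },
        { f.output_srt, ".srt"       },
        { f.output_wts, ".wts"       },
        { f.output_csv, ".csv"       },
        { f.output_jsn, ".json"      },
        { f.output_lrc, ".lrc"       },
        { f.log_score,  ".score.txt" },
    };

    std::vector<std::string> names;
    for (const auto & entry : table) {
        if (entry.first) {
            names.push_back(fname_out + entry.second);
        }
    }
    return names;
}
```

For example, with `output_txt` and `output_jsn` set and `fname_out` equal to `out`, the function returns `out.txt` and `out.json`.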

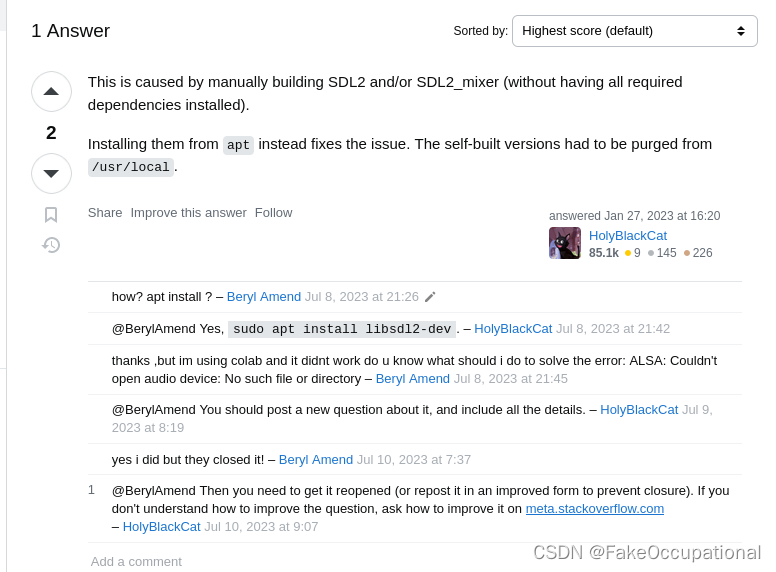

stream

Dependencies of stream

if (WHISPER_SDL2) # requires set(WHISPER_SDL2 ON); the default is option(WHISPER_SDL2 "whisper: support for libSDL2" OFF)

# stream

set(TARGET stream)

add_executable(${TARGET} stream.cpp)

include(DefaultTargetOptions)

target_link_libraries(${TARGET} PRIVATE common common-sdl whisper ${CMAKE_THREAD_LIBS_INIT})

endif ()

// Real-time speech recognition of input from a microphone

//

// A very quick-n-dirty implementation serving mainly as a proof of concept.

//

#include "common-sdl.h" // https://github1s.com/ggerganov/whisper.cpp/blob/d6b9be21d76b91a96bb987063b25e5b532140253/examples/common-sdl.h

#include "common.h"

#include "whisper.h"

#include <cassert>

#include <cstdio>

#include <string>

#include <thread>

#include <vector>

#include <fstream>

[ 66%] Linking CXX static library libcommon.a

gmake[2]: Leaving directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

[ 66%] Built target common

gmake[2]: Entering directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

gmake[2]: Entering directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

gmake[2]: Entering directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

Scanning dependencies of target stream

gmake[2]: Leaving directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

gmake[2]: Leaving directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

gmake[2]: Entering directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

gmake[2]: Entering directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

gmake[2]: Leaving directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

[ 72%] Building CXX object examples/main/CMakeFiles/main.dir/main.cpp.o

gmake[2]: Entering directory '/home/pdd/le/whisper.cpp-1.5.0/cmake-build-debug'

[ 77%] Building CXX object examples/quantize/CMakeFiles/quantize.dir/quantize.cpp.o

[ 83%] Building CXX object examples/stream/CMakeFiles/stream.dir/stream.cpp.o

In file included from /home/pdd/le/whisper.cpp-1.5.0/examples/stream/stream.cpp:5:

/home/pdd/le/whisper.cpp-1.5.0/examples/common-sdl.h:3:10: fatal error: SDL.h: No such file or directory

3 | #include <SDL.h>

| ^~~~~~~

compilation terminated.

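Installing SDL

Installing through apt failed. The cause is related to the system version, and untangling the dependencies is a hassle (the log below fetches packages from a `xenial` repository on a system whose libc-bin is 2.35, which may be the source of the conflicts).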

(base) pdd@pdd-Dell-G15-5511:~/le$ sudo apt install libsdl2-dev

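[sudo] password for pdd:

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

Some packages could not be installed. This may mean that you have

requested an impossible situation or if you are using the unstable

distribution that some required packages have not yet been created or been moved out of Incoming.

The following information may help to resolve the situation:

The following packages have unmet dependencies:

udev : Breaks: systemd (< 249.11-0ubuntu3.11)

Breaks: systemd:i386 (< 249.11-0ubuntu3.11)

Recommends: systemd-hwe-hwdb but it is not going to be installed

E: Error, pkgProblemResolver::Resolve generated breaks, this may be caused by held packages.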

(base) pdd@pdd-Dell-G15-5511:~/le$ sudo apt-get install libsdl2-dev

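Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

Some packages could not be installed. This may mean that you have

requested an impossible situation or if you are using the unstable

distribution that some required packages have not yet been created or been moved out of Incoming.

The following information may help to resolve the situation:

The following packages have unmet dependencies:

udev : Breaks: systemd (< 249.11-0ubuntu3.11)

Breaks: systemd:i386 (< 249.11-0ubuntu3.11)

Recommends: systemd-hwe-hwdb but it is not going to be installed

E: Error, pkgProblemResolver::Resolve generated breaks, this may be caused by held packages.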

(base) pdd@pdd-Dell-G15-5511:~/le$ sudo apt-get install libsdl2-2.0-0

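Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following packages were automatically installed and are no longer required: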

fcitx-config-common fcitx-config-gtk fcitx-frontend-all fcitx-frontend-gtk2 fcitx-frontend-gtk3 fcitx-frontend-qt5 fcitx-module-dbus

fcitx-module-kimpanel fcitx-module-lua fcitx-module-quickphrase-editor5 fcitx-module-x11 fcitx-modules fcitx-ui-classic g++-11 gir1.2-appindicator3-0.1

gir1.2-gst-plugins-base-1.0 gir1.2-gstreamer-1.0 gir1.2-keybinder-3.0 gir1.2-wnck-3.0 gnome-session-canberra libfcitx-config4 libfcitx-core0

libfcitx-gclient1 libfcitx-qt5-1 libfcitx-qt5-data libfcitx-utils0 libgettextpo0 libkeybinder-3.0-0 libpresage-data libpresage1v5 libtinyxml2.6.2v5

libwnck-3-0 libwnck-3-common presage python3-gi-cairo

Use 'sudo apt autoremove' to remove them.

The following additional packages will be installed:

libsndio6.1

Suggested packages:

sndiod

The following NEW packages will be installed:

libsdl2-2.0-0 libsndio6.1

0 upgraded, 2 newly installed, 0 to remove and 28 not upgraded.

Need to get 366 kB of archives.

After this operation, 1,227 kB of additional disk space will be used.

Do you want to continue? [Y/n] y

Get:1 http://dk.archive.ubuntu.com/ubuntu xenial/universe amd64 libsndio6.1 amd64 1.1.0-2 [23.2 kB]

Get:2 http://dk.archive.ubuntu.com/ubuntu xenial/universe amd64 libsdl2-2.0-0 amd64 2.0.4+dfsg1-2ubuntu2 [343 kB]

Fetched 366 kB in 4s (99.5 kB/s)

Selecting previously unselected package libsndio6.1:amd64.

(Reading database ... 285392 files and directories currently installed.)

Preparing to unpack .../libsndio6.1_1.1.0-2_amd64.deb ...

Unpacking libsndio6.1:amd64 (1.1.0-2) ...

Selecting previously unselected package libsdl2-2.0-0:amd64.

Preparing to unpack .../libsdl2-2.0-0_2.0.4+dfsg1-2ubuntu2_amd64.deb ...

Unpacking libsdl2-2.0-0:amd64 (2.0.4+dfsg1-2ubuntu2) ...

Setting up libsndio6.1:amd64 (1.1.0-2) ...

Setting up libsdl2-2.0-0:amd64 (2.0.4+dfsg1-2ubuntu2) ...

Processing triggers for libc-bin (2.35-0ubuntu3.1) ...

/sbin/ldconfig.real: /usr/local/cuda-11.4/targets/x86_64-linux/lib/libcudnn_ops_infer.so.8 is not a symbolic link

$ sudo aptitude install libsdl2-dev

The following NEW packages will be installed:

libsdl2-dev{b} libsndio-dev{a}

The following packages will be REMOVED:

fcitx-config-common{u} fcitx-config-gtk{u} fcitx-frontend-all{u} fcitx-frontend-gtk2{u} fcitx-frontend-gtk3{u} fcitx-frontend-qt5{u}

fcitx-module-dbus{u} fcitx-module-kimpanel{u} fcitx-module-lua{u} fcitx-module-quickphrase-editor5{u} fcitx-module-x11{u} fcitx-modules{u}

fcitx-ui-classic{u} g++-11{u} gir1.2-appindicator3-0.1{u} gir1.2-gst-plugins-base-1.0{u} gir1.2-gstreamer-1.0{u} gir1.2-keybinder-3.0{u}

gir1.2-wnck-3.0{u} gnome-session-canberra{u} libfcitx-config4{u} libfcitx-core0{u} libfcitx-gclient1{u} libfcitx-qt5-1{u} libfcitx-qt5-data{u}

libfcitx-utils0{u} libgettextpo0{u} libkeybinder-3.0-0{u} libpresage-data{u} libpresage1v5{u} libtinyxml2.6.2v5{u} libwnck-3-0{u} libwnck-3-common{u}

presage{u} python3-gi-cairo{u}

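0 packages upgraded, 2 newly installed, 35 to remove and 28 not upgraded.

Need to get 627 kB of archives. After unpacking 49.2 MB will be freed.

The following packages have unmet dependencies:

libsdl2-dev : Depends: libasound2-dev but it is not installable

Depends: libdbus-1-dev but it is not installable

Depends: libgles2-mesa-dev but it is not installable

Depends: libmirclient-dev but it is not installable

Depends: libpulse-dev but it is not installable

Depends: libudev-dev but it is not installable

Depends: libxkbcommon-dev but it is not installable

Depends: libxss-dev but it is not installable

Depends: libxv-dev but it is not installable

Depends: libxxf86vm-dev but it is not installable

The following actions will resolve these dependencies:

Keep the following packages at their current version:

1) libsdl2-dev [Not Installed]

Accept this solution? [Y/n/q/?] y

The following packages will be REMOVED: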

fcitx-config-common{u} fcitx-config-gtk{u} fcitx-frontend-all{u} fcitx-frontend-gtk2{u} fcitx-frontend-gtk3{u} fcitx-frontend-qt5{u}

fcitx-module-dbus{u} fcitx-module-kimpanel{u} fcitx-module-lua{u} fcitx-module-quickphrase-editor5{u} fcitx-module-x11{u} fcitx-modules{u}

fcitx-ui-classic{u} g++-11{u} gir1.2-appindicator3-0.1{u} gir1.2-gst-plugins-base-1.0{u} gir1.2-gstreamer-1.0{u} gir1.2-keybinder-3.0{u}

gir1.2-wnck-3.0{u} gnome-session-canberra{u} libfcitx-config4{u} libfcitx-core0{u} libfcitx-gclient1{u} libfcitx-qt5-1{u} libfcitx-qt5-data{u}

libfcitx-utils0{u} libgettextpo0{u} libkeybinder-3.0-0{u} libpresage-data{u} libpresage1v5{u} libtinyxml2.6.2v5{u} libwnck-3-0{u} libwnck-3-common{u}

presage{u} python3-gi-cairo{u}

0 packages upgraded, 0 newly installed, 35 to remove and 28 not upgraded.

Need to get 0 B of archives. After unpacking 53.1 MB will be freed.

Do you want to continue? [Y/n/?] y

(Reading database ... 285406 files and directories currently installed.)

Removing fcitx-config-gtk (0.4.10-3) ...

Removing fcitx-config-common (0.4.10-3) ...

Removing fcitx-frontend-all (1:4.2.9.8-5) ...

Removing fcitx-frontend-gtk2 (1:4.2.9.8-5) ...

Removing fcitx-frontend-gtk3 (1:4.2.9.8-5) ...

Removing fcitx-frontend-qt5:amd64 (1.2.7-1.2build1) ...

Removing fcitx-module-kimpanel (1:4.2.9.8-5) ...

Removing fcitx-module-dbus (1:4.2.9.8-5) ...

Removing fcitx-module-lua (1:4.2.9.8-5) ...

Removing fcitx-module-quickphrase-editor5:amd64 (1.2.7-1.2build1) ...

Removing fcitx-ui-classic (1:4.2.9.8-5) ...

Removing fcitx-module-x11 (1:4.2.9.8-5) ...

Removing fcitx-modules (1:4.2.9.8-5) ...

Removing g++-11 (11.3.0-1ubuntu1~22.04) ...

Removing gir1.2-appindicator3-0.1 (12.10.1+20.10.20200706.1-0ubuntu1) ...

Removing gir1.2-gst-plugins-base-1.0:amd64 (1.20.1-1) ...

Removing gir1.2-gstreamer-1.0:amd64 (1.20.3-0ubuntu1) ...

Removing gir1.2-keybinder-3.0 (0.3.2-1.1) ...

Removing gir1.2-wnck-3.0:amd64 (40.1-1) ...

Removing gnome-session-canberra (0.30-10ubuntu1) ...

Removing libfcitx-qt5-1:amd64 (1.2.7-1.2build1) ...

Removing libfcitx-core0:amd64 (1:4.2.9.8-5) ...

Removing libfcitx-config4:amd64 (1:4.2.9.8-5) ...

Removing libfcitx-gclient1:amd64 (1:4.2.9.8-5) ...

Removing libfcitx-qt5-data (1.2.7-1.2build1) ...

Removing libfcitx-utils0:amd64 (1:4.2.9.8-5) ...

Removing libgettextpo0:amd64 (0.21-4ubuntu4) ...

Removing libkeybinder-3.0-0:amd64 (0.3.2-1.1) ...

Removing presage (0.9.1-2.2ubuntu1) ...

Removing libpresage1v5:amd64 (0.9.1-2.2ubuntu1) ...

Removing libpresage-data (0.9.1-2.2ubuntu1) ...

Removing libtinyxml2.6.2v5:amd64 (2.6.2-6) ...

Removing libwnck-3-0:amd64 (40.1-1) ...

Removing libwnck-3-common (40.1-1) ...

Removing python3-gi-cairo (3.42.1-0ubuntu1) ...

Processing triggers for mate-menus (1.26.0-2ubuntu2) ...

Processing triggers for libgtk-3-0:amd64 (3.24.33-1ubuntu2) ...

Processing triggers for libgtk2.0-0:amd64 (2.24.33-2ubuntu2) ...

Processing triggers for libc-bin (2.35-0ubuntu3.1) ...

/sbin/ldconfig.real: /usr/local/cuda-11.4/targets/x86_64-linux/lib/libcudnn_ops_infer.so.8 is not a symbolic link

Processing triggers for man-db (2.10.2-1) ...

Processing triggers for mailcap (3.70+nmu1ubuntu1) ...

Processing triggers for desktop-file-utils (0.26-1ubuntu3) ...

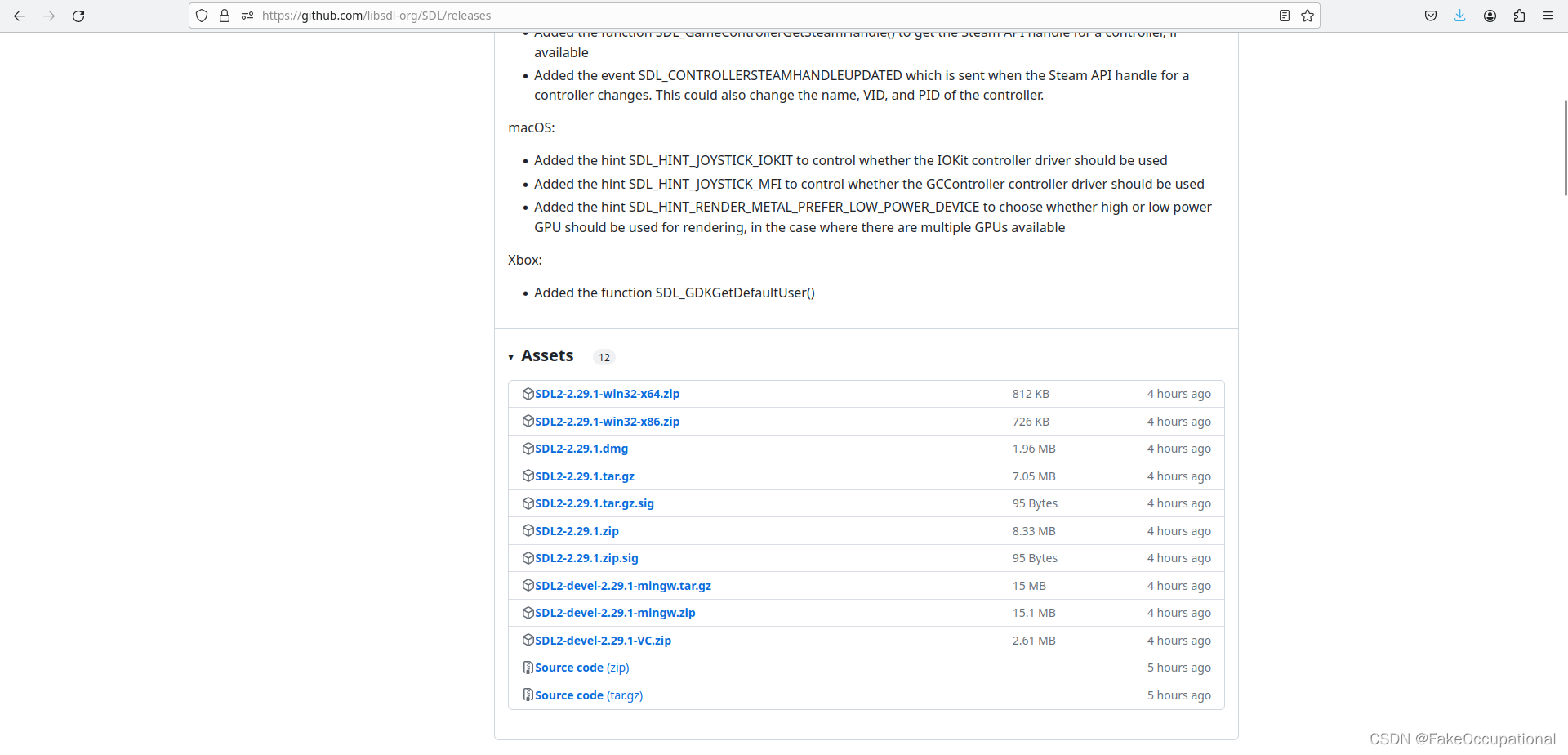

Build and install from source: https://wiki.libsdl.org/SDL2/Installation

make

git clone https://github.com/libsdl-org/SDL.git -b SDL2

cd SDL

mkdir build

cd build

../configure

make

sudo make install

cmake

git clone https://github.com/libsdl-org/SDL.git -b SDL2

cd SDL

mkdir build

cd build

cmake .. -DCMAKE_BUILD_TYPE=Release

cmake --build . --config Release --parallel

#CMake >= 3.15

sudo cmake --install . --config Release

#CMake <= 3.14

sudo make install

~/mysdl/SDL2-2.28.5/build$ sudo cmake --install . --config Release

[sudo] password for pdd:

-- Installing: /usr/local/lib/libSDL2-2.0.so.0.2800.5

-- Installing: /usr/local/lib/libSDL2-2.0.so.0

-- Installing: /usr/local/lib/libSDL2-2.0.so

-- Installing: /usr/local/lib/libSDL2main.a

-- Installing: /usr/local/lib/libSDL2.a

-- Installing: /usr/local/lib/libSDL2_test.a

-- Installing: /usr/local/lib/cmake/SDL2/SDL2Targets.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2Targets-release.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2mainTargets.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2mainTargets-release.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2staticTargets.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2staticTargets-release.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2testTargets.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2testTargets-release.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2Config.cmake

-- Installing: /usr/local/lib/cmake/SDL2/SDL2ConfigVersion.cmake

-- Installing: /usr/local/lib/cmake/SDL2/sdlfind.cmake

-- Installing: /usr/local/include/SDL2/SDL.h

-- Installing: /usr/local/include/SDL2/SDL_assert.h

-- Installing: /usr/local/include/SDL2/SDL_atomic.h

-- Installing: /usr/local/include/SDL2/SDL_audio.h

-- Installing: /usr/local/include/SDL2/SDL_bits.h

-- Installing: /usr/local/include/SDL2/SDL_blendmode.h

-- Installing: /usr/local/include/SDL2/SDL_clipboard.h

-- Installing: /usr/local/include/SDL2/SDL_copying.h

-- Installing: /usr/local/include/SDL2/SDL_cpuinfo.h

-- Installing: /usr/local/include/SDL2/SDL_egl.h

-- Installing: /usr/local/include/SDL2/SDL_endian.h

-- Installing: /usr/local/include/SDL2/SDL_error.h

-- Installing: /usr/local/include/SDL2/SDL_events.h

-- Installing: /usr/local/include/SDL2/SDL_filesystem.h

-- Installing: /usr/local/include/SDL2/SDL_gamecontroller.h

-- Installing: /usr/local/include/SDL2/SDL_gesture.h

-- Installing: /usr/local/include/SDL2/SDL_guid.h

-- Installing: /usr/local/include/SDL2/SDL_haptic.h

-- Installing: /usr/local/include/SDL2/SDL_hidapi.h

-- Installing: /usr/local/include/SDL2/SDL_hints.h

-- Installing: /usr/local/include/SDL2/SDL_joystick.h

-- Installing: /usr/local/include/SDL2/SDL_keyboard.h

-- Installing: /usr/local/include/SDL2/SDL_keycode.h

-- Installing: /usr/local/include/SDL2/SDL_loadso.h

-- Installing: /usr/local/include/SDL2/SDL_locale.h

-- Installing: /usr/local/include/SDL2/SDL_log.h

-- Installing: /usr/local/include/SDL2/SDL_main.h

-- Installing: /usr/local/include/SDL2/SDL_messagebox.h

-- Installing: /usr/local/include/SDL2/SDL_metal.h

-- Installing: /usr/local/include/SDL2/SDL_misc.h

-- Installing: /usr/local/include/SDL2/SDL_mouse.h

-- Installing: /usr/local/include/SDL2/SDL_mutex.h

-- Installing: /usr/local/include/SDL2/SDL_name.h

-- Installing: /usr/local/include/SDL2/SDL_opengl.h

-- Installing: /usr/local/include/SDL2/SDL_opengl_glext.h

-- Installing: /usr/local/include/SDL2/SDL_opengles.h

-- Installing: /usr/local/include/SDL2/SDL_opengles2.h

-- Installing: /usr/local/include/SDL2/SDL_opengles2_gl2.h

-- Installing: /usr/local/include/SDL2/SDL_opengles2_gl2ext.h

-- Installing: /usr/local/include/SDL2/SDL_opengles2_gl2platform.h

-- Installing: /usr/local/include/SDL2/SDL_opengles2_khrplatform.h

-- Installing: /usr/local/include/SDL2/SDL_pixels.h

-- Installing: /usr/local/include/SDL2/SDL_platform.h

-- Installing: /usr/local/include/SDL2/SDL_power.h

-- Installing: /usr/local/include/SDL2/SDL_quit.h

-- Installing: /usr/local/include/SDL2/SDL_rect.h

-- Installing: /usr/local/include/SDL2/SDL_render.h

-- Installing: /usr/local/include/SDL2/SDL_rwops.h

-- Installing: /usr/local/include/SDL2/SDL_scancode.h

-- Installing: /usr/local/include/SDL2/SDL_sensor.h

-- Installing: /usr/local/include/SDL2/SDL_shape.h

-- Installing: /usr/local/include/SDL2/SDL_stdinc.h

-- Installing: /usr/local/include/SDL2/SDL_surface.h

-- Installing: /usr/local/include/SDL2/SDL_system.h

-- Installing: /usr/local/include/SDL2/SDL_syswm.h

-- Installing: /usr/local/include/SDL2/SDL_test.h

-- Installing: /usr/local/include/SDL2/SDL_test_assert.h

-- Installing: /usr/local/include/SDL2/SDL_test_common.h

-- Installing: /usr/local/include/SDL2/SDL_test_compare.h

-- Installing: /usr/local/include/SDL2/SDL_test_crc32.h

-- Installing: /usr/local/include/SDL2/SDL_test_font.h

-- Installing: /usr/local/include/SDL2/SDL_test_fuzzer.h

-- Installing: /usr/local/include/SDL2/SDL_test_harness.h

-- Installing: /usr/local/include/SDL2/SDL_test_images.h

-- Installing: /usr/local/include/SDL2/SDL_test_log.h

-- Installing: /usr/local/include/SDL2/SDL_test_md5.h

-- Installing: /usr/local/include/SDL2/SDL_test_memory.h

-- Installing: /usr/local/include/SDL2/SDL_test_random.h

-- Installing: /usr/local/include/SDL2/SDL_thread.h

-- Installing: /usr/local/include/SDL2/SDL_timer.h

-- Installing: /usr/local/include/SDL2/SDL_touch.h

-- Installing: /usr/local/include/SDL2/SDL_types.h

-- Installing: /usr/local/include/SDL2/SDL_version.h

-- Installing: /usr/local/include/SDL2/SDL_video.h

-- Installing: /usr/local/include/SDL2/SDL_vulkan.h

-- Installing: /usr/local/include/SDL2/begin_code.h

-- Installing: /usr/local/include/SDL2/close_code.h

-- Installing: /usr/local/include/SDL2/SDL_revision.h

-- Installing: /usr/local/include/SDL2/SDL_config.h

-- Installing: /usr/local/share/licenses/SDL2/LICENSE.txt

-- Installing: /usr/local/lib/pkgconfig/sdl2.pc

-- Installing: /usr/local/lib/libSDL2.so

-- Installing: /usr/local/bin/sdl2-config

-- Installing: /usr/local/share/aclocal/sdl2.m4

- ERROR: Couldn’t initialize SDL: dsp: No such audio device

CG

static const std::map<std::string, std::pair<int, std::string>> g_lang = {

{ "en", { 0, "english", } },

{ "zh", { 1, "chinese", } },

{ "de", { 2, "german", } },

{ "es", { 3, "spanish", } },

{ "ru", { 4, "russian", } },

{ "ko", { 5, "korean", } },

{ "fr", { 6, "french", } },

{ "ja", { 7, "japanese", } },

{ "pt", { 8, "portuguese", } },

{ "tr", { 9, "turkish", } },

{ "pl", { 10, "polish", } },

{ "ca", { 11, "catalan", } },

{ "nl", { 12, "dutch", } },

{ "ar", { 13, "arabic", } },

{ "sv", { 14, "swedish", } },

{ "it", { 15, "italian", } },

{ "id", { 16, "indonesian", } },

{ "hi", { 17, "hindi", } },

{ "fi", { 18, "finnish", } },

{ "vi", { 19, "vietnamese", } },

{ "he", { 20, "hebrew", } },

{ "uk", { 21, "ukrainian", } },

{ "el", { 22, "greek", } },

{ "ms", { 23, "malay", } },

{ "cs", { 24, "czech", } },

{ "ro", { 25, "romanian", } },

{ "da", { 26, "danish", } },

{ "hu", { 27, "hungarian", } },

{ "ta", { 28, "tamil", } },

{ "no", { 29, "norwegian", } },

{ "th", { 30, "thai", } },

{ "ur", { 31, "urdu", } },

{ "hr", { 32, "croatian", } },

{ "bg", { 33, "bulgarian", } },

{ "lt", { 34, "lithuanian", } },

{ "la", { 35, "latin", } },

{ "mi", { 36, "maori", } },

{ "ml", { 37, "malayalam", } },

{ "cy", { 38, "welsh", } },

{ "sk", { 39, "slovak", } },

{ "te", { 40, "telugu", } },

{ "fa", { 41, "persian", } },

{ "lv", { 42, "latvian", } },

{ "bn", { 43, "bengali", } },

{ "sr", { 44, "serbian", } },

{ "az", { 45, "azerbaijani", } },

{ "sl", { 46, "slovenian", } },

{ "kn", { 47, "kannada", } },

{ "et", { 48, "estonian", } },

{ "mk", { 49, "macedonian", } },

{ "br", { 50, "breton", } },

{ "eu", { 51, "basque", } },

{ "is", { 52, "icelandic", } },

{ "hy", { 53, "armenian", } },

{ "ne", { 54, "nepali", } },

{ "mn", { 55, "mongolian", } },

{ "bs", { 56, "bosnian", } },

{ "kk", { 57, "kazakh", } },

{ "sq", { 58, "albanian", } },

{ "sw", { 59, "swahili", } },

{ "gl", { 60, "galician", } },

{ "mr", { 61, "marathi", } },

{ "pa", { 62, "punjabi", } },

{ "si", { 63, "sinhala", } },

{ "km", { 64, "khmer", } },

{ "sn", { 65, "shona", } },

{ "yo", { 66, "yoruba", } },

{ "so", { 67, "somali", } },

{ "af", { 68, "afrikaans", } },

{ "oc", { 69, "occitan", } },

{ "ka", { 70, "georgian", } },

{ "be", { 71, "belarusian", } },

{ "tg", { 72, "tajik", } },

{ "sd", { 73, "sindhi", } },

{ "gu", { 74, "gujarati", } },

{ "am", { 75, "amharic", } },

{ "yi", { 76, "yiddish", } },

{ "lo", { 77, "lao", } },

{ "uz", { 78, "uzbek", } },

{ "fo", { 79, "faroese", } },

{ "ht", { 80, "haitian creole", } },

{ "ps", { 81, "pashto", } },

{ "tk", { 82, "turkmen", } },

{ "nn", { 83, "nynorsk", } },

{ "mt", { 84, "maltese", } },

{ "sa", { 85, "sanskrit", } },

{ "lb", { 86, "luxembourgish", } },

{ "my", { 87, "myanmar", } },

{ "bo", { 88, "tibetan", } },

{ "tl", { 89, "tagalog", } },

{ "mg", { 90, "malagasy", } },

{ "as", { 91, "assamese", } },

{ "tt", { 92, "tatar", } },

{ "haw", { 93, "hawaiian", } },

{ "ln", { 94, "lingala", } },

{ "ha", { 95, "hausa", } },

{ "ba", { 96, "bashkir", } },

{ "jw", { 97, "javanese", } },

{ "su", { 98, "sundanese", } },

{ "yue", { 99, "cantonese", } },

};
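The table above maps a short language code to a numeric id and a full English name. A lookup that accepts either the code or the full name could be sketched as follows. The sketch uses a four-entry excerpt of the table, and `g_lang_excerpt`, `lang_id` and `lang_code` are hypothetical names. whisper.cpp exposes `whisper_lang_id` for this kind of lookup; the code below is only an illustration and is not claimed to match its exact behavior.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <utility>

// Excerpt of the table above (the full table has 100 entries, ids 0 to 99).
static const std::map<std::string, std::pair<int, std::string>> g_lang_excerpt = {
    { "en",  {  0, "english"   } },
    { "zh",  {  1, "chinese"   } },
    { "de",  {  2, "german"    } },
    { "yue", { 99, "cantonese" } },
};

// Returns the id for a short code ("zh") or a full name ("chinese"),
// or -1 when the language is not in the table.
int lang_id(const std::string & lang) {
    const auto it = g_lang_excerpt.find(lang);
    if (it != g_lang_excerpt.end()) {
        return it->second.first;
    }
    // Fall back to a linear search over the full names.
    for (const auto & kv : g_lang_excerpt) {
        if (kv.second.second == lang) {
            return kv.second.first;
        }
    }
    return -1;
}

// Reverse lookup: returns the short code for an id, or an empty string.
std::string lang_code(int id) {
    for (const auto & kv : g_lang_excerpt) {
        if (kv.second.first == id) {
            return kv.first;
        }
    }
    return std::string();
}
```

Both `lang_id("zh")` and `lang_id("chinese")` return 1, and an unknown code such as `"xx"` returns -1.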

(base) pdd@pdd-Dell-G15-5511:~/le$ git clone http://github.com/hogelog/whispercppapp.git --recurse-submodules

Cloning into 'whispercppapp'...

warning: redirecting to https://github.com/hogelog/whispercppapp.git/

remote: Enumerating objects: 411, done.

remote: Counting objects: 100% (411/411), done.

remote: Compressing objects: 100% (245/245), done.

remote: Total 411 (delta 150), reused 368 (delta 113), pack-reused 0

Receiving objects: 100% (411/411), 454.29 KiB | 161.00 KiB/s, done.

Resolving deltas: 100% (150/150), done.

Submodule 'whisper.cpp' (https://github.com/ggerganov/whisper.cpp.git) registered for path 'whisper.cpp'

Cloning into '/home/pdd/le/whispercppapp/whisper.cpp'...

remote: Enumerating objects: 6590, done.

remote: Counting objects: 100% (1812/1812), done.

remote: Compressing objects: 100% (192/192), done.

error: RPC failed; curl 16 Error in the HTTP2 framing layer

error: 5253 bytes of body are still expected

fetch-pack: unexpected disconnect while reading sideband packet

fatal: early EOF

fatal: fetch-pack: invalid index-pack output

fatal: clone of 'https://github.com/ggerganov/whisper.cpp.git' into submodule path '/home/pdd/le/whispercppapp/whisper.cpp' failed

Failed to clone 'whisper.cpp'. Retry scheduled

Cloning into '/home/pdd/le/whispercppapp/whisper.cpp'...

remote: Enumerating objects: 6590, done.

remote: Counting objects: 100% (1807/1807), done.

remote: Compressing objects: 100% (191/191), done.

remote: Total 6590 (delta 1699), reused 1651 (delta 1613), pack-reused 4783

Receiving objects: 100% (6590/6590), 9.99 MiB | 209.00 KiB/s, done.

Resolving deltas: 100% (4244/4244), done.

Submodule path 'whisper.cpp': checked out 'ad1389003d3f8bd47b8ca7d4c21b4764cc3844fc'

Submodule 'bindings/ios' (https://github.com/ggerganov/whisper.spm) registered for path 'whisper.cpp/bindings/ios'

Cloning into '/home/pdd/le/whispercppapp/whisper.cpp/bindings/ios'...

remote: Enumerating objects: 357, done.

remote: Counting objects: 100% (151/151), done.

remote: Compressing objects: 100% (71/71), done.

remote: Total 357 (delta 104), reused 104 (delta 80), pack-reused 206

Receiving objects: 100% (357/357), 1.11 MiB | 163.00 KiB/s, done.

Resolving deltas: 100% (197/197), done.

Submodule path 'whisper.cpp/bindings/ios': checked out '92d4c5c9a07b726e35c20dc513532789919e00c4'

Submodule path 'whisper.cpp': checked out 'ad1389003d3f8bd47b8ca7d4c21b4764cc3844fc'