1. Install CUDA Toolkit 11.8

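Based on my junior labmate MZ's trial and error, other versions ran into bugs: 12.0 is too new and 11.5 is too old. (Thanks to her for saving me some detours.)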

Reference: CUDA Toolkit 11.8 Downloads | NVIDIA Developer

Run the following commands in a terminal:

```bash
wget https://developer.download.nvidia.com/compute/cuda/11.8.0/local_installers/cuda_11.8.0_520.61.05_linux.run
sudo sh cuda_11.8.0_520.61.05_linux.run
```

2. Make sure you are using CUDA 11.8:

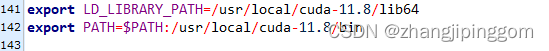
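To run this check from a script instead of by eye, a minimal Python sketch can locate the first `nvcc` on `PATH` (the one your shell would run) and parse the release number from `nvcc --version`. It assumes the output contains a line such as `Cuda compilation tools, release 11.8, V11.8.89`; the helper names `parse_nvcc_release` and `installed_cuda_release` are hypothetical.

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple

# Assumed format of the relevant line in `nvcc --version` output, e.g.
# "Cuda compilation tools, release 11.8, V11.8.89"
_RELEASE_RE = re.compile(r"release (\d+)\.(\d+)")


def parse_nvcc_release(output: str) -> Optional[Tuple[int, int]]:
    """Return (major, minor) parsed from `nvcc --version` text, or None."""
    match = _RELEASE_RE.search(output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def installed_cuda_release() -> Optional[Tuple[str, Tuple[int, int]]]:
    """Find the first nvcc on PATH and return (its path, its release)."""
    nvcc = shutil.which("nvcc")  # same PATH lookup order the shell uses
    if nvcc is None:
        return None
    result = subprocess.run([nvcc, "--version"], capture_output=True, text=True)
    release = parse_nvcc_release(result.stdout)
    if release is None:
        return None
    return nvcc, release


if __name__ == "__main__":
    found = installed_cuda_release()
    if found is None:
        print("nvcc not found on PATH")
    else:
        path, (major, minor) = found
        status = "OK" if (major, minor) == (11, 8) else "not 11.8"
        print(f"{path}: CUDA {major}.{minor} ({status})")
```

If this reports a different release, or a path outside /usr/local/cuda-11.8, another CUDA installation is earlier on `PATH`.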

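If not, add the CUDA 11.8 paths to your ~/.bashrc. Prepend them rather than appending, so that CUDA 11.8 takes precedence over any other version already on the search path, and keep any existing LD_LIBRARY_PATH entries instead of overwriting them:

```bash
export PATH=/usr/local/cuda-11.8/bin${PATH:+:${PATH}}
export LD_LIBRARY_PATH=/usr/local/cuda-11.8/lib64${LD_LIBRARY_PATH:+:${LD_LIBRARY_PATH}}
```

Then run `source ~/.bashrc` (or open a new terminal) for the change to take effect.

3. Install pycuda: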

```bash
conda install -c conda-forge pycuda
```
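Before running the full test, a quick Python sketch can confirm that pycuda imports and report two versions: the CUDA version pycuda was built against (`pycuda.driver.get_version()`, a `(major, minor, revision)` tuple) and the highest CUDA version the installed driver supports (`pycuda.driver.get_driver_version()`, an integer). The decoding assumes the standard CUDA driver-API encoding of 1000 × major + 10 × minor; the helper names are hypothetical.

```python
from typing import Optional, Tuple


def decode_cuda_version(code: int) -> Tuple[int, int]:
    """Split a CUDA driver-API version integer (1000*major + 10*minor)."""
    return code // 1000, (code % 1000) // 10


def pycuda_versions() -> Optional[dict]:
    """Report the CUDA versions pycuda sees, or None if pycuda cannot load."""
    try:
        import pycuda.driver as drv
    except (ImportError, OSError):  # pycuda or libcuda not available
        return None
    info = {"compiled_against": drv.get_version()}  # e.g. (11, 8, 0)
    try:
        # Highest CUDA version the installed NVIDIA driver supports
        info["driver_supports"] = decode_cuda_version(drv.get_driver_version())
    except Exception:  # e.g. no usable NVIDIA driver on this machine
        info["driver_supports"] = None
    return info


if __name__ == "__main__":
    print(pycuda_versions())
```

If `compiled_against` is not 11.8, conda may have resolved a pycuda build for a different CUDA version than the toolkit installed above.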

4. Test pycuda:

Source: PyCUDA - 上海交大超算平台用户手册 Documentation (SJTU HPC platform user manual)

```python
import pycuda.driver as drv
import pycuda.autoinit  # initializes CUDA and creates a context on the default GPU
from pycuda.compiler import SourceModule
import numpy

# Define the kernel
mod = SourceModule(
    """
__global__ void add_vectors(float *a, float *b, float *c, int n)
{
    int idx = threadIdx.x + blockIdx.x * blockDim.x;
    if (idx < n)
    {
        c[idx] = a[idx] + b[idx];
    }
}
"""
)

# Vector length
n = 10000

# Generate random input vectors
a = numpy.random.randn(n).astype(numpy.float32)
b = numpy.random.randn(n).astype(numpy.float32)

# Allocate host memory for the output
c = numpy.zeros_like(a)

# Allocate GPU memory and copy the inputs to the GPU
a_gpu = drv.mem_alloc(a.nbytes)
b_gpu = drv.mem_alloc(b.nbytes)
c_gpu = drv.mem_alloc(c.nbytes)
drv.memcpy_htod(a_gpu, a)
drv.memcpy_htod(b_gpu, b)

# Define the block and grid sizes (enough blocks to cover all n elements)
blocksize = 256
gridsize = (n + blocksize - 1) // blocksize

# Launch the kernel
add_vectors = mod.get_function("add_vectors")
add_vectors(
    a_gpu, b_gpu, c_gpu, numpy.int32(n), block=(blocksize, 1, 1), grid=(gridsize, 1)
)

# Copy the result from the GPU back to the CPU
drv.memcpy_dtoh(c, c_gpu)

# Check that the result is correct
assert numpy.allclose(c, a + b), "result not correct"

# Print the results
print("a:", a)
print("b:", b)
print("c:", c)
```
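To see why the kernel needs the `if (idx < n)` check, the launch configuration can be emulated on the CPU. With `n = 10000` and `blocksize = 256`, the ceiling division gives `gridsize = 40`, i.e. 40 × 256 = 10240 threads, so the last 240 threads index past the end of the arrays and must do nothing. The sketch below is a plain NumPy loop that mirrors the kernel's index formula `threadIdx.x + blockIdx.x * blockDim.x`; it is for illustration only, does not touch the GPU, and its helper names are hypothetical.

```python
import numpy


def launch_config(n: int, blocksize: int = 256) -> int:
    """Ceiling division: the smallest number of blocks covering n elements."""
    return (n + blocksize - 1) // blocksize


def emulate_add_vectors(a, b, blocksize: int = 256):
    """CPU emulation of the add_vectors kernel's thread indexing."""
    n = a.size
    gridsize = launch_config(n, blocksize)
    c = numpy.zeros_like(a)
    idle = 0
    for block_idx in range(gridsize):  # plays the role of blockIdx.x
        for thread_idx in range(blocksize):  # plays the role of threadIdx.x
            idx = thread_idx + block_idx * blocksize
            if idx < n:  # same bounds check as the kernel
                c[idx] = a[idx] + b[idx]
            else:
                idle += 1  # threads past the end of the arrays do nothing
    return c, gridsize, idle


a = numpy.random.randn(10000).astype(numpy.float32)
b = numpy.random.randn(10000).astype(numpy.float32)
c, gridsize, idle = emulate_add_vectors(a, b)
print(gridsize, idle)  # 40 240
assert numpy.allclose(c, a + b)
```

Without the bounds check, those 240 extra threads would read and write memory outside the buffers allocated with `drv.mem_alloc`.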