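Preface

I took Computer Vision with Prof. Cai mj. The lectures were good, but you will probably need to learn OpenCV on your own to finish the assignments. I did not study seriously and ended the course with a score in the 80s, so the solutions below are my own work rather than the official answers and are for reference only.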

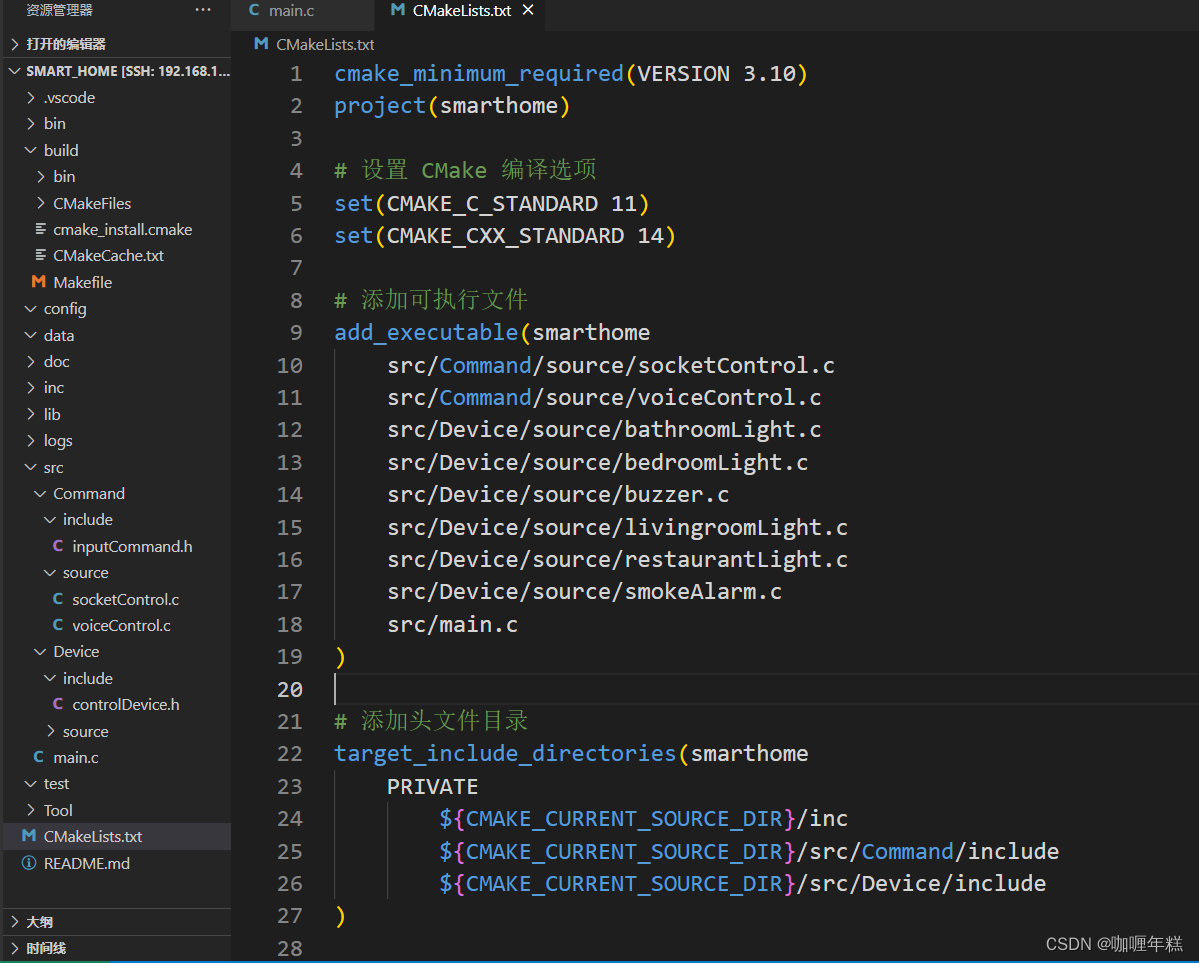

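Task 1

Modify the task_one() function in test-3.py to run sparse optical flow estimation on pedestrian.avi and track the pedestrians' trajectories. The required output is:

(1) Visualize the trajectories tracked in the video by overlaying all of them on the last frame of the video, and save the result as trajectory.png.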
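For context, here is the textbook form of the Lucas-Kanade step that sparse optical flow relies on. This is background I am adding, not something stated in the assignment. With $I_x$, $I_y$, $I_t$ the spatial and temporal image derivatives and $(u, v)$ the displacement of a point, brightness constancy gives one equation per pixel, and the tracker solves it in the least-squares sense over a small window $W$ around the point:

$$
I_x u + I_y v + I_t = 0
$$

$$
\begin{bmatrix} u \\ v \end{bmatrix}
=
\begin{bmatrix}
\sum_{W} I_x^2 & \sum_{W} I_x I_y \\
\sum_{W} I_x I_y & \sum_{W} I_y^2
\end{bmatrix}^{-1}
\begin{bmatrix}
-\sum_{W} I_x I_t \\
-\sum_{W} I_y I_t
\end{bmatrix}
$$

The 2x2 matrix is only well conditioned where the window contains gradients in two directions, which is why the points to track are usually chosen at corners.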

def task_one():
    """
    sparse optical flow and trajectory tracking
    """
    cap = cv2.VideoCapture("pedestrian.avi")
    # --------Your code--------
    # cap = cv2.VideoCapture("images/kk 2022-01-23 18-21-21.mp4")
    # cap = cv2.VideoCapture(0)

    # Parameters for corner detection
    feature_params = dict(
        maxCorners=100,    # maximum number of corners to return
        qualityLevel=0.3,  # quality threshold: a higher value keeps only stronger corners, so fewer survive
        minDistance=7      # minimum distance between corners; weaker corners near a strong one are suppressed
    )

    # Parameters for the Lucas-Kanade algorithm
    lk_params = dict(
        winSize=(10, 10),  # size of the search window around each point
        maxLevel=2         # maximum pyramid level
    )

    # Read the first frame
    ret, prev_img = cap.read()
    prev_img_gray = cv2.cvtColor(prev_img, cv2.COLOR_BGR2GRAY)

    # Detect corners first to get the points to track
    prev_points = cv2.goodFeaturesToTrack(prev_img_gray, mask=None, **feature_params)

    # Blank canvas: new segments are drawn here first, then overlaid on the original frame
    mask_img = np.zeros_like(prev_img)

    while True:
        ret, curr_img = cap.read()
        if curr_img is None:
            print("video is over...")
            break
        curr_img_gray = cv2.cvtColor(curr_img, cv2.COLOR_BGR2GRAY)

        # Track the points with optical flow
        curr_points, status, err = cv2.calcOpticalFlowPyrLK(prev_img_gray,
                                                            curr_img_gray,
                                                            prev_points,
                                                            None,
                                                            **lk_params)
        # print(status.shape)  # each entry is 1 or 0: 1 = tracked, 0 = lost
        good_new = curr_points[status == 1]
        good_old = prev_points[status == 1]

        # Draw the new segment and the current position of each point
        for i, (new, old) in enumerate(zip(good_new, good_old)):
            a, b = new.ravel()
            c, d = old.ravel()
            mask_img = cv2.line(mask_img, pt1=(int(a), int(b)), pt2=(int(c), int(d)), color=(0, 0, 255), thickness=1)
            mask_img = cv2.circle(mask_img, center=(int(a), int(b)), radius=2, color=(255, 0, 0), thickness=2)

        # Overlay the canvas on the current frame (the display call is commented out)
        img = cv2.add(curr_img, mask_img)
        # cv2.imshow("desct", img)
        if cv2.waitKey(60) & 0xFF == ord('q'):
            print("Bye...")
            break

        # Update the previous frame and the points to track
        prev_img_gray = curr_img_gray.copy()
        prev_points = good_new.reshape(-1, 1, 2)
        if len(prev_points) < 5:
            # Too few points are still tracked: detect corners again on the current frame
            prev_points = cv2.goodFeaturesToTrack(curr_img_gray, mask=None, **feature_params)
            mask_img = np.zeros_like(prev_img)  # start a fresh canvas (trajectories drawn so far are dropped)

    cv2.imwrite("trajectory.png", img)

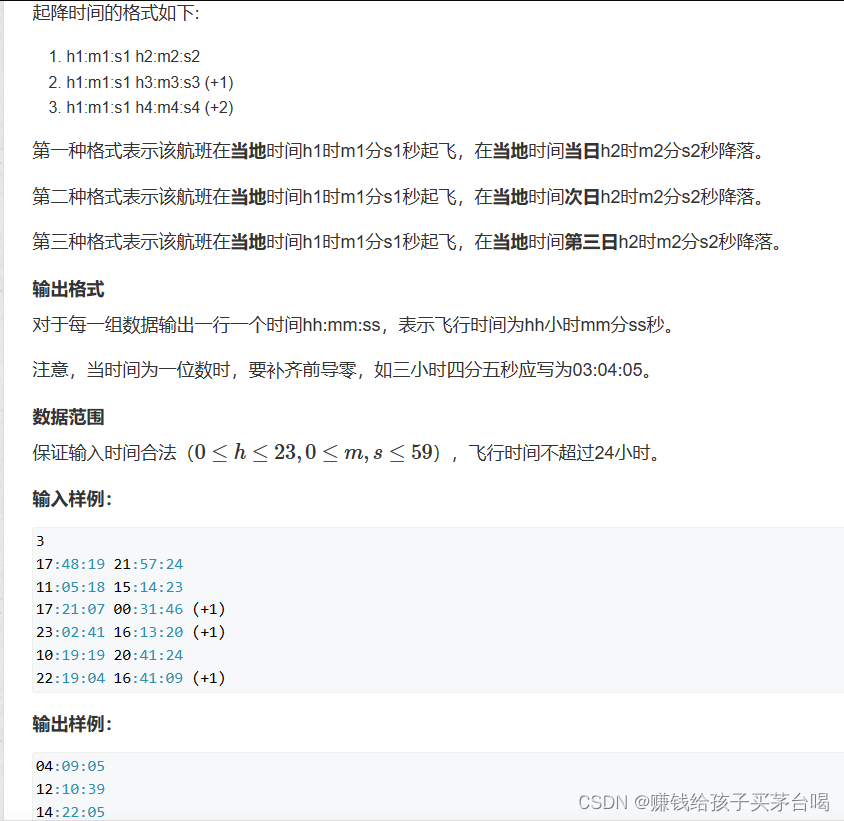
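The solution above draws onto a canvas that is reset whenever corners are detected again, so earlier trajectories are lost at that point. One possible alternative, sketched here with NumPy only, is to keep a history per tracked point and draw everything at the end. The helper `update_tracks` and the `tracks` dictionary are hypothetical names introduced for this illustration; the array shapes follow what `cv2.calcOpticalFlowPyrLK` returns, namely points of shape (N, 1, 2) and a status array of shape (N, 1).

```python
import numpy as np


def update_tracks(tracks, active_ids, new_points, status):
    """Append successfully tracked points to their track histories.

    tracks:      dict mapping track id -> list of (x, y) tuples (hypothetical structure)
    active_ids:  list of track ids, aligned with the rows of new_points
    new_points:  array of shape (N, 1, 2)
    status:      array of shape (N, 1); 1 = tracked, 0 = lost
    Returns the ids still being tracked and their points, shape (K, 1, 2).
    """
    keep = status.ravel() == 1
    kept_ids = [tid for tid, ok in zip(active_ids, keep) if ok]
    kept_points = new_points[keep]  # boolean row mask keeps the (K, 1, 2) layout
    for tid, pt in zip(kept_ids, kept_points.reshape(-1, 2)):
        tracks[tid].append((float(pt[0]), float(pt[1])))
    return kept_ids, kept_points


# Tiny synthetic example: three points, the second one is lost.
tracks = {0: [(10.0, 10.0)], 1: [(20.0, 20.0)], 2: [(30.0, 30.0)]}
ids = [0, 1, 2]
new_pts = np.array([[[11, 10]], [[0, 0]], [[31, 32]]], dtype=np.float32)
status = np.array([[1], [0], [1]], dtype=np.uint8)

ids, pts = update_tracks(tracks, ids, new_pts, status)
print(ids, pts.shape, tracks[0])
```

After the video ends, each history in `tracks` could be drawn on the last frame, for example with `cv2.polylines`. Because the histories live outside the canvas, detecting new corners would not erase the trajectories collected so far.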

Task 2

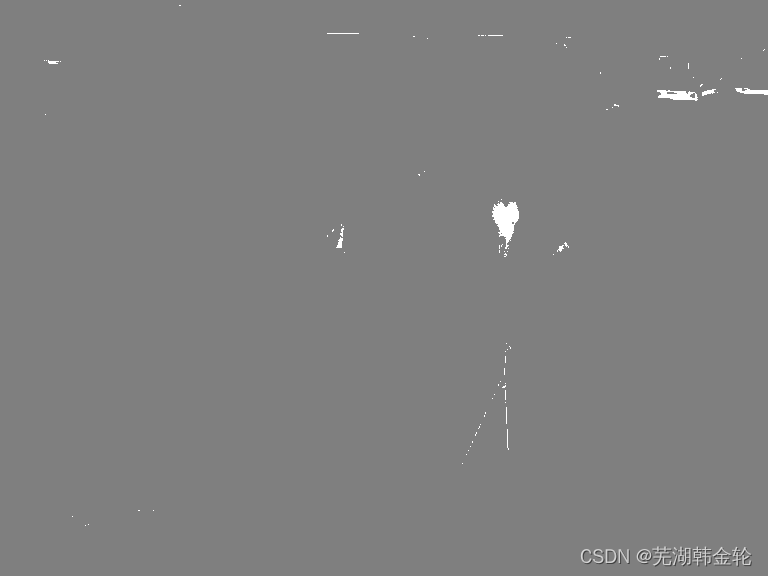

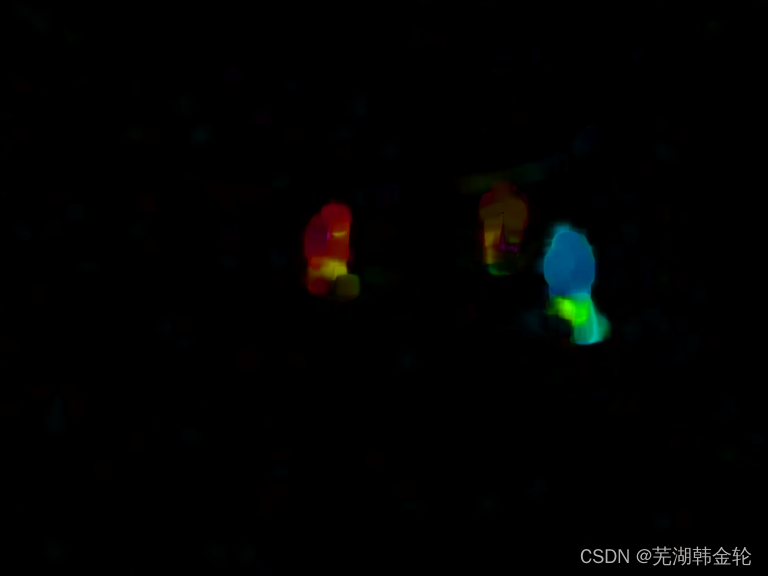

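Modify the task_two() function in test-3.py to run dense optical flow estimation on frame01.png and frame02.png, and segment the pedestrians in the image based on the estimated flow. The required output is:

(1) Visualize the dense optical flow estimate and save the result as frame01_flow.png

(2) Visualize the pedestrian segmentation as a color mask in which each pedestrian's region is shown in a single color (for example red, green, blue), and save the result as frame01_person.png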
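One way to read requirement (2) is: threshold the flow magnitude to find moving pixels, split them into connected regions, and give each region its own color. The sketch below does this with NumPy and a plain flood fill on a synthetic flow field. It is not the approach used in my solution further down (which uses MOG2 background subtraction), and the function names, `mag_thresh` and `min_area` are assumptions chosen for illustration, not tuned values.

```python
from collections import deque

import numpy as np


def segment_moving_regions(flow, mag_thresh=1.0, min_area=4):
    """Label 4-connected regions whose flow magnitude exceeds mag_thresh.

    flow: float array of shape (H, W, 2) holding (dx, dy) per pixel.
    Returns an (H, W) int array: 0 = background, 1..K = region labels.
    """
    moving = np.hypot(flow[..., 0], flow[..., 1]) > mag_thresh
    labels = np.zeros(moving.shape, dtype=np.int32)
    h, w = moving.shape
    next_label = 1
    for y in range(h):
        for x in range(w):
            if not moving[y, x] or labels[y, x] != 0:
                continue
            # Breadth-first flood fill from this unlabeled moving pixel.
            queue = deque([(y, x)])
            labels[y, x] = next_label
            pixels = [(y, x)]
            while queue:
                cy, cx = queue.popleft()
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if 0 <= ny < h and 0 <= nx < w and moving[ny, nx] and labels[ny, nx] == 0:
                        labels[ny, nx] = next_label
                        queue.append((ny, nx))
                        pixels.append((ny, nx))
            if len(pixels) < min_area:
                # Too small: treat the region as noise and forget it.
                for py, px in pixels:
                    labels[py, px] = 0
                    moving[py, px] = False
            else:
                next_label += 1
    return labels


def colorize(labels):
    """Map labels to BGR colors: background black, regions cycle red, green, blue."""
    palette = np.array([[0, 0, 0], [0, 0, 255], [0, 255, 0], [255, 0, 0]], dtype=np.uint8)
    idx = np.where(labels == 0, 0, (labels - 1) % 3 + 1)
    return palette[idx]


# Synthetic flow field with two separate moving blobs.
flow = np.zeros((8, 12, 2), dtype=np.float32)
flow[1:5, 1:4, 0] = 3.0    # first blob moves right
flow[3:7, 7:11, 1] = -2.0  # second blob moves up
labels = segment_moving_regions(flow)
mask = colorize(labels)
print(labels.max(), mask.shape)
```

The Python loops are far too slow for full-size frames and are only meant to show the idea. On real images, a library routine such as `cv2.connectedComponents` on the thresholded mask would be the more practical choice, and pedestrians that touch or overlap would still end up in a single region.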

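I was not sure what the visualization in part (1) of Task 2 was asking for, so I produced two results.

The first is a foreground/background segmentation: moving people are marked in white and the background in black.

The second is the original frame02 image.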

def task_two():
    """
    dense optical flow and pedestrian segmentation
    """
    img1 = cv2.imread('frame01.png')
    img2 = cv2.imread('frame02.png')
    # --------Your code--------
    # cap = cv.VideoCapture(cv.samples.findFile("vtest.avi"))

    # Background subtractor used for the foreground mask
    fgbg = cv2.createBackgroundSubtractorMOG2()

    frame1 = img1
    prvs = cv2.cvtColor(frame1, cv2.COLOR_BGR2GRAY)
    hsv = np.zeros_like(frame1)
    hsv[..., 1] = 255  # full saturation

    frame2 = img2
    fgmask = fgbg.apply(frame2)
    next_gray = cv2.cvtColor(frame2, cv2.COLOR_BGR2GRAY)

    # Dense optical flow (Farneback): pyr_scale=0.5, levels=3, winsize=15,
    # iterations=3, poly_n=5, poly_sigma=1.2, flags=0
    flow = cv2.calcOpticalFlowFarneback(prvs, next_gray, None, 0.5, 3, 15, 3, 5, 1.2, 0)

    # Hue encodes the flow direction, value encodes the flow magnitude
    mag, ang = cv2.cartToPolar(flow[..., 0], flow[..., 1])
    hsv[..., 0] = ang * 180 / np.pi / 2
    hsv[..., 2] = cv2.normalize(mag, None, 0, 255, cv2.NORM_MINMAX)
    bgr = cv2.cvtColor(hsv, cv2.COLOR_HSV2BGR)
    # cv2.imshow('frame2', bgr)

    cv2.imwrite('frame01_flow.png', fgmask)  # foreground mask from MOG2
    cv2.imwrite('frame01_person.png', bgr)   # HSV visualization of the flow
    # cv2.imwrite("frame01_flow.png", img_flow)
    # cv2.imwrite("frame01_person.png", img_mask)
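The HSV encoding used in task_two() can be checked by hand on a tiny flow field. The sketch below reimplements the mapping with NumPy only: hue is half the flow angle in degrees (OpenCV's 8-bit hue range is 0 to 179), saturation is fixed at 255, and value is the min-max normalized magnitude. The function name `flow_to_hsv` is my own, and unlike the assignment code this version rounds to the nearest integer instead of truncating.

```python
import numpy as np


def flow_to_hsv(flow):
    """Encode a dense flow field (H, W, 2) as an OpenCV-style 8-bit HSV image."""
    flow = flow.astype(np.float64)
    dx, dy = flow[..., 0], flow[..., 1]
    mag = np.hypot(dx, dy)
    ang = np.arctan2(dy, dx) % (2 * np.pi)  # direction in [0, 2*pi)

    hsv = np.zeros(flow.shape[:2] + (3,), dtype=np.uint8)
    # Half the angle in degrees; the modulo folds a rounded 180 back to 0.
    hsv[..., 0] = (np.round(np.degrees(ang) / 2) % 180).astype(np.uint8)
    hsv[..., 1] = 255
    span = mag.max() - mag.min()
    if span > 0:
        hsv[..., 2] = np.round((mag - mag.min()) / span * 255).astype(np.uint8)
    return hsv


# 2x2 flow field: right, down, left, and no motion.
flow = np.zeros((2, 2, 2), dtype=np.float32)
flow[0, 0] = (2.0, 0.0)   # moving right
flow[0, 1] = (0.0, 1.0)   # moving down (the image y axis points down)
flow[1, 0] = (-1.0, 0.0)  # moving left
hsv = flow_to_hsv(flow)
print(hsv[..., 0])
print(hsv[..., 2])
```

Pixels with no motion get value 0 and therefore come out black after `cv2.cvtColor(hsv, cv2.COLOR_HSV2BGR)`, while the fastest-moving pixels are the brightest. Because the value channel is normalized per image, brightness is only comparable within one flow map.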

Source code

# -*- coding: utf-8 -*-
"""
Created on Mon May 29 15:30:41 2023

@author: cai-mj
"""

import numpy as np
import cv2
from matplotlib import pyplot as plt
import argparse


def task_one():
    """
    sparse optical flow and trajectory tracking
    """
    cap = cv2.VideoCapture("pedestrian.avi")
    # --------Your code--------
    # cap = cv2.VideoCapture("images/kk 2022-01-23 18-21-21.mp4")
    # cap = cv2.VideoCapture(0)

    # Parameters for corner detection
    feature_params = dict(
        maxCorners=100,    # maximum number of corners to return
        qualityLevel=0.3,  # quality threshold: a higher value keeps only stronger corners, so fewer survive
        minDistance=7      # minimum distance between corners; weaker corners near a strong one are suppressed
    )

    # Parameters for the Lucas-Kanade algorithm
    lk_params = dict(
        winSize=(10, 10),  # size of the search window around each point
        maxLevel=2         # maximum pyramid level
    )

    # Read the first frame
    ret, prev_img = cap.read()
    prev_img_gray = cv2.cvtColor(prev_img, cv2.COLOR_BGR2GRAY)

    # Detect corners first to get the points to track
    prev_points = cv2.goodFeaturesToTrack(prev_img_gray, mask=None, **feature_params)

    # Blank canvas: new segments are drawn here first, then overlaid on the original frame
    mask_img = np.zeros_like(prev_img)

    while True:
        ret, curr_img = cap.read()
        if curr_img is None:
            print("video is over...")
            break
        curr_img_gray = cv2.cvtColor(curr_img, cv2.COLOR_BGR2GRAY)

        # Track the points with optical flow
        curr_points, status, err = cv2.calcOpticalFlowPyrLK(prev_img_gray,
                                                            curr_img_gray,
                                                            prev_points,
                                                            None,
                                                            **lk_params)
        # print(status.shape)  # each entry is 1 or 0: 1 = tracked, 0 = lost
        good_new = curr_points[status == 1]
        good_old = prev_points[status == 1]

        # Draw the new segment and the current position of each point
        for i, (new, old) in enumerate(zip(good_new, good_old)):
            a, b = new.ravel()
            c, d = old.ravel()
            mask_img = cv2.line(mask_img, pt1=(int(a), int(b)), pt2=(int(c), int(d)), color=(0, 0, 255), thickness=1)
            mask_img = cv2.circle(mask_img, center=(int(a), int(b)), radius=2, color=(255, 0, 0), thickness=2)

        # Overlay the canvas on the current frame (the display call is commented out)
        img = cv2.add(curr_img, mask_img)
        # cv2.imshow("desct", img)
        if cv2.waitKey(60) & 0xFF == ord('q'):
            print("Bye...")
            break

        # Update the previous frame and the points to track
        prev_img_gray = curr_img_gray.copy()
        prev_points = good_new.reshape(-1, 1, 2)
        if len(prev_points) < 5:
            # Too few points are still tracked: detect corners again on the current frame
            prev_points = cv2.goodFeaturesToTrack(curr_img_gray, mask=None, **feature_params)
            mask_img = np.zeros_like(prev_img)  # start a fresh canvas (trajectories drawn so far are dropped)

    cv2.imwrite("trajectory.png", img)


def task_two():
    """
    dense optical flow and pedestrian segmentation
    """
    img1 = cv2.imread('frame01.png')
    img2 = cv2.imread('frame02.png')
    # --------Your code--------
    # cap = cv.VideoCapture(cv.samples.findFile("vtest.avi"))

    # Background subtractor used for the foreground mask
    fgbg = cv2.createBackgroundSubtractorMOG2()

    frame1 = img1
    prvs = cv2.cvtColor(frame1, cv2.COLOR_BGR2GRAY)
    hsv = np.zeros_like(frame1)
    hsv[..., 1] = 255  # full saturation

    frame2 = img2
    fgmask = fgbg.apply(frame2)
    next_gray = cv2.cvtColor(frame2, cv2.COLOR_BGR2GRAY)

    # Dense optical flow (Farneback): pyr_scale=0.5, levels=3, winsize=15,
    # iterations=3, poly_n=5, poly_sigma=1.2, flags=0
    flow = cv2.calcOpticalFlowFarneback(prvs, next_gray, None, 0.5, 3, 15, 3, 5, 1.2, 0)

    # Hue encodes the flow direction, value encodes the flow magnitude
    mag, ang = cv2.cartToPolar(flow[..., 0], flow[..., 1])
    hsv[..., 0] = ang * 180 / np.pi / 2
    hsv[..., 2] = cv2.normalize(mag, None, 0, 255, cv2.NORM_MINMAX)
    bgr = cv2.cvtColor(hsv, cv2.COLOR_HSV2BGR)
    # cv2.imshow('frame2', bgr)

    cv2.imwrite('frame01_flow.png', fgmask)  # foreground mask from MOG2
    cv2.imwrite('frame01_person.png', bgr)   # HSV visualization of the flow
    # cv2.imwrite("frame01_flow.png", img_flow)
    # cv2.imwrite("frame01_person.png", img_mask)


if __name__ == '__main__':
    task_one()
    task_two()
