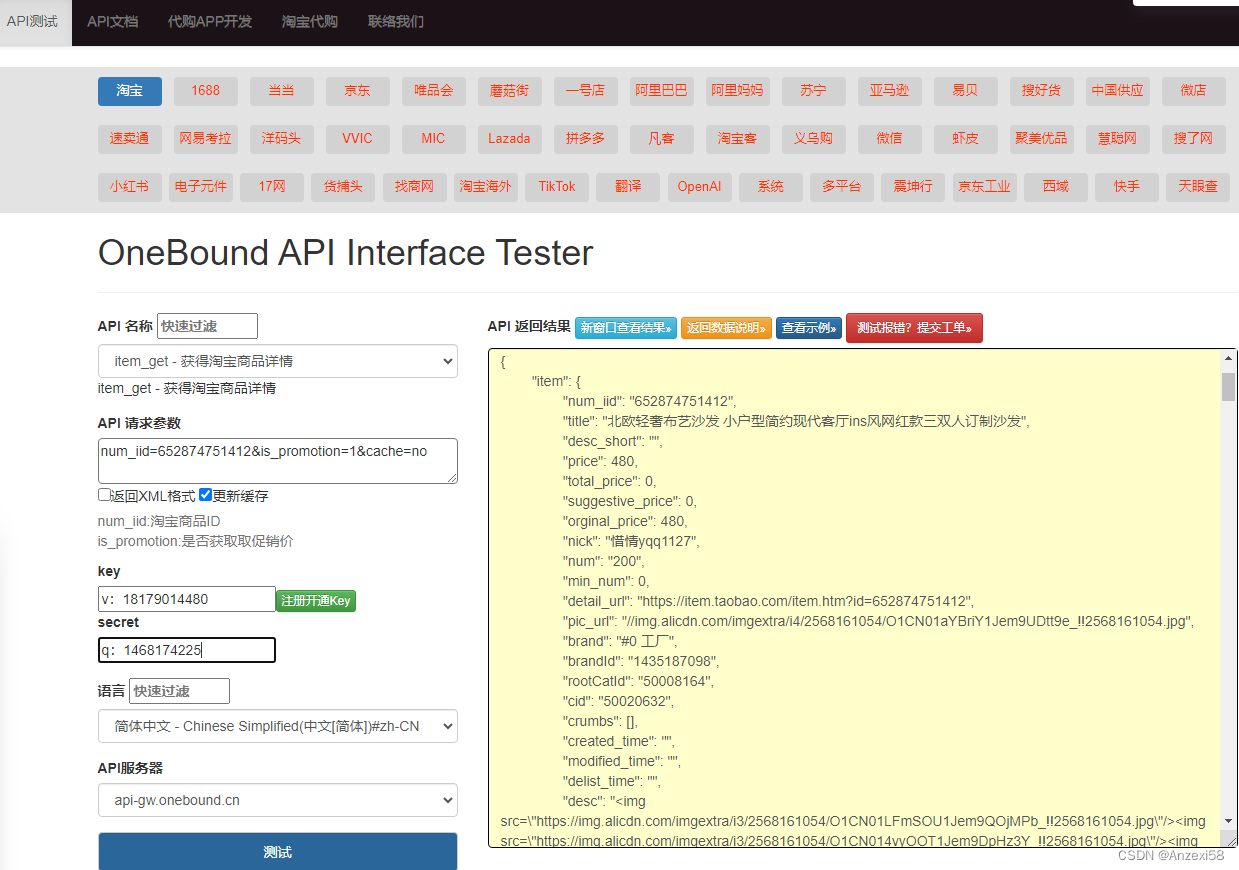

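The samplers are essentially all taken from k-diffusion, and the model code comes from sgm in Stability's own generative-models project. This differs from Fooocus, which depends on the models in ComfyUI under the hood. ComfyUI parses checkpoints via load_state_dict, through the load_checkpoint_guess_config function, and webui has this function as well.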

webui imports generative-models in paths, and sd_models_config defines two configs, config_sdxl and config_sdxl_refiner. The model is initialized with DiffusionEngine from models/diffusion in sgm, and the refiner and base models are almost identical.
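To make the idea of choosing between the base and refiner configs concrete, here is a minimal sketch of picking a config label by looking at which keys a checkpoint's state dict contains. The two marker key names and the fallback label are assumptions made for illustration; they are not taken from the notes above and should be checked against the real code.

```python
from typing import Mapping

# Assumed marker keys (illustrative only): one text-encoder weight that is
# expected in an SDXL base checkpoint, one expected in an SDXL refiner.
SDXL_BASE_KEY = "conditioner.embedders.1.model.ln_final.weight"
SDXL_REFINER_KEY = "conditioner.embedders.0.model.ln_final.weight"


def guess_config_name(state_dict: Mapping[str, object]) -> str:
    """Return a config label depending on which marker key is present."""
    if SDXL_BASE_KEY in state_dict:
        return "sdxl"
    if SDXL_REFINER_KEY in state_dict:
        return "sdxl_refiner"
    return "other"  # hypothetical fallback label for everything else


# Only the key names matter here, so the values can be placeholders.
print(guess_config_name({SDXL_REFINER_KEY: None}))
```

Checking the base key first matters in this sketch, because a checkpoint could in principle carry both keys.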

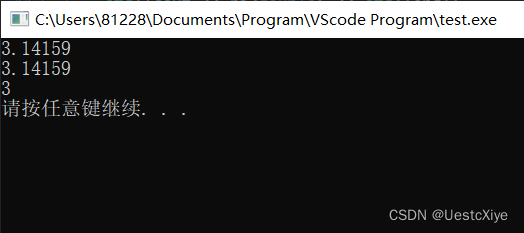

python webui.py --port 6006 --no-half-vae

webui()->

initialize()->

initialize_rest()->

- sd_samplers.py -> set_samplers()->sd_samplers_kdiffusion.py->

- extensions.py -> list_extensions()

- initialize_util.py -> restore_config_state_file()

- sd_models.py -> list_models()

- localization.py -> list_localizations()

- scripts.load_scripts() -> scripts.py

-- scripts_txt2img=ScriptRunner()/scripts_img2img=ScriptRunner()/scripts_postpro=scripts_postprocessing.ScriptPostprocessingRunner()(scripts_postprocessing.py)

- modelloader.py -> load_upscaler()

- sd_vae.py -> refresh_vae_list()

- textual_inversion/textual_inversion.py -> list_textual_inversion_templates()

- script_callbacks.py -> on_list_optimizers(sd_hijack_optimizations.list_optimizers)

- sd_hijack.py -> list_optimizers()

- sd_unet.py -> list_unets()

- load_model -> shared.py

- shared_items.py -> reload_hypernetworks() # hypernetworks are rarely used nowadays

- ui_extra_networks.py -> initialize()/register_default_pages()

- extra_networks.py -> initialize()/register_default_extra_networks()

ui.py -> ui.create_ui()

ui.py

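Some basic parameters are also initialized here. webui contains quite a lot of code devoted to UI design.

ui_components.py: custom UI components

shared_items.py: items that are reused in several places

Below is a FormRow: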

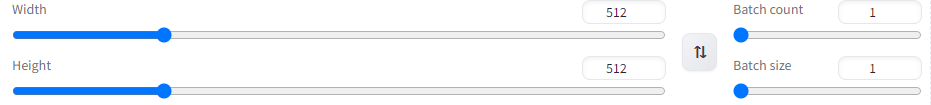

elif category == "dimensions":

with FormRow():

with gr.Column(elem_id="txt2img_column_size", scale=4):

width = gr.Slider(minimum=64, maximum=2048, step=8, label="Width", value=512, elem_id="txt2img_width")

height = gr.Slider(minimum=64, maximum=2048, step=8, label="Height", value=512, elem_id="txt2img_height")

....

Entry point of the call:

txt2img_args = dict(

fn=wrap_gradio_gpu_call(modules.txt2img.txt2img, extra_outputs=[None, '', '']),

_js="submit",

inputs=[

dummy_component,

toprow.prompt,

toprow.negative_prompt,

toprow.ui_styles.dropdown,

steps,

sampler_name,

batch_count,

batch_size,

cfg_scale,

height,

width,

enable_hr,

denoising_strength,

hr_scale,

hr_upscaler,

hr_second_pass_steps,

hr_resize_x,

hr_resize_y,

hr_checkpoint_name,

hr_sampler_name,

hr_prompt,

hr_negative_prompt,

override_settings,

] + custom_inputs,

txt2img.py

p = processing.StableDiffusionProcessingTxt2Img(sd_model,prompt,negative_prompt,sampler_name,...)->

processed = processing.process_images(p)
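The entry point above wraps `modules.txt2img.txt2img` in `wrap_gradio_gpu_call(..., extra_outputs=[None, '', ''])`. A rough way to picture such a wrapper, assuming its job is to keep exceptions away from the UI and return placeholder outputs instead, is the sketch below. `wrap_call` is a hypothetical stand-in, not the real webui function, and the real wrapper may do considerably more.

```python
import html
from typing import Any, Callable, List, Optional


def wrap_call(fn: Callable[..., list],
              extra_outputs: Optional[List[Any]] = None) -> Callable[..., list]:
    """Hypothetical wrapper: run fn, and on failure return placeholder outputs."""

    def wrapped(*args: Any, **kwargs: Any) -> list:
        try:
            return list(fn(*args, **kwargs))
        except Exception as exc:
            # Fill the regular output slots with placeholders and append an
            # HTML error message as the last output.
            fallback = list(extra_outputs) if extra_outputs is not None else [None, ""]
            message = html.escape(f"{type(exc).__name__}: {exc}")
            return fallback + [f"<div class='error'>{message}</div>"]

    return wrapped


def failing_txt2img(*args: Any) -> list:
    raise RuntimeError("out of memory")


print(wrap_call(failing_txt2img, extra_outputs=[None, "", ""])("a prompt"))
```

The placeholder list has to match the number of UI outputs the callback normally fills, which is presumably why the entry point passes it explicitly.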

processing.py

res = process_images_inner(p)

- samples_ddim = p.sample(conditioning,unconditional_conditioning,seeds,subseeds,subseed_strength,prompts)-> StableDiffusionProcessingTxt2Img.sample()

-- self.sampler = sd_samplers.create_sampler(self.sampler_name,self.sd_model)

-- samples = self.sampler.sample(c,uc,image_conditioning=self.txt2img_image_conditioning(x))
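The `create_sampler(name, model)` step is a lookup from a sampler name to a constructor. Below is a minimal sketch of that pattern, assuming a registry keyed by display name. `SamplerData`, `DummySampler`, and the registry contents are illustrative assumptions and do not reproduce the real module.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class DummySampler:
    """Stand-in for a sampler object: remembers which function it would run."""
    funcname: str
    model: object


@dataclass
class SamplerData:
    name: str
    constructor: Callable[[object], DummySampler]
    aliases: List[str] = field(default_factory=list)


# Illustrative registry entries; names and aliases are assumptions.
all_samplers = [
    SamplerData("DPM++ 2M", lambda m: DummySampler("sample_dpmpp_2m", m), ["k_dpmpp_2m"]),
    SamplerData("Euler a", lambda m: DummySampler("sample_euler_ancestral", m), ["k_euler_a"]),
]
all_samplers_map: Dict[str, SamplerData] = {s.name: s for s in all_samplers}


def create_sampler(name: str, model: object) -> DummySampler:
    """Look up the sampler by display name and build it for the given model."""
    config = all_samplers_map.get(name)
    if config is None:
        raise ValueError(f"bad sampler name: {name}")
    return config.constructor(model)


sampler = create_sampler("DPM++ 2M", model="sd_model placeholder")
print(sampler.funcname)
```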

sd_samplers_kdiffusion.py

sample()->

samples = self.launch_sampling(steps,lambda:self.func(self.model_wrap_cfg,x,self.sampler_extra_args,...))

model_wrap_cfg: CFGDenoiserKDiffusion -> sd_samplers_cfg_denoiser.CFGDenoiser

sd_samplers_common.py

func() = sample_dpmpp_2m ->

repositories/k-diffusion/k_diffusion/sampling.py

sample_dpmpp_2m()->

- denoised = model(x,sigmas[i]*s_in,**extra_args)->

...
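The loop around `denoised = model(x, sigmas[i]*s_in, **extra_args)` can be sketched in plain Python as follows. This is a simplified rendering of the DPM++ 2M update on lists of floats, written from my understanding of the algorithm. The real function works on batched tensors and also handles callbacks and progress reporting, and the name `sample_dpmpp_2m_sketch` is made up here.

```python
import math
from typing import Callable, List, Optional, Sequence

Vector = List[float]


def sample_dpmpp_2m_sketch(model: Callable[[Vector, float], Vector],
                           x: Vector,
                           sigmas: Sequence[float]) -> Vector:
    """Simplified DPM++ 2M loop; sigmas must decrease and may end at 0."""
    old_denoised: Optional[Vector] = None
    for i in range(len(sigmas) - 1):
        sigma, sigma_next = sigmas[i], sigmas[i + 1]
        denoised = model(x, sigma)  # the model predicts the clean sample
        # With t = -log(sigma) and h = t_next - t, exp(-h) = sigma_next / sigma.
        ratio = sigma_next / sigma
        if old_denoised is None or sigma_next == 0:
            target = denoised  # first-order step
        else:
            # Second-order multistep correction using the previous prediction.
            h = math.log(sigma) - math.log(sigma_next)
            h_last = math.log(sigmas[i - 1]) - math.log(sigma)
            r = h_last / h
            target = [(1 + 1 / (2 * r)) * d - (1 / (2 * r)) * od
                      for d, od in zip(denoised, old_denoised)]
        x = [ratio * xi + (1 - ratio) * ti for xi, ti in zip(x, target)]
        old_denoised = denoised
    return x


# Toy denoiser that always predicts the same clean sample.
clean = [0.5, -0.25]
result = sample_dpmpp_2m_sketch(lambda x, sigma: clean, [10.0, -3.0],
                                [14.6146, 5.0, 1.0, 0.0])
print(result)
```

With a denoiser that always returns the same clean sample, the final step at sigma 0 lands on that sample, which is a handy sanity check for the update rule.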

# This is where denoising actually produces the image

modules/sd_samplers_cfg_denoiser.py

model = CFGDenoiser()->

forward(x:2x4x128x128, sigma:[14.6146,14.6146], uncond:ScheduledPromptConditioning, cond:MulticondLearnedConditioning, cond_scale:7, s_min_uncond:0, image_cond:2x5x1x1)->

denoised:2x4x128x128
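The `cond_scale` argument of `forward()` is the classifier-free guidance scale. The core combination of the conditional and unconditional predictions can be sketched as below. This shows only the standard CFG formula on flat lists; the real `forward()` also handles batching, prompt weights, and image conditioning, and `combine_cfg` is a name invented for this sketch.

```python
from typing import List


def combine_cfg(denoised_cond: List[float],
                denoised_uncond: List[float],
                cond_scale: float) -> List[float]:
    """Standard CFG: move from the uncond prediction towards the cond one."""
    return [u + (c - u) * cond_scale
            for c, u in zip(denoised_cond, denoised_uncond)]


print(combine_cfg([1.0, 2.0], [0.0, 1.0], cond_scale=7.0))  # -> [7.0, 8.0]
```

A scale of 1 reproduces the conditional prediction unchanged, and larger values push the result further away from the unconditional one.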

# The UNet prediction is entirely encapsulated here

modules/sd_models.py, which mainly does the following:

reload_model_weights()->

sd_model = reuse_model_from_already_loaded(sd_model,checkpoint_info,...)

load_model()
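The "reuse if already loaded, otherwise load" flow above can be pictured as a small cache keyed by checkpoint name. The sketch below is an assumption about the general pattern only. `LoadedModels`, its `limit` parameter, and the least-recently-used eviction are hypothetical and not taken from webui.

```python
from typing import Callable, List, Tuple


class LoadedModels:
    """Keeps up to `limit` models in memory, most recently used last."""

    def __init__(self, loader: Callable[[str], object], limit: int = 1) -> None:
        self._loader = loader
        self._limit = limit
        self._loaded: List[Tuple[str, object]] = []

    def get(self, checkpoint_name: str) -> object:
        # Reuse path: the checkpoint is already in memory.
        for index, (name, model) in enumerate(self._loaded):
            if name == checkpoint_name:
                self._loaded.append(self._loaded.pop(index))
                return model
        # Load path: read the checkpoint and evict the least recently used.
        model = self._loader(checkpoint_name)
        self._loaded.append((checkpoint_name, model))
        while len(self._loaded) > self._limit:
            self._loaded.pop(0)
        return model


load_log: List[str] = []


def fake_loader(name: str) -> object:
    load_log.append(name)  # record each real load for inspection
    return {"checkpoint": name}


cache = LoadedModels(fake_loader, limit=2)
for requested in ["base", "refiner", "base"]:
    cache.get(requested)
print(load_log)  # -> ['base', 'refiner']
```

With a limit of 2, switching back and forth between a base and a refiner checkpoint does not trigger a reload in this sketch.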

....

modules/sd_samplers_common.py

sd_models.reload_model_weights(refiner_checkpoint_info)

cfg_denoiser.update_inner_model()->

modules/sd_samplers_cfg_denoiser.py

forward()->
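The refiner path above reloads the weights with `refiner_checkpoint_info` part-way through sampling. One way to picture the trigger is a check on the fraction of completed steps, as in the sketch below. The function name, the `switch_at` parameter, and the rule of switching once the completed ratio reaches the threshold are assumptions made for illustration.

```python
def should_switch_to_refiner(step: int, total_steps: int, switch_at: float) -> bool:
    """Return True once the completed fraction of steps reaches switch_at."""
    if total_steps <= 0:
        return False
    if switch_at <= 0 or switch_at >= 1:
        # Assumption: out-of-range values mean the refiner is disabled.
        return False
    return step / total_steps >= switch_at


# With 10 steps and a threshold of 0.8, the switch happens at step 8.
print([s for s in range(11) if should_switch_to_refiner(s, 10, 0.8)])
```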