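`torch.pixel_shuffle()` is a commonly used upsampling method in PyTorch, but it is not exactly the same as TensorFlow's `depth_to_space`. The two look functionally similar, yet they differ in a subtle way: they order the channels differently whenever the output has more than one channel.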
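The difference comes down to channel ordering, which a few lines of index arithmetic can illustrate. The sketch below does not call either library. The helper names `torch_source_channel`, `tf_source_channel` and `mappings_agree` are made up for illustration, and the formulas reflect my reading of the documented layouts: PyTorch keeps the r*r sub-pixel channels of one output channel contiguous, while `depth_to_space` (NHWC) puts the block offset in the high-order part of the channel index.

```python
def torch_source_channel(c, i, j, r):
    """Input channel that torch.pixel_shuffle reads for output channel c
    at sub-pixel offset (i, j) inside an r x r block."""
    return c * r * r + i * r + j


def tf_source_channel(c, i, j, r, out_channels):
    """Input channel that tf.nn.depth_to_space (NHWC) reads for the same
    output location; the block offset is the high-order part of the index."""
    return (i * r + j) * out_channels + c


def mappings_agree(r, out_channels):
    """True if both ops read the same input channel for every output location."""
    return all(
        torch_source_channel(c, i, j, r) == tf_source_channel(c, i, j, r, out_channels)
        for c in range(out_channels)
        for i in range(r)
        for j in range(r)
    )


print(mappings_agree(r=2, out_channels=1))  # True: single output channel
print(mappings_agree(r=2, out_channels=3))  # False: e.g. 12 input channels -> 3 output channels
```

With one output channel the two mappings coincide, and with three they do not.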

def tf_pixelshuffle(input, upscale_factor):

temp = []

depth = upscale_factor *upscale_factor

channels = input.shape.as_list()[-1] // depth

for i in range(channels):

out_ = tf.nn.depth_to_space(input=input[:,:, :,i*depth:(i+1)*depth], block_size=upscale_factor)

temp.append(out_)

out = tf.concat(temp, axis=-1)

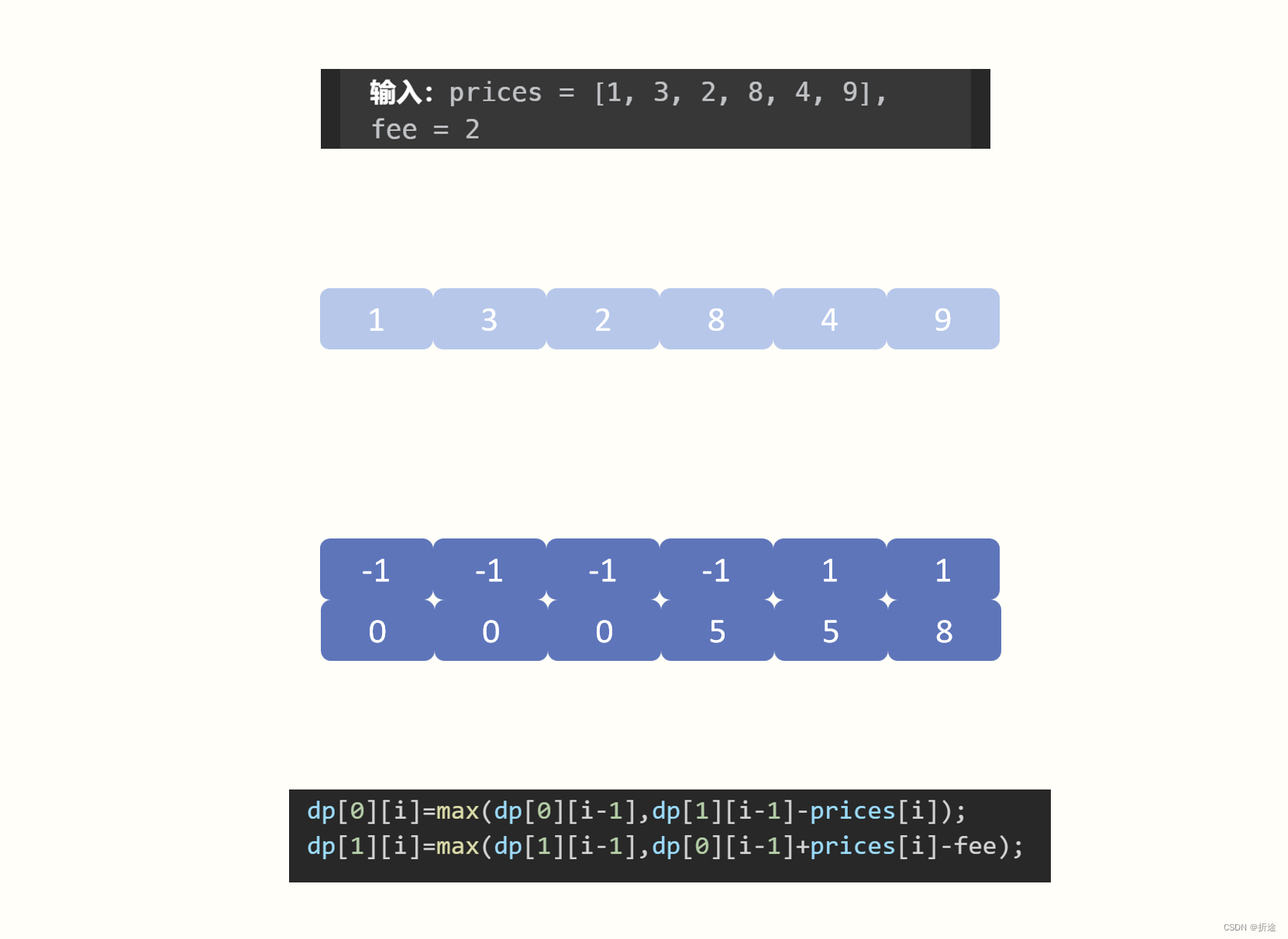

return out因为,有人发现在单通道的时候是depth_to_space和pixel_shuffle结果是一样的,所以拆分出来计算好在合并就行,这样速度基本上没有增加多少,亲测速度也是很快的,比从头开始实现pixel_shuffle是快非常多的。
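Why the slicing works can be checked with index arithmetic alone. In this sketch the helper names are hypothetical, and the `depth_to_space` formula is the documented NHWC layout as I understand it. Each slice of r*r channels is treated as a `depth_to_space` call with a single output channel, and the resulting source channel is compared with the one `torch.pixel_shuffle` would read.

```python
def depth_to_space_source(c, i, j, r, out_channels):
    # NHWC depth_to_space: block offset (i, j) is the high-order part of the channel index.
    return (i * r + j) * out_channels + c


def sliced_source(c, i, j, r):
    """Source channel when output channel c is produced by running
    depth_to_space on the slice [c*r*r, (c+1)*r*r) and concatenating."""
    start = c * r * r  # first channel of the slice
    local = depth_to_space_source(0, i, j, r, out_channels=1)  # index within the slice
    return start + local


def pixel_shuffle_source(c, i, j, r):
    # torch.pixel_shuffle: the r*r sub-pixel channels of one output channel are contiguous.
    return c * r * r + i * r + j


r, out_channels = 2, 3
matches = all(
    sliced_source(c, i, j, r) == pixel_shuffle_source(c, i, j, r)
    for c in range(out_channels)
    for i in range(r)
    for j in range(r)
)
print(matches)  # True
```

Because every slice has exactly one output channel, the ordering difference between the two ops never comes into play.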

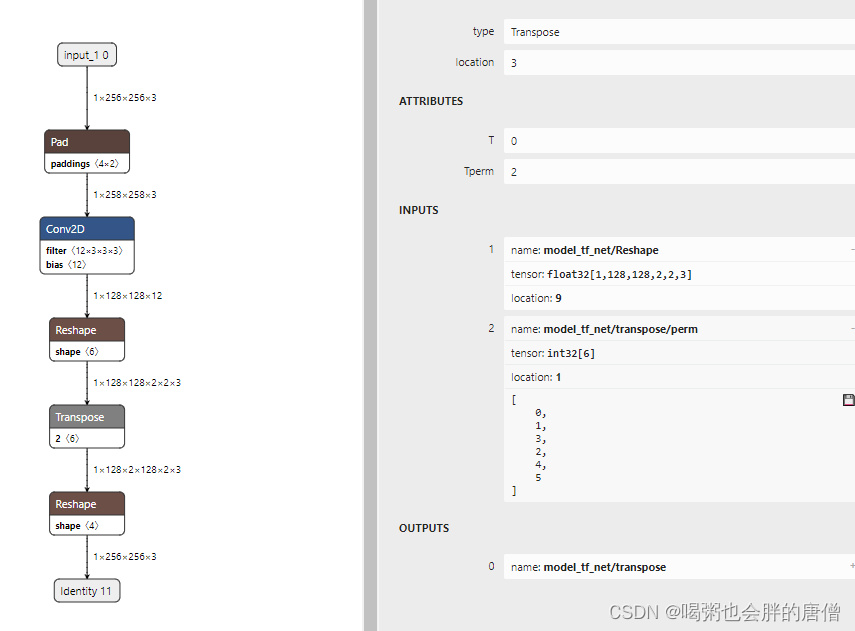

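If you use a from-scratch implementation like the one below, the converted TFLite model cannot run on a phone, because the `tf.transpose` has too many dimensions. TFLite on mobile does not support a 6-dimensional transpose: beyond 5 dimensions the converter produces a Flex layer, and Flex layers are not supported there.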
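To make the dimension count concrete, this sketch lists the intermediate shapes of a reshape/transpose/reshape pixel shuffle on an NHWC tensor. The function name and the example sizes are illustrative assumptions. The only point is that the middle tensors are 6-D, whereas the slicing approach never leaves 4-D.

```python
def reshape_transpose_shapes(n, h, w, c, r):
    """Shapes seen by a reshape -> transpose -> reshape pixel shuffle (NHWC)."""
    c_out = c // (r * r)
    split = (n, h, w, c_out, r, r)  # channel axis split into three axes
    perm = (0, 1, 4, 2, 5, 3)  # interleave block rows/cols with h/w
    transposed = tuple(split[p] for p in perm)
    merged = (n, h * r, w * r, c_out)  # block axes folded into h and w
    return [(n, h, w, c), split, transposed, merged]


shapes = reshape_transpose_shapes(n=1, h=4, w=4, c=12, r=2)
for s in shapes:
    print(len(s), s)
max_rank = max(len(s) for s in shapes)
print("max rank:", max_rank)  # max rank: 6
```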

    import tensorflow as tf

    def pixel_shuffle(x, upscale_factor):
        _, height, width, channels = x.shape
        channel_split = channels // (upscale_factor ** 2)
        # Split the channel axis as (channel_split, r, r), the order torch.pixel_shuffle uses.
        # Splitting as (r, r, channel_split) would reproduce tf.nn.depth_to_space instead.
        x = tf.reshape(x, (-1, height, width, channel_split, upscale_factor, upscale_factor))
        # 6-D transpose to (batch, height, r, width, r, channel_split).
        x = tf.transpose(x, perm=[0, 1, 4, 2, 5, 3])
        # Merge the block axes into height and width to get the pixel-shuffled output.
        x = tf.reshape(x, (-1, height * upscale_factor, width * upscale_factor, channel_split))
        return x

Let's test it:

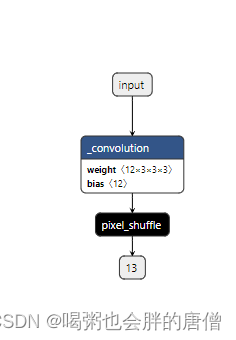

Create a PyTorch model:

    import torch
    import torch.nn as nn

    class Net(nn.Module):
        def __init__(self):
            super().__init__()
            self.conv = nn.Conv2d(in_channels=3,
                                  out_channels=12,
                                  kernel_size=3,
                                  stride=2,
                                  padding=1)

        def forward(self, input):
            x = self.conv(input)
            out = torch.pixel_shuffle(x, 2)
            return out

Visualized:

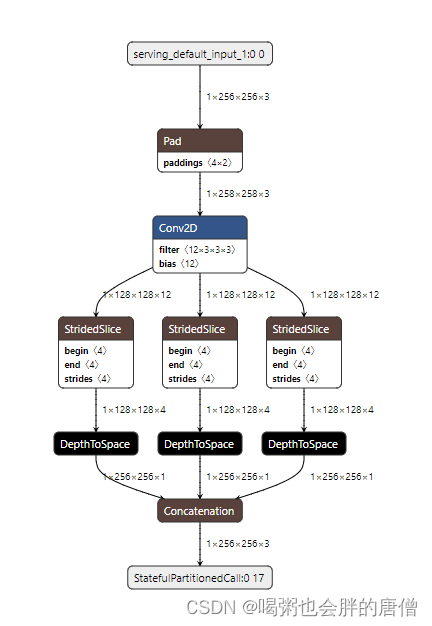

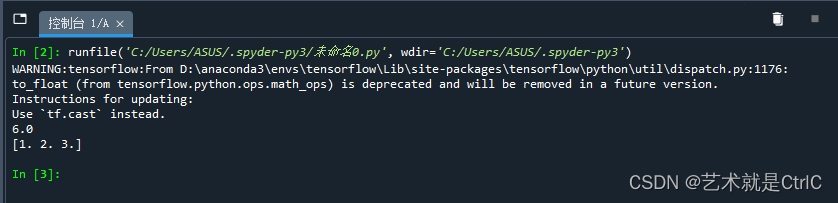
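For the `Net` model above, the tensor shapes can be worked out by hand. The sketch below does that arithmetic in plain Python. The helper names and the 8x8 input size are assumptions chosen for illustration, and the convolution formula is the standard one for a convolution without dilation.

```python
def conv2d_out_size(size, kernel_size, stride, padding):
    """Output length along one spatial axis for a conv without dilation."""
    return (size + 2 * padding - kernel_size) // stride + 1


def net_output_shape(n, h, w, upscale_factor=2):
    """NCHW shapes through Conv2d(3 -> 12, k=3, s=2, p=1) followed by pixel_shuffle."""
    conv_channels = 12
    h_conv = conv2d_out_size(h, kernel_size=3, stride=2, padding=1)
    w_conv = conv2d_out_size(w, kernel_size=3, stride=2, padding=1)
    conv_shape = (n, conv_channels, h_conv, w_conv)
    r = upscale_factor
    out_shape = (n, conv_channels // (r * r), h_conv * r, w_conv * r)
    return conv_shape, out_shape


conv_shape, out_shape = net_output_shape(n=1, h=8, w=8)
print(conv_shape)  # (1, 12, 4, 4)
print(out_shape)   # (1, 3, 8, 8)
```

The stride-2 convolution halves the spatial size and the pixel shuffle with factor 2 restores it, so for even input sizes the output has the same shape as the 3-channel input.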

Result of the conversion using `tf_pixelshuffle`:

Result of the conversion using `pixel_shuffle`:
