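Apache DolphinScheduler is an open-source distributed task scheduling system that helps users automate the scheduling and management of complex tasks. It supports many task types and can run on a single machine or in a cluster. This article describes how to automate the packaging of DolphinScheduler and how to deploy it in standalone and cluster mode.

Automated packaging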

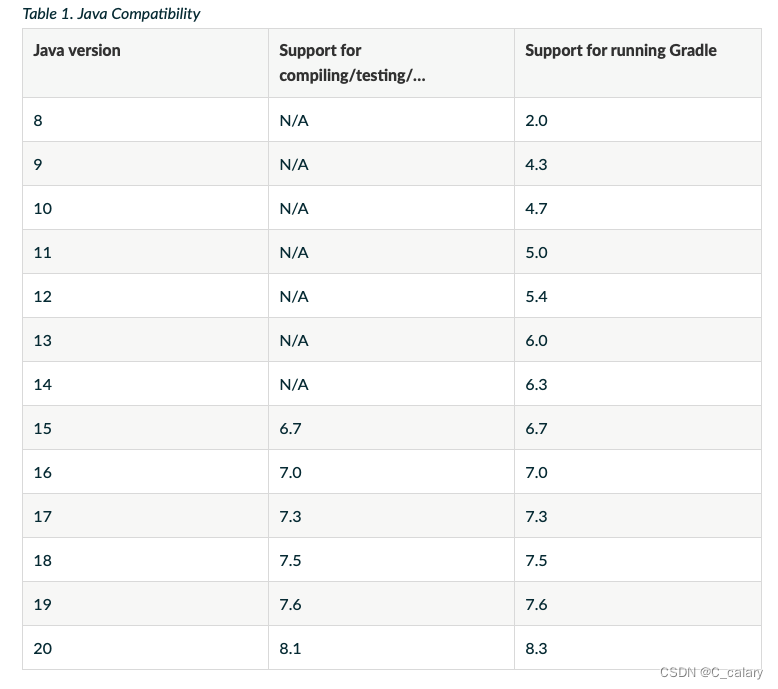

Required environment: Maven, JDK
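Before packaging, it can help to confirm that the required tools are on the PATH. The following is a minimal sketch, not part of DolphinScheduler: the function name `check_tools` is hypothetical, and `git` is added to the list only because the packaging script clones the repository.

```shell
#!/bin/sh
# check_tools: hypothetical helper, not part of DolphinScheduler.
# Reports every command from the argument list that is not on PATH
# and returns non-zero if at least one is missing.
check_tools() {
    missing=0
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            echo "missing: $tool" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Example call (kept as a comment so the sketch has no side effects):
# check_tools git mvn java || exit 1
```

Running the check first means a missing tool is reported up front instead of failing halfway through a long Maven build.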

Run the following shell script to pull the code and build the package. The package is written to: /opt/action/dolphinscheduler/dolphinscheduler-dist/target/apache-dolphinscheduler-dev-SNAPSHOT-bin.tar.gz

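# Pull the code (the function header is missing from the original; the name clone_code is assumed)

clone_code(){

sudo su - root <<EOF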

cd /opt/action

git clone git@github.com:apache/dolphinscheduler.git

cd dolphinscheduler

git fetch origin dev

git checkout -b dev origin/dev

#git log --oneline

EOF

}

# Build the package

build(){

sudo su - root <<EOF

cd /opt/action/dolphinscheduler

mvn -B clean install -Prelease -Dmaven.test.skip=true -Dcheckstyle.skip=true -Dmaven.javadoc.skip=true

EOF

}

Standalone deployment

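1. Environment required to run DolphinScheduler

Required environment: JDK, ZooKeeper, MySQL

Initialize the ZooKeeper environment (a recent ZooKeeper release is recommended: v3.8 or later)

The installation package can be downloaded from the official site: https://zookeeper.apache.org/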

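# Initialize ZooKeeper (the function header is missing from the original; the name init_zookeeper is assumed)

init_zookeeper(){

sudo su - root <<EOF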

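# Go to /opt (choose the installation directory as you see fit)

cd /opt

# Extract the archive

tar -xvf apache-zookeeper-3.8.0-bin.tar.gz

# Rename the directory

sudo mv apache-zookeeper-3.8.0-bin zookeeper

# Enter the zookeeper directory

cd zookeeper/

# Create the zkData directory under /opt/zookeeper to hold the ZooKeeper data files

mkdir zkData

# Enter the conf directory

cd conf/

# Copy zoo_sample.cfg to zoo.cfg, because ZooKeeper only reads the zoo.cfg configuration file

cp zoo_sample.cfg zoo.cfg

# Update the configuration in zoo.cfg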

sed -i 's/\/tmp\/zookeeper/\/opt\/zookeeper\/zkData/g' zoo.cfg

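# Stop any ZooKeeper service that is already running (the dollar sign is escaped so the unquoted heredoc passes it to awk unchanged)

ps -ef|grep QuorumPeerMain|grep -v grep|awk '{print "kill -9 " \$2}' |sh

# Start the ZooKeeper service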

sh /opt/zookeeper/bin/zkServer.sh start

EOF

}

Installing JDK and MySQL is not covered here.
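After editing zoo.cfg, a quick check that `dataDir` points at an existing directory can catch path mistakes before ZooKeeper is started. The following is a minimal sketch: the helper name `zk_data_dir` is hypothetical, and it only reads the file and prints the value.

```shell
#!/bin/sh
# zk_data_dir: hypothetical helper, not part of ZooKeeper.
# Prints the dataDir value from the zoo.cfg file given as $1 and fails
# if the key is absent or the directory does not exist.
zk_data_dir() {
    cfg=$1
    # Take the last dataDir= line in case the key appears more than once.
    dir=$(sed -n 's/^dataDir=//p' "$cfg" | tail -n 1)
    [ -n "$dir" ] || { echo "dataDir is not set in $cfg" >&2; return 1; }
    [ -d "$dir" ] || { echo "dataDir $dir does not exist" >&2; return 1; }
    echo "$dir"
}

# Example call (kept as a comment so the sketch has no side effects):
# zk_data_dir /opt/zookeeper/conf/zoo.cfg
```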

2. Initial configuration

2.1 Preparing the configuration files

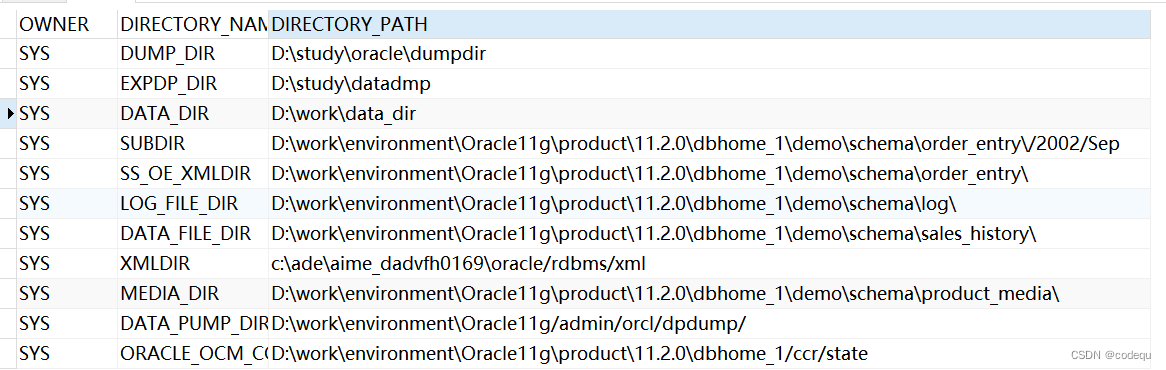

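The initialization files must be placed in a fixed directory (this article uses /opt/action/tool as the example).

- 2.1.1 Create the directories

mkdir -p /opt/action/tool

mkdir -p /opt/Dsrelease

- 2.1.2 Create the initialization file common.properties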

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

# user data local directory path, please make sure the directory exists and have read write permissions

data.basedir.path=/tmp/dolphinscheduler

# resource storage type: HDFS, S3, NONE

resource.storage.type=HDFS

# resource store on HDFS/S3 path, resource file will store to this hadoop hdfs path, self configuration, please make sure the directory exists on hdfs and have read write permissions. "/dolphinscheduler" is recommended

resource.upload.path=/dolphinscheduler

# whether to startup kerberos

hadoop.security.authentication.startup.state=false

# java.security.krb5.conf path

java.security.krb5.conf.path=/opt/krb5.conf

# login user from keytab username

login.user.keytab.username=hdfs-mycluster@ESZ.COM

# login user from keytab path

login.user.keytab.path=/opt/hdfs.headless.keytab

# kerberos expire time, the unit is hour

kerberos.expire.time=2

# resource view suffixs

#resource.view.suffixs=txt,log,sh,bat,conf,cfg,py,java,sql,xml,hql,properties,json,yml,yaml,ini,js

# if resource.storage.type=HDFS, the user must have the permission to create directories under the HDFS root path

hdfs.root.user=root

# if resource.storage.type=S3, the value like: s3a://dolphinscheduler; if resource.storage.type=HDFS and namenode HA is enabled, you need to copy core-site.xml and hdfs-site.xml to conf dir

fs.defaultFS=file:///

aws.access.key.id=minioadmin

aws.secret.access.key=minioadmin

aws.region=us-east-1

aws.endpoint=http://localhost:9000

# resourcemanager port, the default value is 8088 if not specified

resource.manager.httpaddress.port=8088

# if resourcemanager HA is enabled, please set the HA IPs; if resourcemanager is single, keep this value empty

yarn.resourcemanager.ha.rm.ids=192.168.xx.xx,192.168.xx.xx

# if resourcemanager HA is enabled or not use resourcemanager, please keep the default value; If resourcemanager is single, you only need to replace aws2 to actual resourcemanager hostname

yarn.application.status.address=http://aws2:%s/ws/v1/cluster/apps/%s

# job history status url when application number threshold is reached(default 10000, maybe it was set to 1000)

yarn.job.history.status.address=http://aws2:19888/ws/v1/history/mapreduce/jobs/%s

# datasource encryption enable

datasource.encryption.enable=false

# datasource encryption salt

datasource.encryption.salt=!@#$%^&*

# data quality option

data-quality.jar.name=dolphinscheduler-data-quality-dev-SNAPSHOT.jar

#data-quality.error.output.path=/tmp/data-quality-error-data

# Network IP gets priority, default inner outer

# Whether hive SQL is executed in the same session

support.hive.oneSession=false

# use sudo or not, if set true, executing user is tenant user and deploy user needs sudo permissions; if set false, executing user is the deploy user and doesn't need sudo permissions

sudo.enable=true

# network interface preferred like eth0, default: empty

#dolphin.scheduler.network.interface.preferred=

# network IP gets priority, default: inner outer

#dolphin.scheduler.network.priority.strategy=default

# system env path

#dolphinscheduler.env.path=dolphinscheduler_env.sh

# development state

development.state=false

# rpc port

alert.rpc.port=50052

# Url endpoint for zeppelin RESTful API

zeppelin.rest.url=http://localhost:8080

- 2.1.3 Create the initialization file core-site.xml

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://aws1</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>aws1:2181</value>

</property>

<property>

<name>hadoop.proxyuser.root.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.root.groups</name>

<value>*</value>

</property>

</configuration>

- 2.1.4 Create the initialization file hdfs-site.xml

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/opt/bigdata/hadoop/ha/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/opt/bigdata/hadoop/ha/dfs/data</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>aws2:50090</value>

</property>

<property>

<name>dfs.namenode.checkpoint.dir</name>

<value>/opt/bigdata/hadoop/ha/dfs/secondary</value>

</property>

<property>

<name>dfs.nameservices</name>

<value>aws1</value>

</property>

<property>

<name>dfs.ha.namenodes.aws1</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.aws1.nn1</name>

<value>aws1:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.aws1.nn2</name>

<value>aws2:8020</value>

</property>

<property>

<name>dfs.namenode.http-address.aws1.nn1</name>

<value>aws1:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.aws1.nn2</name>

<value>aws2:50070</value>

</property>

<property>

<name>dfs.datanode.address</name>

<value>aws1:50010</value>

</property>

<property>

<name>dfs.datanode.ipc.address</name>

<value>aws1:50020</value>

</property>

<property>

<name>dfs.datanode.http.address</name>

<value>aws1:50075</value>

</property>

<property>

<name>dfs.datanode.https.address</name>

<value>aws1:50475</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://aws1:8485;aws2:8485;aws3:8485/mycluster</value>

</property>

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/opt/bigdata/hadoop/ha/dfs/jn</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.aws1</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/root/.ssh/id_dsa</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

</configuration>

- 2.1.5 Upload the JAR mysql-connector-java-8.0.16.jar

- 2.1.6 Upload the JAR ojdbc8.jar
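Since the later copy step expects all five files from steps 2.1.2 to 2.1.6 in one directory, a small pre-flight check can be useful. This is a minimal sketch: the function name `check_init_files` is hypothetical, and the file names are the ones listed above.

```shell
#!/bin/sh
# check_init_files: hypothetical helper, not part of DolphinScheduler.
# Verifies that the directory given as $1 contains every initialization
# file from steps 2.1.2 to 2.1.6 and reports the ones that are missing.
check_init_files() {
    dir=$1
    status=0
    for f in common.properties core-site.xml hdfs-site.xml \
             mysql-connector-java-8.0.16.jar ojdbc8.jar; do
        if [ ! -f "$dir/$f" ]; then
            echo "missing: $dir/$f" >&2
            status=1
        fi
    done
    return "$status"
}

# Example call (kept as a comment so the sketch has no side effects):
# check_init_files /opt/action/tool || exit 1
```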

2.2 Replacing the initialization files

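# Copy the initialization files into the release (the function header is missing from the original; the name replace_init_files is assumed)

replace_init_files(){

cd /opt/Dsrelease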

sudo rm -r $today/

echo "rm -r $today"

cd /opt/release

cp $packge_tar /opt/Dsrelease

cd /opt/Dsrelease

tar -zxvf $packge_tar

mv $packge $today

p_api_lib=/opt/Dsrelease/$today/api-server/libs/

p_master_lib=/opt/Dsrelease/$today/master-server/libs/

p_worker_lib=/opt/Dsrelease/$today/worker-server/libs/

p_alert_lib=/opt/Dsrelease/$today/alert-server/libs/

p_tools_lib=/opt/Dsrelease/$today/tools/libs/

p_st_lib=/opt/Dsrelease/$today/standalone-server/libs/

p_api_conf=/opt/Dsrelease/$today/api-server/conf/

p_master_conf=/opt/Dsrelease/$today/master-server/conf/

p_worker_conf=/opt/Dsrelease/$today/worker-server/conf/

p_alert_conf=/opt/Dsrelease/$today/alert-server/conf/

p_tools_conf=/opt/Dsrelease/$today/tools/conf/

p_st_conf=/opt/Dsrelease/$today/standalone-server/conf/

cp $p0 $p4 $p_api_lib

cp $p0 $p4 $p_master_lib

cp $p0 $p4 $p_worker_lib

cp $p0 $p4 $p_alert_lib

cp $p0 $p4 $p_tools_lib

cp $p0 $p4 $p_st_lib

echo "cp $p0 $p_api_lib"

cp $p1 $p2 $p3 $p_api_conf

cp $p1 $p2 $p3 $p_master_conf

cp $p1 $p2 $p3 $p_worker_conf

cp $p1 $p2 $p3 $p_alert_conf

cp $p1 $p2 $p3 $p_tools_conf

cp $p1 $p2 $p3 $p_st_conf

echo "cp $p1 $p2 $p3 $p_api_conf"

}

define_param(){

packge_tar=apache-dolphinscheduler-dev-SNAPSHOT-bin.tar.gz

packge=apache-dolphinscheduler-dev-SNAPSHOT-bin

p0=/opt/action/tool/mysql-connector-java-8.0.16.jar

p1=/opt/action/tool/common.properties

p2=/opt/action/tool/core-site.xml

p3=/opt/action/tool/hdfs-site.xml

p4=/opt/action/tool/ojdbc8.jar

today=`date +%m%d`

}

2.3 Replacing configuration file contents

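# Rewrite configuration values (the function header is missing from the original; the name replace_config is assumed)

replace_config(){

sed -i 's/spark2/spark/g' /opt/Dsrelease/$today/worker-server/conf/dolphinscheduler_env.sh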

cd /opt/Dsrelease/$today/bin/env/

sed -i '$a\export SPRING_PROFILES_ACTIVE=permission_shiro' dolphinscheduler_env.sh

sed -i '$a\export DATABASE="mysql"' dolphinscheduler_env.sh

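sed -i '$a\export SPRING_DATASOURCE_DRIVER_CLASS_NAME="com.mysql.cj.jdbc.Driver"' dolphinscheduler_env.sh

# Customize the MySQL settings (com.mysql.cj.jdbc.Driver is the driver class of MySQL Connector/J 8.x; the old com.mysql.jdbc.Driver name is deprecated there)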

sed -i '$a\export SPRING_DATASOURCE_URL="jdbc:mysql://ctyun6:3306/dolphinscheduler?useUnicode=true&characterEncoding=UTF-8&allowMultiQueries=true&allowPublicKeyRetrieval=true"' dolphinscheduler_env.sh

sed -i '$a\export SPRING_DATASOURCE_USERNAME="root"' dolphinscheduler_env.sh

sed -i '$a\export SPRING_DATASOURCE_PASSWORD="root@123"' dolphinscheduler_env.sh

echo "JDBC configuration replaced"

# Customize the ZooKeeper settings

sed -i '$a\export REGISTRY_TYPE=${REGISTRY_TYPE:-zookeeper}' dolphinscheduler_env.sh

sed -i '$a\export REGISTRY_ZOOKEEPER_CONNECT_STRING=${REGISTRY_ZOOKEEPER_CONNECT_STRING:-ctyun6:2181}' dolphinscheduler_env.sh

echo "ZooKeeper configuration replaced"

sed -i 's/resource.storage.type=HDFS/resource.storage.type=NONE/' /opt/Dsrelease/$today/master-server/conf/common.properties

sed -i 's/resource.storage.type=HDFS/resource.storage.type=NONE/' /opt/Dsrelease/$today/worker-server/conf/common.properties

sed -i 's/resource.storage.type=HDFS/resource.storage.type=NONE/' /opt/Dsrelease/$today/alert-server/conf/common.properties

sed -i 's/resource.storage.type=HDFS/resource.storage.type=NONE/' /opt/Dsrelease/$today/api-server/conf/common.properties

sed -i 's/hdfs.root.user=root/resource.hdfs.root.user=root/' /opt/Dsrelease/$today/master-server/conf/common.properties

sed -i 's/hdfs.root.user=root/resource.hdfs.root.user=root/' /opt/Dsrelease/$today/worker-server/conf/common.properties

sed -i 's/hdfs.root.user=root/resource.hdfs.root.user=root/' /opt/Dsrelease/$today/alert-server/conf/common.properties

sed -i 's/hdfs.root.user=root/resource.hdfs.root.user=root/' /opt/Dsrelease/$today/api-server/conf/common.properties

sed -i 's/fs.defaultFS=file:/resource.fs.defaultFS=file:/' /opt/Dsrelease/$today/master-server/conf/common.properties

sed -i 's/fs.defaultFS=file:/resource.fs.defaultFS=file:/' /opt/Dsrelease/$today/worker-server/conf/common.properties

sed -i 's/fs.defaultFS=file:/resource.fs.defaultFS=file:/' /opt/Dsrelease/$today/alert-server/conf/common.properties

sed -i 's/fs.defaultFS=file:/resource.fs.defaultFS=file:/' /opt/Dsrelease/$today/api-server/conf/common.properties

sed -i '$a\resource.hdfs.fs.defaultFS=file:///' /opt/Dsrelease/$today/api-server/conf/common.properties

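echo "common.properties configuration replaced"

# Adjust the master and worker memory; api and alert can be changed the same way. Size them for the server hardware, but keep to the rule Xms = Xmx = 2 * Xmn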

cd /opt/Dsrelease/$today/

sed -i 's/Xms4g/Xms2g/g' worker-server/bin/start.sh

sed -i 's/Xmx4g/Xmx2g/g' worker-server/bin/start.sh

sed -i 's/Xmn2g/Xmn1g/g' worker-server/bin/start.sh

sed -i 's/Xms4g/Xms2g/g' master-server/bin/start.sh

sed -i 's/Xmx4g/Xmx2g/g' master-server/bin/start.sh

sed -i 's/Xmn2g/Xmn1g/g' master-server/bin/start.sh

echo "master and worker memory updated"

}

3. Removing the HDFS configuration

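# Remove the HDFS configuration (the function header is missing from the original; the name remove_hdfs_conf is assumed)

remove_hdfs_conf(){

echo "Removing the HDFS configuration"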

sudo rm /opt/Dsrelease/$today/api-server/conf/core-site.xml

sudo rm /opt/Dsrelease/$today/api-server/conf/hdfs-site.xml

sudo rm /opt/Dsrelease/$today/worker-server/conf/core-site.xml

sudo rm /opt/Dsrelease/$today/worker-server/conf/hdfs-site.xml

sudo rm /opt/Dsrelease/$today/master-server/conf/core-site.xml

sudo rm /opt/Dsrelease/$today/master-server/conf/hdfs-site.xml

sudo rm /opt/Dsrelease/$today/alert-server/conf/core-site.xml

sudo rm /opt/Dsrelease/$today/alert-server/conf/hdfs-site.xml

echo "HDFS configuration removed"

}

4. MySQL initialization

init_mysql(){

sql_path="/opt/Dsrelease/$today/tools/sql/sql/dolphinscheduler_mysql.sql"

sourceCommand="source $sql_path"

echo $sourceCommand

echo "Starting source:"

mysql -hlocalhost -uroot -proot@123 -D "dolphinscheduler" -e "$sourceCommand"

echo "Finished source:"

}

5. Starting the DolphinScheduler services

stop_all_server(){

cd /opt/Dsrelease/$today

./bin/dolphinscheduler-daemon.sh stop api-server

./bin/dolphinscheduler-daemon.sh stop master-server

./bin/dolphinscheduler-daemon.sh stop worker-server

./bin/dolphinscheduler-daemon.sh stop alert-server

ps -ef|grep api-server|grep -v grep|awk '{print "kill -9 " $2}' |sh

ps -ef|grep master-server |grep -v grep|awk '{print "kill -9 " $2}' |sh

ps -ef|grep worker-server |grep -v grep|awk '{print "kill -9 " $2}' |sh

ps -ef|grep alert-server |grep -v grep|awk '{print "kill -9 " $2}' |sh

}

run_all_server(){

cd /opt/Dsrelease/$today

./bin/dolphinscheduler-daemon.sh start api-server

./bin/dolphinscheduler-daemon.sh start master-server

./bin/dolphinscheduler-daemon.sh start worker-server

./bin/dolphinscheduler-daemon.sh start alert-server

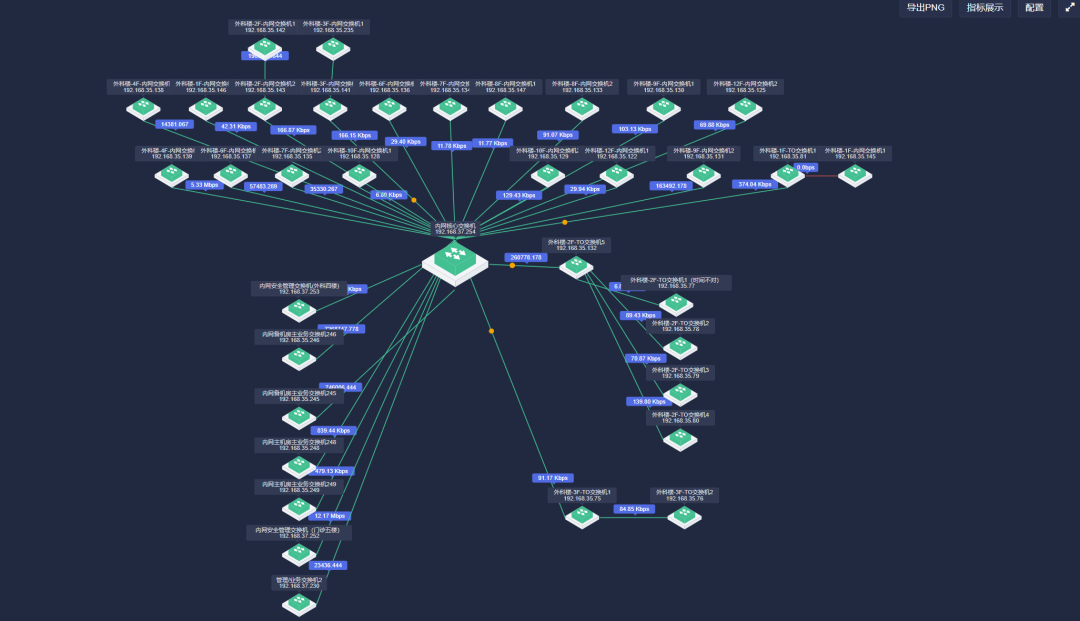

}集群部署

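1. Open the MySQL and ZooKeeper ports to the other nodes

2. Deploy and start the cluster

Copy the fully initialized folder to each target server and start the services that server should run. That completes the cluster deployment. All nodes must connect to the same ZooKeeper and the same MySQL instance.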
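The copy-and-start procedure can be sketched as a dry run. Everything here is an assumption made for illustration: the function name `plan_cluster_deploy`, the host name `node2`, the release directory `/opt/Dsrelease/0101`, and the use of `scp` and `ssh`. The function only prints the commands and does not run them.

```shell
#!/bin/sh
# plan_cluster_deploy: hypothetical helper, not part of DolphinScheduler.
# Prints (does not run) the commands for copying an initialized release
# directory to another host and starting the chosen services there.
# Usage: plan_cluster_deploy <release_dir> <host> <service>...
plan_cluster_deploy() {
    release_dir=$1
    host=$2
    shift 2
    echo "scp -r $release_dir $host:$release_dir"
    for service in "$@"; do
        echo "ssh $host 'cd $release_dir && ./bin/dolphinscheduler-daemon.sh start $service'"
    done
}

# Example with an assumed host name and role layout: node2 runs only a worker.
plan_cluster_deploy /opt/Dsrelease/0101 node2 worker-server
```

Printing the plan first makes it easy to review which node gets which service before anything is copied or started.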

Publication of this article is supported by 白鲸开源科技.