PyTorch Programming Basics

Table of Contents

- PyTorch Programming Basics

- 1. Computing Gradients with backward

- 2. Common Loss Functions

- 2.1 Mean Squared Error Loss

- 2.2 L1-Norm Loss

- 2.3 Cross-Entropy Loss

- 3. Optimizers

1. Computing Gradients with backward

import torch

w = torch.tensor([1.], requires_grad=True)
x = torch.tensor([2.], requires_grad=True)
a = torch.add(x, w)
b = torch.add(w, 1)
y = torch.mul(a, b)  # y = (x + w)(w + 1)
y.backward()  # compute the derivative of y with respect to each leaf variable
print(w.grad)  # dy/dw = (w + 1) + (x + w) = x + 2w + 1 = 5
print(x.grad)  # dy/dx = w + 1 = 2

Output:

tensor([5.])
tensor([2.])
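One way to sanity-check the gradients from backward() is to compare them with a central finite difference. The sketch below is only an illustration: the helper name `f` and the step size `eps` are assumptions introduced here, and float64 is used to keep rounding error small.

```python
import torch


def f(x, w):
    # Same function as in the example: y = (x + w)(w + 1)
    return (x + w) * (w + 1)


w = torch.tensor([1.], dtype=torch.float64, requires_grad=True)
x = torch.tensor([2.], dtype=torch.float64, requires_grad=True)

# Analytic gradients via autograd
f(x, w).backward()

# Numerical gradients via central differences (eps is an assumed step size)
eps = 1e-6
with torch.no_grad():
    num_dw = (f(x, w + eps) - f(x, w - eps)) / (2 * eps)
    num_dx = (f(x + eps, w) - f(x - eps, w)) / (2 * eps)

print(w.grad, num_dw)  # both should be close to 5
print(x.grad, num_dx)  # both should be close to 2
```

If the two values in each pair agree to several decimal places, the autograd result is consistent with the hand derivation in the comments.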

import torch

w = torch.tensor([1.], requires_grad=True)
x = torch.tensor([2.], requires_grad=True)
for i in range(3):
    a = torch.add(x, w)
    b = torch.add(w, 1)
    y = torch.mul(a, b)  # y = (x + w)(w + 1)
    y.backward()  # dy/dw = (w + 1) + (x + w) = x + 2w + 1 = 5
    print(w.grad)  # gradients accumulate across loop iterations

Output:

tensor([5.])
tensor([10.])
tensor([15.])
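Because backward() adds to the existing .grad instead of overwriting it, the usual remedy is to reset the gradients after each use. A minimal sketch, assuming we want an independent gradient on every iteration (the list `grads` is introduced here only for illustration):

```python
import torch

w = torch.tensor([1.], requires_grad=True)
x = torch.tensor([2.], requires_grad=True)

grads = []
for i in range(3):
    y = (x + w) * (w + 1)
    y.backward()
    grads.append(w.grad.clone())  # copy the value before it is reset
    # Reset the accumulated gradients in place
    w.grad.zero_()
    x.grad.zero_()

print(grads)  # [tensor([5.]), tensor([5.]), tensor([5.])]
```

When training with an optimizer, calling optimizer.zero_grad() before each backward pass serves the same purpose.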

2. Common Loss Functions

2.1 Mean Squared Error Loss

$$\text{loss}(\boldsymbol{x},\boldsymbol{y})=\frac{1}{n}\Vert\boldsymbol{x}-\boldsymbol{y}\Vert_2^2=\frac{1}{n}\sum_{i=1}^n(x_i-y_i)^2$$

import torch

input = torch.tensor([1.0, 2.0, 3.0, 4.0])
target = torch.tensor([4.0, 5.0, 6.0, 7.0])
loss_fn = torch.nn.MSELoss(reduction='mean')
loss = loss_fn(input, target)
print(loss)

Output:

tensor(9.)
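To connect the formula with the API, the sketch below recomputes the mean squared error by hand and also shows the other two values of the `reduction` argument. The variable names `manual_mean`, `loss_sum`, and `loss_none` are chosen here for illustration.

```python
import torch

input = torch.tensor([1.0, 2.0, 3.0, 4.0])
target = torch.tensor([4.0, 5.0, 6.0, 7.0])

# Direct translation of the formula: (1/n) * sum((x_i - y_i)^2)
manual_mean = ((input - target) ** 2).mean()

# reduction='sum' skips the division by n; reduction='none' keeps per-element losses
loss_sum = torch.nn.MSELoss(reduction='sum')(input, target)
loss_none = torch.nn.MSELoss(reduction='none')(input, target)

print(manual_mean)  # tensor(9.)
print(loss_sum)     # tensor(36.)
print(loss_none)    # tensor([9., 9., 9., 9.])
```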

2.2 L1-Norm Loss

$$\text{loss}(\boldsymbol{x},\boldsymbol{y})=\frac{1}{n}\Vert\boldsymbol{x}-\boldsymbol{y}\Vert_1=\frac{1}{n}\sum_{i=1}^n\vert x_i-y_i\vert$$

import torch

loss = torch.nn.L1Loss(reduction='mean')
input = torch.tensor([1.0, 2.0, 3.0, 4.0])
target = torch.tensor([4.0, 5.0, 6.0, 7.0])
output = loss(input, target)
print(output)

Output:

tensor(3.)

2.3 Cross-Entropy Loss

$$h(p,q)=-\sum_{x}p(x)\log q(x)$$

import torch

entropy = torch.nn.CrossEntropyLoss()
input = torch.tensor([[-0.1181, -0.3682, -0.2209]])  # raw scores (logits) for 3 classes
target = torch.tensor([0])  # index of the true class
output = entropy(input, target)
print(output)

Output:

tensor(0.9862)

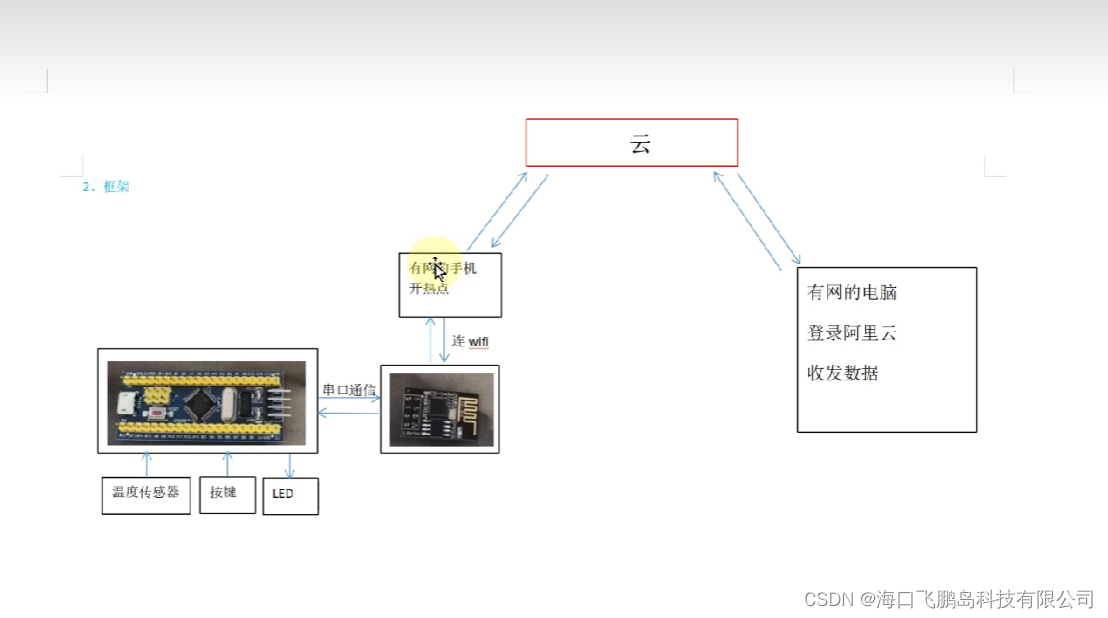
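torch.nn.CrossEntropyLoss expects raw scores (logits) and applies log-softmax internally before taking the negative log-probability of the target class. The following sketch reproduces the value by hand; the names `logits`, `log_probs`, `manual`, and `builtin` are introduced here for illustration.

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([[-0.1181, -0.3682, -0.2209]])
target = torch.tensor([0])

# Step 1: log-softmax turns the logits into log-probabilities log q(x)
log_probs = F.log_softmax(logits, dim=1)

# Step 2: with a one-hot p(x), the sum in h(p, q) reduces to -log q(target)
manual = -log_probs[0, target[0]]

builtin = torch.nn.CrossEntropyLoss()(logits, target)
print(manual, builtin)  # both should be approximately 0.9862
```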

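3. Optimizers

The script below trains five identical small networks to fit y = x^3, each with a different optimizer (SGD, SGD with momentum, AdaGrad, RMSprop, Adam), and plots their training losses.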

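import torch
import torch.nn
import torch.utils.data as Data
import matplotlib
import matplotlib.pyplot as plt
import os

# Work around the duplicate OpenMP runtime error that some installations raise
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"
# Use the SimHei font so that Chinese text in plots renders correctly
matplotlib.rcParams['font.sans-serif'] = ['SimHei']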

# Prepare the training data
x = torch.unsqueeze(torch.linspace(-1, 1, 500), dim=1)  # shape (500, 1)
y = x.pow(3)  # target function y = x^3

# Set the hyperparameters
LR = 0.01
batch_size = 15
epoches = 5
torch.manual_seed(10)

# Set up the data loader
dataset = Data.TensorDataset(x, y)
loader = Data.DataLoader(
    dataset=dataset,
    batch_size=batch_size,
    shuffle=True,
    num_workers=2)

# Build the neural network: one hidden layer with ReLU activation
class Net(torch.nn.Module):
    def __init__(self, n_input, n_hidden, n_output):
        super(Net, self).__init__()
        self.hidden_layer = torch.nn.Linear(n_input, n_hidden)
        self.output_layer = torch.nn.Linear(n_hidden, n_output)

    def forward(self, input):
        x = torch.relu(self.hidden_layer(input))
        output = self.output_layer(x)
        return output

# Train the models and plot the loss curves
def train():
    # One network per optimizer so that each starts from its own parameters
    net_SGD = Net(1, 10, 1)
    net_Momentum = Net(1, 10, 1)
    net_AdaGrad = Net(1, 10, 1)
    net_RMSprop = Net(1, 10, 1)
    net_Adam = Net(1, 10, 1)
    nets = [net_SGD, net_Momentum, net_AdaGrad, net_RMSprop, net_Adam]

    # Define the optimizers
    optimizer_SGD = torch.optim.SGD(net_SGD.parameters(), lr=LR)
    optimizer_Momentum = torch.optim.SGD(net_Momentum.parameters(), lr=LR, momentum=0.6)
    optimizer_AdaGrad = torch.optim.Adagrad(net_AdaGrad.parameters(), lr=LR, lr_decay=0)
    optimizer_RMSprop = torch.optim.RMSprop(net_RMSprop.parameters(), lr=LR, alpha=0.9)
    optimizer_Adam = torch.optim.Adam(net_Adam.parameters(), lr=LR, betas=(0.9, 0.99))
    optimizers = [optimizer_SGD, optimizer_Momentum, optimizer_AdaGrad, optimizer_RMSprop, optimizer_Adam]

    # Define the loss function
    loss_function = torch.nn.MSELoss()
    losses = [[], [], [], [], []]

    for epoch in range(epoches):
        for step, (batch_x, batch_y) in enumerate(loader):
            # Train every network on the same mini-batch
            for net, optimizer, loss_list in zip(nets, optimizers, losses):
                pred_y = net(batch_x)
                loss = loss_function(pred_y, batch_y)
                optimizer.zero_grad()  # clear gradients accumulated in the previous step
                loss.backward()
                optimizer.step()
                loss_list.append(loss.item())  # store the loss as a Python float

    # Plot one loss curve per optimizer
    plt.figure(figsize=(12, 7))
    labels = ['SGD', 'Momentum', 'AdaGrad', 'RMSprop', 'Adam']
    for i, loss in enumerate(losses):
        plt.plot(loss, label=labels[i])
    plt.legend(loc='upper right', fontsize=15)
    plt.tick_params(labelsize=13)
    plt.xlabel('Train Step', size=15)
    plt.ylabel('Loss', size=15)
    plt.ylim((0, 0.3))
    plt.show()


if __name__ == "__main__":
    train()
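To make the role of optimizer.step() concrete, the sketch below checks a single plain SGD update (no momentum) against the rule w ← w − lr · grad computed by hand. The toy loss (sum of squares) and the names `w`, `lr`, and `expected` are assumptions made for this illustration and are not part of the script above.

```python
import torch

w = torch.tensor([1.0, -2.0], requires_grad=True)
lr = 0.01  # same value as LR in the script above

optimizer = torch.optim.SGD([w], lr=lr)

loss = (w ** 2).sum()   # toy loss; its gradient is 2 * w
optimizer.zero_grad()
loss.backward()

# Plain SGD update computed by hand, before the optimizer modifies w in place
expected = w.detach() - lr * w.grad

optimizer.step()
print(w.detach())  # tensor([ 0.9800, -1.9600])
print(expected)    # tensor([ 0.9800, -1.9600])
```

The other optimizers in the script follow the same zero_grad / backward / step pattern and differ only in how they turn the gradient into a parameter update.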