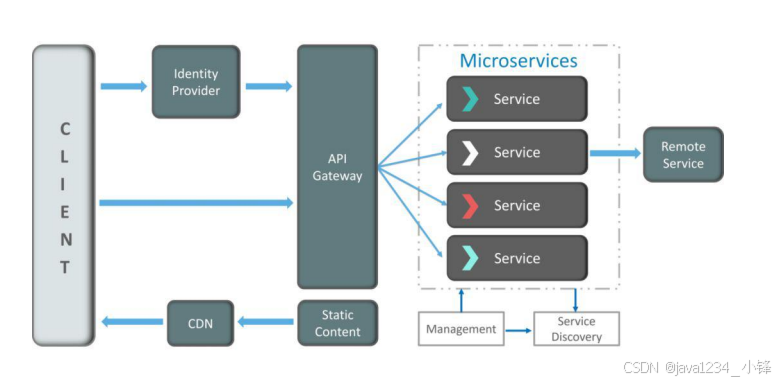

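After installing the GPU driver, how do you confirm that it installed correctly? Taking a T400 on openEuler as the example: under normal circumstances, checking the output of `nvidia-smi` is enough to confirm that the driver is working.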

As shown in the screenshot above:
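The same check can also be scripted rather than read off the screen. The following is a minimal sketch, assuming `nvidia-smi` is on `PATH`; the function name `check_nvidia_driver` and its return codes are hypothetical and not part of the original setup.

```bash
#!/bin/bash
# Illustrative driver check; the function name and return codes are assumptions.
check_nvidia_driver() {
    # Fail early if the nvidia-smi binary cannot be found on PATH.
    if ! command -v nvidia-smi >/dev/null 2>&1; then
        echo "nvidia-smi not found: the driver is probably not installed" >&2
        return 1
    fi
    # Print GPU name and driver version; nvidia-smi exits non-zero
    # when it cannot communicate with the driver.
    if ! nvidia-smi --query-gpu=name,driver_version --format=csv,noheader; then
        echo "nvidia-smi failed: the driver is present but not working" >&2
        return 2
    fi
    return 0
}
```

Calling `check_nvidia_driver` prints one line per GPU on success and returns a non-zero status otherwise, which makes it easy to use in a provisioning script.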

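At this point the driver is confirmed to be usable; the process list is empty only because nothing is running on the GPU yet. Next, let's write a test program to exercise it. The code below was generated by AI and then lightly modified.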

Two files are needed.

First, create a CUDA source file named `gpu_matrix_multiply.cu`:

```cuda
#include <stdio.h>
#include <stdlib.h>
#include <cuda_runtime.h>

#define N 1024 // Matrix size (N x N)
#define BLOCK_SIZE 32

// Each thread computes one element of C = A * B.
__global__ void matrixMultiply(float *A, float *B, float *C) {
    int row = blockIdx.y * blockDim.y + threadIdx.y;
    int col = blockIdx.x * blockDim.x + threadIdx.x;
    float sum = 0.0f;

    if (row < N && col < N) {
        for (int i = 0; i < N; i++) {
            sum += A[row * N + i] * B[i * N + col];
        }
        C[row * N + col] = sum;
    }
}

// Fill a matrix with random values in [0, 1].
void initMatrix(float *matrix) {
    for (int i = 0; i < N * N; i++) {
        matrix[i] = rand() / (float)RAND_MAX;
    }
}

int main() {
    float *h_A, *h_B, *h_C;
    float *d_A, *d_B, *d_C;
    size_t size = N * N * sizeof(float);

    // Allocate host memory
    h_A = (float*)malloc(size);
    h_B = (float*)malloc(size);
    h_C = (float*)malloc(size);

    // Initialize host matrices
    initMatrix(h_A);
    initMatrix(h_B);

    // Allocate device memory
    cudaMalloc(&d_A, size);
    cudaMalloc(&d_B, size);
    cudaMalloc(&d_C, size);

    // Copy host memory to device
    cudaMemcpy(d_A, h_A, size, cudaMemcpyHostToDevice);
    cudaMemcpy(d_B, h_B, size, cudaMemcpyHostToDevice);

    // Define grid and block dimensions
    dim3 dimBlock(BLOCK_SIZE, BLOCK_SIZE);
    dim3 dimGrid((N + dimBlock.x - 1) / dimBlock.x, (N + dimBlock.y - 1) / dimBlock.y);

    // Create CUDA events for timing
    cudaEvent_t start, stop;
    cudaEventCreate(&start);
    cudaEventCreate(&stop);

    // Record start event
    cudaEventRecord(start);

    // Launch kernel
    matrixMultiply<<<dimGrid, dimBlock>>>(d_A, d_B, d_C);

    // Report a failed launch instead of printing a meaningless timing
    cudaError_t err = cudaGetLastError();
    if (err != cudaSuccess) {
        fprintf(stderr, "Kernel launch failed: %s\n", cudaGetErrorString(err));
        return 1;
    }

    // Record stop event
    cudaEventRecord(stop);
    cudaEventSynchronize(stop);

    // Calculate elapsed time
    float milliseconds = 0;
    cudaEventElapsedTime(&milliseconds, start, stop);
    printf("Matrix multiplication took %f ms\n", milliseconds);

    // Copy result back to host
    cudaMemcpy(h_C, d_C, size, cudaMemcpyDeviceToHost);

    // Clean up
    free(h_A); free(h_B); free(h_C);
    cudaFree(d_A); cudaFree(d_B); cudaFree(d_C);
    cudaEventDestroy(start); cudaEventDestroy(stop);

    return 0;
}
```

Anything that can be scripted should be, so next create a shell script that compiles and runs the program. Save the following as `compile_and_run.sh`:

```bash
#!/bin/bash

# Compile the CUDA program
nvcc -o gpu_matrix_multiply gpu_matrix_multiply.cu

# Check if compilation was successful
if [ $? -eq 0 ]; then
    echo "Compilation successful. Running the program..."
    # Run the program
    ./gpu_matrix_multiply
else
    echo "Compilation failed."
fi
```

Then run it:

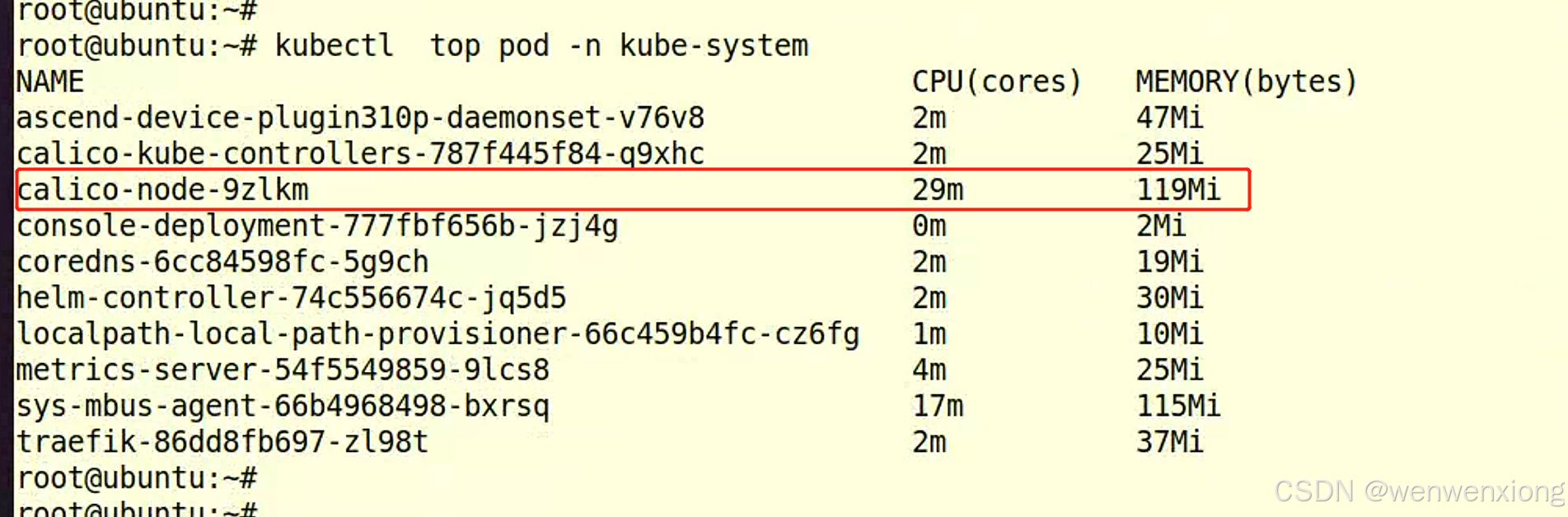

```bash
sh compile_and_run.sh
```

Open a second terminal window to monitor `nvidia-smi` while the program runs:

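You should see a result like the following:

The Processes section of the `nvidia-smi` output now lists the test program that was just started.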
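The monitoring step in the second terminal can be sketched as a small polling loop. This is only an illustration, assuming `nvidia-smi` is on `PATH`; the function name `watch_gpu_processes` and its default poll count and interval are hypothetical.

```bash
#!/bin/bash
# Illustrative polling loop; the function name and defaults are assumptions.
# Usage: watch_gpu_processes [count] [interval_seconds]
watch_gpu_processes() {
    wgp_count="${1:-10}"     # number of polls
    wgp_interval="${2:-1}"   # seconds to wait between polls
    wgp_i=0
    while [ "$wgp_i" -lt "$wgp_count" ]; do
        # List compute processes currently using the GPU, one per line.
        nvidia-smi --query-compute-apps=pid,process_name,used_memory \
            --format=csv,noheader || return 1
        sleep "$wgp_interval"
        wgp_i=$((wgp_i + 1))
    done
}
```

For example, `watch_gpu_processes 30 1` polls once per second for 30 seconds; running `watch -n 1 nvidia-smi` gives a similar live view of the full table. If the test program exits too quickly to be caught, running it several times in a row while polling may help.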

Test complete.