unified-runtime编译与验证

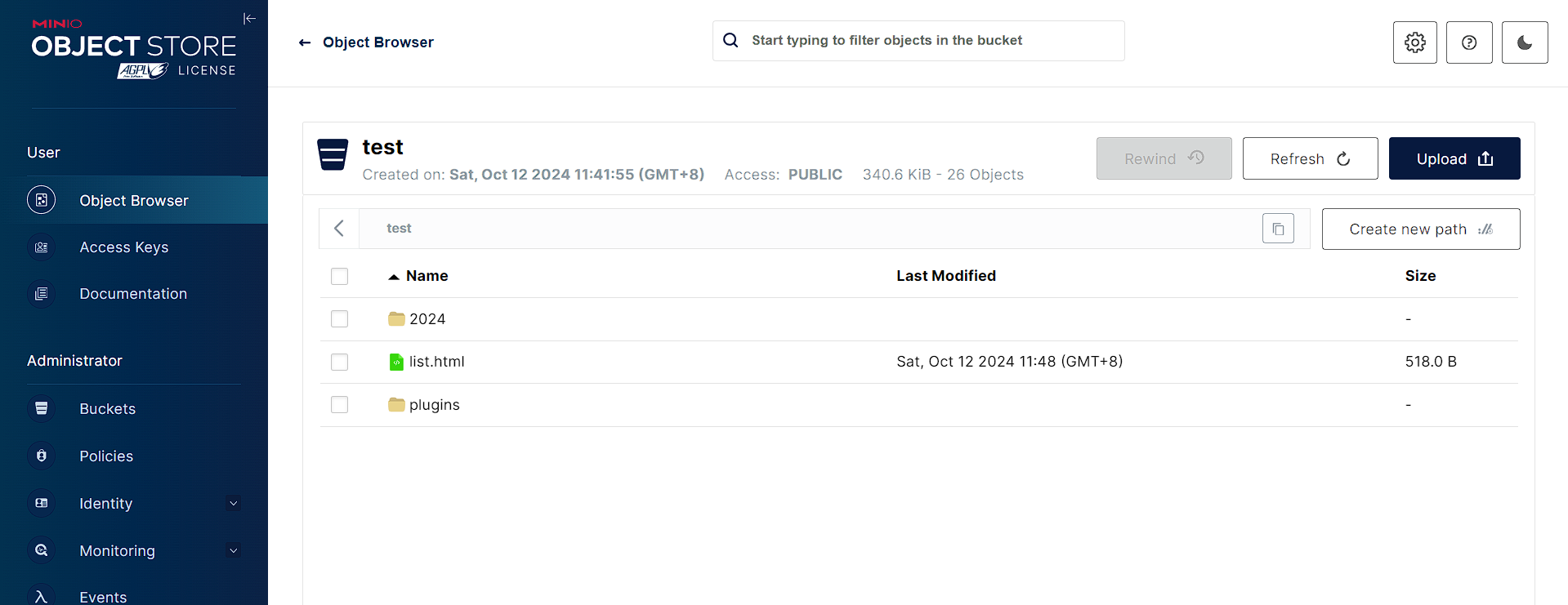

- 一.创建容器

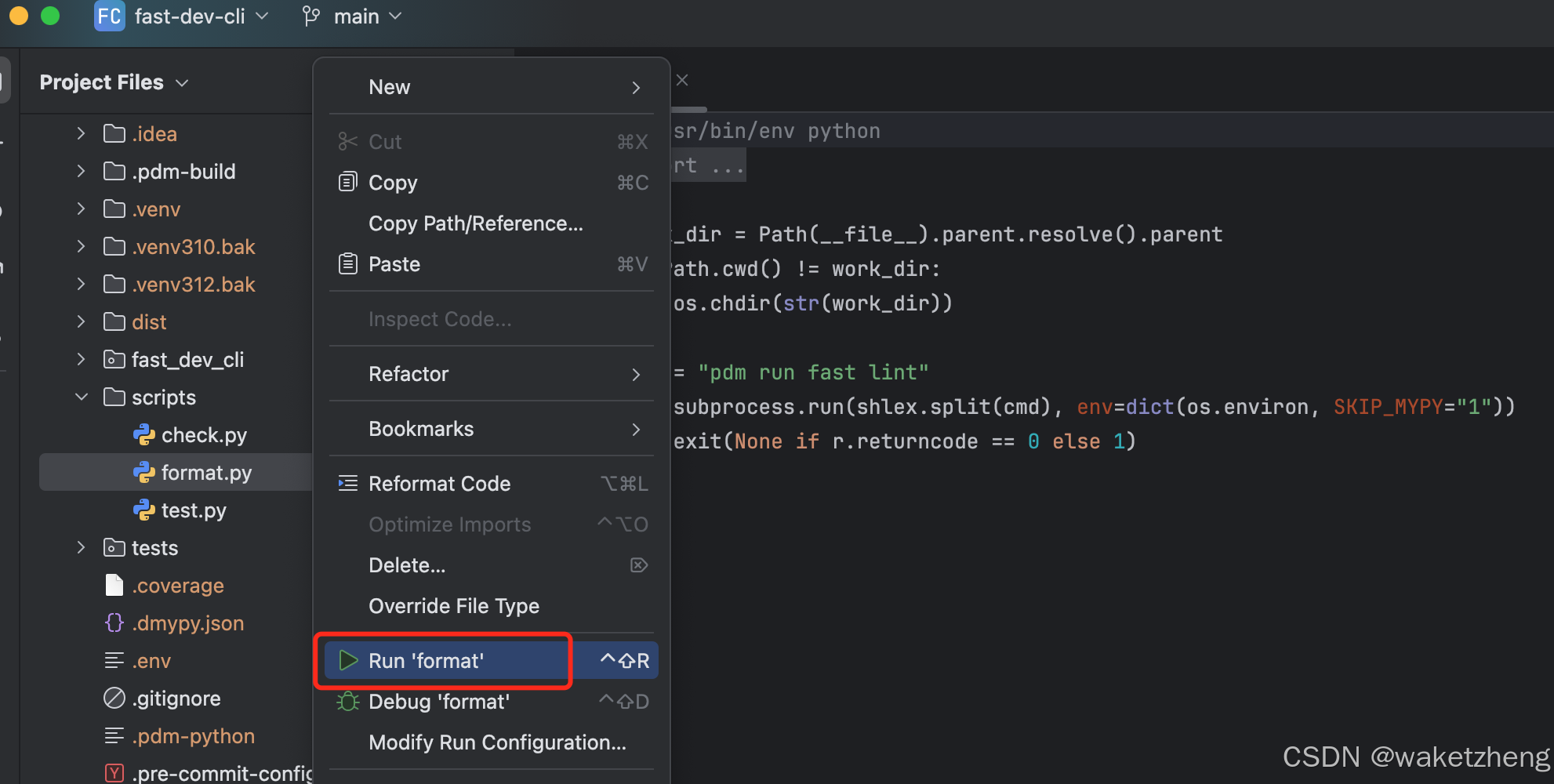

- 二.编译unified-runtime

- 三.生成一个cuda ptx kernel

- 四.API测试

unified-runtime编译与验证

一.创建容器

docker run --gpus all --shm-size=32g -ti \

-e NVIDIA_VISIBLE_DEVICES=all --privileged --net=host \

--rm -it \

-v $PWD:/home \

-w /home ghcr.io/intel/llvm/ubuntu2204_build /bin/bash

二.编译unified-runtime

git clone https://github.com/oneapi-src/unified-runtime

cd unified-runtime

mkdir build

cd build

cmake -DUR_BUILD_ADAPTER_CUDA=ON -DUR_BUILD_ADAPTER_NATIVE_CPU=ON -DUMF_DISABLE_HWLOC=ON ..

make

三.生成一个cuda ptx kernel

tee cuda_copy.cu<<-'EOF'

#include <iostream>

#include <cuda_runtime.h>

#include <iostream>

#include <vector>

#include <stdio.h>

#include <assert.h>

#include <cstdio>

#include <cuda.h>

__global__ void kernel_copy(float *input,float *output)

{

unsigned int tid = threadIdx.x + blockIdx.x * blockDim.x;

output[tid]=input[tid];

}

EOF

/usr/local/cuda/bin/nvcc -std=c++17 -dc -lineinfo -arch=sm_86 -ptx cuda_copy.cu -o cuda_copy.ptx

四.API测试

tee um_query_device.cpp<<-'EOF'

#include <iostream>

#include <memory>

#include <stdlib.h>

#include <vector>

#include <iostream>

#include <fstream>

#include <sstream>

#include "ur_api.h"

#define ur_check(call) \

do { \

ur_result_t error = call; \

if (error != UR_RESULT_SUCCESS) { \

fprintf(stderr, " error in file '%s' in line %i: %d.\n", __FILE__, __LINE__,error); \

exit(EXIT_FAILURE); \

} \

} while (0)

constexpr unsigned PAGE_SIZE = 4096;

template <typename T, size_t N> struct alignas(PAGE_SIZE) AlignedArray {

T data[N];

};

int main(int, char *[]) {

ur_result_t status;

ur_check(urLoaderInit(0, nullptr));

std::cout << "Platform initialized.\n";

uint32_t adapterCount = 0;

std::vector<ur_adapter_handle_t> adapters;

uint32_t platformCount = 0;

std::vector<ur_platform_handle_t> platforms;

ur_check(urAdapterGet(0, nullptr, &adapterCount));

adapters.resize(adapterCount);

ur_check(urAdapterGet(adapterCount, adapters.data(), nullptr));

ur_check(urPlatformGet(adapters.data(), adapterCount, 1, nullptr,&platformCount));

platforms.resize(platformCount);

ur_check(urPlatformGet(adapters.data(), adapterCount, platformCount,

platforms.data(), nullptr));

for (auto p : platforms) {

ur_api_version_t api_version = {};

ur_check(urPlatformGetApiVersion(p, &api_version));

std::cout << "API version: " << UR_MAJOR_VERSION(api_version) << "."

<< UR_MINOR_VERSION(api_version) << std::endl;

uint32_t deviceCount = 0;

ur_check(urDeviceGet(p, UR_DEVICE_TYPE_GPU, 0, nullptr, &deviceCount));

std::vector<ur_device_handle_t> devices(deviceCount);

ur_check(urDeviceGet(p, UR_DEVICE_TYPE_GPU, deviceCount, devices.data(),

nullptr));

for (auto d : devices) {

ur_device_type_t device_type = UR_DEVICE_TYPE_ALL;

ur_check(urDeviceGetInfo(

d, UR_DEVICE_INFO_TYPE, sizeof(ur_device_type_t),

static_cast<void *>(&device_type), nullptr));

static const size_t DEVICE_NAME_MAX_LEN = 1024;

char device_name[DEVICE_NAME_MAX_LEN] = {0};

ur_check(urDeviceGetInfo(d, UR_DEVICE_INFO_NAME, DEVICE_NAME_MAX_LEN - 1,

static_cast<void *>(&device_name), nullptr));

if (device_type == UR_DEVICE_TYPE_GPU) {

std::cout << "Found a " << device_name << " gpu.\n";

}

ur_context_handle_t hContext;

ur_check(urContextCreate(1, &d, nullptr, &hContext));

std::ifstream inputFile("cuda_copy.ptx");

std::ostringstream buffer;

buffer << inputFile.rdbuf();

std::string fileContent = buffer.str();

inputFile.close();

ur_program_handle_t hProgram;

ur_check(urProgramCreateWithBinary(hContext, d, fileContent.length(), (const uint8_t *)fileContent.c_str(),nullptr,&hProgram));

constexpr int a_size = 32;

AlignedArray<float, a_size> a, b;

for (auto i = 0; i < a_size; ++i) {

a.data[i] = a_size - i;

b.data[i] = 0;

}

status=urProgramBuild(hContext, hProgram, nullptr);

ur_mem_handle_t dA, dB;

ur_check(urMemBufferCreate(hContext, UR_MEM_FLAG_READ_WRITE,

a_size * sizeof(int), nullptr, &dA));

ur_check(urMemBufferCreate(hContext, UR_MEM_FLAG_READ_WRITE,

a_size * sizeof(int), nullptr, &dB));

ur_kernel_handle_t hKernel;

ur_check(urKernelCreate(hProgram, "_Z11kernel_copyPfS_", &hKernel));

ur_check(urKernelSetArgMemObj(hKernel, 0, nullptr, dA));

ur_check(urKernelSetArgMemObj(hKernel, 1, nullptr, dB));

ur_queue_handle_t queue;

ur_check(urQueueCreate(hContext, d, nullptr, &queue));

ur_check(urEnqueueMemBufferWrite(queue, dA, true, 0, a_size * sizeof(float),

a.data, 0, nullptr, nullptr));

ur_check(urEnqueueMemBufferWrite(queue, dB, true, 0, a_size * sizeof(float),

b.data, 0, nullptr, nullptr));

const size_t gWorkOffset[] = {0, 0, 0};

const size_t gWorkSize[] = {a_size, 1, 1};

const size_t lWorkSize[] = {1, 1, 1};

ur_event_handle_t event;

ur_check(urEnqueueKernelLaunch(queue, hKernel, 3, gWorkOffset, gWorkSize,

lWorkSize, 0, nullptr, &event));

ur_check(urEnqueueMemBufferRead(queue, dB, true, 0, a_size * sizeof(int),

b.data, 1, &event, nullptr));

ur_check(urQueueFinish(queue));

ur_check(urContextRelease(hContext));

for (auto i = 0; i < a_size; ++i) {

printf("%.2f\n",b.data[i]);

}

}

}

out:

for (auto adapter : adapters) {

urAdapterRelease(adapter);

}

urLoaderTearDown();

return status == UR_RESULT_SUCCESS ? 0 : 1;

}

EOF

g++ -o um_query_device um_query_device.cpp -I../include -L lib -lur_loader -lpthread

LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$PWD/lib ./um_query_device