CUDA events can be used to time both host code and device code.

Basic syntax

// Declare the event variables

cudaEvent_t start, stop;

// Create (initialize) the events

cudaEventCreate(&start);

cudaEventCreate(&stop);

// Record the event that marks the start time; note that the event itself is passed, not its address

cudaEventRecord(start);

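// Can be omitted in TCC driver mode but must be kept in WDDM driver mode, so keep it by default

// Do not wrap this call in the error-checking function: it normally returns cudaErrorNotReady here, which would be reported as an error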

cudaEventQuery(start);

/**

* code

**/

// Record the event that marks the end time

cudaEventRecord(stop);

// Event synchronization: block the host until the stop event has been recorded

cudaEventSynchronize(stop);

// Compute the elapsed time between the two events (in milliseconds)

float elapsed_time;

cudaEventElapsedTime(&elapsed_time, start, stop);

// Destroy the events

cudaEventDestroy(start);

cudaEventDestroy(stop);
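
The call sequence above can be wrapped in a small helper so that a timed region needs only two calls. The following is a minimal sketch and not part of the original text: the `GpuTimer` struct and the `dummyKernel` kernel are hypothetical names introduced purely for illustration. It assumes the default stream, and it treats `cudaErrorNotReady` from `cudaEventQuery` as an expected return value rather than a failure.

```cuda
#include <cuda_runtime.h>
#include <cstdio>

// Hypothetical helper (not from the original text): owns a start/stop event pair.
struct GpuTimer
{
    cudaEvent_t start, stop;

    GpuTimer()
    {
        cudaEventCreate(&start);
        cudaEventCreate(&stop);
    }

    ~GpuTimer()
    {
        cudaEventDestroy(start);
        cudaEventDestroy(stop);
    }

    // Marks the beginning of the timed region on the default stream.
    void begin()
    {
        cudaEventRecord(start);
        // Kept for WDDM driver mode, as noted above. cudaErrorNotReady is
        // expected here, so only other return values are reported.
        cudaError_t err = cudaEventQuery(start);
        if (err != cudaSuccess && err != cudaErrorNotReady)
        {
            fprintf(stderr, "cudaEventQuery: %s\n", cudaGetErrorString(err));
        }
    }

    // Marks the end of the timed region and returns the elapsed time in milliseconds.
    float end()
    {
        float ms = 0.0f;
        cudaEventRecord(stop);
        cudaEventSynchronize(stop);
        cudaEventElapsedTime(&ms, start, stop);
        return ms;
    }
};

// Hypothetical kernel, used only to give the timer something to measure.
__global__ void dummyKernel(float* data, int n)
{
    int id = blockIdx.x * blockDim.x + threadIdx.x;
    if (id < n)
    {
        data[id] = 2.0f * data[id];
    }
}

int main()
{
    const int n = 1 << 20;
    float* d_data = nullptr;
    cudaMalloc((void**)&d_data, n * sizeof(float));
    cudaMemset(d_data, 0, n * sizeof(float));

    GpuTimer timer;
    timer.begin();
    dummyKernel<<<(n + 255) / 256, 256>>>(d_data, n);
    float ms = timer.end();
    printf("elapsed_time = %.3f ms\n", ms);

    cudaFree(d_data);
    return 0;
}
```

Tying the event lifetime to the struct means the create and destroy calls cannot be forgotten or mismatched, at the cost of hiding the individual API calls that the listing above shows explicitly.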

Usage example:

Computing the sum of two arrays

#include <cuda_runtime.h>

#include <device_launch_parameters.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <iostream>

#define NUM_REPEATS 10

static void CheckCudaErrorAux(const char*, unsigned, const char*, cudaError_t);

#define CUDA_CHECK_RETURN(value) CheckCudaErrorAux(__FILE__,__LINE__, #value, value)

// Device function

__device__ float add(const float x, const float y)

{

return x + y;

}

__global__ void addFromGPU(float* A, float* B, float* C, const int N)

{

int blockId = blockIdx.x;

int id = blockId * blockDim.x + threadIdx.x;

if (id >= N)

{

return;

}

C[id] = add(A[id], B[id]);

}

void initialData(float* addr, int nCount)

{

for (int i = 0; i < nCount; i++)

{

addr[i] = (float)(rand() & 0xFFF) / 100.f;

}

}

int main()

{

int iElemntCount = 4096*10;

size_t stBytesCount = iElemntCount * sizeof(float); // size in bytes

// Allocate host and device memory and initialize it

float* fpHost_A = new float[iElemntCount];

float* fpHost_B = new float[iElemntCount];

float* fpHost_C = new float[iElemntCount];

memset(fpHost_A, 0, stBytesCount);

memset(fpHost_B, 0, stBytesCount);

memset(fpHost_C, 0, stBytesCount);

float* fpDevice_A, * fpDevice_B, * fpDevice_C;

CUDA_CHECK_RETURN(cudaMalloc((void**)&fpDevice_A, stBytesCount));

CUDA_CHECK_RETURN(cudaMalloc((void**)&fpDevice_B, stBytesCount));

CUDA_CHECK_RETURN(cudaMalloc((void**)&fpDevice_C, stBytesCount));

CUDA_CHECK_RETURN(cudaMemset(fpDevice_C, 0,stBytesCount));

srand(666);

initialData(fpHost_A, iElemntCount);

initialData(fpHost_B, iElemntCount);

CUDA_CHECK_RETURN(cudaMemcpy(fpDevice_A, fpHost_A, stBytesCount, cudaMemcpyHostToDevice));

CUDA_CHECK_RETURN(cudaMemcpy(fpDevice_B, fpHost_B, stBytesCount, cudaMemcpyHostToDevice));

dim3 block(32);

dim3 grid((iElemntCount + block.x - 1)/ block.x);

cudaEvent_t start, stop;

for (int i = 0; i < NUM_REPEATS; i++)

{

CUDA_CHECK_RETURN(cudaEventCreate(&start));

CUDA_CHECK_RETURN(cudaEventCreate(&stop));

CUDA_CHECK_RETURN(cudaEventRecord(start));

cudaEventQuery(start);

addFromGPU <<<grid, block >>> (fpDevice_A, fpDevice_B, fpDevice_C, iElemntCount);

CUDA_CHECK_RETURN(cudaEventRecord(stop));

CUDA_CHECK_RETURN(cudaEventSynchronize(stop));

float elapsed_time = 0.0f;

CUDA_CHECK_RETURN(cudaEventElapsedTime(&elapsed_time, start, stop));

CUDA_CHECK_RETURN(cudaEventDestroy(start));

CUDA_CHECK_RETURN(cudaEventDestroy(stop));

printf("%d \t elapsed_time = %.2f \n", i, elapsed_time);

//CUDA_CHECK_RETURN(cudaMemcpy(fpHost_C, fpDevice_C, stBytesCount, cudaMemcpyDeviceToHost));

//for (size_t j = 0; j < iElemntCount; j++)

//{

// printf("%.2f + %.2f = %.2f \n", fpHost_A[j], fpHost_B[j], fpHost_C[j]);

//}

}

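// Free device memory

CUDA_CHECK_RETURN(cudaFree(fpDevice_A));

CUDA_CHECK_RETURN(cudaFree(fpDevice_B));

CUDA_CHECK_RETURN(cudaFree(fpDevice_C));

// Free host memory

delete[]fpHost_A;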

delete[]fpHost_B;

delete[]fpHost_C;

fpHost_A = nullptr;

fpHost_B = nullptr;

fpHost_C = nullptr;

return 0;

}

static void CheckCudaErrorAux(const char* file, unsigned line, const char* statement, cudaError_t err)

{

if (err == cudaSuccess)

return;

std::cerr << statement << " returned: "<< cudaGetErrorName(err) << " \t : " << cudaGetErrorString(err) << "(" << err << ") at " << file << ":" << line << std::endl;

exit(1);

}

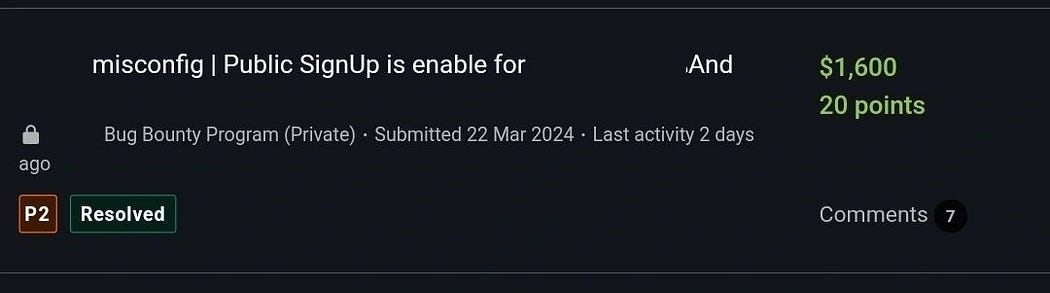

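Result:

The output shows that the first call takes the longest, most likely because the first kernel launch includes one-time initialization overhead.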
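
One common way to keep that first-call overhead out of the measurement is to treat the first iteration as a warm-up run and average only the remaining ones. The following is a minimal sketch under that assumption, not the original program: the `scaleKernel` kernel is a hypothetical stand-in for the real workload, and error checking is omitted for brevity.

```cuda
#include <cuda_runtime.h>
#include <cstdio>

#define NUM_REPEATS 10

// Hypothetical kernel standing in for the real workload.
__global__ void scaleKernel(float* data, int n)
{
    int id = blockIdx.x * blockDim.x + threadIdx.x;
    if (id < n)
    {
        data[id] = 2.0f * data[id];
    }
}

int main()
{
    const int n = 4096 * 10;
    float* d_data = nullptr;
    cudaMalloc((void**)&d_data, n * sizeof(float));
    cudaMemset(d_data, 0, n * sizeof(float));

    dim3 block(32);
    dim3 grid((n + block.x - 1) / block.x);

    // Create the events once and reuse them in every iteration.
    cudaEvent_t start, stop;
    cudaEventCreate(&start);
    cudaEventCreate(&stop);

    float total_ms = 0.0f;
    // Iteration 0 is the warm-up run; iterations 1..NUM_REPEATS are measured.
    for (int i = 0; i <= NUM_REPEATS; i++)
    {
        cudaEventRecord(start);
        cudaEventQuery(start); // return value ignored: cudaErrorNotReady is expected

        scaleKernel<<<grid, block>>>(d_data, n);

        cudaEventRecord(stop);
        cudaEventSynchronize(stop);

        float ms = 0.0f;
        cudaEventElapsedTime(&ms, start, stop);

        if (i == 0)
        {
            printf("warm-up run: %.3f ms (excluded from the average)\n", ms);
            continue;
        }
        total_ms += ms;
    }
    printf("average over %d runs: %.3f ms\n", NUM_REPEATS, total_ms / NUM_REPEATS);

    cudaEventDestroy(start);
    cudaEventDestroy(stop);
    cudaFree(d_data);
    return 0;
}
```

Reporting the warm-up time separately keeps the one-time cost visible while preventing it from skewing the average of the steady-state runs.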