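RDK™ X5 Robot Development Kit: the D-Robotics RDK X5 is built on the Sunrise 5 intelligent computing chip and delivers up to 10 TOPS of compute. It is an all-in-one development kit for intelligent computing and robotics applications, with rich interfaces and excellent ease of use.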

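This article uses the kit's small 32 GFLOPS GPU, which supports OpenCL, together with the MLC-LLM framework to run small language models. Qwen2.5-0.5B runs at roughly 0.5 tok/s and SmolLM-135M at roughly 2 tok/s.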

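Note: the core component of the RDK X5 is its 10 TOPS BPU, not the GPU. This article only experiments with the GPU for fun; it is not a production algorithm.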

Summary

- 0% BPU usage and nearly 0% CPU usage, so the main compute resources stay available for the primary algorithms.

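- int4 quantization is used. In theory its memory footprint and bandwidth are half those of int8 quantization, and 4.1 GB of memory remains free.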

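- The GPU is the compute device, which gives a small taste of general-purpose GPU computing. In theory, this OpenCL-capable GPU on the X5 can run any HuggingFace model whose architecture MLC-LLM supports, as long as the model fits in memory.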

- All code repositories and model weights involved are open source under the Apache 2.0 license.
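Here is a quick back-of-the-envelope check of the int4 versus int8 claim above. This Python sketch computes raw weight storage from a parameter count and the number of bits per weight. Two inputs are assumptions made for illustration. The 0.5e9 parameter count is a nominal figure. The q4f32_1-style layout (one fp32 scale per group of 32 weights) is also assumed. Activations, the KV cache and runtime overhead are ignored.

```python
def effective_bits(weight_bits: float, scale_bits: float = 0, group_size: int = 1) -> float:
    """Bits stored per weight, with an optional per-group scale spread over the group."""
    return weight_bits + scale_bits / group_size


def weight_mib(num_params: float, bits_per_weight: float) -> float:
    """Raw weight storage in MiB (weights only: no activations, KV cache or runtime)."""
    return num_params * bits_per_weight / 8 / (1024 ** 2)


if __name__ == "__main__":
    params = 0.5e9  # nominal "0.5B" model size, an assumption rather than the exact count
    int8 = weight_mib(params, effective_bits(8))
    int4 = weight_mib(params, effective_bits(4))
    # assumed q4f32_1-style layout: 4-bit weights plus one fp32 scale per 32 weights
    int4_scaled = weight_mib(params, effective_bits(4, scale_bits=32, group_size=32))
    print(f"int8 {int8:.0f} MiB | int4 {int4:.0f} MiB | int4 + fp32 group scales {int4_scaled:.0f} MiB")
```

With scales ignored, int4 is exactly half of int8. If a 32-bit scale is amortized over 32 weights, each weight costs 5 bits, so the int4 model takes about 62.5% of the int8 size rather than 50%.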

Demo

Bilibili:

https://www.bilibili.com/video/BV1Q428YfEKD

Qwen2.5-0.5B-Instruct-q4f32_1-MLC:

Sampling settings adjusted with: /set temperature=0.5;top_p=0.8;seed=23;max_tokens=60;

SmolLM-135M-Instruct-q4f32_1-MLC

RDK X5

It needs little introduction: the most capable robot development kit ever made in the under-1,000-RMB class.

MLC-LLM

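MLC-LLM acts as both a toolchain and an inference library. This article relies on the OpenCL support for Mali GPUs that MLC-LLM provides for its Android target.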

OpenCL

OpenCL is Widely Deployed and Used

OpenCL for Low-level Parallel Programming: OpenCL speeds applications by offloading their most computationally intensive code onto accelerator processors, or devices. OpenCL developers use C or C++ based kernel languages to code programs that are passed through a device compiler for parallel execution on accelerator devices.

Steps

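Note: some Linux experience is required. Double-check every file and path. If you see errors such as No such file or directory, No module named "xxx", or command not found, investigate them carefully. Do not blindly copy and run the commands line by line.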

Tune the RDK X5 for best performance

Overclock all cores to 1.8 GHz and use the performance governor throughout

sudo bash -c "echo 1 > /sys/devices/system/cpu/cpufreq/boost"

sudo bash -c "echo performance > /sys/devices/system/cpu/cpufreq/policy0/scaling_governor"
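To confirm that the two settings above took effect, a small Python sketch can read them back from sysfs. It assumes the standard Linux cpufreq layout under /sys/devices/system/cpu/cpufreq, with policyN/scaling_governor files and a boost file. On kernels that have no boost file, it reports None. These sysfs writes do not persist across reboots.

```python
from pathlib import Path
from typing import Dict, Optional

CPUFREQ_ROOT = "/sys/devices/system/cpu/cpufreq"


def read_governors(root: str = CPUFREQ_ROOT) -> Dict[str, str]:
    """Return {policyN: governor} for every cpufreq policy directory under root."""
    governors: Dict[str, str] = {}
    for policy in sorted(Path(root).glob("policy*")):
        gov_file = policy / "scaling_governor"
        if gov_file.is_file():
            governors[policy.name] = gov_file.read_text().strip()
    return governors


def boost_enabled(root: str = CPUFREQ_ROOT) -> Optional[bool]:
    """True/False if the boost switch exists, None if this kernel does not expose it."""
    boost_file = Path(root) / "boost"
    if not boost_file.is_file():
        return None
    return boost_file.read_text().strip() == "1"


if __name__ == "__main__":
    print("boost:", boost_enabled())
    for policy, governor in read_governors().items():
        flag = "" if governor == "performance" else "  <- not performance"
        print(f"{policy}: {governor}{flag}")
```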

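Remove packages that are not needed for now to save storage and memory. After a reboot, this leaves an RDK X5 environment that uses about 5.5 GB of storage and about 240 MB of memory.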

sudo apt remove '*xfce*'

sudo apt remove hobot-io-samples hobot-multimedia-samples hobot-models-basic

sudo apt autoremove

Enable 8 GB of swap space

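sudo fallocate -l 8G /swapfile # create a file to use as swap space, 8 GB in size

sudo chmod 600 /swapfile # keep regular users from reading it

sudo mkswap /swapfile # set up a Linux swap area on this file

sudo swapon /swapfile # activate the swap area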
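After swapon, the new swap should appear in /proc/meminfo. This Python sketch parses that file and prints available memory and swap; /proc/meminfo reports its values in kB. MemAvailable, SwapTotal and SwapFree are standard Linux /proc/meminfo keys. Running free -h shows the same information interactively.

```python
import os
from typing import Dict


def parse_meminfo(text: str) -> Dict[str, int]:
    """Parse /proc/meminfo-style text into {field: value_in_kB}."""
    fields: Dict[str, int] = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts and parts[0].isdigit():
            fields[key.strip()] = int(parts[0])
    return fields


def gib(kib: int) -> float:
    """Convert kB (KiB, as reported by /proc/meminfo) to GiB."""
    return kib / (1024 * 1024)


def memory_summary(fields: Dict[str, int]) -> str:
    return (
        f"MemAvailable {gib(fields.get('MemAvailable', 0)):.1f} GiB, "
        f"swap free {gib(fields.get('SwapFree', 0)):.1f} of "
        f"{gib(fields.get('SwapTotal', 0)):.1f} GiB"
    )


if __name__ == "__main__":
    if os.path.exists("/proc/meminfo"):
        with open("/proc/meminfo") as f:
            print(memory_summary(parse_meminfo(f.read())))
```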

Install Conda (optional)

wget https://mirrors.tuna.tsinghua.edu.cn/anaconda/miniconda/Miniconda3-py310_24.7.1-0-Linux-aarch64.sh

bash Miniconda3-py310_24.7.1-0-Linux-aarch64.sh

# answer the installer prompts with: Enter, q, Enter, yes

Install the required apt packages

sudo apt install gcc libtinfo-dev zlib1g-dev build-essential cmake libedit-dev libxml2-dev rustc cargo doxygen -y

sudo apt install -y git-lfs clinfo libgtest-dev

Verify the OpenCL driver

Run clinfo. Output like the following means the OpenCL driver is working.

The OpenCL driver is rock solid. Kudos to our system software colleagues 👍!

$ clinfo

Number of platforms 1

Platform Name Vivante OpenCL Platform

Platform Vendor Vivante Corporation

Platform Version OpenCL 3.0 V6.4.14.9.674707

Platform Profile FULL_PROFILE

Platform Extensions cl_khr_byte_addressable_store cl_khr_fp16 cl_khr_il_program cl_khr_global_int32_base_atomics cl_khr_global_int32_extended_atomics cl_khr_local_int32_base_atomics cl_khr_local_int32_extended_atomics cl_khr_gl_sharing cl_khr_command_buffer

Platform Extensions with Version cl_khr_byte_addressable_store 0x400000 (1.0.0)

cl_khr_fp16 0x400000 (1.0.0)

cl_khr_il_program 0x400000 (1.0.0)

cl_khr_global_int32_base_atomics 0x400000 (1.0.0)

cl_khr_global_int32_extended_atomics 0x400000 (1.0.0)

cl_khr_local_int32_base_atomics 0x400000 (1.0.0)

cl_khr_local_int32_extended_atomics 0x400000 (1.0.0)

cl_khr_gl_sharing 0x400000 (1.0.0)

cl_khr_command_buffer 0x400000 (1.0.0)

Platform Numeric Version 0xc00000 (3.0.0)

Platform Host timer resolution 0ns

Platform Name Vivante OpenCL Platform

Number of devices 1

Device Name Vivante OpenCL Device GC8000L.6214.0000

Device Vendor Vivante Corporation

Device Vendor ID 0x564956

Device Version OpenCL 3.0

Device Numeric Version 0xc00000 (3.0.0)

Driver Version OpenCL 3.0 V6.4.14.9.674707

Device OpenCL C Version OpenCL C 1.2

Device OpenCL C all versions OpenCL C 0x400000 (1.0.0)

OpenCL C 0x401000 (1.1.0)

OpenCL C 0x402000 (1.2.0)

OpenCL C 0xc00000 (3.0.0)

Device OpenCL C features __opencl_c_images 0x400000 (1.0.0)

__opencl_c_int64 0x400000 (1.0.0)

Latest conformance test passed v2021-03-25-00

Device Type GPU

Device Profile FULL_PROFILE

Device Available Yes

Compiler Available Yes

Linker Available Yes

Max compute units 1

Max clock frequency 996MHz

Device Partition (core)

Max number of sub-devices 0

Supported partition types None

Supported affinity domains (n/a)

Max work item dimensions 3

Max work item sizes 1024x1024x1024

Max work group size 1024

Preferred work group size multiple (device) 16

Preferred work group size multiple (kernel) 16

Max sub-groups per work group 0

Preferred / native vector sizes

char 4 / 4

short 4 / 4

int 4 / 4

long 4 / 4

half 4 / 4 (cl_khr_fp16)

float 4 / 4

double 0 / 0 (n/a)

Half-precision Floating-point support (cl_khr_fp16)

Denormals No

Infinity and NANs Yes

Round to nearest Yes

Round to zero Yes

Round to infinity No

IEEE754-2008 fused multiply-add No

Support is emulated in software No

Single-precision Floating-point support (core)

Denormals No

Infinity and NANs Yes

Round to nearest Yes

Round to zero Yes

Round to infinity No

IEEE754-2008 fused multiply-add No

Support is emulated in software No

Correctly-rounded divide and sqrt operations No

Double-precision Floating-point support (n/a)

Address bits 32, Little-Endian

Global memory size 268435456 (256MiB)

Error Correction support Yes

Max memory allocation 134217728 (128MiB)

Unified memory for Host and Device Yes

Shared Virtual Memory (SVM) capabilities (core)

Coarse-grained buffer sharing No

Fine-grained buffer sharing No

Fine-grained system sharing No

Atomics No

Minimum alignment for any data type 128 bytes

Alignment of base address 2048 bits (256 bytes)

Preferred alignment for atomics

SVM 0 bytes

Global 0 bytes

Local 0 bytes

Atomic memory capabilities relaxed, work-group scope

Atomic fence capabilities relaxed, acquire/release, work-group scope

Max size for global variable 0

Preferred total size of global vars 0

Global Memory cache type Read/Write

Global Memory cache size 8192 (8KiB)

Global Memory cache line size 64 bytes

Image support Yes

Max number of samplers per kernel 16

Max size for 1D images from buffer 65536 pixels

Max 1D or 2D image array size 8192 images

Max 2D image size 8192x8192 pixels

Max 3D image size 8192x8192x8192 pixels

Max number of read image args 128

Max number of write image args 8

Max number of read/write image args 0

Pipe support No

Max number of pipe args 0

Max active pipe reservations 0

Max pipe packet size 0

Local memory type Global

Local memory size 32768 (32KiB)

Max number of constant args 9

Max constant buffer size 65536 (64KiB)

Generic address space support No

Max size of kernel argument 1024

Queue properties (on host)

Out-of-order execution Yes

Profiling Yes

Device enqueue capabilities (n/a)

Queue properties (on device)

Out-of-order execution No

Profiling No

Preferred size 0

Max size 0

Max queues on device 0

Max events on device 0

Prefer user sync for interop Yes

Profiling timer resolution 1000ns

Execution capabilities

Run OpenCL kernels Yes

Run native kernels No

Non-uniform work-groups No

Work-group collective functions No

Sub-group independent forward progress No

IL version SPIR-V_1.5

ILs with version SPIR-V 0x405000 (1.5.0)

printf() buffer size 1048576 (1024KiB)

Built-in kernels (n/a)

Built-in kernels with version (n/a)

Device Extensions cl_khr_byte_addressable_store cl_khr_fp16 cl_khr_il_program cl_khr_global_int32_base_atomics cl_khr_global_int32_extended_atomics cl_khr_local_int32_base_atomics cl_khr_local_int32_extended_atomics cl_khr_gl_sharing cl_khr_command_buffer

Device Extensions with Version cl_khr_byte_addressable_store 0x400000 (1.0.0)

cl_khr_fp16 0x400000 (1.0.0)

cl_khr_il_program 0x400000 (1.0.0)

cl_khr_global_int32_base_atomics 0x400000 (1.0.0)

cl_khr_global_int32_extended_atomics 0x400000 (1.0.0)

cl_khr_local_int32_base_atomics 0x400000 (1.0.0)

cl_khr_local_int32_extended_atomics 0x400000 (1.0.0)

cl_khr_gl_sharing 0x400000 (1.0.0)

cl_khr_command_buffer 0x400000 (1.0.0)

NULL platform behavior

clGetPlatformInfo(NULL, CL_PLATFORM_NAME, ...) No platform

clGetDeviceIDs(NULL, CL_DEVICE_TYPE_ALL, ...) Success [P0]

clCreateContext(NULL, ...) [default] Success [P0]

clCreateContextFromType(NULL, CL_DEVICE_TYPE_DEFAULT) Success (1)

Platform Name Vivante OpenCL Platform

Device Name Vivante OpenCL Device GC8000L.6214.0000

clCreateContextFromType(NULL, CL_DEVICE_TYPE_CPU) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_GPU) Success (1)

Platform Name Vivante OpenCL Platform

Device Name Vivante OpenCL Device GC8000L.6214.0000

clCreateContextFromType(NULL, CL_DEVICE_TYPE_ACCELERATOR) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_CUSTOM) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_ALL) Success (1)

Platform Name Vivante OpenCL Platform

Device Name Vivante OpenCL Device GC8000L.6214.0000

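This output describes the detailed specifications of the Vivante OpenCL Platform and its device. It shows no direct technical problem or error, but a few points are worth noting or may need further investigation: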

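- Both Half-precision and Single-precision Floating-point support report Denormals: No, so the device does not support denormal (subnormal) numbers. This may affect applications that are sensitive to numerical precision.

- Double-precision Floating-point support is (n/a), and the preferred/native double vector width is 0 / 0, so the device does not support double-precision floating point. This can be a limiting factor for applications that need high precision.

- Max compute units is only 1. The GPU's parallel processing capacity is therefore limited, especially for complex graphics or compute-intensive workloads.

- Sub-group independent forward progress is No, so applications that depend on this feature need a different approach.

- Profiling is No under Queue properties (on device). This applies only to device-side queues. Under Queue properties (on host), Profiling is Yes, so kernel timings can still be collected from the host.

- Preferred size and Max size under Queue properties (on device) are both 0. Device enqueue capabilities is also (n/a) and Max queues on device is 0. Together these indicate that device-side enqueue is not supported at all. The zeros do not mean the queue size is unlimited.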
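Scrolling through the full clinfo dump is tedious. A Python sketch can pull out the handful of fields discussed above. It assumes clinfo's default human-readable output, with "Device Name", "Device Type", "Device Extensions" and "Global memory size" at the start of a line. It only looks at the first device listed.

```python
import re
import shutil
import subprocess
from typing import Dict


def summarize_clinfo(text: str) -> Dict[str, object]:
    """Extract a few fields from `clinfo` text output (first match only)."""
    def first(label: str) -> str:
        # [ \t] instead of \s so a match never spills onto the next line
        match = re.search(rf"^[ \t]*{label}[ \t]+(.+)$", text, flags=re.MULTILINE)
        return match.group(1).strip() if match else ""

    # "Device Extensions with Version" is skipped because its value does not start with cl_
    extensions = first(r"Device Extensions(?=[ \t]+cl_)").split()
    return {
        "device_name": first("Device Name"),
        "device_type": first("Device Type"),
        "fp16": "cl_khr_fp16" in extensions,
        "global_mem": first("Global memory size"),
    }


if __name__ == "__main__":
    if shutil.which("clinfo") is None:
        print("clinfo not installed (sudo apt install clinfo)")
    else:
        out = subprocess.run(["clinfo"], capture_output=True, text=True).stdout
        print(summarize_clinfo(out))
```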

Install Rust

Reference (Aliyun mirror): https://developer.aliyun.com/mirror/rustup

# official Rust installer

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

# or use the Aliyun install script

curl --proto '=https' --tlsv1.2 -sSf https://mirrors.aliyun.com/repo/rust/rustup-init.sh | sh

Run rustc --version. Output like the following means the installation succeeded.

$ source ~/.bashrc

$ rustc --version

rustc 1.81.0 (eeb90cda1 2024-09-04)

To switch Rust to the Aliyun mirror, add the following lines to .bashrc:

export RUSTUP_UPDATE_ROOT=https://mirrors.aliyun.com/rustup/rustup

export RUSTUP_DIST_SERVER=https://mirrors.aliyun.com/rustup

Build CMake from source

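Get a recent CMake. Version 3.24 or later is required, but the board ships 3.22, which cannot be upgraded via apt. You can either download the prebuilt installer script or clone the source; the build steps below use the source:

wget https://github.com/Kitware/CMake/releases/download/v3.30.5/cmake-3.30.5-linux-aarch64.sh

git clone https://github.com/Kitware/CMake.git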

Build & install

cd CMake

./bootstrap

make

sudo make install

Verify the installed version with cmake --version.
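The >= 3.24 requirement can be checked in a script before starting the TVM build. This Python sketch parses the output of cmake --version, which has the form "cmake version X.Y.Z", and compares the major and minor numbers. The threshold is the one stated in this article. The source build installs into /usr/local/bin by default, so which cmake comes first on PATH also matters. shutil.which shows the binary that will actually be used.

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple

MIN_CMAKE = (3, 24)  # minimum version stated in this article


def parse_cmake_version(text: str) -> Optional[Tuple[int, int, int]]:
    """Extract (major, minor, patch) from `cmake --version` output, or None."""
    match = re.search(r"cmake version (\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        return None
    major, minor, patch = (int(g) for g in match.groups())
    return major, minor, patch


def cmake_ok(version: Optional[Tuple[int, int, int]], minimum: Tuple[int, int] = MIN_CMAKE) -> bool:
    """True if the version is known and its (major, minor) is at least `minimum`."""
    return version is not None and version[:2] >= minimum


if __name__ == "__main__":
    cmake = shutil.which("cmake")
    if cmake is None:
        print("cmake not found on PATH")
    else:
        out = subprocess.run([cmake, "--version"], capture_output=True, text=True).stdout
        version = parse_cmake_version(out)
        print(cmake, version, "OK" if cmake_ok(version) else "too old for TVM (need >= 3.24)")
```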

Build the TVM Unity compiler from source

Download LLVM

# 18.1.8

wget https://github.com/llvm/llvm-project/releases/download/llvmorg-18.1.8/clang+llvm-18.1.8-aarch64-linux-gnu.tar.xz

tar -xvf clang+llvm-18.1.8-aarch64-linux-gnu.tar.xz

Clone the TVM repository

git clone --recursive https://github.com/mlc-ai/relax.git tvm_unity

Here, git clone --recursive clones a repository that contains submodules and recursively clones all of its submodules as well.

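If a poor network connection interrupts the clone, you can run git clone again. If it reports that the destination directory already exists and is not empty, enter the repository directory and initialize and update the submodules manually: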

git submodule update --init --recursive

Build

cd tvm_unity/

mkdir -p build && cd build

cp ../cmake/config.cmake .

Edit the following entries in config.cmake with vim:

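set(CMAKE_BUILD_TYPE RelWithDebInfo) # not in the file; add it

set(USE_OPENCL ON) # already in the file; change it

set(HIDE_PRIVATE_SYMBOLS ON) # not in the file; add it

set(USE_LLVM /media/rootfs/gpu_llm_sd/clang+llvm-17.0.2-aarch64-linux-gnu/bin/llvm-config) # point this at the llvm-config of the LLVM release you extracted (18.1.8 above)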
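You can also apply the same four edits with a script instead of vim. This Python sketch replaces any existing set(KEY ...) line for each key and appends the keys that are missing, which matches the "change it" and "add it" notes above. The script name and the llvm-config path passed on the command line are placeholders. The script prints the new file rather than overwriting config.cmake.

```python
import re
import sys
from pathlib import Path
from typing import Dict


def apply_cmake_settings(text: str, settings: Dict[str, str]) -> str:
    """Replace existing `set(KEY ...)` lines and append `set(KEY VALUE)` for missing keys."""
    lines = text.splitlines()
    for key, value in settings.items():
        # match `set(KEY<whitespace>` at line start, so USE_OPENCL does not hit USE_OPENCL_GTEST
        pattern = re.compile(rf"^\s*set\(\s*{re.escape(key)}\s")
        new_line = f"set({key} {value})"
        hits = [i for i, line in enumerate(lines) if pattern.match(line)]
        for i in hits:
            lines[i] = new_line
        if not hits:
            lines.append(new_line)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # hypothetical usage: python3 edit_config.py config.cmake /path/to/llvm-config > config.cmake.new
    if len(sys.argv) == 3:
        config_path, llvm_config = sys.argv[1], sys.argv[2]
        settings = {
            "CMAKE_BUILD_TYPE": "RelWithDebInfo",
            "USE_OPENCL": "ON",
            "HIDE_PRIVATE_SYMBOLS": "ON",
            "USE_LLVM": llvm_config,
        }
        print(apply_cmake_settings(Path(config_path).read_text(), settings), end="")
```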

Start the build. Memory becomes extremely tight as the build approaches 100%, so be patient.

cmake ..

make -j6 # why not -j8? To leave two cores free for cloning the code for the next step, haha

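Install TVM. The installation builds a wheel, which is very slow, so please be patient. If you interrupt it with Ctrl+C, you may need to rebuild; otherwise Python will keep reporting errors.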

cd ../python

pip3 install --user .

Add the environment variables to .bashrc, then activate them with source ~/.bashrc:

export PATH="$PATH:/root/.local/bin"

export PYTHONPATH=/media/rootfs/gpu_llm/tvm_unity/python:$PYTHONPATH

After a successful installation, running tvmc prints the following:

$ tvmc

usage: tvmc [--config CONFIG] [-v] [--version] [-h] {run,tune,compile} ...

TVM compiler driver

options:

--config CONFIG configuration json file

-v, --verbose increase verbosity

--version print the version and exit

-h, --help show this help message and exit.

commands:

{run,tune,compile}

run run a compiled module

tune auto-tune a model

compile compile a model.

TVMC - TVM driver command-line interface

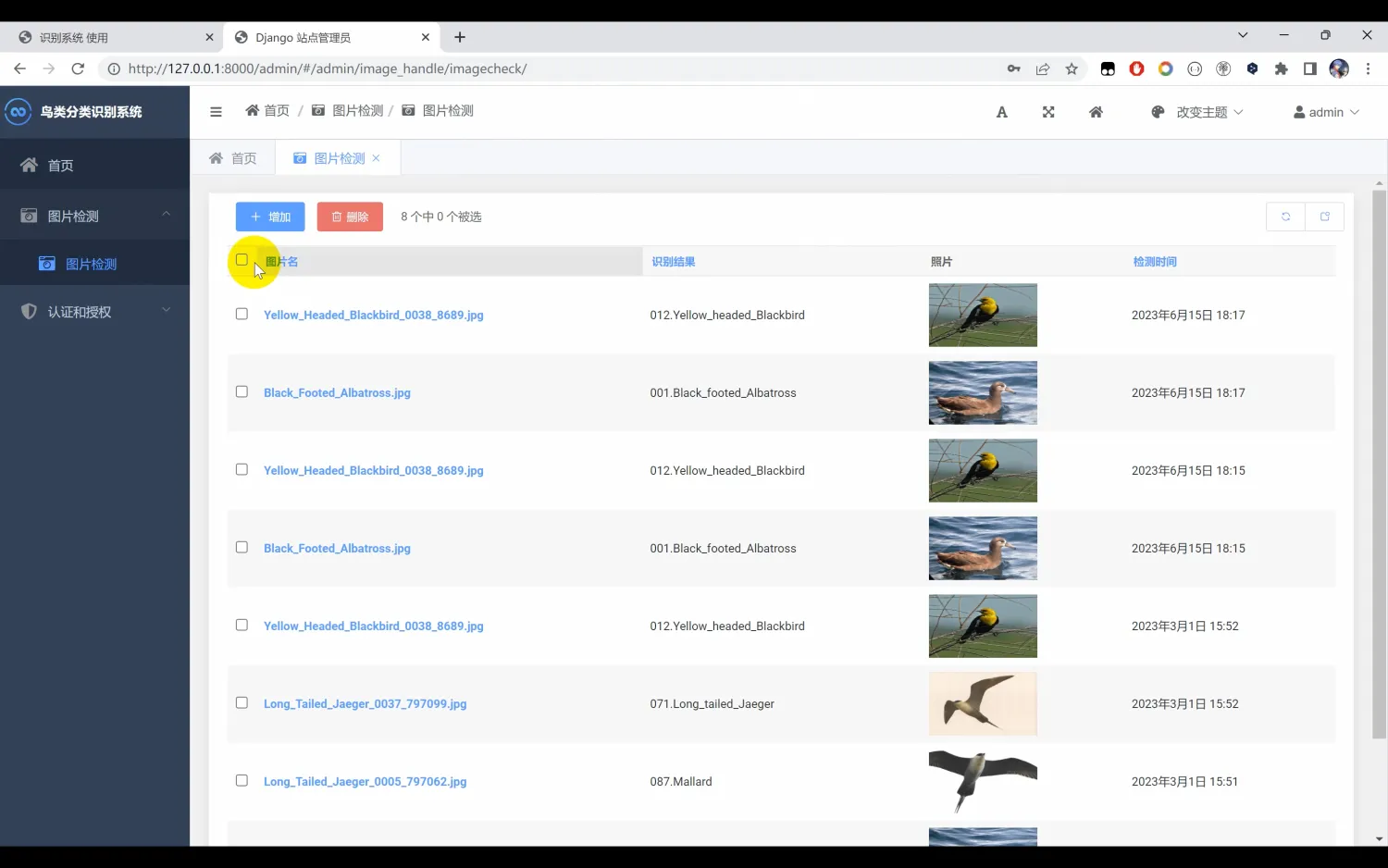

You can also confirm from the corresponding Python 3 interpreter that the OpenCL device exists:

>>> import tvm

>>> tvm.opencl().exist

True

![image](https://i-blog.csdnimg.cn/direct/671c8a9363e24231a877bf66f85f2b5c.png)

Build MLC-LLM from source

Clone the project

git clone --recursive https://github.com/mlc-ai/mlc-llm.git

cd mlc-llm

# git submodule update --init --recursive # if the clone was interrupted

Build from source

# create build directory

mkdir -p build && cd build

# generate build configuration

python ../cmake/gen_cmake_config.py

# build mlc_llm libraries

cmake .. && cmake --build . --parallel $(nproc) && cd ..

Configure environment variables

export MLC_LLM_SOURCE_DIR=/media/rootfs/gpu_llm/mlc-llm

export PYTHONPATH=$MLC_LLM_SOURCE_DIR/python:$PYTHONPATH

alias mlc_llm="python -m mlc_llm"

Python packages that may be missing

pip install pydantic shortuuid fastapi requests tqdm prompt-toolkit safetensors torch
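tvm and mlc_llm are found through PYTHONPATH rather than installed with pip, so a missing module can come from either source. This Python sketch uses importlib.util.find_spec to list the import names that cannot be found, without actually importing them. Note that the pip package prompt-toolkit is imported as prompt_toolkit.

```python
import importlib.util
from typing import Iterable, List

# import names (not pip names) for the packages above, plus the two built from source
REQUIRED = [
    "pydantic", "shortuuid", "fastapi", "requests", "tqdm",
    "prompt_toolkit", "safetensors", "torch",
    "tvm", "mlc_llm",
]


def missing_modules(import_names: Iterable[str]) -> List[str]:
    """Return the top-level import names that cannot be located on sys.path."""
    return [name for name in import_names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED)
    print("all found" if not missing else "missing: " + ", ".join(missing))
```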

Run mlc_llm chat -h. Output like the following means the setup succeeded:

$ mlc_llm chat -h

usage: MLC LLM Chat CLI [-h] [--device DEVICE] [--model-lib MODEL_LIB] [--overrides OVERRIDES] model

positional arguments:

model A path to ``mlc-chat-config.json``, or an MLC model directory that contains

`mlc-chat-config.json`. It can also be a link to a HF repository pointing to an

MLC compiled model. (required)

options:

-h, --help show this help message and exit

--device DEVICE The device used to deploy the model such as "cuda" or "cuda:0". Will detect

from local available GPUs if not specified. (default: "auto")

--model-lib MODEL_LIB

The full path to the model library file to use (e.g. a ``.so`` file). If

unspecified, we will use the provided ``model`` to search over possible paths.

It the model lib is not found, it will be compiled in a JIT manner. (default:

"None")

--overrides OVERRIDES

Model configuration override. Supports overriding, `context_window_size`,

`prefill_chunk_size`, `sliding_window_size`, `attention_sink_size`,

`max_num_sequence` and `tensor_parallel_shards`. The overrides could be

explicitly specified via details knobs, e.g. --overrides

"context_window_size=1024;prefill_chunk_size=128". (default: "")

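Two strings in this article share the same semicolon-separated key=value format. One is the --overrides value in the help text above. The other is the /set command used in the demo (temperature=0.5;top_p=0.8;seed=23;max_tokens=60;). When scripting runs, a small Python helper like this sketch can build and parse such strings. It only handles the syntax. It does not check which keys MLC-LLM actually accepts.

```python
from typing import Dict, Mapping


def parse_kv_string(spec: str) -> Dict[str, str]:
    """Split 'a=1;b=2;' into {'a': '1', 'b': '2'}; empty segments (e.g. a trailing ';') are skipped."""
    result: Dict[str, str] = {}
    for segment in spec.split(";"):
        segment = segment.strip()
        if not segment:
            continue
        key, sep, value = segment.partition("=")
        if not sep or not key.strip():
            raise ValueError(f"expected key=value, got {segment!r}")
        result[key.strip()] = value.strip()
    return result


def format_kv_string(settings: Mapping[str, object]) -> str:
    """Join settings into 'a=1;b=2' (no trailing semicolon)."""
    return ";".join(f"{key}={value}" for key, value in settings.items())


if __name__ == "__main__":
    print(parse_kv_string("temperature=0.5;top_p=0.8;seed=23;max_tokens=60;"))
    print(format_kv_string({"context_window_size": 1024, "prefill_chunk_size": 128}))
```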
Ex1. Small language model: SmolLM

Download the pre-quantized int4 models (see the SmolLM links in the References)

Compile the models

# 135M

mlc_llm compile dist/SmolLM-135M-Instruct-q4f32_1-MLC/mlc-chat-config.json \

--device opencl \

--output libs/SmolLM-135M-Instruct-q4f32_1-MLC.so

# 360M

mlc_llm compile dist/SmolLM-360M-Instruct-q4f32_1-MLC/mlc-chat-config.json \

--device opencl \

--output libs/SmolLM-360M-Instruct-q4f32_1-MLC.so

Run chat

# 135M

mlc_llm chat dist/SmolLM-135M-Instruct-q4f32_1-MLC \

--device opencl \

--model-lib libs/SmolLM-135M-Instruct-q4f32_1-MLC.so

# 360M

mlc_llm chat dist/SmolLM-360M-Instruct-q4f32_1-MLC \

--device opencl \

--model-lib libs/SmolLM-360M-Instruct-q4f32_1-MLC.so
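The compile and chat commands above follow one naming convention: the weights live in dist/<model>/ and the compiled library goes to libs/<model>.so. This Python sketch builds both argument lists from a model name, so the runs can be scripted. The article defines mlc_llm as a shell alias, and subprocess cannot see aliases. The sketch therefore invokes python -m mlc_llm through the current interpreter. The extra --quantization and --model-type flags used for Qwen2.5 in Ex2 are not included.

```python
import shlex
import sys
from pathlib import Path
from typing import List

# `mlc_llm` is a shell alias in this article, so call the module through the current interpreter
MLC_LLM = [sys.executable, "-m", "mlc_llm"]


def compile_cmd(model: str, dist: str = "dist", libs: str = "libs", device: str = "opencl") -> List[str]:
    """argv for compiling dist/<model>/mlc-chat-config.json into libs/<model>.so."""
    config = Path(dist) / model / "mlc-chat-config.json"
    lib = Path(libs) / f"{model}.so"
    return [*MLC_LLM, "compile", str(config), "--device", device, "--output", str(lib)]


def chat_cmd(model: str, dist: str = "dist", libs: str = "libs", device: str = "opencl") -> List[str]:
    """argv for chatting with dist/<model> using the prebuilt libs/<model>.so."""
    lib = Path(libs) / f"{model}.so"
    return [*MLC_LLM, "chat", str(Path(dist) / model), "--device", device, "--model-lib", str(lib)]


if __name__ == "__main__":
    for model in ["SmolLM-135M-Instruct-q4f32_1-MLC", "SmolLM-360M-Instruct-q4f32_1-MLC"]:
        print(shlex.join(compile_cmd(model)))
        print(shlex.join(chat_cmd(model)))
        # to actually run one: subprocess.run(compile_cmd(model), check=True)
```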

Ex2. Tongyi Qianwen (Qwen): Qwen2.5-0.5B

Download the pre-quantized int4 model

https://huggingface.co/mlc-ai/Qwen2.5-0.5B-Instruct-q4f32_1-MLC

Compile the model

mlc_llm compile dist/Qwen2.5-0.5B-Instruct-q4f32_1-MLC/mlc-chat-config.json \

--quantization q4f32_1 \

--model-type qwen2 \

--device opencl \

--output libs/Qwen2.5-0.5B-Instruct-q4f32_1-MLC.so

Run chat

mlc_llm chat dist/Qwen2.5-0.5B-Instruct-q4f32_1-MLC/ \

--device opencl \

--model-lib libs/Qwen2.5-0.5B-Instruct-q4f32_1-MLC.so

Adjust ION memory with the srpi-config command

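GPUs in embedded devices usually have no dedicated video memory; they share system memory with other IP blocks, so this shared region needs to be enlarged. Note that the X5 reserves all of this memory up front, so the available memory that Ubuntu reports shrinks accordingly.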

Performance monitoring commands

CPU and memory usage (shows that CPU usage is very low)

htop

GPU usage

cat /sys/kernel/debug/gc/load

# watch -n 2 cat /sys/kernel/debug/gc/load

BPU usage (shows that the BPU is not involved in the computation)

hrut_somstatus

# watch -n 2 hrut_somstatus

Storage usage

df -h ~

# watch -n 2 df -h ~
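For longer runs it can be handy to sample the monitoring sources from one script. This Python sketch prints a file's raw contents every few seconds, like watch -n 2 cat. It does not interpret the format of /sys/kernel/debug/gc/load, which this article does not document. debugfs normally requires root, so run the script with sudo.

```python
import time
from pathlib import Path
from typing import List

GPU_LOAD = "/sys/kernel/debug/gc/load"  # GPU load file used in this article (debugfs, usually root-only)


def read_text_or_reason(path: str) -> str:
    """File contents, or a short explanation of why they could not be read."""
    try:
        return Path(path).read_text().strip()
    except FileNotFoundError:
        return "<unavailable: not found>"
    except PermissionError:
        return "<unavailable: permission denied, try sudo>"
    except OSError as exc:
        return f"<unavailable: {exc}>"


def watch(paths: List[str], interval: float = 2.0, iterations: int = 0) -> None:
    """Print every path each `interval` seconds; iterations=0 means run until Ctrl+C."""
    done = 0
    while iterations == 0 or done < iterations:
        stamp = time.strftime("%H:%M:%S")
        for path in paths:
            print(f"[{stamp}] {path}\n{read_text_or_reason(path)}")
        done += 1
        if iterations == 0 or done < iterations:
            time.sleep(interval)
```

For example, `watch([GPU_LOAD], interval=2.0)` run as root mirrors `watch -n 2 cat /sys/kernel/debug/gc/load`.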

Test prompts

Please introduce yourself.

Helium walks into a bar. The bartender says, "We don't serve noble gases in here." Helium doesn't react. This joke is funny because what?

Find one of the following options that is different from the others: (1) water (2) the sun (3) gasoline (4) the wind (5) cement

Find one of the following numbers in particular: (1) 1 (2) 2 (3) 5 (4) 7 (5) 11 (6) 13 (7) 15

Tell a story about love

References

Robot development kit introduction: https://developer.d-robotics.cc/rdkx5

OpenCL standard: https://www.khronos.org/api/index_2017/opencl

LLVM documentation: https://llvm.org/docs/GettingStarted.html#getting-the-source-code-and-building-llvm

LLVM GitHub: https://github.com/llvm/llvm-project/

TVM documentation: https://tvm.apache.org/docs/

TVM Unity (mlc-ai/relax) GitHub: https://github.com/mlc-ai/relax

MLC-LLM website: https://llm.mlc.ai/

MLC-LLM documentation: https://llm.mlc.ai/docs/get_started/quick_start

MLC-LLM GitHub: https://github.com/mlc-ai/mlc-llm

Rust website: https://www.rust-lang.org

Rust GitHub: https://github.com/rust-lang/rust

Cargo GitHub: https://github.com/rust-lang/cargo

Qwen2.5-0.5B: https://huggingface.co/Qwen/Qwen2.5-0.5B

Qwen2.5-0.5B-Instruct: https://huggingface.co/Qwen/Qwen2.5-0.5B-Instruct

Qwen2.5-1.5B-Instruct: https://huggingface.co/Qwen/Qwen2.5-1.5B-Instruct

Qwen2.5-0.5B-Instruct-q4f16_1-MLC:

https://huggingface.co/mlc-ai/Qwen2.5-0.5B-Instruct-q4f16_1-MLC

Qwen2.5-0.5B-Instruct-q4f32_1-MLC:

https://huggingface.co/mlc-ai/Qwen2.5-0.5B-Instruct-q4f32_1-MLC

SmolLM-135M-Instruct-q4f32_1-MLC:

https://huggingface.co/mlc-ai/SmolLM-135M-Instruct-q4f32_1-MLC

SmolLM-360M-Instruct-q4f32_1-MLC:

https://huggingface.co/mlc-ai/SmolLM-360M-Instruct-q4f32_1-MLC