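Services

Q: Controllers run the cluster's workloads, but how is an application exposed?

A: It has to be exposed through a Service before it can be accessed.

- A Service is the interface through which a group of Pods providing the same function is made accessible

- With a Service, an application gets service discovery and load balancing

- A Service only provides layer-4 load balancing by default; layer-7 features require Ingress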

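Service types

| Service type | Description |
|---|---|
| ClusterIP | Default. Kubernetes automatically assigns the Service a virtual IP that is reachable only from inside the cluster |
| NodePort | Exposes the Service on a port of the nodes; a request to any NodeIP:nodePort is routed to the ClusterIP |
| LoadBalancer | Builds on NodePort: the cloud provider creates an external load balancer that forwards requests to NodeIP:NodePort. Works out of the box only on cloud platforms |
| ExternalName | Maps the Service to a given domain name through a DNS CNAME record (set with spec.externalName) |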

Example

[root@k8s-master ~]# kubectl create deployment mini --image myapp:v1 --replicas 2

# Generate the Deployment manifest

[root@k8s-master ~]# kubectl create deployment mini --image myapp:v1 --replicas 2 --dry-run=client -o yaml > mini.yaml

# Generate the Service YAML and append it to the existing file

[root@k8s-master ~]# kubectl expose deployment mini --port 80 --target-port 80 --dry-run=client -o yaml >> mini.yaml

[root@k8s-master ~]# kubectl delete deployments.apps mini

[root@k8s-master ~]# vim mini.yaml

[root@k8s-master ~]# kubectl apply -f mini.yaml

[root@k8s-master ~]# kubectl get service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 9d

mini ClusterIP 10.104.255.78 <none> 80/TCP 42s

By default, Services are scheduled with iptables

# The rules are visible in the firewall tables (usually near the bottom)

[root@k8s-master ~]# iptables -t nat -nL

...

KUBE-MARK-MASQ 6 -- !10.244.0.0/16 10.104.255.78 /* default/mini cluster IP */ tcp dpt:80

...

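IPVS mode

- A Service is implemented by the kube-proxy component together with iptables

- When kube-proxy handles Services through iptables, it has to install a large number of iptables rules on the host; with many Pods on the host, the constant rule refreshes consume a lot of CPU

- Services in IPVS mode let a Kubernetes cluster scale to far more Pods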
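To get a feel for how many rules kube-proxy maintains in iptables mode, the rules it appends to its own chains can be counted. The sketch below is only an illustration: the function name `count_kube_rules` and the sample text are made up for this example, and it assumes the kube-proxy chains start with `KUBE-`, as in the `iptables -t nat -nL` output shown earlier.

```shell
#!/bin/sh
# Count rules appended to chains whose name starts with KUBE-,
# reading "iptables-save" style text from stdin.
count_kube_rules() {
    # grep -c exits non-zero when nothing matches, so keep the pipeline alive
    grep -c -- '^-A KUBE-' || true
}

# Illustrative sample, not captured from a real cluster.
sample='-A KUBE-SERVICES -d 10.104.255.78/32 -p tcp --dport 80 -j KUBE-SVC-EXAMPLE
-A KUBE-SVC-EXAMPLE -j KUBE-SEP-ONE
-A POSTROUTING -j MASQUERADE'

printf '%s\n' "$sample" | count_kube_rules
```

On a node, the same function could be fed with `iptables-save -t nat | count_kube_rules`; the number grows with the number of Services and Pods.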

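IPVS configuration

# Install ipvsadm on all nodes

dnf install ipvsadm -y

# Edit the kube-proxy configuration from the master node

[root@k8s-master ~]# kubectl -n kube-system edit cm kube-proxy

metricsBindAddress: ""

mode: "ipvs"

# Sets kube-proxy to IPVS mode

nftables:

# Running Pods do not pick up the changed configuration; the kube-proxy Pods must be restarted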

[root@k8s-master ~]# kubectl -n kube-system get pods | awk '/kube-proxy/{system("kubectl -n kube-system delete pods "$1)}'

[root@k8s-master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE

mini-d5496d8f4-75khx 1/1 Running 0 15m 10.244.2.47 k8s-node2.org

mini-d5496d8f4-792mb 1/1 Running 0 15m 10.244.1.70 k8s-node1.org

[root@k8s-master ~]# ipvsadm -Ln

...

TCP 10.104.255.78:80 rr

-> 10.244.1.70:80 Masq 1 0 0

-> 10.244.2.47:80 Masq 1 0 0

...

After switching to IPVS mode, kube-proxy adds a virtual interface, kube-ipvs0, on the host and assigns all Service IPs to it

[root@k8s-master ~]# ip a | tail

...

inet 10.96.0.10/32 scope global kube-ipvs0

valid_lft forever preferred_lft forever

Service types in depth

ClusterIP

A ClusterIP Service is reachable only inside the cluster; it provides health checking and automatic discovery for the Pods in the cluster

ClusterIP example

[root@k8s-master ~]# vim mini.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: mini
  name: mini
spec:
  replicas: 2
  selector:
    matchLabels:
      app: mini
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: mini
    spec:
      containers:
      - image: myapp:v1
        name: myapp
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: mini
  name: mini
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: mini
  type: ClusterIP

# After the Service is created, the cluster DNS resolves its name

[root@k8s-master ~]# dnf install bind-utils -y

[root@k8s-master ~]# dig mini.default.svc.cluster.local @10.96.0.10

...

;; ANSWER SECTION:

mini.default.svc.cluster.local. 30 IN A 10.104.255.78

;; Query time: 3 msec

;; SERVER: 10.96.0.10#53(10.96.0.10)

;; WHEN: Wed Oct 09 21:07:57 CST 2024

;; MSG SIZE rcvd: 117
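The name queried with dig follows the pattern service.namespace.svc.cluster-domain. As a small sketch, the helper below (the name `svc_fqdn` is invented for this example) assembles that name; the `default` namespace and the `cluster.local` domain are taken from the output above and are assumed as defaults.

```shell
#!/bin/sh
# Build the cluster DNS name of a Service.
# $1 = Service name, $2 = namespace (default: default),
# $3 = cluster domain (default: cluster.local)
svc_fqdn() {
    printf '%s.%s.svc.%s\n' "$1" "${2:-default}" "${3:-cluster.local}"
}

svc_fqdn mini   # prints mini.default.svc.cluster.local
```

The result can be passed straight to dig, for example `dig "$(svc_fqdn mini)" @10.96.0.10`.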

Another ClusterIP mode: headless

Headless Services

A headless Service is not assigned a Cluster IP, kube-proxy does not handle it, and the platform does no load balancing or routing for it. Access inside the cluster goes through DNS, which resolves directly to the IPs of the backing Pods, so all scheduling is done by DNS alone

Headless example

[root@k8s-master ~]# vim mini.yaml

...

  selector:
    app: mini
  type: ClusterIP
  clusterIP: None

[root@k8s-master ~]# kubectl delete -f mini.yaml

[root@k8s-master ~]# kubectl apply -f mini.yaml

# Test

[root@k8s-master ~]# kubectl get service mini

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

mini ClusterIP None <none> 80/TCP 18s

[root@k8s-master ~]# dig mini.default.svc.cluster.local @10.96.0.10

# mini.default.svc.cluster.local. is the Service's cluster DNS name

...

;; ANSWER SECTION:

mini.default.svc.cluster.local. 30 IN A 10.244.2.48

# Resolves directly to the Pods

mini.default.svc.cluster.local. 30 IN A 10.244.1.71

...

kubectl get services mini

[root@k8s-master ~]# kubectl run ovo --image busyboxplus -it

/ # nslookup mini

/ # nslookup mini.default.svc.cluster.local.

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: mini.default.svc.cluster.local.

Address 1: 10.244.1.71 10-244-1-71.mini.default.svc.cluster.local

Address 2: 10.244.2.48 10-244-2-48.mini.default.svc.cluster.local

/ # curl mini

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

/ # curl mini/hostname.html

mini-d5496d8f4-228g5

[root@k8s-master ~]# kubectl describe service mini

...

Endpoints: 10.244.1.71:80,10.244.2.48:80

...
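The nslookup output above shows per-Pod names such as 10-244-1-71.mini.default.svc.cluster.local, that is, the Pod IP with dots replaced by dashes, followed by the Service name. A minimal sketch of that mapping, where the helper name `pod_dns_name` is hypothetical and the `cluster.local` domain is assumed from the output:

```shell
#!/bin/sh
# Derive the per-Pod DNS name seen behind a headless Service.
# $1 = Pod IP, $2 = Service name, $3 = namespace (default: default)
pod_dns_name() {
    printf '%s.%s.%s.svc.cluster.local\n' \
        "$(printf '%s' "$1" | tr '.' '-')" "$2" "${3:-default}"
}

pod_dns_name 10.244.1.71 mini   # prints 10-244-1-71.mini.default.svc.cluster.local
```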

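NodePort

Exposes a port through IPVS so that external hosts can reach the Pods through the master node's external IP:Port (any node's IP works the same way)

Access path: NodePort -> ClusterIP -> Pods

NodePort example

[root@k8s-master ~]# vim mini.yaml

...

  selector:
    app: mini
  type: NodePort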

[root@k8s-master ~]# kubectl delete -f mini.yaml

[root@k8s-master ~]# kubectl apply -f mini.yaml

[root@k8s-master ~]# kubectl get services mini

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

mini NodePort 10.96.170.18 <none> 80:30835/TCP 3m22s

# NodePort binds a port on the cluster nodes; one port corresponds to one Service

[root@k8s-master ~]# kubectl describe service mini

...

NodePort: <unset> 30835/TCP

...

[root@k8s-master ~]# for i in {1..5}

> do

> curl 172.25.254.200:30835/hostname.html

> done

mini-d5496d8f4-cts8s

mini-d5496d8f4-9v24v

mini-d5496d8f4-cts8s

mini-d5496d8f4-9v24v

mini-d5496d8f4-cts8s

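The default NodePort range is 30000-32767; a port outside it is rejected with an error

To use a port outside this range, the range has to be changed explicitly

vim /etc/kubernetes/manifests/kube-apiserver.yaml

# Add under "- command:"

- --service-node-port-range=30000-40000

The --service-node-port-range= flag accepts a custom port range

After the file is saved, the API server restarts automatically; wait until it is running again before operating on the cluster

The restart needs no manual intervention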
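Before putting a nodePort into a manifest, it can help to check it against the configured range. The following is a rough sketch; the function name `nodeport_in_range` is made up here, and the default bounds 30000 and 32767 are the ones stated above.

```shell
#!/bin/sh
# Return success when a port lies inside the NodePort range.
# $1 = port, $2 = lower bound (default 30000), $3 = upper bound (default 32767)
nodeport_in_range() {
    [ "$1" -ge "${2:-30000}" ] && [ "$1" -le "${3:-32767}" ]
}

for port in 30835 33333; do
    if nodeport_in_range "$port"; then
        echo "$port: inside the default range"
    else
        echo "$port: outside the default range"
    fi
done
```

With the extended range from the kube-apiserver flag above, `nodeport_in_range 33333 30000 40000` succeeds.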

LoadBalancer

A cloud platform assigns a VIP and makes the Service reachable; on bare-metal hosts MetalLB is needed to assign the IP

Path: LoadBalancer -> NodePort -> ClusterIP -> Pods

LoadBalancer example

[root@k8s-master ~]# vim mini.yaml

...

  selector:
    app: mini
  type: LoadBalancer

[root@k8s-master ~]# kubectl delete -f mini.yaml

[root@k8s-master ~]# kubectl apply -f mini.yaml

# By default no external IP can be assigned

[root@k8s-master ~]# kubectl get svc mini

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

mini LoadBalancer 10.102.105.5 <pending> 80:31759/TCP 18s

LoadBalancer mode is meant for cloud platforms; a bare-metal environment needs MetalLB installed to support it

MetalLB

Official site: https://metallb.universe.tf/installation/

What MetalLB does: assigns VIPs to LoadBalancer Services

MetalLB configuration

# Set IPVS mode

[root@k8s-master ~]# kubectl edit cm -n kube-system kube-proxy

...

metricsBindAddress: ""

mode: "ipvs"

ipvs:
  strictARP: true

...

[root@k8s-master ~]# kubectl -n kube-system get pods | awk '/kube-proxy/{system("kubectl -n kube-system delete pods "$1)}'

# Download the deployment manifest

[root@k8s-master ~]# dnf install wget -y

[root@k8s-master ~]# wget https://raw.githubusercontent.com/metallb/metallb/v0.14.8/config/manifests/metallb-native.yaml

# Edit the image references in the manifest (assumes Docker's default registry is already configured)

...

image: metallb/controller:v0.14.8

...

image: metallb/speaker:v0.14.8

...

# Push the images to the Harbor registry

[root@k8s-master ~]# docker pull quay.io/metallb/controller:v0.14.8

[root@k8s-master ~]# docker pull quay.io/metallb/speaker:v0.14.8

[root@k8s-master ~]# docker tag quay.io/metallb/speaker:v0.14.8 ooovooo.org/metallb/speaker:v0.14.8

[root@k8s-master ~]# docker tag quay.io/metallb/controller:v0.14.8 ooovooo.org/metallb/controller:v0.14.8

[root@k8s-master ~]# docker push ooovooo.org/metallb/speaker:v0.14.8

[root@k8s-master ~]# docker push ooovooo.org/metallb/controller:v0.14.8

# Deploy MetalLB

[root@k8s-master ~]# kubectl apply -f metallb-native.yaml

[root@k8s-master ~]# kubectl -n metallb-system get pods

NAME READY STATUS RESTARTS AGE

controller-65957f77c8-c9lrv 1/1 Running 0 23s

speaker-5g4hz 1/1 Running 0 23s

speaker-bw4qh 1/1 Running 0 23s

speaker-t7d7f 1/1 Running 0 23s

# Configure the address pool

[root@k8s-master ~]# vim configmap.yml

apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: name                        # address pool name
  namespace: metallb-system
spec:
  addresses:
  - 172.25.254.25-172.25.254.50     # address range of the pool
---
# Separate different kinds with ---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: example
  namespace: metallb-system
spec:
  ipAddressPools:
  - name                            # address pool to use

[root@k8s-master ~]# kubectl apply -f configmap.yml

# An IP is now assigned automatically

[root@k8s-master ~]# kubectl get services mini

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

mini LoadBalancer 10.102.105.5 172.25.254.25 80:31759/TCP 62m

# Access the Service from outside the cluster through the assigned address

[root@k8s-master ~]# curl 172.25.254.25

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
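Each LoadBalancer Service takes one address from the pool, so the size of the range bounds how many such Services can get an external IP. As a sketch, the arithmetic for the "first-last" range used above can be written as follows; the helper names `ip_to_int` and `pool_size` are invented for this example.

```shell
#!/bin/sh
# Convert a dotted IPv4 address to an integer.
# The body runs in a subshell so IFS and the positional parameters stay local.
ip_to_int() (
    IFS=.
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Count the addresses in a "first-last" range.
pool_size() {
    first=${1%-*}   # text before the dash
    last=${1#*-}    # text after the dash
    echo $(( $(ip_to_int "$last") - $(ip_to_int "$first") + 1 ))
}

pool_size 172.25.254.25-172.25.254.50   # prints 26
```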

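ExternalName

- An ExternalName Service is not assigned an IP; it uses a DNS CNAME to a fixed domain name, which sidesteps the problem of changing IPs

- Typically used when external services communicate with Pods, or while an external service is being migrated into Pods

- While an application is being migrated into the cluster, ExternalName is useful during the transition

- When a resource outside the cluster is migrated in, its IP may change along the way; a domain name plus DNS resolution handles this cleanly

ExternalName example

[root@k8s-master ~]# vim mini.yaml

...

  selector:
    app: mini
  type: ExternalName
  externalName: www.mini.org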

[root@k8s-master ~]# kubectl delete -f mini.yaml

[root@k8s-master ~]# kubectl apply -f mini.yaml

[root@k8s-master ~]# kubectl get services mini

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

mini ExternalName <none> www.mini.org 80/TCP 5s

Ingress-Nginx

Official site: https://kubernetes.github.io/ingress-nginx/deploy/#bare-metal-clusters

What Ingress-Nginx does

- A global load-balancing service that proxies different backend Services, with layer-7 support

- Ingress consists of two parts: the Ingress controller and the Ingress service

- The Ingress controller provides the proxying described by the Ingress objects you define

Deploying Ingress

[root@k8s-master ~]# mkdir ingress

[root@k8s-master ~]# cd ingress/

# Download the deployment manifest

[root@k8s-master ingress]# wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.11.2/deploy/static/provider/baremetal/deploy.yaml

# The ingress-nginx images must be downloaded as well

# Push the images to Harbor

[root@k8s-master ~]# docker tag reg.harbor.org/ingress-nginx/controller:v1.11.2 ooovooo.org/ingress-nginx/controller:v1.11.2

[root@k8s-master ~]# docker tag reg.harbor.org/ingress-nginx/kube-webhook-certgen:v1.4.3 ooovooo.org/ingress-nginx/kube-webhook-certgen:v1.4.3

[root@k8s-master ~]# docker push ooovooo.org/ingress-nginx/controller:v1.11.2

[root@k8s-master ~]# docker push ooovooo.org/ingress-nginx/kube-webhook-certgen:v1.4.3

# Install Ingress

[root@k8s-master ~]# vim deploy.yaml

...

image: ingress-nginx/controller:v1.11.2

...

image: ingress-nginx/kube-webhook-certgen:v1.4.3

...

image: ingress-nginx/kube-webhook-certgen:v1.4.3

[root@k8s-master ingress]# kubectl apply -f deploy.yaml

[root@k8s-master ingress]# kubectl -n ingress-nginx get pods

# One Pod may show an error at first; deleting it and applying again fixes it

NAME READY STATUS RESTARTS AGE

ingress-nginx-admission-create-w6jz9 0/1 Completed 0 14s

ingress-nginx-admission-patch-bbsn6 0/1 Completed 1 14s

ingress-nginx-controller-bb7d8f97c-nx96n 1/1 Running 0 14s

# ingress-nginx-controller at 1/1 Running means the deployment succeeded

[root@k8s-master ~]# kubectl -n ingress-nginx get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx-controller NodePort 10.100.33.214 <none> 80:32416/TCP,443:30320/TCP 30s

ingress-nginx-controller-admission ClusterIP 10.98.75.102 <none> 443/TCP 30s

# Change the Service type to LoadBalancer

[root@k8s-master ~]# kubectl -n ingress-nginx edit svc ingress-nginx-controller

...

type: LoadBalancer

# Check the result (requires MetalLB to be configured)

[root@k8s-master ~]# kubectl -n ingress-nginx get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S)

ingress-nginx-controller LoadBalancer 10.100.33.214 172.25.254.25 80:32416/TCP,443:30320/TCP

ingress-nginx-controller-admission ClusterIP 10.98.75.102 <none> 443/TCP

The EXTERNAL-IP shown by kubectl -n ingress-nginx get services is the IP on which Ingress is finally exposed

Testing Ingress

[root@k8s-master ingress]# kubectl create deployment myappv1 --image myapp:v1 --dry-run=client -o yaml > myappv1.yml

[root@k8s-master ingress]# kubectl apply -f myappv1.yml

[root@k8s-master ingress]# kubectl expose deployment myappv1 --port 80 --target-port 80 --dry-run=client -o yaml >> myappv1.yml

[root@k8s-master ingress]# vim myappv1.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: myappv1
  name: myappv1
spec:
  replicas: 1
  selector:
    matchLabels:
      app: myappv1
  strategy: {}
  template:
    metadata:
      labels:
        app: myappv1
    spec:
      containers:
      - image: myapp:v1
        name: myapp
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: myappv1
  name: myappv1
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: myappv1

[root@k8s-master ingress]# kubectl apply -f myappv1.yml

# Generate an Ingress template, then edit its name and backend Service

[root@k8s-master ingress]# kubectl create ingress webcluster --rule '*/=ooovooo-svc:80' --dry-run=client -o yaml > ingress.yml

[root@k8s-master ingress]# vim ingress.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: myappv1
spec:
  ingressClassName: nginx
  rules:
  - http:
      paths:
      - backend:
          service:
            name: myappv1        # must match your Service name
            port:
              number: 80
        path: /
        pathType: Prefix
        # Exact: exact match
        # ImplementationSpecific: matching is left to the Ingress controller
        # Prefix: prefix match
        # Regular expressions are not a pathType; ingress-nginx enables them through the use-regex annotation

# Create the Ingress resource

[root@k8s-master ingress]# kubectl apply -f ingress.yml

# Access it through the IP shown by kubectl -n ingress-nginx get services

[root@k8s-master ingress]# curl 172.25.254.25

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

An Ingress must be in the same namespace as the Service it exposes

Advanced Ingress usage

Path-based access

[root@k8s-master ingress]# vim ingress1.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: ingress1
spec:
  ingressClassName: nginx
  rules:
  - host: www.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /v1
        pathType: Prefix
      - backend:
          service:
            name: myappv2
            port:
              number: 80
        path: /v2
        pathType: Prefix

[root@k8s-master ingress]# kubectl apply -f ingress1.yml

[root@k8s-master ingress]# echo 172.25.254.25 www.ooovooo.org >> /etc/hosts

[root@k8s-master ingress]# curl www.ooovooo.org/v1

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@k8s-master ingress]# curl www.ooovooo.org/v2

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

[root@k8s-master ingress]# curl www.ooovooo.org/v2/haha

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

[root@k8s-master ingress]# curl www.ooovooo.org/v1/gaga

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

Host-based access

[root@k8s-master ingress]# vim ingress2.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: ingress2
spec:
  ingressClassName: nginx
  rules:
  - host: myappv1.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /
        pathType: Prefix
  - host: myappv2.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv2
            port:
              number: 80
        path: /
        pathType: Prefix

[root@k8s-master ingress]# kubectl apply -f ingress2.yml

[root@k8s-master ingress]# kubectl delete -f ingress1.yml

[root@k8s-master ingress]# kubectl describe ingress ingress2

...

Host Path Backends

---- ---- --------

myappv1.ooovooo.org

/ myappv1:80 (10.244.1.89:80)

myappv2.ooovooo.org

/ myappv2:80 (10.244.1.90:80)

[root@k8s-master ingress]# curl myappv1.ooovooo.org

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@k8s-master ingress]# curl myappv2.ooovooo.org

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

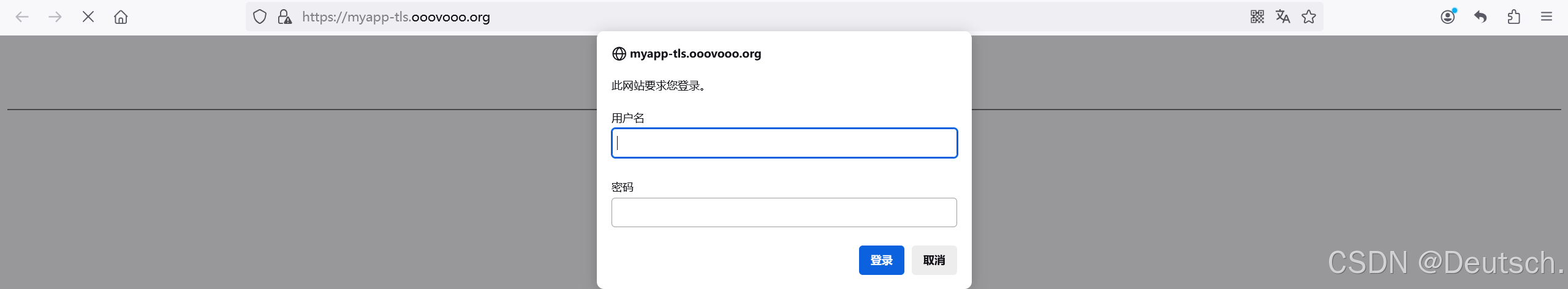

Setting up TLS encryption

[root@k8s-master ingress]# openssl req -newkey rsa:2048 -nodes -keyout tls.key -x509 -days 365 -subj "/CN=nginxsvc/O=nginxsvc" -out tls.crt

[root@k8s-master ingress]# kubectl create secret tls web-tls-secret --key tls.key --cert tls.crt

[root@k8s-master ingress]# vim ingress3.yml

[root@k8s-master ingress]# echo 172.25.254.25 myapp-tls.ooovooo.org >> /etc/hosts

[root@k8s-master ingress]# kubectl apply -f ingress3.yml

[root@k8s-master ingress]# kubectl delete -f ingress2.yml

# Add the name resolution on a Windows host and access the site from there
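The notes open ingress3.yml without showing its content. A minimal sketch of what such a file could look like is given below, written with a heredoc. It assumes the Secret web-tls-secret and the host myapp-tls.ooovooo.org created above and the myappv1 Service as backend; the Ingress name ingress3 is a guess based on the file name, not something the notes confirm.

```shell
#!/bin/sh
# Write a hypothetical ingress3.yml that terminates TLS with web-tls-secret.
cat > ingress3.yml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress3               # assumed name
spec:
  ingressClassName: nginx
  tls:
  - hosts:
    - myapp-tls.ooovooo.org
    secretName: web-tls-secret # Secret created with kubectl create secret tls
  rules:
  - host: myapp-tls.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /
        pathType: Prefix
EOF
```

Because the certificate is self-signed, a client such as curl needs -k to skip verification.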

Setting up basic authentication

[root@k8s-master ingress]# vim ingress4.yml

[root@k8s-master ingress]# kubectl delete -f ingress3.yml

[root@k8s-master ingress]# kubectl apply -f ingress4.yml

[root@k8s-master ingress]# kubectl describe ingress ingress4

...

TLS:

web-tls-secret terminates myapp-tls.ooovooo.org

...

myapp-tls.ooovooo.org

/ myappv1:80 (10.244.1.89:80)

...

[root@k8s-master ingress]# curl -k https://myapp-tls.ooovooo.org

<html>

<head><title>401 Authorization Required</title></head>

<body>

<center><h1>401 Authorization Required</h1></center>

<hr><center>nginx</center>

</body>

</html>

[root@k8s-master ingress]# curl -k https://myapp-tls.ooovooo.org -u ovo:aaa

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

# Access from a Windows host requires the same login
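The content of ingress4.yml is not shown either. The sketch below is one way to get the behaviour seen above (401 without credentials, success with ovo:aaa), using the basic-auth annotations of ingress-nginx. The Secret name auth-web and the realm text are placeholders chosen for this example, and the two prerequisite commands are listed as comments because they need httpd-tools and a live cluster.

```shell
#!/bin/sh
# Prerequisites (not executed here; "auth-web" is a hypothetical Secret name):
#   htpasswd -cm auth ovo                                    # file must be named "auth"
#   kubectl create secret generic auth-web --from-file auth
cat > ingress4.yml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/auth-type: basic
    nginx.ingress.kubernetes.io/auth-secret: auth-web
    nginx.ingress.kubernetes.io/auth-realm: "Please input username and password"
  name: ingress4
spec:
  ingressClassName: nginx
  tls:
  - hosts:
    - myapp-tls.ooovooo.org
    secretName: web-tls-secret
  rules:
  - host: myapp-tls.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /
        pathType: Prefix
EOF
```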

Rewrite and redirect

# Redirect requests for the root path to hostname.html

[root@k8s-master ingress]# vim ingress5.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/app-root: /hostname.html
  name: ingress5
spec:
  ingressClassName: nginx
  rules:
  - host: myapp-tls.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /                  # a request for / is redirected to /hostname.html
        pathType: Prefix

[root@k8s-master ingress]# kubectl delete -f ingress4.yml

[root@k8s-master ingress]# kubectl apply -f ingress5.yml

[root@k8s-master ingress]# curl -Lk https://myapp-tls.ooovooo.org -u ovo:aaa

myappv1-586444467f-w4dxn

[root@k8s-master ingress]# curl -Lk https://myapp-tls.ooovooo.org/haha/hostname.html -u ovo:aaa

<html>

<head><title>404 Not Found</title></head>

<body bgcolor="white">

<center><h1>404 Not Found</h1></center>

<hr><center>nginx/1.12.2</center>

</body>

</html>

# The approach above has a drawback: with many paths, each one needs its own redirect, which is tedious

# A regular expression handles everything under a given path

[root@k8s-master ingress]# vim ingress6.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /$2
    nginx.ingress.kubernetes.io/use-regex: "true"
  name: ingress6
spec:
  ingressClassName: nginx
  rules:
  - host: myapp-tls.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /
        pathType: Prefix
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /haha(/|$)(.*)
        pathType: ImplementationSpecific

[root@k8s-master ingress]# kubectl delete -f ingress5.yml

[root@k8s-master ingress]# kubectl apply -f ingress6.yml

[root@k8s-master ingress]# curl -Lk https://myapp-tls.ooovooo.org/haha/hostname.html -u ovo:aaa

myappv1-586444467f-w4dxn
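The effect of the path /haha(/|$)(.*) combined with rewrite-target /$2 can be reproduced outside the cluster. The sketch below uses sed as a stand-in for the rewrite that nginx performs; it is an illustration of the regular expression only, and the function name `rewrite_path` is invented for this example.

```shell
#!/bin/sh
# Apply the same capture-group rewrite to a request path:
# group 1 is "/" or end of string, group 2 is the rest of the path.
rewrite_path() {
    printf '%s\n' "$1" | sed -E 's#^/haha(/|$)(.*)#/\2#'
}

rewrite_path /haha/hostname.html   # prints /hostname.html
rewrite_path /haha                 # prints /
rewrite_path /other                # no match, prints /other unchanged
```

This is why the request for /haha/hostname.html above reaches the backend as /hostname.html and returns the Pod name.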

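Canary release

A canary release, also called a gray release, is a software release strategy

Its main purpose is to test and verify a new version on a small subset of users or servers before rolling it out to all of production, which limits the impact on the whole system if the new version introduces a serious problem. It is a way of releasing Pods

A canary release adds new Pods before removing old ones, so the total number of Pods never falls below the desired count. After part of the Pods are updated, the rollout pauses; once the new Pods are confirmed healthy, the remaining Pods are updated

Release methods

Priority: Header > Cookie > Weight

Header and Weight are used most often

Header-based (HTTP header) canary

- Extended through annotations

- Create a canary Ingress and configure the canary header key and value

- Once the canary traffic is verified, switch the production Ingress to the new version

- Earlier upgrades relied on the controller's rolling update (25% by default); using a header makes the upgrade smoother, because the key and value let you test whether the new version has problems

# Create the Ingress for version v1

[root@k8s-master ingress]# kubectl delete -f ingress6.yml

[root@k8s-master ingress]# vim ingress7.yml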

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
  name: myapp-v1-ingress
spec:
  ingressClassName: nginx
  rules:
  - host: myapp-tls.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /
        pathType: Prefix

[root@k8s-master ingress]# kubectl apply -f ingress7.yml

# Create the header-based canary Ingress

[root@k8s-master ingress]# vim ingress8.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "version"
    nginx.ingress.kubernetes.io/canary-by-header-value: "2"
  name: myapp-v2-ingress
spec:
  ingressClassName: nginx
  rules:
  - host: myapp-tls.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv2
            port:
              number: 80
        path: /
        pathType: Prefix

[root@k8s-master ingress]# kubectl apply -f ingress8.yml

# Test

[root@k8s-master ingress]# curl myapp-tls.ooovooo.org

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@k8s-master ingress]# curl -H "version: 2" myapp-tls.ooovooo.org

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

Weight-based canary release

- Extended through annotations

- Create a canary Ingress and configure the canary weight and the total weight

- Once the canary traffic is verified, switch the production Ingress to the new version

[root@k8s-master ingress]# vim ingress9.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-weight: "10"          # canary weight
    nginx.ingress.kubernetes.io/canary-weight-total: "100"   # total weight
  name: myapp-v2-ingress
spec:
  ingressClassName: nginx
  rules:
  - host: myapp-tls.ooovooo.org
    http:
      paths:
      - backend:
          service:
            name: myappv2
            port:
              number: 80
        path: /
        pathType: Prefix

[root@k8s-master ingress]# kubectl delete -f ingress8.yml

[root@k8s-master ingress]# kubectl apply -f ingress9.yml

[root@k8s-master ingress]# vim check_ingress.sh

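#!/bin/bash
# Send 100 requests and count how many are answered by v1 and by v2
v1=0
v2=0
for (( i=0; i<100; i++ ))
do
    # grep -c prints 1 when the response contains "v1", otherwise 0
    response=$(curl -s myapp-tls.ooovooo.org | grep -c v1)
    v1=$(( v1 + response ))
    v2=$(( v2 + 1 - response ))
done
echo "v1:$v1, v2:$v2"

[root@k8s-master ingress]# chmod +x check_ingress.sh

[root@k8s-master ingress]# ./check_ingress.sh

v1:89, v2:11

# The split varies with the canary weight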
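The measured split can be compared with what the annotations imply: on average, the canary receives requests × canary-weight / canary-weight-total. A small sketch of that arithmetic follows, where the function name `expected_canary_hits` is made up for this example and integer division is used for simplicity.

```shell
#!/bin/sh
# Expected number of requests routed to the canary.
# $1 = total requests, $2 = canary-weight, $3 = canary-weight-total (default 100)
expected_canary_hits() {
    echo $(( $1 * $2 / ${3:-100} ))
}

expected_canary_hits 100 10   # prints 10
```

The observed 11 out of 100 is close to this expectation; since routing is random per request, individual runs vary around it.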