The with Statement in JavaScript Explained

2026/2/15 15:09:33

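This article was submitted by an internet user. The views expressed are the author's own and do not represent the position of this site. If you repost it, please credit the source: http://www.coloradmin.cn/o/2203638.html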

如若内容造成侵权/违法违规/事实不符,请联系多彩编程网进行投诉反馈,一经查实,立即删除!相关文章

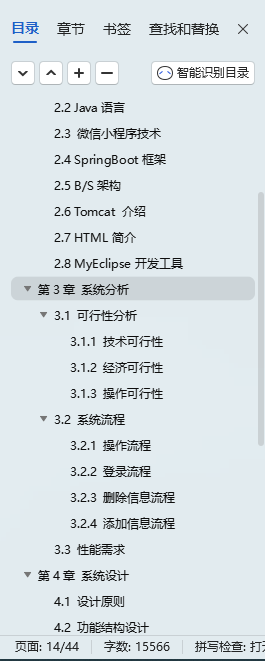

从0到1:小区业主决策投票小程序开发笔记

可研

小区业主决策投票小程序: 便于业主参与社区事务的决策,通过网络投票的形式,大大节省了业委会和业主时间,也提高了投票率。其主要功能:通过身份证、业主证或其他方式确认用户身份;小区管理人员或业委会…

prometheus client_java实现进程的CPU、内存、IO、流量的可观测

文章目录 1、获取进程信息的方法1.1、通过读取/proc目录获取进程相关信息1.2、通过Linux命令获取进程信息1.2.1、top(CPU/内存)命令1.2.2、iotop(磁盘IO)命令1.2.3、nethogs(流量)命令 2、使用prometheus c…

tableau除了图表好看,在业务中真有用吗?

tableau之前的市值接近150亿美金,被saleforce以157亿美金收购,这个市值和现在的蔚来汽车差不多。

如果tableau仅仅是个show的可视化工具,必然不会有这么高的市值,资本市场的眼睛是雪亮的。 很多人觉得tableau做图表好看ÿ…

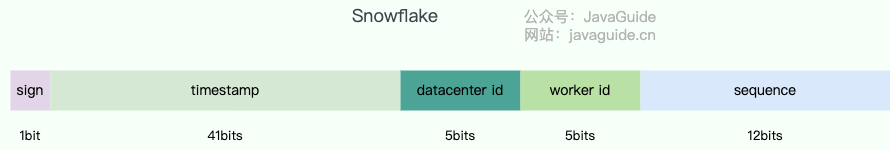

分布式常见面试题总结

文章目录 1 什么是 UUID 算法?2 什么是雪花算法?🔥3 说说什么是幂等性?🔥4 怎么保证接口幂等性?🔥5 paxos算法6 Raft 算法7 CAP理论和 BASE 理论7.1 CAP 理论🔥7.2 为什么无法同时保…

Echarts合集更更更之树图

实现效果

写在最后🍒

源码,关注🍥苏苏的bug,🍡苏苏的github,🍪苏苏的码云

DGL库之HGTConv的使用

DGL库之HGTConv的使用 论文地址和异构图构建教程HGTConv语法格式HGTConv的使用 论文地址和异构图构建教程

论文地址:https://arxiv.org/pdf/2003.01332 异构图构建教程:异构图构建 异构图转同构图:异构图转同构图

HGTConv语法格式

dgl.nn.…

极客兔兔Gee-Cache Day7

protobuf配置: 从 Protobuf Releases 下载最先版本的发布包安装。解压后将解压路径下的 bin 目录 加入到环境变量即可。 如果能正常显示版本,则表示安装成功。 $ protoc --version

libprotoc 3.11.2在Golang中使用protobuf,还需要protoc-g…

【单链表的模拟实现Java】

【单链表的模拟实现Java】 1. 了解单链表的功能2. 模拟实现单链表的功能2.1 单链表的创建2.2 链表的头插2.3 链表的尾插2.3 链表的长度2.4 链表的打印2.5 在指定位置插入2.6 查找2.7 删除第一个出现的节点2.8 删除出现的所有节点2.9 清空链表 3. 正确使用模拟单链表 1. 了解单链…

重头开始嵌入式第四十八天(Linux内核驱动 linux启动流程)

目录 什么是操作系统?

一、管理硬件资源

二、提供用户接口

三、管理软件资源

什么是操作系统内核?

一、主要功能 1. 进程管理:

2. 内存管理:

3. 设备管理:

4. 文件系统管理:

二、特点

什么是驱动…

WebGoat JAVA反序列化漏洞源码分析

目录

InsecureDeserializationTask.java 代码分析

反序列化漏洞知识补充

VulnerableTaskHolder类分析

poc 编写 WebGoat 靶场地址:GitHub - WebGoat/WebGoat: WebGoat is a deliberately insecure application

这里就不介绍怎么搭建了,可以参考其他…

【重学 MySQL】六十三、唯一约束的使用

【重学 MySQL】六十三、唯一约束的使用 创建表时定义唯一约束示例 在已存在的表上添加唯一约束示例 删除唯一约束示例 复合唯一约束案例背景创建表并添加复合唯一约束插入数据测试总结 特点注意事项 在 MySQL 中,唯一约束(UNIQUE Constraint)…

butterfly主题留言板 报错记录 未解决

新建留言板,在博客根目录执行下面的命令

hexo new page messageboard

在博客/source/messageboard的文件夹下找到index.md文件并修改

---

title: 留言板

date: 2018-01-05 00:00:00

type: messageboard

---找到butterfly主题下的_config.yml文件

把留言板的注释…

基于springboot+小程序的智慧物流管理系统(物流1)

👉文末查看项目功能视频演示获取源码sql脚本视频导入教程视频

1、项目介绍

基于springboot小程序的智慧物流管理系统实现了管理员、司机及用户。 1、管理员实现了司机管理、用户管理、车辆管理、商品管理、物流信息管理、基础数据管理、论坛管理、公告信息管理等。…

帮助自闭症孩子融入社会,寄宿学校是明智选择

在广州这座充满活力与温情的城市,有一群特殊的孩子,他们被称为“星星的孩子”——自闭症儿童。自闭症,这个让人既陌生又熟悉的名词,背后承载的是无数家庭的辛酸与希望。对于自闭症儿童来说,融入社会、与人交流、理解世…

【Linux第一弹】- 基本指令

🌈 个人主页:白子寰 🔥 分类专栏:重生之我在学Linux,C打怪之路,python从入门到精通,数据结构,C语言,C语言题集👈 希望得到您的订阅和支持~ 💡 坚持…

blender 记一下lattice

这个工具能够辅助你捏形状 这里演示如何操作BOX shift A分别创建俩对象一个BOX 一个就是lattice对象 然后在BOX的修改器内 创建一个叫做lattice的修改器 然后指定object为刚刚创建的lattice对象 这样就算绑定好了

接下来 进入lattice的编辑模式下 你选取一个点进行运动&#…

量化交易与基础投资工具介绍

🌟作者简介:热爱数据分析,学习Python、Stata、SPSS等统计语言的小高同学~🍊个人主页:小高要坚强的博客🍓当前专栏:Python之机器学习《Python之量化交易》Python之机器学习🍎本文内容…

谈谈留学生毕业论文如何分析问卷采访数据

留学生毕业论文在设计好采访问题并且顺利进行了采访之后,我们便需要将得到的采访答案进行必要的分析,从而得出一些结论。我们可以通过这些结论回答研究问题,或者提出进一步的思考等等。那么我们应当如何分析采访数据呢?以下有若干…