Contents

- 1. Task description
- 2. Mask RCNN network structure
- 3. Implementation
- 4. Results
- 5. Libraries used
  - getPerfProfile
- 6. References

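1. Task description

Use the Mask RCNN network to perform object detection and instance segmentation on images and videos.

2. Mask RCNN network structure

3. Implementation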

# Copyright (C) 2018-2019, BigVision LLC (LearnOpenCV.com), All Rights Reserved.

# Author : Sunita Nayak

# Article : https://www.learnopencv.com/deep-learning-based-object-detection-and-instance-segmentation-using-mask-r-cnn-in-opencv-python-c/

# License: BSD-3-Clause-Attribution (Please read the license file.)

# This work is based on OpenCV samples code (https://opencv.org/license.html)

import cv2 as cv

import argparse

import numpy as np

import os.path

import sys

import random

# Initialize the parameters

confThreshold = 0.5 # Confidence threshold

maskThreshold = 0.3 # Mask threshold

parser = argparse.ArgumentParser(description='Use this script to run Mask-RCNN object detection and segmentation')

parser.add_argument('--image', help='Path to image file.')

parser.add_argument('--video', help='Path to video file.', default="cars.mp4")

parser.add_argument("--device", default="gpu", help="Device to inference on")

args = parser.parse_args()

"""

python mask_rcnn.py --image ./images/person.jpg --device cpu

python mask_rcnn.py --video ./cars.mp4 --device cpu

"""

# Draw the predicted bounding box, colorize and show the mask on the image
def drawBox(frame, classId, conf, left, top, right, bottom, classMask):
    # Draw a bounding box.
    cv.rectangle(frame, (left, top), (right, bottom), (255, 178, 50), 3)

    # Print a label of class.
    label = '%.2f' % conf
    if classes:
        assert (classId < len(classes))
        label = '%s:%s' % (classes[classId], label)  # 'person:1.00'

    # Display the label at the top of the bounding box
    labelSize, baseLine = cv.getTextSize(label, cv.FONT_HERSHEY_SIMPLEX, 0.5, 1)
    top = max(top, labelSize[1])
    cv.rectangle(frame, (left, top - round(1.5 * labelSize[1])), (left + round(1.5 * labelSize[0]), top + baseLine),
                 (255, 255, 255), cv.FILLED)
    cv.putText(frame, label, (left, top), cv.FONT_HERSHEY_SIMPLEX, 0.75, (0, 0, 0), 1)

    # Resize the mask, threshold, color and apply it on the image
    classMask = cv.resize(classMask, (right - left + 1, bottom - top + 1))
    mask = (classMask > maskThreshold)
    roi = frame[top:bottom + 1, left:right + 1][mask]

    # Pick a random color so that each instance is drawn differently.
    # To color by class instead, use: color = colors[classId % len(colors)]
    colorIndex = random.randint(0, len(colors) - 1)
    color = colors[colorIndex]

    # Blend 30% of the instance color with 70% of the original pixels
    frame[top:bottom + 1, left:right + 1][mask] = ([0.3 * color[0], 0.3 * color[1], 0.3 * color[2]] + 0.7 * roi).astype(
        np.uint8)

    # Draw the contours on the image
    mask = mask.astype(np.uint8)
    contours, hierarchy = cv.findContours(mask, cv.RETR_TREE, cv.CHAIN_APPROX_SIMPLE)
    cv.drawContours(frame[top:bottom + 1, left:right + 1], contours, -1, color, 3, cv.LINE_8, hierarchy, 100)

# For each frame, extract the bounding box and mask for each detected object
# Note: this function reads the global variable `frame` set in the main loop.
def postprocess(boxes, masks):
    # Output size of masks is NxCxHxW where
    # N - number of detected boxes
    # C - number of classes (excluding background)
    # HxW - segmentation shape
    numClasses = masks.shape[1]  # 90
    numDetections = boxes.shape[2]  # 100

    frameH = frame.shape[0]  # 531
    frameW = frame.shape[1]  # 800

    for i in range(numDetections):  # traverse top 100 ROI
        box = boxes[0, 0, i]  # (1, 1, 100, 7) -> (7,)
        # array([0. , 0. , 0.99842095, 0.7533724 , 0.152397 , 0.92448074, 0.9131955 ], dtype=float32)
        mask = masks[i]  # (100, 90, 15, 15) -> (90, 15, 15)
        score = box[2]  # 0.99842095
        if score > confThreshold:
            classId = int(box[1])

            # Extract the bounding box (coordinates are normalized to [0, 1])
            left = int(frameW * box[3])
            top = int(frameH * box[4])
            right = int(frameW * box[5])
            bottom = int(frameH * box[6])

            # Clamp the box to the frame
            left = max(0, min(left, frameW - 1))
            top = max(0, min(top, frameH - 1))
            right = max(0, min(right, frameW - 1))
            bottom = max(0, min(bottom, frameH - 1))

            # Extract the mask for the object
            classMask = mask[classId]

            # Draw bounding box, colorize and show the mask on the image
            drawBox(frame, classId, score, left, top, right, bottom, classMask)

# Load names of classes

classesFile = "mscoco_labels.names"

classes = None

"""

person

bicycle

car

motorcycle

airplane

bus

train

truck

boat

traffic light

fire hydrant

stop sign

parking meter

bench

bird

cat

dog

horse

sheep

cow

elephant

bear

zebra

giraffe

backpack

umbrella

handbag

tie

suitcase

frisbee

skis

snowboard

sports ball

kite

baseball bat

baseball glove

skateboard

surfboard

tennis racket

bottle

wine glass

cup

fork

knife

spoon

bowl

banana

apple

sandwich

orange

broccoli

carrot

hot dog

pizza

donut

cake

chair

couch

potted plant

bed

dining table

toilet

tv

laptop

mouse

remote

keyboard

cell phone

microwave

oven

toaster

sink

refrigerator

book

clock

vase

scissors

teddy bear

hair drier

toothbrush

"""

with open(classesFile, 'rt') as f:
    classes = f.read().rstrip('\n').split('\n')

# Give the textGraph and weight files for the model

textGraph = "./mask_rcnn_inception_v2_coco_2018_01_28.pbtxt"

modelWeights = "./mask_rcnn_inception_v2_coco_2018_01_28/frozen_inference_graph.pb"

# Load the network

net = cv.dnn.readNetFromTensorflow(modelWeights, textGraph)

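if args.device == "cpu":
    # The backend and the target are set separately
    net.setPreferableBackend(cv.dnn.DNN_BACKEND_OPENCV)
    net.setPreferableTarget(cv.dnn.DNN_TARGET_CPU)
    print("Using CPU device")
elif args.device == "gpu":
    # Requires an OpenCV build with CUDA support
    net.setPreferableBackend(cv.dnn.DNN_BACKEND_CUDA)
    net.setPreferableTarget(cv.dnn.DNN_TARGET_CUDA)
    print("Using GPU device")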

# Load the colors
colorsFile = "colors.txt"
with open(colorsFile, 'rt') as f:
    colorsStr = f.read().rstrip('\n').split('\n')
# ['0 255 0', '0 0 255', '255 0 0', '0 255 255', '255 255 0', '255 0 255', '80 70 180',
# '250 80 190', '245 145 50', '70 150 250', '50 190 190']

# Parse each "R G B" line into a float array
colors = []
for i in range(len(colorsStr)):
    rgb = colorsStr[i].split(' ')
    color = np.array([float(rgb[0]), float(rgb[1]), float(rgb[2])])
    colors.append(color)

"""

[array([ 0., 255., 0.]), array([ 0., 0., 255.]), array([255., 0., 0.]), array([ 0., 255., 255.]),

array([255., 255., 0.]), array([255., 0., 255.]), array([ 80., 70., 180.]), array([250., 80., 190.]),

array([245., 145., 50.]), array([ 70., 150., 250.]), array([ 50., 190., 190.])]

"""

winName = 'Mask-RCNN Object detection and Segmentation in OpenCV'

cv.namedWindow(winName, cv.WINDOW_NORMAL)

outputFile = "mask_rcnn_out_py.avi"

if (args.image):
    # Open the image file
    if not os.path.isfile(args.image):
        print("Input image file ", args.image, " doesn't exist")
        sys.exit(1)
    cap = cv.VideoCapture(args.image)
    outputFile = args.image[:-4] + '_mask_rcnn_out_py.jpg'
elif (args.video):
    # Open the video file
    if not os.path.isfile(args.video):
        print("Input video file ", args.video, " doesn't exist")
        sys.exit(1)
    cap = cv.VideoCapture(args.video)
    outputFile = args.video[:-4] + '_mask_rcnn_out_py.avi'
else:
    # Webcam input
    cap = cv.VideoCapture(0)

# Get the video writer initialized to save the output video
if (not args.image):
    vid_writer = cv.VideoWriter(outputFile, cv.VideoWriter_fourcc('M', 'J', 'P', 'G'), 28,
                                (round(cap.get(cv.CAP_PROP_FRAME_WIDTH)), round(cap.get(cv.CAP_PROP_FRAME_HEIGHT))))

while cv.waitKey(1) < 0:
    # Get frame from the video
    hasFrame, frame = cap.read()

    # Stop the program if reached end of video
    if not hasFrame:
        print("Done processing !!!")
        print("Output file is stored as ", outputFile)
        cv.waitKey(3000)
        break

    # Create a 4D blob from a frame.
    blob = cv.dnn.blobFromImage(frame, swapRB=True, crop=False)  # (1, 3, 531, 800)

    # Set the input to the network
    net.setInput(blob)

    # Run the forward pass to get output from the output layers
    boxes, masks = net.forward(['detection_out_final', 'detection_masks'])
    # boxes: (1, 1, 100, 7) top 100 RoI, (0, classid, score, x0, y0, x1, y1)
    # masks: (100, 90, 15, 15) 100 RoI, 90 classes, 15*15 feature maps size

    # Extract the bounding box and mask for each of the detected objects
    postprocess(boxes, masks)

    # Put efficiency information.
    t, _ = net.getPerfProfile()
    label = 'Mask-RCNN Inference time for a frame : %0.0f ms' % abs(t * 1000.0 / cv.getTickFrequency())
    cv.putText(frame, label, (0, 15), cv.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 0))

    # Write the frame with the detection boxes
    if (args.image):
        cv.imwrite(outputFile, frame.astype(np.uint8))
    else:
        vid_writer.write(frame.astype(np.uint8))

    cv.imshow(winName, frame)

Based on the class of each bounding box, the matching class channel is taken from the mask output feature map: classMask = mask[classId]
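The selection step can be tried in isolation. The snippet below is a minimal sketch rather than the script's own code: it assumes the output shapes noted in the listing, (1, 1, 100, 7) for the boxes and (100, 90, 15, 15) for the masks, fills them with synthetic values, and uses a hypothetical helper name, `decode_detection`.

```python
import numpy as np

def decode_detection(row, frame_w, frame_h):
    """Turn one detection row (_, classId, score, x0, y0, x1, y1) into pixel values.

    The coordinates in the row are normalized to [0, 1]; they are scaled to
    the frame size and clamped so the box stays inside the image.
    """
    class_id = int(row[1])
    score = float(row[2])
    left = max(0, min(int(frame_w * row[3]), frame_w - 1))
    top = max(0, min(int(frame_h * row[4]), frame_h - 1))
    right = max(0, min(int(frame_w * row[5]), frame_w - 1))
    bottom = max(0, min(int(frame_h * row[6]), frame_h - 1))
    return class_id, score, (left, top, right, bottom)

# Synthetic stand-ins for the two network outputs (the shapes are assumptions).
boxes = np.zeros((1, 1, 100, 7), dtype=np.float32)
masks = np.random.rand(100, 90, 15, 15).astype(np.float32)
boxes[0, 0, 0] = [0, 16, 0.9, 0.25, 0.5, 0.75, 1.2]  # y1 > 1.0 will be clamped

class_id, score, box = decode_detection(boxes[0, 0, 0], frame_w=800, frame_h=600)
class_mask = masks[0][class_id]  # (90, 15, 15) -> (15, 15)
print(class_id, box, class_mask.shape)
```

Each detection carries one mask per class, so only the channel indexed by the predicted class is kept; the other 89 channels are ignored.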

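To draw the mask, it is first resized to the size of the bounding box, thresholded and blended into the frame; cv2.findContours and drawContours are then used to draw its outline.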
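The resize, threshold and blend steps can be sketched with NumPy alone. This is an illustration under stated assumptions, not the script's implementation: `resize_nearest` is a hypothetical nearest-neighbour stand-in for cv.resize (which interpolates bilinearly by default), `blend_mask` is a hypothetical helper, and the frame and mask are synthetic. The 0.3 / 0.7 weights and the 0.3 threshold are taken from the listing.

```python
import numpy as np

def resize_nearest(mask, out_w, out_h):
    """Nearest-neighbour resize of a 2D array (a simple stand-in for cv.resize)."""
    in_h, in_w = mask.shape
    rows = np.arange(out_h) * in_h // out_h
    cols = np.arange(out_w) * in_w // out_w
    return mask[rows[:, None], cols[None, :]]

def blend_mask(frame, box, class_mask, color, mask_threshold=0.3):
    """Tint the pixels inside `box` whose mask value exceeds the threshold."""
    left, top, right, bottom = box
    resized = resize_nearest(class_mask, right - left + 1, bottom - top + 1)
    binary = resized > mask_threshold
    roi = frame[top:bottom + 1, left:right + 1]  # a view, so frame is modified
    roi[binary] = (0.3 * np.asarray(color) + 0.7 * roi[binary]).astype(np.uint8)
    return binary

# Synthetic grey frame and a 15x15 mask whose left third is "object".
frame = np.full((60, 80, 3), 100, dtype=np.uint8)
class_mask = np.zeros((15, 15), dtype=np.float32)
class_mask[:, :5] = 0.9
binary = blend_mask(frame, (10, 20, 39, 49), class_mask, color=(0, 255, 0))
print(binary.sum(), frame[20, 10], frame[20, 30])
```

The returned boolean array is what the script converts to uint8 and passes to findContours to trace the outline.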

4. Results

[Figures: seven pairs of input images and their output results]

Video result:

[Video: cars_mask_rcnn_out]

5. Libraries used

getPerfProfile

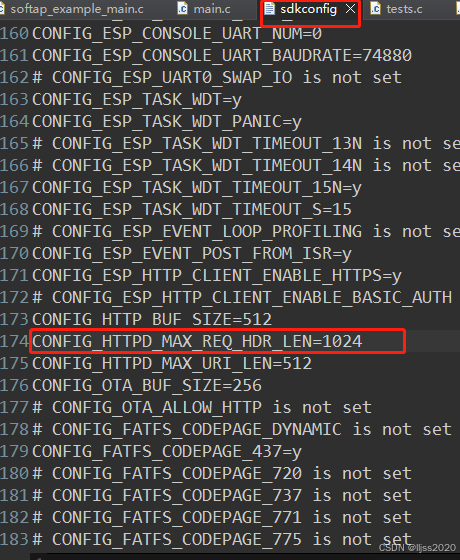

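getPerfProfile is a method of the network class in OpenCV's DNN module that reports timing for the most recent forward pass. It is mainly used to analyze how long each layer takes, which helps developers find performance bottlenecks and decide where to optimize.

I. Function

getPerfProfile returns two values: the overall inference time and a vector of per-layer timings. Both are measured in clock ticks, not in seconds or milliseconds; divide by cv.getTickFrequency() to get seconds, and multiply by 1000 for milliseconds, as the script in section 3 does.

With the per-layer timings, developers can see how much time each layer consumes during inference and identify the layers that need further optimization.

Use cases

When running deep learning inference with OpenCV, especially in applications with real-time requirements such as video processing or live monitoring, getPerfProfile helps developers evaluate and optimize model performance.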
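For real-time use, a single frame's timing is noisy, so it is common to average over a window of recent frames. The sketch below is one hedged way to do that in plain Python; `InferenceTimer` is a hypothetical helper, and the tick counts and tick frequency are made-up values standing in for what net.getPerfProfile() and cv.getTickFrequency() would return.

```python
from collections import deque

class InferenceTimer:
    """Keeps a rolling window of per-frame inference times in milliseconds."""

    def __init__(self, window=30):
        self.samples = deque(maxlen=window)  # old samples drop out automatically

    def add_ticks(self, ticks, tick_frequency):
        """Record one frame; ticks / tick_frequency is the time in seconds."""
        self.samples.append(ticks * 1000.0 / tick_frequency)

    def mean_ms(self):
        return sum(self.samples) / len(self.samples)

    def fps(self):
        """Frames per second implied by the mean inference time."""
        return 1000.0 / self.mean_ms()

timer = InferenceTimer(window=3)
for ticks in (40_000_000, 50_000_000, 60_000_000):  # made-up tick counts
    timer.add_ticks(ticks, tick_frequency=1_000_000_000)  # assumed tick rate
print("%.1f ms, %.1f FPS" % (timer.mean_ms(), timer.fps()))
```

This measures inference only; reading, drawing and writing frames add to the real per-frame cost.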

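II. Example code

import cv2
import numpy as np

# Load a pretrained model (the file names are placeholders)
net = cv2.dnn.readNet("model.xml", "model.bin")

# Assume an input blob is available; fill in your own image and arguments
blob = cv2.dnn.blobFromImage(...)

# Set the input and run inference
net.setInput(blob)
outputs = net.forward()

# Get the performance profile: overall time and per-layer times, both in ticks
t, layer_times = net.getPerfProfile()

# Convert ticks to milliseconds and print the overall time
ticks_per_ms = cv2.getTickFrequency() / 1000.0
print("Inference time: %.2f ms" % (t / ticks_per_ms))

# For per-layer detail, iterate over the second return value
for layer_idx, layer_time in enumerate(np.asarray(layer_times).ravel()):
    print(f"Layer {layer_idx}: {layer_time / ticks_per_ms:.3f} ms")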
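Once per-layer tick counts are available, a short ranking makes the bottlenecks easier to spot than a full dump. The following is a minimal sketch in plain Python; `slowest_layers` and the generated layer names are hypothetical, and the tick counts and tick frequency are made-up values used only for illustration.

```python
def slowest_layers(layer_ticks, tick_frequency, layer_names=None, top_k=3):
    """Return the top_k (name, milliseconds) pairs, slowest first.

    Layers that report zero ticks are skipped.
    """
    if layer_names is None:
        layer_names = ["layer_%d" % i for i in range(len(layer_ticks))]
    timed = [(name, ticks * 1000.0 / tick_frequency)
             for name, ticks in zip(layer_names, layer_ticks) if ticks > 0]
    timed.sort(key=lambda item: item[1], reverse=True)
    return timed[:top_k]

# Made-up tick counts for five layers at an assumed 1 MHz tick rate.
example_ticks = [1200, 0, 8500, 300, 4100]
report = slowest_layers(example_ticks, tick_frequency=1_000_000, top_k=2)
for name, ms in report:
    print("%s: %.2f ms" % (name, ms))
```

Sorting by time and keeping only the top few entries keeps the output readable for networks with hundreds of layers.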

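III. Notes

The first return value is the total time and the second holds the per-layer times; both are in clock ticks, so convert them with cv2.getTickFrequency(). According to the OpenCV documentation, per-layer timings are supported with the default OpenCV backend on the CPU target, and layers that were fused into another layer report zero ticks. Treat the numbers from other backends or targets with caution.

Before relying on this function, check that your OpenCV version provides it and read the documentation for that version.

Because deep learning models vary widely in complexity and structure, the timings from getPerfProfile are a guide only; real optimization work also has to take the model's structure and the application into account.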

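6. References

- Paper walkthrough: 【Mask RCNN】《Mask R-CNN》
- TensorFlow code walkthrough: Mask RCNN without Mask
- OpenCV进阶(7)在 OpenCV中使用 Mask RCNN实现对象检测和实例分割 (OpenCV Advanced (7): object detection and instance segmentation with Mask RCNN in OpenCV)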