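Contents

- Preface
- I. The official KITTI depth-map documentation
  - 1. The official depth-map description
  - 2. Official depth-map reading code (Python)
  - 3. Understanding the depth map
    - 1. Data format and layout
    - 2. Depth-map processing
    - 3. Depth map to camera-coordinate depth
- II. Understanding the KITTI projection matrix P
  - 1. The P2 matrix as an example
  - 2. The intrinsic matrix (3x3)
  - 3. The special translation vector (column 4)
  - 4. What bx and by mean in KITTI
- III. Basic functions for KITTI depth-map conversion
  - 1. Image loading function
  - 2. Point-cloud loading function
  - 3. Calibration-file loading function
    - Inverting the extrinsic matrix (transpose method)
    - Inverting the extrinsic matrix (direct method)
  - 4. Point-cloud visualization function
- IV. Converting a KITTI depth map to point-cloud coordinates
  - 1. Reading the depth map and converting it to depth values
  - 2. Overall code: depth map to point-cloud coordinates
  - 3. Getting pixel coordinates and depth values
  - 4. Coordinate conversion to u, v, depth format
  - 5. Drawing depth values on the image
  - 6. Pixel and depth value to camera coordinates
  - 7. Camera coordinates to point-cloud coordinates
- V. Complete code and results
  - 1. Results
  - 2. Complete code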

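Preface

KITTI is a widely used dataset, but working out how its point-cloud coordinates map to pixel coordinates, and how to obtain the camera-frame depth value that belongs to each projected pixel, is fairly tedious and easy to get lost in if you are new to it. This article therefore covers how to convert KITTI point-cloud coordinates to pixel coordinates and how to obtain the depth value at each pixel; that is its main focus. Unlike most write-ups, it does not present the theory on its own: it performs the conversions directly in code, explains the principle at each step, and gives the complete code at the end.

Note: the part on converting a pixel plus depth value to camera coordinates shows how a depth value becomes a 3D camera-frame point. That part is especially important!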

一、kitti深度图官网介绍

1、官网深度图介绍

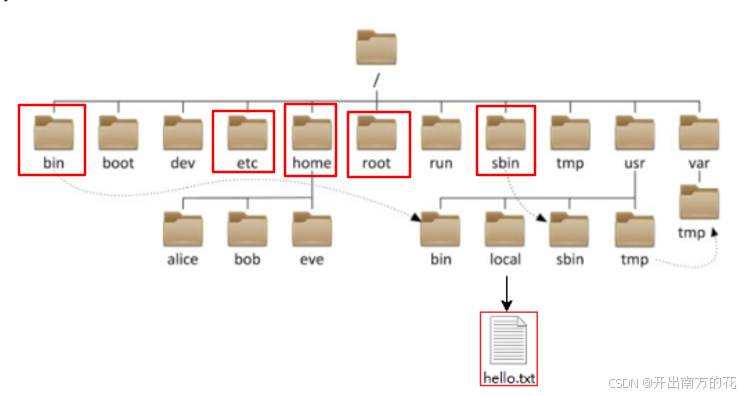

通过kitti官网下载后获得devkit内容获得了深度图说明,而devkit内容如下:

深度图解读如下,该部分内容来源readme.txt文件内容,我只是将其部分翻译成了中文而已。

###########################################################################

# THE KITTI VISION BENCHMARK: DEPTH PREDICTION/COMPLETION BENCHMARKS 2017 #

# based on our publication Sparsity Invariant CNNs (3DV 2017) #

# #

# Jonas Uhrig Nick Schneider Lukas Schneider #

# Uwe Franke Thomas Brox Andreas Geiger #

# #

# Daimler R&D Sindelfingen University of Freiburg #

# KIT Karlsruhe ETH Zürich MPI Tübingen #

# #

###########################################################################

This file describes the 2017 KITTI depth completion and single image depth
prediction benchmarks, consisting of 93k training and 1.5k test images.
Ground truth has been acquired by accumulating 3D point clouds from a
360 degree Velodyne HDL-64 Laserscanner and a consistency check using
stereo camera pairs. Please have a look at our publications for details.

In short: the benchmark covers depth completion and single-image depth prediction, with 93,000 training images and 1,500 test images. The ground truth is built by accumulating 3D point clouds from the 360-degree Velodyne HDL-64 laser scanner and checking them for consistency against the stereo camera pair.

Dataset description:
====================

If you unzip all downloaded files from the KITTI vision benchmark website
into the same base directory, your folder structure will look like this:

|-- devkit
|-- test_depth_completion_anonymous
  |-- image
    |-- 0000000000.png
    |-- ...
    |-- 0000000999.png
  |-- velodyne_raw
    |-- 0000000000.png
    |-- ...
    |-- 0000000999.png
|-- test_depth_prediction_anonymous
  |-- image
    |-- 0000000000.png
    |-- ...
    |-- 0000000999.png
|-- train
  |-- 2011_xx_xx_drive_xxxx_sync
    |-- proj_depth
      |-- groundtruth           # "groundtruth" describes our annotated depth maps
        |-- image_02            # image_02 is the depth map for the left camera
          |-- 0000000005.png    # image IDs start at 5 because we accumulate 11 frames
          |-- ...               # .. which is +-5 around the current frame ;)
        |-- image_03            # image_02 is the depth map for the right camera
          |-- 0000000005.png
          |-- ...
      |-- velodyne_raw          # this contains projected and temporally unrolled
        |-- image_02            # raw Velodyne laser scans
          |-- 0000000005.png
          |-- ...
        |-- image_03
          |-- 0000000005.png
          |-- ...
  |-- ... (all drives of all days in the raw KITTI dataset)
|-- val
  |-- (same as in train)
|-- val_selection_cropped       # 1000 images of size 1216x352, cropped and manually
  |-- groundtruth_depth         # selected frames from from the full validation split
    |-- 2011_xx_xx_drive_xxxx_sync_groundtruth_depth_xxxxxxxxxx_image_0x.png
    |-- ...
  |-- image
    |-- 2011_xx_xx_drive_xxxx_sync_groundtruth_depth_xxxxxxxxxx_image_0x.png
    |-- ...
  |-- velodyne_raw
    |-- 2011_xx_xx_drive_xxxx_sync_groundtruth_depth_xxxxxxxxxx_image_0x.png
    |-- ...

For train and val splits, the mapping from the KITTI raw dataset to our
generated depth maps and projected raw laser scans can be extracted. All
files are uniquely identified by their recording date, the drive ID as well
as the camera ID (02 for left, 03 for right camera).

In short: for the train and val splits, every generated depth map and projected raw laser scan can be traced back to its frame in the raw KITTI dataset. Each file is uniquely identified by its recording date, drive ID, and camera ID (02 = left camera, 03 = right camera).

Submission instructions:
========================

NOTE: WHEN SUBMITTING RESULTS, PLEASE STORE THEM IN THE SAME DATA FORMAT IN
WHICH THE GROUND TRUTH DATA IS PROVIDED (SEE BELOW), USING THE FILE NAMES
0000000000.png TO 0000000999.png (DEPTH COMPLETION) OR 0000000499.png (DEPTH
PREDICTION). CREATE A ZIP ARCHIVE OF THEM AND STORE YOUR RESULTS IN YOUR
ZIP'S ROOT FOLDER:

|-- zip
  |-- 0000000000.png
  |-- ...
  |-- 0000000999.png

Data format:
============

Depth maps (annotated and raw Velodyne scans) are saved as uint16 PNG images,
which can be opened with either MATLAB, libpng++ or the latest version of
Python's pillow (from PIL import Image). A 0 value indicates an invalid pixel
(ie, no ground truth exists, or the estimation algorithm didn't produce an
estimate for that pixel). Otherwise, the depth for a pixel can be computed
in meters by converting the uint16 value to float and dividing it by 256.0:

disp(u,v)  = ((float)I(u,v))/256.0;
valid(u,v) = I(u,v)>0;

In short: depth maps (both the annotated ground truth and the raw Velodyne projections) are stored as uint16 PNG images. A value of 0 marks an invalid pixel (no ground truth, or no estimate from the algorithm); any other value divided by 256.0 gives the depth in meters. Despite the name disp in the formula, the quantity is a depth, not a disparity.

Evaluation Code:
================

For transparency we have included the benchmark evaluation code in the
sub-folder 'cpp' of this development kit. It can be compiled by running
the 'make.sh' script. Run it using two arguments:

./evaluate_depth gt_dir prediction_dir

Note that gt_dir is most likely '../../val_selection_cropped/groundtruth_depth'
if you unzipped all files in the same base directory. We also included a sample
result of our proposed approach for the validation split ('predictions/sparseConv_val').

In short: the benchmark's evaluation code sits in the devkit's 'cpp' sub-folder and is compiled with make.sh. It takes the ground-truth directory and the prediction directory as its two arguments, and a sample result of the readme authors' method on the validation split ships under 'predictions/sparseConv_val'.
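The submission layout described in the readme (PNG files stored directly in the root of a ZIP archive) can be sketched with the standard library alone. This is a minimal illustration, not part of the devkit: the function name pack_submission and its arguments are made up for this example, and it assumes the prediction PNGs already carry the file names the readme asks for.

```python
import zipfile
from pathlib import Path


def pack_submission(pred_dir, zip_path):
    """Zip every PNG in pred_dir so that the files sit at the archive root.

    pack_submission is a hypothetical helper; pred_dir is assumed to hold
    files already named 0000000000.png, 0000000001.png, and so on.
    """
    pred_dir = Path(pred_dir)
    png_files = sorted(pred_dir.glob("*.png"))
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for png in png_files:
            # arcname drops the directory part, so the file lands in the root.
            archive.write(png, arcname=png.name)
    return [png.name for png in png_files]
```

Passing only the file name as arcname is what keeps the archive flat; without it, the ZIP would reproduce the directory path of the prediction folder.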

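2. Official depth-map reading code (Python)

The devkit ships depth-map readers in MATLAB, C++, and Python. Here is the Python version, with one change: the np.float alias used in the original has been removed from recent NumPy releases, so np.float64 is used instead.

#!/usr/bin/python

from PIL import Image
import numpy as np


def depth_read(filename):
    # loads depth map D from png file
    # and returns it as a numpy array,
    # for details see readme.txt
    depth_png = np.array(Image.open(filename), dtype=int)

    # make sure we have a proper 16bit depth map here.. not 8bit!
    assert(np.max(depth_png) > 255)

    # np.float was removed in NumPy 1.24; np.float64 behaves the same here
    depth = depth_png.astype(np.float64) / 256.
    # invalid pixels (raw value 0) are marked with -1
    depth[depth_png == 0] = -1.
    return depth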

3. Understanding the depth map

1. Data format and layout

The layout is the one given in the official readme: the directory tree quoted in section 1 above applies unchanged. The depth maps used here are the PNG files under train/2011_xx_xx_drive_xxxx_sync/proj_depth (and likewise under val), split into groundtruth (annotated depth maps) and velodyne_raw (projected raw laser scans), each with an image_02 folder for the left camera and an image_03 folder for the right camera. Frame IDs start at 0000000005 because the ground truth accumulates 11 frames, that is, ±5 around the current frame.
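To make the folder layout concrete, here is a small sketch that builds the path of one depth PNG from its parts. The helper name depth_map_path and its parameters are invented for illustration; the path components themselves follow the readme's directory tree, and the drive name in the usage line is the readme's placeholder, not a real drive.

```python
from pathlib import Path


def depth_map_path(base_dir, split, drive, kind, camera, frame):
    """Build the path of one depth PNG following the readme's folder layout.

    split:  "train" or "val"
    drive:  folder name of the form 2011_xx_xx_drive_xxxx_sync
    kind:   "groundtruth" or "velodyne_raw"
    camera: 2 (left) or 3 (right)
    frame:  integer frame index (zero-padded to 10 digits in the file name)
    """
    if kind not in ("groundtruth", "velodyne_raw"):
        raise ValueError(f"unknown kind: {kind}")
    if camera not in (2, 3):
        raise ValueError("camera must be 2 (left) or 3 (right)")
    return (Path(base_dir) / split / drive / "proj_depth" / kind
            / f"image_{camera:02d}" / f"{frame:010d}.png")


# Placeholder drive name taken from the readme; substitute a real one.
print(depth_map_path("data", "train", "2011_xx_xx_drive_xxxx_sync",
                     "groundtruth", 2, 5))
```

Keep in mind that, per the readme, the first available frame of a drive is 5, so a path built for frames 0 to 4 will not exist on disk.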

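2. Depth-map processing

The readme is explicit here: the depth maps (annotated ground truth and raw Velodyne projections alike) are stored as uint16 PNG images, which can be opened with MATLAB, libpng++, or a recent version of Python's Pillow (from PIL import Image). A value of 0 marks an invalid pixel (no ground truth exists, or the estimation algorithm produced no estimate for that pixel). For every other pixel, converting the uint16 value to float and dividing by 256.0 gives the depth in meters. The readme names the result disp, but it is a depth in meters, not a disparity:

disp(u,v) = ((float)I(u,v))/256.0;

valid(u,v) = I(u,v)>0;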
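The two formulas translate almost directly into NumPy. The sketch below decodes raw uint16 values into meters plus a validity mask, and also goes the other way, which is what storing results in the ground-truth format requires. The function names decode_depth and encode_depth are my own, and the rounding and clipping in the encoder are an assumption about how to handle values that do not fit, not something the readme specifies.

```python
import numpy as np


def decode_depth(png_values):
    """uint16 PNG values -> (depth in meters, validity mask)."""
    png_values = np.asarray(png_values)
    valid = png_values > 0                       # 0 marks an invalid pixel
    depth_m = png_values.astype(np.float32) / 256.0
    return depth_m, valid


def encode_depth(depth_m, valid):
    """Depth in meters -> uint16 PNG values, writing 0 for invalid pixels."""
    scaled = np.round(np.asarray(depth_m, dtype=np.float64) * 256.0)
    # Assumption: clip to the uint16 range instead of letting values wrap.
    scaled = np.clip(scaled, 0, 65535)
    return np.where(valid, scaled, 0).astype(np.uint16)


raw = np.array([[0, 256, 2560], [65535, 128, 1]], dtype=np.uint16)
depth, valid = decode_depth(raw)
print(depth)   # meters; raw 256 becomes 1.0 and raw 2560 becomes 10.0
print(valid)   # False only where the raw value is 0
```

Because the scale factor is 256, the format resolves depth in steps of 1/256 m and cannot represent anything beyond 65535/256, which is just under 256 m.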

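3. Depth map to camera-coordinate depth

This is simply the reading code from section 2 above, repeated here to stress what it returns: for each valid pixel, the depth in meters in the camera coordinate frame, with -1 marking invalid pixels. As before, np.float has been replaced by np.float64 because recent NumPy releases no longer provide the alias.

from PIL import Image
import numpy as np


def depth_read(filename):
    # loads depth map D from png file
    # and returns it as a numpy array,
    # for details see readme.txt
    depth_png = np.array(Image.open(filename), dtype=int)

    # make sure we have a proper 16bit depth map here.. not 8bit!
    assert(np.max(depth_png) > 255)

    # np.float was removed in NumPy 1.24; np.float64 behaves the same here
    depth = depth_png.astype(np.float64) / 256.
    # invalid pixels (raw value 0) are marked with -1
    depth[depth_png == 0] = -1.
    return depth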
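Since most pixels of a KITTI depth map are invalid, it is usually convenient to keep only the valid ones as (u, v, depth) rows. The following is a small sketch of that step under one assumption: the input is an array like the one depth_read returns, indexed as depth[v, u] with invalid pixels less than or equal to 0. The name valid_pixels is hypothetical.

```python
import numpy as np


def valid_pixels(depth):
    """Return an (N, 3) array of (u, v, depth) rows for pixels with depth > 0.

    depth is indexed as depth[v, u] (row = v, column = u). Testing for > 0
    rejects both a raw 0 and the -1 marker written by depth_read.
    """
    depth = np.asarray(depth, dtype=np.float64)
    v, u = np.nonzero(depth > 0)          # row indices first, then columns
    return np.stack([u, v, depth[v, u]], axis=1)


# Tiny synthetic map: two valid pixels, the rest marked invalid with -1.
demo = np.array([[-1.0, 2.5, -1.0],
                 [-1.0, -1.0, 10.0]])
print(valid_pixels(demo))
```

Note the index order: NumPy arrays are addressed row first, so the pixel the readme writes as I(u,v) is depth[v, u] in the array.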

II. Understanding the KITTI projection matrix P

1. The P2 matrix as an example

Every value in a KITTI calibration file has a specific meaning. The P2 entry below serves as the example:

P2: 7.215377000000e+02 0.000000000000e+00 6.095593000000e+02 4.485728000000e+01
0.000000000000e+00 7.215377000000e+02 1.728540000000e+02 2.163791000000e-01
0.000000000000e+00 0.000000000000e+00 1.000000000000e+00 2.745884000000e-03

Its twelve values can be arranged as a 3x4 matrix:

[ 7.215377000000e+02 0.000000000000e+00 6.095593000000e+02 4.485728000000e+01 ]
[ 0.000000000000e+00 7.215377000000e+02 1.728540000000e+02 2.163791000000e-01 ]
[ 0.000000000000e+00 0.000000000000e+00 1.000000000000e+00 2.745884000000e-03 ]

The matrix splits into two parts: the left 3x3 block is the camera's intrinsic matrix, and the rightmost column (column 4) holds the translation-related terms of the projection from the camera coordinate frame to the image.
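The split described above is easy to reproduce in code. The sketch below parses the P2 values quoted in this section into a 3x4 array and slices it into the 3x3 block and the fourth column. It assumes the twelve values follow the "P2:" label on a single line; parse_projection is a name chosen for this example.

```python
import numpy as np

# The P2 values quoted above, joined into one line (assumed file format).
P2_LINE = ("P2: 7.215377000000e+02 0.000000000000e+00 6.095593000000e+02 "
           "4.485728000000e+01 0.000000000000e+00 7.215377000000e+02 "
           "1.728540000000e+02 2.163791000000e-01 0.000000000000e+00 "
           "0.000000000000e+00 1.000000000000e+00 2.745884000000e-03")


def parse_projection(line):
    """Parse one 'Pn: v0 v1 ... v11' line into (name, 3x4 matrix)."""
    name, values = line.split(":", 1)
    numbers = [float(v) for v in values.split()]
    if len(numbers) != 12:
        raise ValueError(f"expected 12 values, got {len(numbers)}")
    # Values are stored row by row, so a plain reshape restores the matrix.
    return name.strip(), np.array(numbers).reshape(3, 4)


name, P2 = parse_projection(P2_LINE)
K = P2[:, :3]           # left 3x3 block: intrinsic matrix
fourth_col = P2[:, 3]   # rightmost column: translation-related terms
print(name)
print(K)
print(fourth_col)
```

Reading the values through float() also makes the scientific notation explicit: 7.215377000000e+02 is 721.5377, not 7.215377.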

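2. The intrinsic matrix (3x3)

- Row 1, column 1 (fx): the focal length along the x axis, in pixels. It sets how physical size maps to pixels horizontally. In this example, fx = 721.5377.
- Row 2, column 2 (fy): the focal length along the y axis, in pixels. It plays the same role vertically. In this example, fy = 721.5377.
- Row 1, column 3 (cx): the x coordinate, in pixels, of the principal point, i.e. where the optical axis meets the image plane, which lies near the image center. In this example, cx = 609.5593.
- Row 2, column 3 (cy): the y coordinate, in pixels, of the principal point. In this example, cy = 172.8540.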
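To see what these four numbers do, here is a minimal pinhole sketch that projects a camera-frame point to a pixel and inverts the step when the depth is known. It uses only fx, fy, cx, cy from the P2 example and deliberately ignores the fourth column of P2, so treat it as an illustration of the intrinsics alone rather than the full KITTI projection. The function names are my own.

```python
import numpy as np

# Intrinsics read off the P2 example above, in pixels.
FX, FY = 721.5377, 721.5377
CX, CY = 609.5593, 172.8540


def project(point_cam):
    """Camera-frame point (x, y, z) with z > 0 -> pixel (u, v)."""
    x, y, z = point_cam
    return FX * x / z + CX, FY * y / z + CY


def back_project(u, v, depth):
    """Pixel (u, v) with depth z along the optical axis -> (x, y, z)."""
    x = (u - CX) * depth / FX
    y = (v - CY) * depth / FY
    return np.array([x, y, depth])


u, v = project((1.0, -0.5, 10.0))   # a point 10 m in front of the camera
print(u, v)
print(back_project(u, v, 10.0))     # recovers the original point
```

A point on the optical axis (x = y = 0) lands exactly on (cx, cy), which is why those two entries are read as the principal point.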

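3. The special translation vector (column 4)

- Row 1, column 4 (tx): the x-axis translation term of the projection. It is not a plain pixel distance: it combines the focal length with the camera's offset from the reference camera. In this example, tx = 44.857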