Contents

- 1. Sine data generation

- 2. Building the network

- 3. Training

- 4. Prediction

- 5. Complete code

- 6. Results

1. Sine data generation

The curve is shown in the figure below:

The code is shown in the figure below:

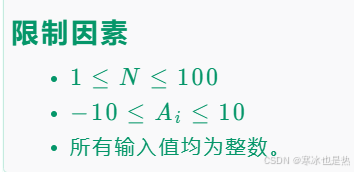

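- Each sine curve is made up of 50 points.

- A random integer start is drawn from {0, 1, 2} (`np.random.randint(3)`). The randomness keeps the curve from always beginning at the same point; otherwise every 50-point sine curve would be identical and the model could simply memorize it. The sampled time range is [start, start + 10].

- The input x consists of points 0 to 48 and the target y of points 1 to 49, so each target is the point that follows its input.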

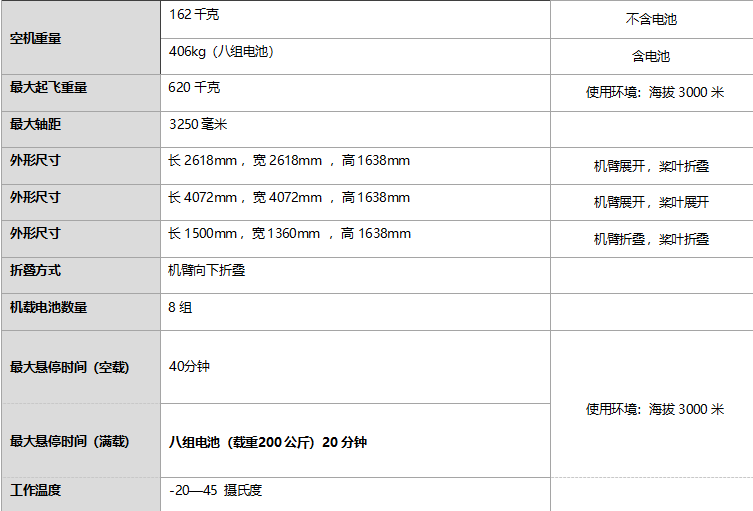
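The data generation steps above can be sketched as a small helper. This is a minimal sketch, not the author's exact code: the function name `make_sine_sample` and the `rng` parameter are assumptions introduced for illustration, and it uses `np.random.default_rng` rather than the `np.random.randint` call in the full listing so that the example can be seeded.

```python
import numpy as np

NUM_TIME_STEPS = 50  # same value as num_time_steps in the full listing


def make_sine_sample(num_time_steps=NUM_TIME_STEPS, rng=None):
    """Return (time_steps, x, y) for one randomly shifted sine segment.

    Hypothetical helper: the name and the rng argument are not from the original post.
    """
    rng = np.random.default_rng() if rng is None else rng
    # Integer offset drawn from {0, 1, 2}; the upper bound 3 is exclusive.
    start = int(rng.integers(0, 3))
    time_steps = np.linspace(start, start + 10, num_time_steps)
    data = np.sin(time_steps).reshape(num_time_steps, 1)
    x = data[:-1]  # points 0..48: model input
    y = data[1:]   # points 1..49: next-step targets
    return time_steps, x, y


time_steps, x, y = make_sine_sample(rng=np.random.default_rng(0))
print(x.shape, y.shape)  # (49, 1) (49, 1)
```

Because y is x shifted by one step, `x[1:]` and `y[:-1]` hold the same values, which is what makes this a next-point prediction task.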

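2. Building the network

The network is shown in the figure below. Note that out is given one extra leading dimension (`unsqueeze(dim=0)`) so that its shape matches that of y.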

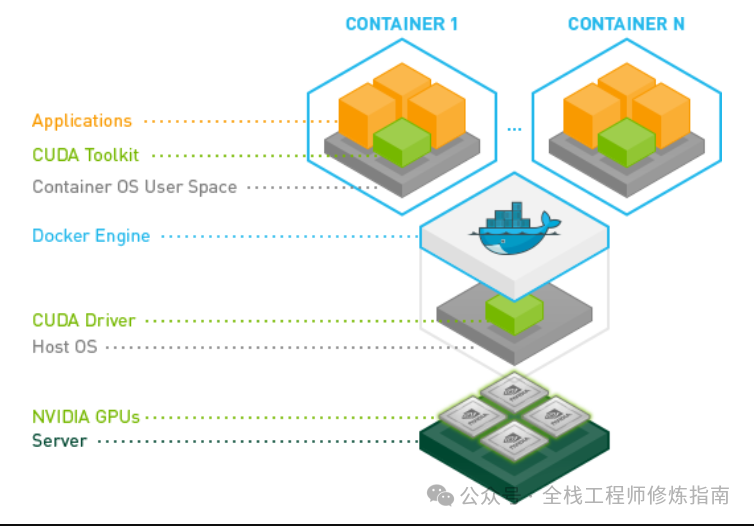
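To make the dimension handling concrete, the following sketch traces tensor shapes through the same sequence of operations used in the forward pass (RNN, `view`, linear layer, `unsqueeze`). It assumes a batch size of 1 and a sequence length of 49, as in the full listing; the variable names `shape_rnn`, `shape_flat`, `shape_linear`, and `shape_final` are introduced here only for illustration.

```python
import torch
import torch.nn as nn

hidden_size = 16
rnn = nn.RNN(input_size=1, hidden_size=hidden_size, num_layers=1, batch_first=True)
linear = nn.Linear(hidden_size, 1)

x = torch.zeros(1, 49, 1)            # [batch, seq, feature]
h0 = torch.zeros(1, 1, hidden_size)  # [num_layers, batch, hidden]

out, h = rnn(x, h0)
shape_rnn = tuple(out.shape)         # (1, 49, 16)

out = out.view(-1, hidden_size)      # merge batch and seq for the linear layer
shape_flat = tuple(out.shape)        # (49, 16)

out = linear(out)
shape_linear = tuple(out.shape)      # (49, 1)

out = out.unsqueeze(dim=0)           # restore the leading batch dimension
shape_final = tuple(out.shape)       # (1, 49, 1), same shape as y

print(shape_rnn, shape_flat, shape_linear, shape_final)
```

Note that `unsqueeze(dim=0)` only restores the original layout when the batch size is 1, which is the case throughout this post.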

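3. Training

The loss is the mean squared error (MSE), and the optimizer is Adam.

Note the self-update of hidden_prev: the hidden state returned by one iteration is detached from the computation graph and passed in as the initial hidden state of the next iteration.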
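The hidden-state update can be illustrated with a few training steps on a fixed sine segment. This is a minimal sketch under simplifying assumptions: it uses a bare `nn.RNN` plus a linear layer named `head` instead of the `Net` class, a fixed (non-random) segment, and only three iterations. The key line is `hidden_prev.detach()`, which carries the hidden values forward while cutting the link to the previous iteration's graph; without it, the second `backward()` call would typically fail because it would try to backpropagate through a graph that has already been freed.

```python
import torch
import torch.nn as nn
import torch.optim as optim

torch.manual_seed(0)
hidden_size = 16
rnn = nn.RNN(input_size=1, hidden_size=hidden_size, batch_first=True)
head = nn.Linear(hidden_size, 1)  # hypothetical name for the output layer
params = list(rnn.parameters()) + list(head.parameters())

criterion = nn.MSELoss()
optimizer = optim.Adam(params, lr=0.01)

# Fixed toy segment: inputs are points 0..48, targets are points 1..49.
data = torch.sin(torch.linspace(0, 10, 50)).view(1, 50, 1)
x, y = data[:, :-1, :], data[:, 1:, :]

hidden_prev = torch.zeros(1, 1, hidden_size)
losses = []
for step in range(3):
    out, hidden_prev = rnn(x, hidden_prev)
    # Keep the values, drop the autograd history of this iteration.
    hidden_prev = hidden_prev.detach()
    loss = criterion(head(out), y)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    losses.append(loss.item())

print(hidden_prev.requires_grad)  # False
```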

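4. Prediction

Prediction runs in a loop, one point at a time: each predicted point is used as the input for the next step, until all points have been predicted and collected in predictions.

input = x[:,0,:] drops the seq dimension of x, whose shape is [1, seq, 1], leaving a tensor of shape [1, 1].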
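The point-by-point rollout can be sketched as follows. The sketch assumes an untrained `nn.RNN` with a linear layer named `head`, so the predicted values themselves are meaningless; it is only meant to show the shapes and the feedback of each prediction into the next step. The names `inp` and `hidden` are illustrative substitutes for `input` and `hidden_prev`.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
hidden_size = 16
rnn = nn.RNN(input_size=1, hidden_size=hidden_size, batch_first=True)
head = nn.Linear(hidden_size, 1)  # hypothetical name for the output layer

# Inputs: points 0..48 of a 50-point sine segment, shaped [1, seq, 1].
x = torch.sin(torch.linspace(0, 10, 50))[:-1].view(1, 49, 1)
hidden = torch.zeros(1, 1, hidden_size)

predictions = []
inp = x[:, 0, :]                  # first point only, shape [1, 1]
first_shape = tuple(inp.shape)

with torch.no_grad():             # no gradients are needed for inference
    for _ in range(x.shape[1]):
        inp = inp.view(1, 1, 1)   # back to [batch, seq=1, feature]
        out, hidden = rnn(inp, hidden)
        pred = head(out)          # shape [1, 1, 1]
        predictions.append(pred.item())
        inp = pred                # feed the prediction back in

print(first_shape, len(predictions))  # (1, 1) 49
```

Only the first true point is ever shown to the model; every later input is the model's own previous output, so errors can accumulate along the rollout.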

5. Complete code

import numpy as np
import torch
import torch.nn as nn
import torch.optim as optim
from matplotlib import pyplot as plt

# Hyperparameters
num_time_steps = 50  # points per sine segment
input_size = 1
hidden_size = 16
output_size = 1
lr = 0.01


class Net(nn.Module):

    def __init__(self):
        super(Net, self).__init__()

        self.rnn = nn.RNN(
            input_size=input_size,
            hidden_size=hidden_size,
            num_layers=1,
            batch_first=True,
        )
        # Initialize the RNN parameters close to zero
        for p in self.rnn.parameters():
            nn.init.normal_(p, mean=0.0, std=0.001)

        self.linear = nn.Linear(hidden_size, output_size)

    def forward(self, x, hidden_prev):
        out, hidden_prev = self.rnn(x, hidden_prev)
        # out: [b, seq, h]
        out = out.view(-1, hidden_size)  # [b * seq, h]
        out = self.linear(out)           # [b * seq, 1]
        out = out.unsqueeze(dim=0)       # [1, seq, 1] for b == 1, matches y
        return out, hidden_prev


model = Net()
criterion = nn.MSELoss()
optimizer = optim.Adam(model.parameters(), lr)

# Initial hidden state: [num_layers, batch, hidden]
hidden_prev = torch.zeros(1, 1, hidden_size)

# Training
for iter in range(6000):
    # Random integer start in {0, 1, 2}
    start = np.random.randint(3, size=1)[0]
    time_steps = np.linspace(start, start + 10, num_time_steps)
    data = np.sin(time_steps)
    data = data.reshape(num_time_steps, 1)
    # x: points 0..48, y: points 1..49 (next-step targets)
    x = torch.tensor(data[:-1]).float().view(1, num_time_steps - 1, 1)
    y = torch.tensor(data[1:]).float().view(1, num_time_steps - 1, 1)

    output, hidden_prev = model(x, hidden_prev)
    # Carry the hidden state forward without its autograd history
    hidden_prev = hidden_prev.detach()

    loss = criterion(output, y)
    model.zero_grad()
    loss.backward()
    # for p in model.parameters():
    #     print(p.grad.norm())
    #     torch.nn.utils.clip_grad_norm_(p, 10)
    optimizer.step()

    if iter % 100 == 0:
        print("Iteration: {} loss {}".format(iter, loss.item()))

# Prediction on a fresh segment
start = np.random.randint(3, size=1)[0]
time_steps = np.linspace(start, start + 10, num_time_steps)
data = np.sin(time_steps)
data = data.reshape(num_time_steps, 1)
x = torch.tensor(data[:-1]).float().view(1, num_time_steps - 1, 1)
y = torch.tensor(data[1:]).float().view(1, num_time_steps - 1, 1)

predictions = []
input = x[:, 0, :]  # first point only, shape [1, 1]
for _ in range(x.shape[1]):
    input = input.view(1, 1, 1)
    (pred, hidden_prev) = model(input, hidden_prev)
    input = pred  # feed the prediction back in as the next input
    predictions.append(pred.detach().numpy().ravel()[0])

# Plot the true points and the predictions
x = x.data.numpy().ravel()
y = y.data.numpy()
plt.scatter(time_steps[:-1], x.ravel(), s=90)
plt.plot(time_steps[:-1], x.ravel())
plt.scatter(time_steps[1:], predictions)
plt.show()

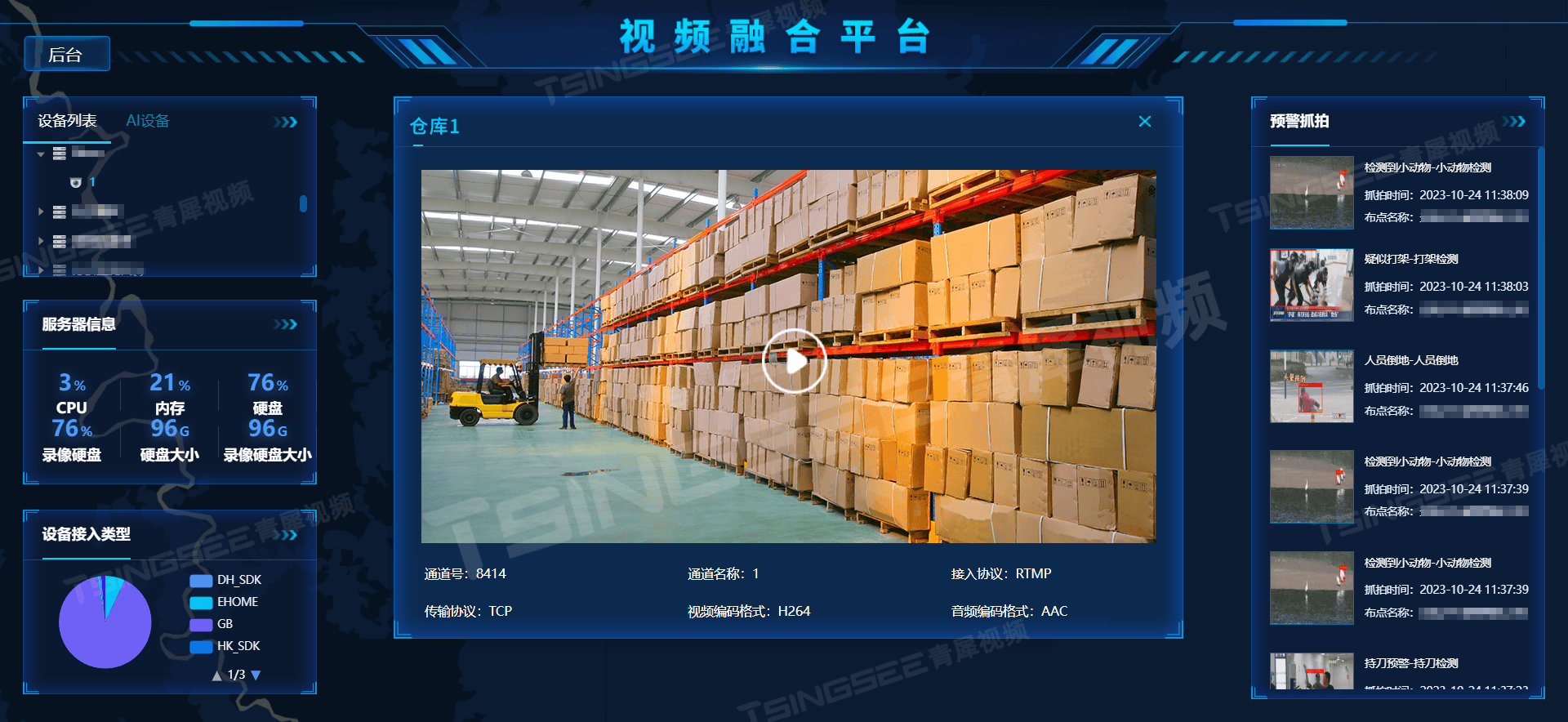

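6. Results

In the figures, the yellow points are the predictions and the blue points are the actual values. The first curve shows prediction when start is not randomized, which indicates that the model has memorized the curve; the second curve shows prediction when start is random, where the overall trend still agrees with the true points.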
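The visual comparison can be complemented with a single number. The sketch below computes the mean squared error between a rollout and its targets; the helper name `rollout_mse` is an assumption for illustration and is not part of the original code, and the inputs here are synthetic stand-ins rather than actual model output.

```python
import numpy as np


def rollout_mse(predictions, targets):
    """Mean squared error between predicted and true points (hypothetical helper)."""
    predictions = np.asarray(predictions, dtype=float).ravel()
    targets = np.asarray(targets, dtype=float).ravel()
    if predictions.shape != targets.shape:
        raise ValueError("predictions and targets must have the same length")
    return float(np.mean((predictions - targets) ** 2))


# Synthetic check: targets are points 1..49 of a 50-point sine segment.
targets = np.sin(np.linspace(0, 10, 50))[1:]
perfect = rollout_mse(targets, targets)        # identical arrays give 0.0
shifted = rollout_mse(targets + 0.1, targets)  # constant offset of 0.1 gives about 0.01
print(perfect, shifted)
```

With the full listing, one would pass `predictions` and the flattened `y` array to the helper after the prediction loop.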