ShuffleNet图像分类

当前案例不支持在GPU设备上静态图模式运行,其他模式运行皆支持。

ShuffleNet网络介绍

ShuffleNetV1是旷视科技提出的一种计算高效的CNN模型,和MobileNet, SqueezeNet等一样主要应用在移动端,所以模型的设计目标就是利用有限的计算资源来达到最好的模型精度。ShuffleNetV1的设计核心是引入了两种操作:Pointwise Group Convolution和Channel Shuffle,这在保持精度的同时大大降低了模型的计算量。因此,ShuffleNetV1和MobileNet类似,都是通过设计更高效的网络结构来实现模型的压缩和加速。

了解ShuffleNet更多详细内容,详见论文ShuffleNet。

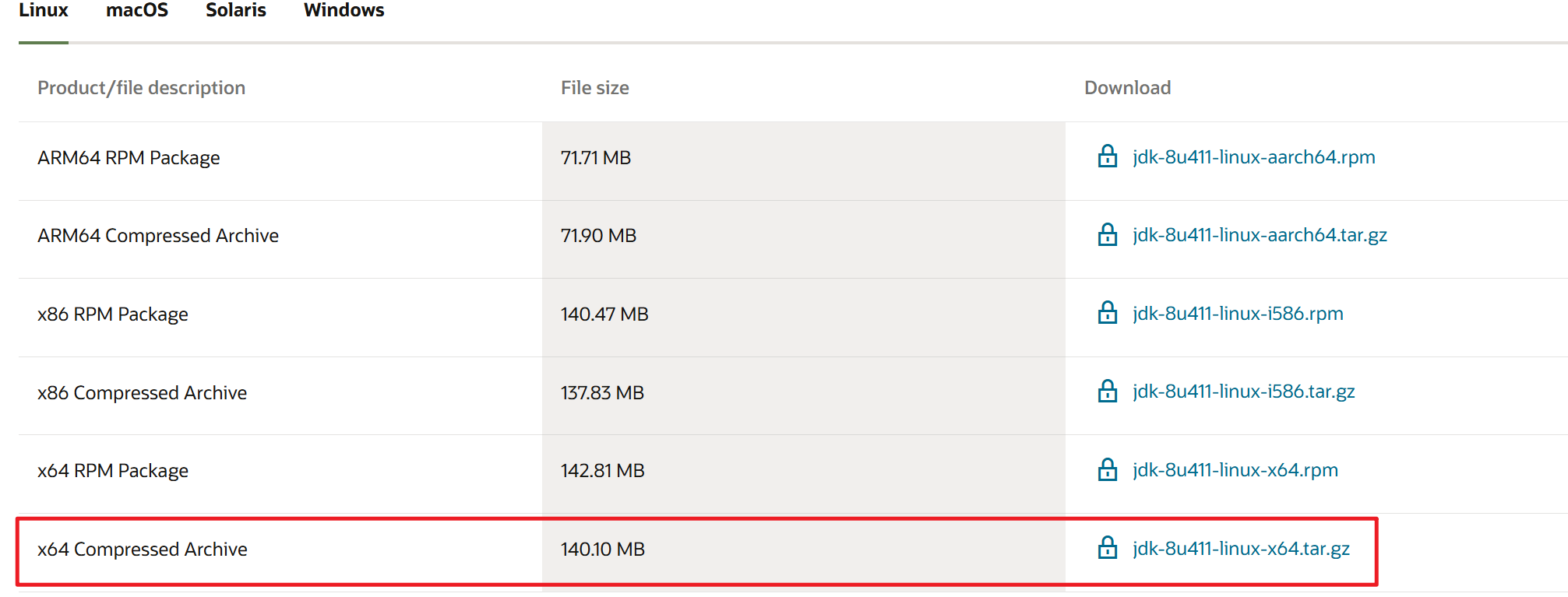

如下图所示,ShuffleNet在保持不低的准确率的前提下,将参数量几乎降低到了最小,因此其运算速度较快,单位参数量对模型准确率的贡献非常高。

图片来源:Bianco S, Cadene R, Celona L, et al. Benchmark analysis of representative deep neural network architectures[J]. IEEE access, 2018, 6: 64270-64277.

模型架构

ShuffleNet最显著的特点在于对不同通道进行重排来解决Group Convolution带来的弊端。通过对ResNet的Bottleneck单元进行改进,在较小的计算量的情况下达到了较高的准确率。

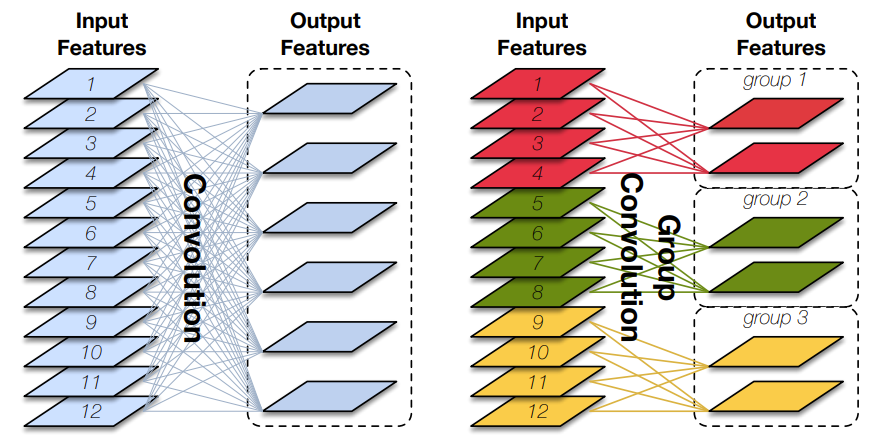

Pointwise Group Convolution

Group Convolution(分组卷积)原理如下图所示,相比于普通的卷积操作,分组卷积的情况下,每一组的卷积核大小为in_channels/g*k*k,一共有g组,所有组共有(in_channels/g*k*k)*out_channels个参数,是正常卷积参数的1/g。分组卷积中,每个卷积核只处理输入特征图的一部分通道,其优点在于参数量会有所降低,但输出通道数仍等于卷积核的数量。

图片来源:Huang G, Liu S, Van der Maaten L, et al. Condensenet: An efficient densenet using learned group convolutions[C]//Proceedings of the IEEE conference on computer vision and pattern recognition. 2018: 2752-2761.

Depthwise Convolution(深度可分离卷积)将组数g分为和输入通道相等的in_channels,然后对每一个in_channels做卷积操作,每个卷积核只处理一个通道,记卷积核大小为1*k*k,则卷积核参数量为:in_channels*k*k,得到的feature maps通道数与输入通道数相等;

Pointwise Group Convolution(逐点分组卷积)在分组卷积的基础上,令每一组的卷积核大小为 1 × 1 1\times 1 1×1,卷积核参数量为(in_channels/g*1*1)*out_channels。

%%capture captured_output

# 实验环境已经预装了mindspore==2.2.14,如需更换mindspore版本,可更改下面mindspore的版本号

!pip uninstall mindspore -y

!pip install -i https://pypi.mirrors.ustc.edu.cn/simple mindspore==2.2.14

# 查看当前 mindspore 版本

!pip show mindspore

Name: mindspore

Version: 2.2.14

Summary: MindSpore is a new open source deep learning training/inference framework that could be used for mobile, edge and cloud scenarios.

Home-page: https://www.mindspore.cn

Author: The MindSpore Authors

Author-email: contact@mindspore.cn

License: Apache 2.0

Location: /home/nginx/miniconda/envs/jupyter/lib/python3.9/site-packages

Requires: asttokens, astunparse, numpy, packaging, pillow, protobuf, psutil, scipy

Required-by:

from mindspore import nn

import mindspore.ops as ops

from mindspore import Tensor

class GroupConv(nn.Cell):

def __init__(self, in_channels, out_channels, kernel_size,

stride, pad_mode="pad", pad=0, groups=1, has_bias=False):

super(GroupConv, self).__init__()

self.groups = groups

self.convs = nn.CellList()

for _ in range(groups):

self.convs.append(nn.Conv2d(in_channels // groups, out_channels // groups,

kernel_size=kernel_size, stride=stride, has_bias=has_bias,

padding=pad, pad_mode=pad_mode, group=1, weight_init='xavier_uniform'))

def construct(self, x):

features = ops.split(x, split_size_or_sections=int(len(x[0]) // self.groups), axis=1)

outputs = ()

for i in range(self.groups):

outputs = outputs + (self.convs[i](features[i].astype("float32")),)

out = ops.cat(outputs, axis=1)

return out

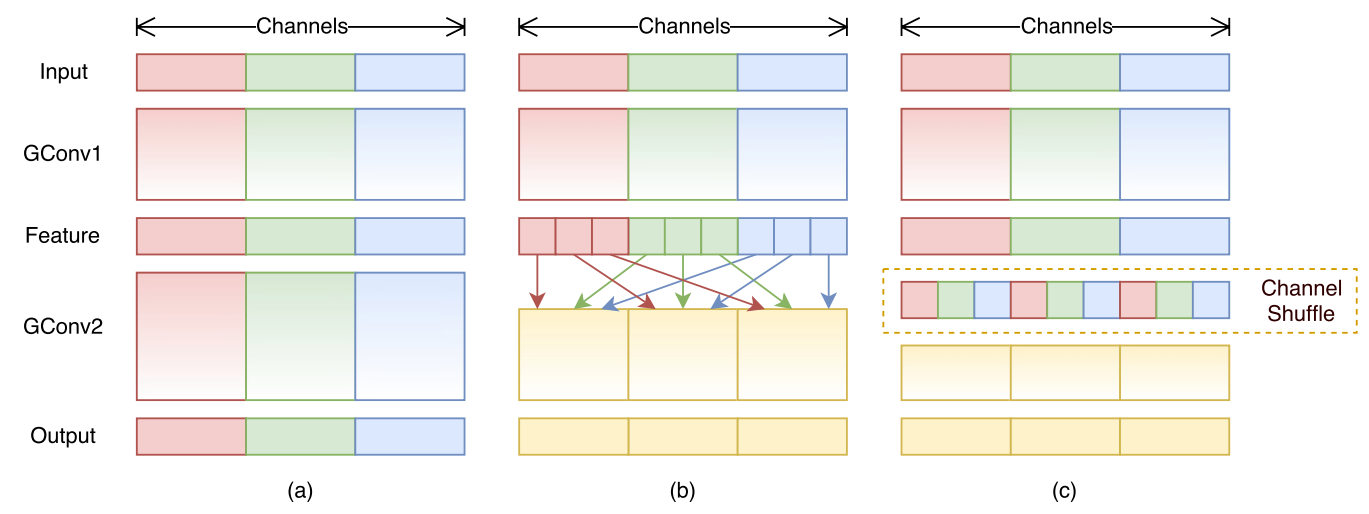

Channel Shuffle

Group Convolution的弊端在于不同组别的通道无法进行信息交流,堆积GConv层后一个问题是不同组之间的特征图是不通信的,这就好像分成了g个互不相干的道路,每一个人各走各的,这可能会降低网络的特征提取能力。这也是Xception,MobileNet等网络采用密集的1x1卷积(Dense Pointwise Convolution)的原因。

为了解决不同组别通道“近亲繁殖”的问题,ShuffleNet优化了大量密集的1x1卷积(在使用的情况下计算量占用率达到了惊人的93.4%),引入Channel Shuffle机制(通道重排)。这项操作直观上表现为将不同分组通道均匀分散重组,使网络在下一层能处理不同组别通道的信息。

如下图所示,对于g组,每组有n个通道的特征图,首先reshape成g行n列的矩阵,再将矩阵转置成n行g列,最后进行flatten操作,得到新的排列。这些操作都是可微分可导的且计算简单,在解决了信息交互的同时符合了ShuffleNet轻量级网络设计的轻量特征。

为了阅读方便,将Channel Shuffle的代码实现放在下方ShuffleNet模块的代码中。

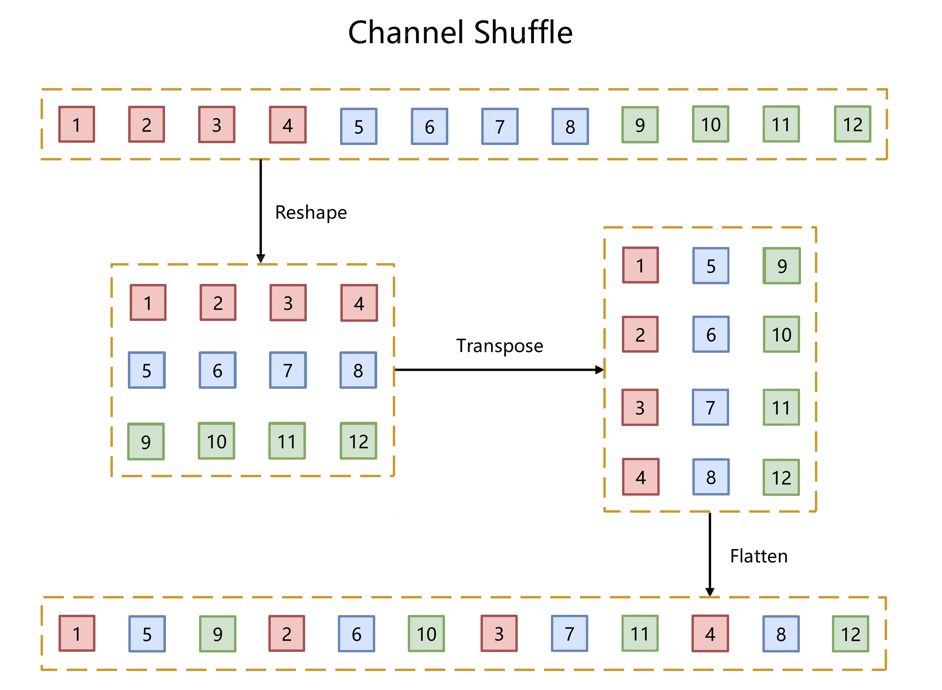

ShuffleNet模块

如下图所示,ShuffleNet对ResNet中的Bottleneck结构进行由(a)到(b), ©的更改:

-

将开始和最后的 1 × 1 1\times 1 1×1卷积模块(降维、升维)改成Point Wise Group Convolution;

-

为了进行不同通道的信息交流,再降维之后进行Channel Shuffle;

-

降采样模块中, 3 × 3 3 \times 3 3×3 Depth Wise Convolution的步长设置为2,长宽降为原来的一般,因此shortcut中采用步长为2的 3 × 3 3\times 3 3×3平均池化,并把相加改成拼接。

class ShuffleV1Block(nn.Cell):

def __init__(self, inp, oup, group, first_group, mid_channels, ksize, stride):

super(ShuffleV1Block, self).__init__()

self.stride = stride

pad = ksize // 2

self.group = group

if stride == 2:

outputs = oup - inp

else:

outputs = oup

self.relu = nn.ReLU()

branch_main_1 = [

GroupConv(in_channels=inp, out_channels=mid_channels,

kernel_size=1, stride=1, pad_mode="pad", pad=0,

groups=1 if first_group else group),

nn.BatchNorm2d(mid_channels),

nn.ReLU(),

]

branch_main_2 = [

nn.Conv2d(mid_channels, mid_channels, kernel_size=ksize, stride=stride,

pad_mode='pad', padding=pad, group=mid_channels,

weight_init='xavier_uniform', has_bias=False),

nn.BatchNorm2d(mid_channels),

GroupConv(in_channels=mid_channels, out_channels=outputs,

kernel_size=1, stride=1, pad_mode="pad", pad=0,

groups=group),

nn.BatchNorm2d(outputs),

]

self.branch_main_1 = nn.SequentialCell(branch_main_1)

self.branch_main_2 = nn.SequentialCell(branch_main_2)

if stride == 2:

self.branch_proj = nn.AvgPool2d(kernel_size=3, stride=2, pad_mode='same')

def construct(self, old_x):

left = old_x

right = old_x

out = old_x

right = self.branch_main_1(right)

if self.group > 1:

right = self.channel_shuffle(right)

right = self.branch_main_2(right)

if self.stride == 1:

out = self.relu(left + right)

elif self.stride == 2:

left = self.branch_proj(left)

out = ops.cat((left, right), 1)

out = self.relu(out)

return out

def channel_shuffle(self, x):

batchsize, num_channels, height, width = ops.shape(x)

group_channels = num_channels // self.group

x = ops.reshape(x, (batchsize, group_channels, self.group, height, width))

x = ops.transpose(x, (0, 2, 1, 3, 4))

x = ops.reshape(x, (batchsize, num_channels, height, width))

return x

构建ShuffleNet网络

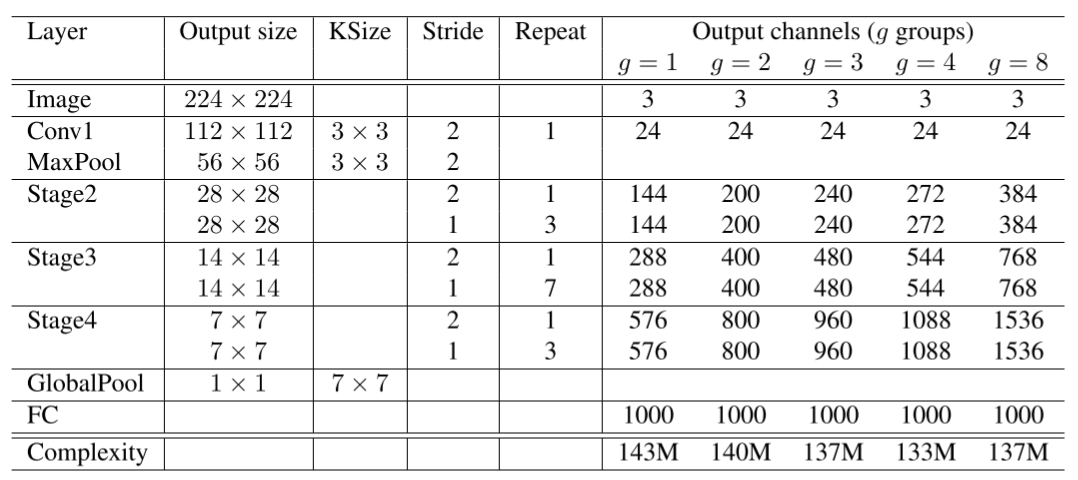

ShuffleNet网络结构如下图所示,以输入图像 224 × 224 224 \times 224 224×224,组数3(g = 3)为例,首先通过数量24,卷积核大小为 3 × 3 3 \times 3 3×3,stride为2的卷积层,输出特征图大小为 112 × 112 112 \times 112 112×112,channel为24;然后通过stride为2的最大池化层,输出特征图大小为 56 × 56 56 \times 56 56×56,channel数不变;再堆叠3个ShuffleNet模块(Stage2, Stage3, Stage4),三个模块分别重复4次、8次、4次,其中每个模块开始先经过一次下采样模块(上图©),使特征图长宽减半,channel翻倍(Stage2的下采样模块除外,将channel数从24变为240);随后经过全局平均池化,输出大小为 1 × 1 × 960 1 \times 1 \times 960 1×1×960,再经过全连接层和softmax,得到分类概率。

class ShuffleNetV1(nn.Cell):

def __init__(self, n_class=1000, model_size='2.0x', group=3):

super(ShuffleNetV1, self).__init__()

print('model size is ', model_size)

self.stage_repeats = [4, 8, 4]

self.model_size = model_size

if group == 3:

if model_size == '0.5x':

self.stage_out_channels = [-1, 12, 120, 240, 480]

elif model_size == '1.0x':

self.stage_out_channels = [-1, 24, 240, 480, 960]

elif model_size == '1.5x':

self.stage_out_channels = [-1, 24, 360, 720, 1440]

elif model_size == '2.0x':

self.stage_out_channels = [-1, 48, 480, 960, 1920]

else:

raise NotImplementedError

elif group == 8:

if model_size == '0.5x':

self.stage_out_channels = [-1, 16, 192, 384, 768]

elif model_size == '1.0x':

self.stage_out_channels = [-1, 24, 384, 768, 1536]

elif model_size == '1.5x':

self.stage_out_channels = [-1, 24, 576, 1152, 2304]

elif model_size == '2.0x':

self.stage_out_channels = [-1, 48, 768, 1536, 3072]

else:

raise NotImplementedError

input_channel = self.stage_out_channels[1]

self.first_conv = nn.SequentialCell(

nn.Conv2d(3, input_channel, 3, 2, 'pad', 1, weight_init='xavier_uniform', has_bias=False),

nn.BatchNorm2d(input_channel),

nn.ReLU(),

)

self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, pad_mode='same')

features = []

for idxstage in range(len(self.stage_repeats)):

numrepeat = self.stage_repeats[idxstage]

output_channel = self.stage_out_channels[idxstage + 2]

for i in range(numrepeat):

stride = 2 if i == 0 else 1

first_group = idxstage == 0 and i == 0

features.append(ShuffleV1Block(input_channel, output_channel,

group=group, first_group=first_group,

mid_channels=output_channel // 4, ksize=3, stride=stride))

input_channel = output_channel

self.features = nn.SequentialCell(features)

self.globalpool = nn.AvgPool2d(7)

self.classifier = nn.Dense(self.stage_out_channels[-1], n_class)

def construct(self, x):

x = self.first_conv(x)

x = self.maxpool(x)

x = self.features(x)

x = self.globalpool(x)

x = ops.reshape(x, (-1, self.stage_out_channels[-1]))

x = self.classifier(x)

return x

模型训练和评估

采用CIFAR-10数据集对ShuffleNet进行预训练。

训练集准备与加载

采用CIFAR-10数据集对ShuffleNet进行预训练。CIFAR-10共有60000张32*32的彩色图像,均匀地分为10个类别,其中50000张图片作为训练集,10000图片作为测试集。如下示例使用mindspore.dataset.Cifar10Dataset接口下载并加载CIFAR-10的训练集。目前仅支持二进制版本(CIFAR-10 binary version)。

from download import download

url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/notebook/datasets/cifar-10-binary.tar.gz"

download(url, "./dataset", kind="tar.gz", replace=True)

Downloading data from https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/notebook/datasets/cifar-10-binary.tar.gz (162.2 MB)

file_sizes: 100%|█████████████████████████████| 170M/170M [00:01<00:00, 111MB/s]

Extracting tar.gz file...

Successfully downloaded / unzipped to ./dataset

'./dataset'

import mindspore as ms

from mindspore.dataset import Cifar10Dataset

from mindspore.dataset import vision, transforms

def get_dataset(train_dataset_path, batch_size, usage):

image_trans = []

if usage == "train":

image_trans = [

vision.RandomCrop((32, 32), (4, 4, 4, 4)),

vision.RandomHorizontalFlip(prob=0.5),

vision.Resize((224, 224)),

vision.Rescale(1.0 / 255.0, 0.0),

vision.Normalize([0.4914, 0.4822, 0.4465], [0.2023, 0.1994, 0.2010]),

vision.HWC2CHW()

]

elif usage == "test":

image_trans = [

vision.Resize((224, 224)),

vision.Rescale(1.0 / 255.0, 0.0),

vision.Normalize([0.4914, 0.4822, 0.4465], [0.2023, 0.1994, 0.2010]),

vision.HWC2CHW()

]

label_trans = transforms.TypeCast(ms.int32)

dataset = Cifar10Dataset(train_dataset_path, usage=usage, shuffle=True)

dataset = dataset.map(image_trans, 'image')

dataset = dataset.map(label_trans, 'label')

dataset = dataset.batch(batch_size, drop_remainder=True)

return dataset

dataset = get_dataset("./dataset/cifar-10-batches-bin", 128, "train")

batches_per_epoch = dataset.get_dataset_size()

模型训练

本节用随机初始化的参数做预训练。首先调用ShuffleNetV1定义网络,参数量选择"2.0x",并定义损失函数为交叉熵损失,学习率经过4轮的warmup后采用余弦退火,优化器采用Momentum。最后用train.model中的Model接口将模型、损失函数、优化器封装在model中,并用model.train()对网络进行训练。将ModelCheckpoint、CheckpointConfig、TimeMonitor和LossMonitor传入回调函数中,将会打印训练的轮数、损失和时间,并将ckpt文件保存在当前目录下。

import time

import mindspore

import numpy as np

from mindspore import Tensor, nn

from mindspore.train import ModelCheckpoint, CheckpointConfig, TimeMonitor, LossMonitor, Model, Top1CategoricalAccuracy, Top5CategoricalAccuracy

def train():

mindspore.set_context(mode=mindspore.PYNATIVE_MODE, device_target="Ascend")

# net = ShuffleNetV1(model_size="2.0x", n_class=10)

net = ShuffleNetV1(model_size="0.5x", n_class=10)

loss = nn.CrossEntropyLoss(weight=None, reduction='mean', label_smoothing=0.1)

min_lr = 0.0005

base_lr = 0.05

lr_scheduler = mindspore.nn.cosine_decay_lr(min_lr,

base_lr,

batches_per_epoch*250,

batches_per_epoch,

decay_epoch=250)

lr = Tensor(lr_scheduler[-1])

optimizer = nn.Momentum(params=net.trainable_params(), learning_rate=lr, momentum=0.9, weight_decay=0.00004, loss_scale=1024)

loss_scale_manager = ms.amp.FixedLossScaleManager(1024, drop_overflow_update=False)

model = Model(net, loss_fn=loss, optimizer=optimizer, amp_level="O3", loss_scale_manager=loss_scale_manager)

callback = [TimeMonitor(), LossMonitor()]

save_ckpt_path = "./"

config_ckpt = CheckpointConfig(save_checkpoint_steps=batches_per_epoch, keep_checkpoint_max=5)

ckpt_callback = ModelCheckpoint("shufflenetv1", directory=save_ckpt_path, config=config_ckpt)

callback += [ckpt_callback]

print("============== Starting Training ==============")

start_time = time.time()

# 由于时间原因,epoch = 5,可根据需求进行调整

model.train(5, dataset, callbacks=callback)

use_time = time.time() - start_time

hour = str(int(use_time // 60 // 60))

minute = str(int(use_time // 60 % 60))

second = str(int(use_time % 60))

print("total time:" + hour + "h " + minute + "m " + second + "s")

print("============== Train Success ==============")

if __name__ == '__main__':

train()

model size is 0.5x

============== Starting Training ==============

epoch: 1 step: 1, loss is 2.602555274963379

epoch: 1 step: 2, loss is 2.5641419887542725

epoch: 1 step: 3, loss is 2.5605194568634033

epoch: 1 step: 4, loss is 2.445266008377075

epoch: 1 step: 5, loss is 2.4659340381622314

epoch: 1 step: 6, loss is 2.4339487552642822

epoch: 1 step: 7, loss is 2.3650155067443848

epoch: 1 step: 8, loss is 2.352776050567627

epoch: 1 step: 9, loss is 2.3119568824768066

epoch: 1 step: 10, loss is 2.297975778579712

epoch: 1 step: 11, loss is 2.2929701805114746

epoch: 1 step: 12, loss is 2.236536741256714

epoch: 1 step: 13, loss is 2.40505313873291

epoch: 1 step: 14, loss is 2.3632290363311768

epoch: 1 step: 15, loss is 2.427211284637451

epoch: 1 step: 16, loss is 2.389260768890381

epoch: 1 step: 17, loss is 2.278745651245117

epoch: 1 step: 18, loss is 2.3015830516815186

epoch: 1 step: 19, loss is 2.2679598331451416

epoch: 1 step: 20, loss is 2.251993417739868

epoch: 1 step: 21, loss is 2.2501304149627686

epoch: 1 step: 22, loss is 2.2664272785186768

epoch: 1 step: 23, loss is 2.268998384475708

epoch: 1 step: 24, loss is 2.249323606491089

epoch: 1 step: 25, loss is 2.2754223346710205

epoch: 1 step: 26, loss is 2.2544331550598145

epoch: 1 step: 27, loss is 2.2413394451141357

epoch: 1 step: 28, loss is 2.310964822769165

epoch: 1 step: 190, loss is 1.9756882190704346

epoch: 1 step: 191, loss is 2.0467123985290527

epoch: 1 step: 192, loss is 2.015138626098633

epoch: 1 step: 193, loss is 2.0590052604675293

epoch: 1 step: 194, loss is 2.08339786529541

epoch: 1 step: 195, loss is 2.0886242389678955

epoch: 1 step: 196, loss is 2.0785837173461914

…

…

epoch: 5 step: 26, loss is 1.7299295663833618

epoch: 5 step: 27, loss is 1.7681633234024048

epoch: 5 step: 28, loss is 1.6620925664901733

epoch: 5 step: 29, loss is 1.6640541553497314

epoch: 5 step: 30, loss is 1.700564980506897

epoch: 5 step: 31, loss is 1.7993314266204834

epoch: 5 step: 32, loss is 1.7511837482452393

epoch: 5 step: 33, loss is 1.7358088493347168

epoch: 5 step: 34, loss is 1.8399680852890015

epoch: 5 step: 35, loss is 1.8288452625274658

epoch: 5 step: 36, loss is 1.760751724243164

epoch: 5 step: 37, loss is 1.8667253255844116

epoch: 5 step: 38, loss is 1.7133476734161377

epoch: 5 step: 39, loss is 1.766150712966919

epoch: 5 step: 40, loss is 1.7172778844833374

epoch: 5 step: 41, loss is 1.6493042707443237

epoch: 5 step: 42, loss is 1.706695795059204

epoch: 5 step: 43, loss is 1.7643200159072876

epoch: 5 step: 44, loss is 1.8378987312316895

epoch: 5 step: 45, loss is 1.6942284107208252

epoch: 5 step: 46, loss is 1.6833163499832153

epoch: 5 step: 47, loss is 1.7402489185333252

epoch: 5 step: 48, loss is 1.642223834991455

epoch: 5 step: 49, loss is 1.6894333362579346

epoch: 5 step: 50, loss is 1.7403620481491089

epoch: 5 step: 51, loss is 1.714734673500061

epoch: 5 step: 52, loss is 1.5632680654525757

Train epoch time: 127445.385 ms, per step time: 326.783 ms

total time:0h 16m 20s

============== Train Success ==============

训练好的模型保存在当前目录的shufflenetv1-5_390.ckpt中,用作评估。

模型评估

在CIFAR-10的测试集上对模型进行评估。

设置好评估模型的路径后加载数据集,并设置Top 1, Top 5的评估标准,最后用model.eval()接口对模型进行评估。

from mindspore import load_checkpoint, load_param_into_net

def test():

mindspore.set_context(mode=mindspore.GRAPH_MODE, device_target="Ascend")

dataset = get_dataset("./dataset/cifar-10-batches-bin", 128, "test")

net = ShuffleNetV1(model_size="2.0x", n_class=10)

param_dict = load_checkpoint("shufflenetv1-5_390.ckpt")

load_param_into_net(net, param_dict)

net.set_train(False)

loss = nn.CrossEntropyLoss(weight=None, reduction='mean', label_smoothing=0.1)

eval_metrics = {'Loss': nn.Loss(), 'Top_1_Acc': Top1CategoricalAccuracy(),

'Top_5_Acc': Top5CategoricalAccuracy()}

model = Model(net, loss_fn=loss, metrics=eval_metrics)

start_time = time.time()

res = model.eval(dataset, dataset_sink_mode=False)

use_time = time.time() - start_time

hour = str(int(use_time // 60 // 60))

minute = str(int(use_time // 60 % 60))

second = str(int(use_time % 60))

log = "result:" + str(res) + ", ckpt:'" + "./shufflenetv1-5_390.ckpt" \

+ "', time: " + hour + "h " + minute + "m " + second + "s"

print(log)

filename = './eval_log.txt'

with open(filename, 'a') as file_object:

file_object.write(log + '\n')

if __name__ == '__main__':

test()

model size is 2.0x

[ERROR] CORE(16936,ffff9fb5a930,python):2024-07-06-04:53:44.572.359 [mindspore/core/utils/file_utils.cc:253] GetRealPath] Get realpath failed, path[/tmp/ipykernel_16936/3162391481.py]

…

result:{'Loss': 1.5386667603101485, 'Top_1_Acc': 0.5278445512820513, 'Top_5_Acc': 0.9424078525641025}, ckpt:'./shufflenetv1-5_390.ckpt', time: 0h 0m 52s

模型预测

在CIFAR-10的测试集上对模型进行预测,并将预测结果可视化。

import mindspore

import matplotlib.pyplot as plt

import mindspore.dataset as ds

net = ShuffleNetV1(model_size="2.0x", n_class=10)

show_lst = []

param_dict = load_checkpoint("shufflenetv1-5_390.ckpt")

load_param_into_net(net, param_dict)

model = Model(net)

dataset_predict = ds.Cifar10Dataset(dataset_dir="./dataset/cifar-10-batches-bin", shuffle=False, usage="train")

dataset_show = ds.Cifar10Dataset(dataset_dir="./dataset/cifar-10-batches-bin", shuffle=False, usage="train")

dataset_show = dataset_show.batch(16)

show_images_lst = next(dataset_show.create_dict_iterator())["image"].asnumpy()

image_trans = [

vision.RandomCrop((32, 32), (4, 4, 4, 4)),

vision.RandomHorizontalFlip(prob=0.5),

vision.Resize((224, 224)),

vision.Rescale(1.0 / 255.0, 0.0),

vision.Normalize([0.4914, 0.4822, 0.4465], [0.2023, 0.1994, 0.2010]),

vision.HWC2CHW()

]

dataset_predict = dataset_predict.map(image_trans, 'image')

dataset_predict = dataset_predict.batch(16)

class_dict = {0:"airplane", 1:"automobile", 2:"bird", 3:"cat", 4:"deer", 5:"dog", 6:"frog", 7:"horse", 8:"ship", 9:"truck"}

# 推理效果展示(上方为预测的结果,下方为推理效果图片)

plt.figure(figsize=(16, 5))

predict_data = next(dataset_predict.create_dict_iterator())

output = model.predict(ms.Tensor(predict_data['image']))

pred = np.argmax(output.asnumpy(), axis=1)

index = 0

for image in show_images_lst:

plt.subplot(2, 8, index+1)

plt.title('{}'.format(class_dict[pred[index]]))

index += 1

plt.imshow(image)

plt.axis("off")

plt.show()

![[数据结构] 排序#插入排序希尔排序](https://i-blog.csdnimg.cn/direct/570343accdbd428bb9d9948693d69c05.png)

![[FreeRTOS 功能应用] 互斥量 功能应用](https://i-blog.csdnimg.cn/direct/9e9f8c31a549452298de10935da1e7ce.png)