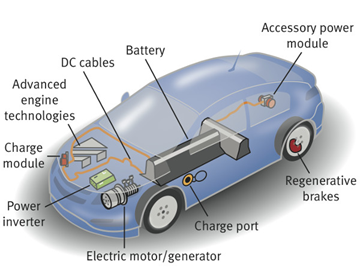

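This post shows how to use the Converse API, recently launched in Amazon Bedrock, to simplify interactions with different large language models (LLMs). The API provides one consistent interface for calling different models, which removes the repetitive work of writing complex helper functions yourself. The examples show how much simpler this is than the earlier approach of integrating each model separately. The post also includes complete code that uses the Converse API to call the Claude 3 Sonnet model for multimodal image description.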

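To help developers get up to speed with the new Converse API, I first show how, before the Converse API was released, developers had to write code to call multiple large models behind a single unified interface. I then use Converse API sample code to show how it takes over the work of unifying multi-model interaction. Finally, I show how to use the Converse API to call the Claude 3 Sonnet model to analyze two street-view photos taken in beautiful Hong Kong, China.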

This post is adapted from the technical blog post “Streamlining Large Language Model Interactions with Amazon Bedrock Converse API”, which I published on the Amazon Web Services developer community in June 2024.

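The World Before the Converse API

In the past, developers had to write complex helper functions to reconcile the different input and output formats of different large language models. For example, at the Amazon Web Services Hong Kong Summit in early May 2024, calling five or six different large language models through Amazon Bedrock from a single file took me 116 lines of code to implement this unified interface.

I wrote the function in Python; implementations in other languages look much the same. Before the Converse API, developers had to write their own helper functions to call the different large language models that Amazon Bedrock offers from different providers (Anthropic, Mistral, AI21, Amazon, Cohere, Meta, and others).

The “invoke_model” function in my code below takes a prompt, a model name, and various configuration parameters (for example temperature, top-k, top-p, and stop sequences), and returns the output text generated by the specified model.

The helper function had to account for each provider's prompt format requirements before it could send the input data and prompt structure that a particular model expects. The code is shown below: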

    import json

    import boto3


    def invoke_model(client, prompt, model,
                     accept='application/json', content_type='application/json',
                     max_tokens=512, temperature=1.0, top_p=1.0, top_k=200, stop_sequences=[],
                     count_penalty=0, presence_penalty=0, frequency_penalty=0, return_likelihoods='NONE'):
        # default response
        output = ''
        # identify the model provider
        provider = model.split('.')[0]
        # InvokeModel
        if (provider == 'anthropic'):
            input = {
                'prompt': prompt,
                'max_tokens_to_sample': max_tokens,
                'temperature': temperature,
                'top_k': top_k,
                'top_p': top_p,
                'stop_sequences': stop_sequences
            }
            body = json.dumps(input)
            response = client.invoke_model(body=body, modelId=model, accept=accept, contentType=content_type)
            response_body = json.loads(response.get('body').read())
            output = response_body['completion']
        elif (provider == 'mistral'):
            input = {
                'prompt': prompt,
                'max_tokens': max_tokens,
                'temperature': temperature,
                'top_k': top_k,
                'top_p': top_p,
                'stop': stop_sequences
            }
            body = json.dumps(input)
            response = client.invoke_model(body=body, modelId=model, accept=accept, contentType=content_type)
            response_body = json.loads(response.get('body').read())
            results = response_body['outputs']
            for result in results:
                output = output + result['text']
        elif (provider == 'ai21'):
            input = {
                'prompt': prompt,
                'maxTokens': max_tokens,
                'temperature': temperature,
                'topP': top_p,
                'stopSequences': stop_sequences,
                'countPenalty': {'scale': count_penalty},
                'presencePenalty': {'scale': presence_penalty},
                'frequencyPenalty': {'scale': frequency_penalty}
            }
            body = json.dumps(input)
            response = client.invoke_model(body=body, modelId=model, accept=accept, contentType=content_type)
            response_body = json.loads(response.get('body').read())
            completions = response_body['completions']
            for part in completions:
                output = output + part['data']['text']
        elif (provider == 'amazon'):
            input = {
                'inputText': prompt,
                'textGenerationConfig': {
                    'maxTokenCount': max_tokens,
                    'stopSequences': stop_sequences,
                    'temperature': temperature,
                    'topP': top_p
                }
            }
            body = json.dumps(input)
            response = client.invoke_model(body=body, modelId=model, accept=accept, contentType=content_type)
            response_body = json.loads(response.get('body').read())
            results = response_body['results']
            for result in results:
                output = output + result['outputText']
        elif (provider == 'cohere'):
            input = {
                'prompt': prompt,
                'max_tokens': max_tokens,
                'temperature': temperature,
                'k': top_k,
                'p': top_p,
                'stop_sequences': stop_sequences,
                'return_likelihoods': return_likelihoods
            }
            body = json.dumps(input)
            response = client.invoke_model(body=body, modelId=model, accept=accept, contentType=content_type)
            response_body = json.loads(response.get('body').read())
            results = response_body['generations']
            for result in results:
                output = output + result['text']
        elif (provider == 'meta'):
            input = {
                'prompt': prompt,
                'max_gen_len': max_tokens,
                'temperature': temperature,
                'top_p': top_p
            }
            body = json.dumps(input)
            response = client.invoke_model(body=body, modelId=model, accept=accept, contentType=content_type)
            response_body = json.loads(response.get('body').read())
            output = response_body['generation']
        # return
        return output


    # main function
    bedrock = boto3.client(
        service_name='bedrock-runtime'
    )

    model = 'mistral.mistral-7b-instruct-v0:2'

    prompt = """

    Human: Explain how chicken swim to an 8 year old using 2 paragraphs.

    Assistant:

    """

    output = invoke_model(client=bedrock, prompt=prompt, model=model)

    print(output)

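The code above covers only the interface implementation for these few models; as the number of models to be called through the unified interface grows, so does the code. The complete code is available here: https://catalog.us-east-1.prod.workshops.aws/workshops/5501fb48-e04b-476d-89b0-43a7ecaf1595/en-US/full-day-event-fm-and-embedding/fm/03-making-things-simpler?trk=cndc-detail

The World With the Converse API

The following snippet, from the official Amazon Web Services website, shows how simple it is to call a large language model with the Amazon Bedrock Converse API: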

    def generate_conversation(bedrock_client,
                              model_id,
                              system_text,
                              input_text):
        ……
        # Send the message.
        response = bedrock_client.converse(
            modelId=model_id,
            messages=messages,
            system=system_prompts,
            inferenceConfig=inference_config,
            additionalModelRequestFields=additional_model_fields
        )
        ……

The complete code is available at the following link: https://docs.aws.amazon.com/bedrock/latest/userguide/conversation-inference.html#message-inference-examples?trk=cndc-detail
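
To make the excerpt above more concrete, here is a minimal sketch of a text-only call. It is not the official sample: the helper names build_converse_request() and converse_text() and the default inference values are assumptions made for illustration. The converse() keyword arguments (modelId, messages, system, inferenceConfig) are the ones used in the excerpt.

```python
def build_converse_request(model_id, input_text, system_text=None,
                           max_tokens=512, temperature=0.5, top_p=0.9):
    """Assemble keyword arguments for bedrock_client.converse().

    The default values are illustrative assumptions, not recommendations.
    """
    request = {
        "modelId": model_id,
        # One user turn; each content block is a dict such as {"text": ...}.
        "messages": [{"role": "user", "content": [{"text": input_text}]}],
        # Inference settings use the same key names for every provider.
        "inferenceConfig": {
            "maxTokens": max_tokens,
            "temperature": temperature,
            "topP": top_p,
        },
    }
    if system_text:
        # System prompts are passed as a list of text blocks.
        request["system"] = [{"text": system_text}]
    return request


def converse_text(bedrock_client, model_id, input_text, **kwargs):
    """Send one user message and return the concatenated text of the reply."""
    request = build_converse_request(model_id, input_text, **kwargs)
    response = bedrock_client.converse(**request)
    blocks = response["output"]["message"]["content"]
    # Join only the text blocks; blocks of other types are skipped.
    return "".join(block.get("text", "") for block in blocks)
```

With a real client created by boto3.client("bedrock-runtime"), the same converse_text() call can be repeated for each model ID, for example the two that appear in this post. Support for optional features such as system prompts varies by model, which is why system_text is optional in this sketch.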

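To give developers a complete code example of calling a large model with the Converse API, I designed the following task: have a large model describe street views of Causeway Bay, Hong Kong.

The example uses the converse() method to send text and an image to the Claude 3 Sonnet model. The code reads an image file, builds the message payload from the text prompt and the image bytes, and then prints the model's description of the scene. To test with a different image, just update the input file path.

The input consists of two photos, shown below. They are beautiful street views of Causeway Bay, Hong Kong, which I took through my window while writing this article: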

Causeway Bay Street View, Hong Kong (Image 1)

Causeway Bay Street View, Hong Kong (Image 2)

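For the core code, I lightly modified the sample code from the official Amazon Web Services website mentioned earlier to write a new generate_conversation_with_image() function, and call it at the appropriate place in main(). The complete code is shown below: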

    import logging

    import boto3
    from botocore.exceptions import ClientError

    logger = logging.getLogger(__name__)


    def generate_conversation_with_image(bedrock_client,
                                         model_id,
                                         input_text,
                                         input_image):
        """
        Sends a message to a model.
        Args:
            bedrock_client: The Boto3 Bedrock runtime client.
            model_id (str): The model ID to use.
            input_text (str): The input message.
            input_image (str): Path to the input image file.
        Returns:
            response (JSON): The conversation that the model generated.
        """
        logger.info("Generating message with model %s", model_id)

        # Message to send.
        with open(input_image, "rb") as f:
            image = f.read()

        message = {
            "role": "user",
            "content": [
                {
                    "text": input_text
                },
                {
                    "image": {
                        # Must match the file type; the sample image is a JPEG.
                        "format": 'jpeg',
                        "source": {
                            "bytes": image
                        }
                    }
                }
            ]
        }
        messages = [message]

        # Send the message.
        response = bedrock_client.converse(
            modelId=model_id,
            messages=messages
        )
        return response


    def main():
        """
        Entrypoint for Anthropic Claude 3 Sonnet example.
        """
        logging.basicConfig(level=logging.INFO,
                            format="%(levelname)s: %(message)s")

        model_id = "anthropic.claude-3-sonnet-20240229-v1:0"
        input_text = "What's in this image?"
        input_image = "IMG_1_Haowen.jpg"

        try:
            bedrock_client = boto3.client(service_name="bedrock-runtime")
            response = generate_conversation_with_image(
                bedrock_client, model_id, input_text, input_image)

            output_message = response['output']['message']
            print(f"Role: {output_message['role']}")
            for content in output_message['content']:
                print(f"Text: {content['text']}")

            token_usage = response['usage']
            print(f"Input tokens: {token_usage['inputTokens']}")
            print(f"Output tokens: {token_usage['outputTokens']}")
            print(f"Total tokens: {token_usage['totalTokens']}")
            print(f"Stop reason: {response['stopReason']}")

        except ClientError as err:
            message = err.response['Error']['Message']
            logger.error("A client error occurred: %s", message)
            print(f"A client error occurred: {message}")

        else:
            print(
                f"Finished generating text with model {model_id}.")


    if __name__ == "__main__":
        main()
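
The response handling in main() can also be factored into a small helper. The sketch below assumes only the response fields that the code above already reads (output.message, usage, stopReason); the function name summarize_response() is hypothetical. Unlike the loop in main(), it skips content blocks that have no text key instead of raising KeyError.

```python
def summarize_response(response):
    """Flatten a Converse response dict into the fields printed by main()."""
    message = response["output"]["message"]
    # Keep only text blocks; blocks without a "text" key are ignored.
    texts = [block["text"] for block in message["content"] if "text" in block]
    usage = response.get("usage", {})
    return {
        "role": message["role"],
        "text": "\n".join(texts),
        "input_tokens": usage.get("inputTokens"),
        "output_tokens": usage.get("outputTokens"),
        "total_tokens": usage.get("totalTokens"),
        "stop_reason": response.get("stopReason"),
    }
```

Returning a plain dict keeps the printing, logging, or storage decision with the caller, so the helper can be exercised with a hand-built response and no AWS credentials.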

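For the first Causeway Bay street-view photo, I got the following output from the Claude 3 Sonnet model. For readers' convenience, the model's output is reproduced here as text: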

Role: assistant

Text: This image shows a dense urban cityscape with numerous high-rise residential and office buildings in Hong Kong. In the foreground, there are sports facilities like a running track, soccer/football fields, and tennis/basketball courts surrounded by the towering skyscrapers of the city. The sports venues provide open green spaces amidst the densely packed urban environment. The scene captures the juxtaposition of modern city living and recreational amenities in a major metropolitan area like Hong Kong.

Input tokens: 1580

Output tokens: 103

Total tokens: 1683

Stop reason: end_turn

Finished generating text with model anthropic.claude-3-sonnet-20240229-v1:0.

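For the second Causeway Bay street-view photo, I simply changed the input_image path in the code to the new image path. With that photo as the new input, Claude 3 Sonnet returned the following output, again reproduced here as text for readers' convenience: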

Role: assistant

Text: This image shows an aerial view of a dense urban city skyline, likely in a major metropolitan area. The cityscape is dominated by tall skyscrapers and high-rise apartment or office buildings of varying architectural styles, indicating a highly developed and populous city center.

In the foreground, a major highway or expressway can be seen cutting through the city, with multiple lanes of traffic visible, though the traffic appears relatively light in this particular view. There are also some pockets of greenery interspersed among the buildings, such as parks or green spaces.

One notable feature is a large billboard or advertisement for the luxury brand Chanel prominently displayed on the side of a building, suggesting this is a commercial and shopping district.

Overall, the image captures the concentrated urban density, modern infrastructure, and mixture of residential, commercial, and transportation elements characteristic of a major cosmopolitan city.

Input tokens: 1580

Output tokens: 188

Total tokens: 1768

Stop reason: end_turn

Finished generating text with model anthropic.claude-3-sonnet-20240229-v1:0.
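
When switching images as described above, the format field of the image block also has to match the actual file type (a .jpg file is jpeg, not png). Here is a minimal sketch that infers it from the file extension. The helper names image_format_for() and build_image_message() are hypothetical, and the extension map assumes the four image formats that the Converse API documentation lists (png, jpeg, gif, webp).

```python
from pathlib import Path

# Assumed mapping from file extension to the Converse image "format" value.
_FORMATS = {
    ".png": "png",
    ".jpg": "jpeg",
    ".jpeg": "jpeg",
    ".gif": "gif",
    ".webp": "webp",
}


def image_format_for(path):
    """Return the Converse image format for a file path, based on its extension."""
    suffix = Path(path).suffix.lower()
    if suffix not in _FORMATS:
        raise ValueError(f"Unsupported image type: {path}")
    return _FORMATS[suffix]


def build_image_message(input_text, image_path):
    """Build a user message containing a text prompt and one image."""
    image_bytes = Path(image_path).read_bytes()
    return {
        "role": "user",
        "content": [
            {"text": input_text},
            {
                "image": {
                    "format": image_format_for(image_path),
                    "source": {"bytes": image_bytes},
                }
            },
        ],
    }
```

The returned dict has the same shape as the message built inside generate_conversation_with_image(), so it could be passed as messages=[build_image_message(...)] to converse().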

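Summary

The new Converse API in Amazon Bedrock simplifies interaction with different large language models by providing one consistent interface, with no need to write a model-specific implementation for each of them. Previously, developers had to write complex helper functions, running to a hundred or more lines of code, to unify the input and output formats of the individual models. The Converse API lets you call different large language models through the same API, which greatly reduces code complexity.

The code examples in this post contrast the simplicity of the Converse API with the earlier approach, which required a separate integration for each model provider. The second code example focuses on calling the Claude 3 Sonnet model through the Converse API for image description.

Overall, the Converse API simplifies working with different large language models in Amazon Bedrock. Its consistent interface substantially reduces development effort, so developers of generative AI applications can focus on the innovation and imagination specific to their own business.

Note: The cover image of this blog post was generated by the Stable Diffusion SDXL 1.0 model on Amazon Bedrock.

The English prompt given to the Stable Diffusion SDXL 1.0 model is shown below for reference: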

“a developer sitting in the cafe, comic, graphic illustration, comic art, graphic novel art, vibrant, highly detailed, colored, 2d minimalistic”

Source: Simplifying Large Language Model Interactions with the Amazon Bedrock Converse API