系列文章目录

文章目录

- 系列文章目录

- 前言

- 一、本文要点

- 二、开发环境

- 三、原项目

- 四、修改项目

- 五、测试一下

- 五、小结

前言

本插件稳定运行上百个kafka项目,每天处理上亿级的数据的精简小插件,快速上手。

<dependency>

<groupId>io.github.vipjoey</groupId>

<artifactId>multi-kafka-consumer-starter</artifactId>

<version>最新版本号</version>

</dependency>

例如下面这样简单的配置就完成SpringBoot和kafka的整合,我们只需要关心com.mmc.multi.kafka.starter.OneProcessor和com.mmc.multi.kafka.starter.TwoProcessor 这两个Service的代码开发。

## topic1的kafka配置

spring.kafka.one.enabled=true

spring.kafka.one.consumer.bootstrapServers=${spring.embedded.kafka.brokers}

spring.kafka.one.topic=mmc-topic-one

spring.kafka.one.group-id=group-consumer-one

spring.kafka.one.processor=com.mmc.multi.kafka.starter.OneProcessor // 业务处理类名称

spring.kafka.one.consumer.auto-offset-reset=latest

spring.kafka.one.consumer.max-poll-records=10

spring.kafka.one.consumer.value-deserializer=org.apache.kafka.common.serialization.StringDeserializer

spring.kafka.one.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer

## topic2的kafka配置

spring.kafka.two.enabled=true

spring.kafka.two.consumer.bootstrapServers=${spring.embedded.kafka.brokers}

spring.kafka.two.topic=mmc-topic-two

spring.kafka.two.group-id=group-consumer-two

spring.kafka.two.processor=com.mmc.multi.kafka.starter.TwoProcessor // 业务处理类名称

spring.kafka.two.consumer.auto-offset-reset=latest

spring.kafka.two.consumer.max-poll-records=10

spring.kafka.two.consumer.value-deserializer=org.apache.kafka.common.serialization.StringDeserializer

spring.kafka.two.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer

## pb 消息消费者

spring.kafka.pb.enabled=true

spring.kafka.pb.consumer.bootstrapServers=${spring.embedded.kafka.brokers}

spring.kafka.pb.topic=mmc-topic-pb

spring.kafka.pb.group-id=group-consumer-pb

spring.kafka.pb.processor=pbProcessor

spring.kafka.pb.consumer.auto-offset-reset=latest

spring.kafka.pb.consumer.max-poll-records=10

spring.kafka.pb.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer

spring.kafka.pb.consumer.value-deserializer=org.apache.kafka.common.serialization.ByteArrayDeserializer

国籍惯例,先上源码:Github源码

一、本文要点

本文将介绍通过封装一个starter,来实现多kafka数据源的配置,通过通过源码,可以学习以下特性。系列文章完整目录

- SpringBoot 整合多个kafka数据源

- SpringBoot 批量消费kafka消息

- SpringBoot 优雅地启动或停止消费kafka

- SpringBoot kafka本地单元测试(免集群)

- SpringBoot 利用map注入多份配置

- SpringBoot BeanPostProcessor 后置处理器使用方式

- SpringBoot 将自定义类注册到IOC容器

- SpringBoot 注入bean到自定义类成员变量

- Springboot 取消限定符

- Springboot 支持消费protobuf类型的kafka消息

二、开发环境

- jdk 1.8

- maven 3.6.2

- springboot 2.4.3

- kafka-client 2.6.6

- idea 2020

三、原项目

1、接前文,我们开发了一个kafka插件,但在使用过程中发现有些不方便的地方,在公共接口MmcKafkaStringInputer 显示地继承了BatchMessageListener<String, String>,导致我们没办法去指定消费protobuf类型的message。

public interface MmcKafkaStringInputer extends MmcInputer, BatchMessageListener<String, String> {

}

/**

* 消费kafka消息.

*/

@Override

public void onMessage(List<ConsumerRecord<String, String>> records) {

if (null == records || CollectionUtils.isEmpty(records)) {

log.warn("{} records is null or records.value is empty.", name);

return;

}

Assert.hasText(name, "You must pass the field `name` to the Constructor or invoke the setName() after the class was created.");

Assert.notNull(properties, "You must pass the field `properties` to the Constructor or invoke the setProperties() after the class was created.");

try {

Stream<T> dataStream = records.stream()

.map(ConsumerRecord::value)

.flatMap(this::doParse)

.filter(Objects::nonNull)

.filter(this::isRightRecord);

// 支持配置强制去重或实现了接口能力去重

if (properties.isDuplicate() || isSubtypeOfInterface(MmcKafkaMsg.class)) {

// 检查是否实现了去重接口

if (!isSubtypeOfInterface(MmcKafkaMsg.class)) {

throw new RuntimeException("The interface "

+ MmcKafkaMsg.class.getName() + " is not implemented if you set the config `spring.kafka.xxx.duplicate=true` .");

}

dataStream = dataStream.collect(Collectors.groupingBy(this::buildRoutekey))

.entrySet()

.stream()

.map(this::findLasted)

.filter(Objects::nonNull);

}

List<T> datas = dataStream.collect(Collectors.toList());

if (CommonUtil.isNotEmpty(datas)) {

this.dealMessage(datas);

}

} catch (Exception e) {

log.error(name + "-dealMessage error ", e);

}

}

2、由于实现了BatchMessageListener<String, String>接口,抽象父类必须实现onMessage(List<ConsumerRecord<String, String>> records)方法,这样会导致子类局限性很大,没办法去实现其它kafka的xxxListener接口,例如手工提交offset,单条消息消费等。

因此、所以我们要升级和优化。

四、修改项目

1、新增KafkaAbastrctProcessor抽象父类,直接实现MmcInputer接口,要求所有子类都需要继承本类,子类通过调用{@link #receiveMessage(List)} 模板方法来实现通用功能;

@Slf4j

@Setter

abstract class KafkaAbstractProcessor<T> implements MmcInputer {

// 类的内容基本和MmcKafkaKafkaAbastrctProcessor保持一致

// 主要修改了doParse方法,目的是让子类可以自定义解析protobuf

/**

* 将kafka消息解析为实体,支持json对象或者json数组.

*

* @param msg kafka消息

* @return 实体类

*/

protected Stream<T> doParse(ConsumerRecord<String, Object> msg) {

// 消息对象

Object record = msg.value();

// 如果是pb格式

if (record instanceof byte[]) {

return doParseProtobuf((byte[]) record);

} else if (record instanceof String) {

// 普通kafka消息

String json = record.toString();

if (json.startsWith("[")) {

// 数组

List<T> datas = doParseJsonArray(json);

if (CommonUtil.isEmpty(datas)) {

log.warn("{} doParse error, json={} is error.", name, json);

return Stream.empty();

}

// 反序列对象后,做一些初始化操作

datas = datas.stream().peek(this::doAfterParse).collect(Collectors.toList());

return datas.stream();

} else {

// 对象

T data = doParseJsonObject(json);

if (null == data) {

log.warn("{} doParse error, json={} is error.", name, json);

return Stream.empty();

}

// 反序列对象后,做一些初始化操作

doAfterParse(data);

return Stream.of(data);

}

} else if (record instanceof MmcKafkaMsg) {

// 如果本身就是PandoKafkaMsg对象,直接返回

//noinspection unchecked

return Stream.of((T) record);

} else {

throw new UnsupportedForMessageFormatException("not support message type");

}

}

/**

* 将json消息解析为实体.

*

* @param json kafka消息

* @return 实体类

*/

protected T doParseJsonObject(String json) {

if (properties.isSnakeCase()) {

return JsonUtil.parseSnackJson(json, getEntityClass());

} else {

return JsonUtil.parseJsonObject(json, getEntityClass());

}

}

/**

* 将json消息解析为数组.

*

* @param json kafka消息

* @return 数组

*/

protected List<T> doParseJsonArray(String json) {

if (properties.isSnakeCase()) {

try {

return JsonUtil.parseSnackJsonArray(json, getEntityClass());

} catch (Exception e) {

throw new RuntimeException(e);

}

} else {

return JsonUtil.parseJsonArray(json, getEntityClass());

}

}

/**

* 序列化为pb格式,假设你消费的是pb消息,需要自行实现这个类.

*

* @param record pb字节数组

* @return pb实体类流

*/

protected Stream<T> doParseProtobuf(byte[] record) {

throw new NotImplementedException();

}

}

2、修改MmcKafkaBeanPostProcessor类,暂存KafkaAbastrctProcessor的子类。

public class MmcKafkaBeanPostProcessor implements BeanPostProcessor {

@Getter

private final Map<String, KafkaAbstractProcessor<?>> suitableClass = new ConcurrentHashMap<>();

@Override

public Object postProcessAfterInitialization(Object bean, String beanName) throws BeansException {

if (bean instanceof KafkaAbstractProcessor) {

KafkaAbstractProcessor<?> target = (KafkaAbstractProcessor<?>) bean;

suitableClass.putIfAbsent(beanName, target);

suitableClass.putIfAbsent(bean.getClass().getName(), target);

}

return bean;

}

}

3、修改MmcKafkaProcessorFactory,更换构造的目标类为KafkaAbstractProcessor。

public class MmcKafkaProcessorFactory {

@Resource

private DefaultListableBeanFactory defaultListableBeanFactory;

public KafkaAbstractProcessor<? > buildInputer(

String name, MmcMultiKafkaProperties.MmcKafkaProperties properties,

Map<String, KafkaAbstractProcessor<? >> suitableClass) throws Exception {

// 如果没有配置process,则直接从注册的Bean里查找

if (!StringUtils.hasText(properties.getProcessor())) {

return findProcessorByName(name, properties.getProcessor(), suitableClass);

}

// 如果配置了process,则从指定配置中生成实例

// 判断给定的配置是类,还是bean名称

if (!isClassName(properties.getProcessor())) {

throw new IllegalArgumentException("It's not a class, wrong value of ${spring.kafka." + name + ".processor}.");

}

// 如果ioc容器已经存在该处理实例,则直接使用,避免既配置了process,又使用了@Service等注解

KafkaAbstractProcessor<? > inc = findProcessorByClass(name, properties.getProcessor(), suitableClass);

if (null != inc) {

return inc;

}

// 指定的processor处理类必须继承KafkaAbstractProcessor

Class<?> clazz = Class.forName(properties.getProcessor());

boolean isSubclass = KafkaAbstractProcessor.class.isAssignableFrom(clazz);

if (!isSubclass) {

throw new IllegalStateException(clazz.getName() + " is not subClass of KafkaAbstractProcessor.");

}

// 创建实例

Constructor<?> constructor = clazz.getConstructor();

KafkaAbstractProcessor<? > ins = (KafkaAbstractProcessor<? >) constructor.newInstance();

// 注入依赖的变量

defaultListableBeanFactory.autowireBean(ins);

return ins;

}

private KafkaAbstractProcessor<? > findProcessorByName(String name, String processor, Map<String,

KafkaAbstractProcessor<? >> suitableClass) {

return suitableClass.entrySet()

.stream()

.filter(e -> e.getKey().startsWith(name) || e.getKey().equalsIgnoreCase(processor))

.map(Map.Entry::getValue)

.findFirst()

.orElseThrow(() -> new RuntimeException("Can't found any suitable processor class for the consumer which name is " + name

+ ", please use the config ${spring.kafka." + name + ".processor} or set name of Bean like @Service(\"" + name + "Processor\") "));

}

private KafkaAbstractProcessor<? > findProcessorByClass(String name, String processor, Map<String,

KafkaAbstractProcessor<? >> suitableClass) {

return suitableClass.entrySet()

.stream()

.filter(e -> e.getKey().startsWith(name) || e.getKey().equalsIgnoreCase(processor))

.map(Map.Entry::getValue)

.findFirst()

.orElse(null);

}

private boolean isClassName(String processor) {

// 使用正则表达式验证类名格式

String regex = "^[a-zA-Z_$][a-zA-Z\\d_$]*([.][a-zA-Z_$][a-zA-Z\\d_$]*)*$";

return Pattern.matches(regex, processor);

}

}

4、修改MmcMultiConsumerAutoConfiguration,更换构造的目标类的父类为KafkaAbstractProcessor。

@Bean

public MmcKafkaInputerContainer mmcKafkaInputerContainer(MmcKafkaProcessorFactory factory,

MmcKafkaBeanPostProcessor beanPostProcessor) throws Exception {

Map<String, MmcInputer> inputers = new HashMap<>();

Map<String, MmcMultiKafkaProperties.MmcKafkaProperties> kafkas = mmcMultiKafkaProperties.getKafka();

// 逐个遍历,并生成consumer

for (Map.Entry<String, MmcMultiKafkaProperties.MmcKafkaProperties> entry : kafkas.entrySet()) {

// 唯一消费者名称

String name = entry.getKey();

// 消费者配置

MmcMultiKafkaProperties.MmcKafkaProperties properties = entry.getValue();

// 是否开启

if (properties.isEnabled()) {

// 生成消费者

KafkaAbstractProcessor inputer = factory.buildInputer(name, properties, beanPostProcessor.getSuitableClass());

// 输入源容器

ConcurrentMessageListenerContainer<Object, Object> container = concurrentMessageListenerContainer(properties);

// 设置容器

inputer.setContainer(container);

inputer.setName(name);

inputer.setProperties(properties);

// 设置消费者

container.setupMessageListener(inputer);

// 关闭时候停止消费

Runtime.getRuntime().addShutdownHook(new Thread(inputer::stop));

// 直接启动

container.start();

// 加入集合

inputers.put(name, inputer);

}

}

return new MmcKafkaInputerContainer(inputers);

}

5、修改MmcKafkaKafkaAbastrctProcessor,用于实现kafka的BatchMessageListener 接口,当然你也可以实现其它Listener接口,或者在这基础上扩展。

public abstract class MmcKafkaKafkaAbastrctProcessor<T> extends KafkaAbstractProcessor<T> implements BatchMessageListener<String, Object> {

@Override

public void onMessage(List<ConsumerRecord<String, Object>> records) {

if (null == records || CollectionUtils.isEmpty(records)) {

log.warn("{} records is null or records.value is empty.", name);

return;

}

receiveMessage(records);

}

}

五、测试一下

1、引入kafka测试需要的jar。参考文章:kafka单元测试

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.springframework.kafka</groupId>

<artifactId>spring-kafka-test</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>com.google.protobuf</groupId>

<artifactId>protobuf-java</artifactId>

<version>3.18.0</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>com.google.protobuf</groupId>

<artifactId>protobuf-java-util</artifactId>

<version>3.18.0</version>

<scope>test</scope>

</dependency>

2、定义一个pb类型消息和业务处理类。

(1) 定义pb,然后通过命令生成对应的实体类;

syntax = "proto2";

package com.mmc.multi.kafka;

option java_package = "com.mmc.multi.kafka.starter.proto";

option java_outer_classname = "DemoPb";

message PbMsg {

optional string routekey = 1;

optional string cosImgUrl = 2;

optional string base64str = 3;

}

(2)创建PbProcessor消息处理类,用于消费protobuf类型的消息;

@Slf4j

@Service("pbProcessor")

public class PbProcessor extends MmcKafkaKafkaAbastrctProcessor<DemoMsg> {

@Override

protected Stream<DemoMsg> doParseProtobuf(byte[] record) {

try {

DemoPb.PbMsg msg = DemoPb.PbMsg.parseFrom(record);

DemoMsg demo = new DemoMsg();

BeanUtils.copyProperties(msg, demo);

return Stream.of(demo);

} catch (InvalidProtocolBufferException e) {

log.error("parssPbError", e);

return Stream.empty();

}

}

@Override

protected void dealMessage(List<DemoMsg> datas) {

System.out.println("PBdatas: " + datas);

}

}

3、配置kafka地址和指定业务处理类。

## pb 消息消费者

spring.kafka.pb.enabled=true

spring.kafka.pb.consumer.bootstrapServers=${spring.embedded.kafka.brokers}

spring.kafka.pb.topic=mmc-topic-pb

spring.kafka.pb.group-id=group-consumer-pb

spring.kafka.pb.processor=pbProcessor

spring.kafka.pb.consumer.auto-offset-reset=latest

spring.kafka.pb.consumer.max-poll-records=10

spring.kafka.pb.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer

spring.kafka.pb.consumer.value-deserializer=org.apache.kafka.common.serialization.ByteArrayDeserializer

4、编写测试类。

@Slf4j

@ActiveProfiles("dev")

@ExtendWith(SpringExtension.class)

@SpringBootTest(classes = {MmcMultiConsumerAutoConfiguration.class, DemoService.class, PbProcessor.class})

@TestPropertySource(value = "classpath:application-pb.properties")

@DirtiesContext

@EmbeddedKafka(partitions = 1, brokerProperties = {"listeners=PLAINTEXT://localhost:9092", "port=9092"},

topics = {"${spring.kafka.pb.topic}"})

class KafkaPbMessageTest {

@Resource

private EmbeddedKafkaBroker embeddedKafkaBroker;

@Value("${spring.kafka.pb.topic}")

private String topicPb;

@Test

void testDealMessage() throws Exception {

Thread.sleep(2 * 1000);

// 模拟生产数据

produceMessage();

Thread.sleep(10 * 1000);

}

void produceMessage() {

Map<String, Object> configs = new HashMap<>(KafkaTestUtils.producerProps(embeddedKafkaBroker));

Producer<String, byte[]> producer = new DefaultKafkaProducerFactory<>(configs, new StringSerializer(), new ByteArraySerializer()).createProducer();

for (int i = 0; i < 10; i++) {

DemoPb.PbMsg msg = DemoPb.PbMsg.newBuilder()

.setCosImgUrl("http://google.com")

.setRoutekey("routekey-" + i).build();

producer.send(new ProducerRecord<>(topicPb, "my-aggregate-id", msg.toByteArray()));

producer.flush();

}

}

}

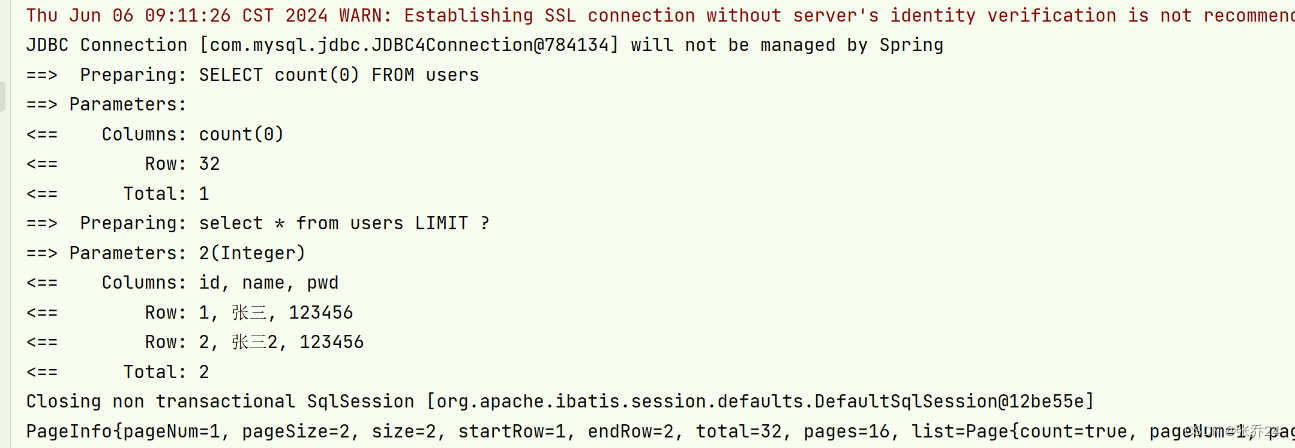

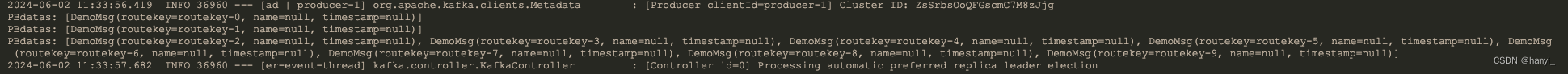

5、运行一下,测试通过。

五、小结

将本项目代码构建成starter,就可以大大提升我们开发效率,我们只需要关心业务代码的开发,github项目源码:轻触这里。如果对你有用可以打个星星哦。下一篇,升级本starter,在kafka单分区下实现十万级消费处理速度。

《搭建大型分布式服务(三十六)SpringBoot 零代码方式整合多个kafka数据源》

《搭建大型分布式服务(三十七)SpringBoot 整合多个kafka数据源-取消限定符》

《搭建大型分布式服务(三十八)SpringBoot 整合多个kafka数据源-支持protobuf》

《搭建大型分布式服务(三十九)SpringBoot 整合多个kafka数据源-支持Aware模式》

加我加群一起交流学习!更多干货下载、项目源码和大厂内推等着你

|  |