Table of Contents

- InternLM-Chat-1.8B Chat Demo

- Environment Setup

- Download the Model

- Run InternLM-Chat-1.8B

- Run the Bajie Web Demo

- Download the Model

- Run the Demo

InternLM-Chat-1.8B Chat Demo

Environment Setup

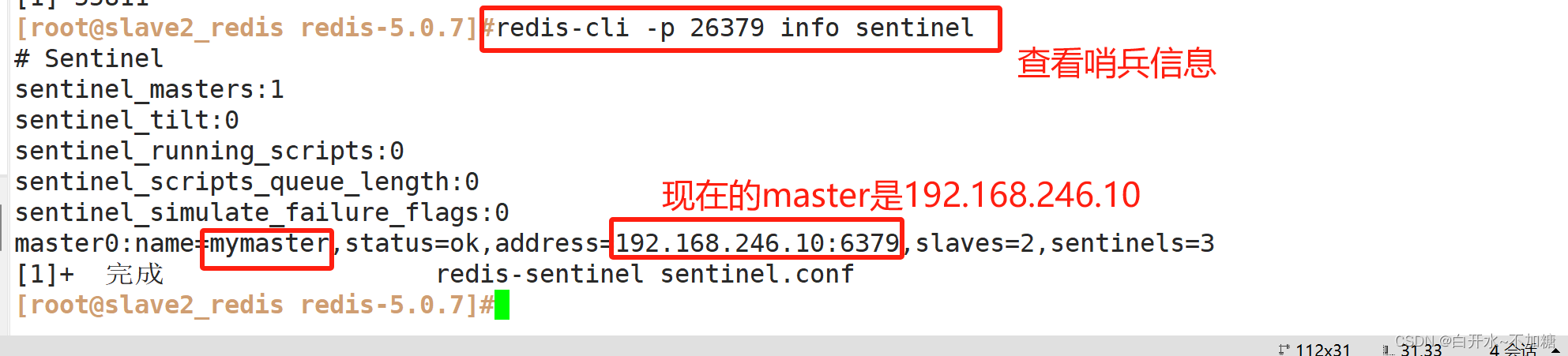

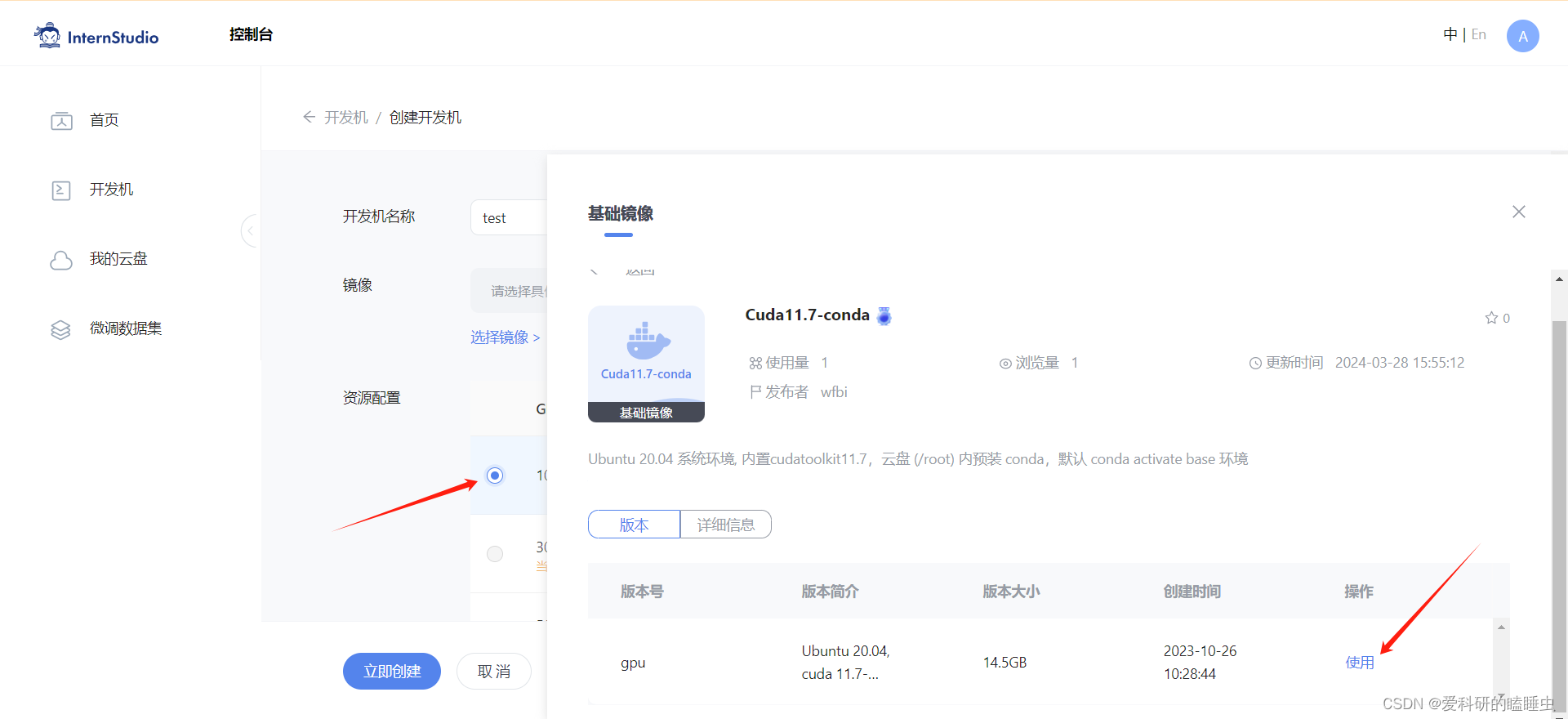

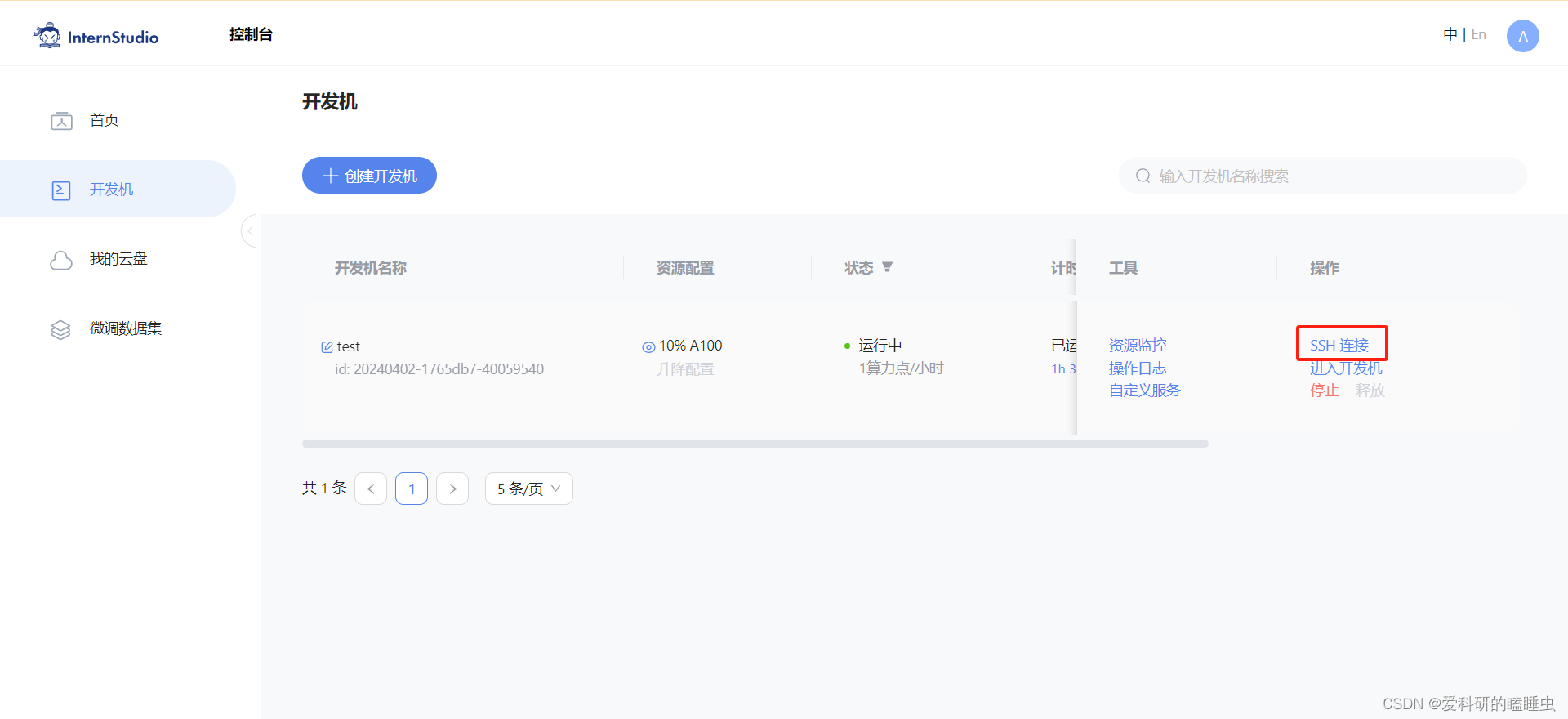

On the InternStudio platform, select the 10% A100 (1/4) configuration (platform resources are limited) and choose the Cuda11.7-conda image, as shown below:

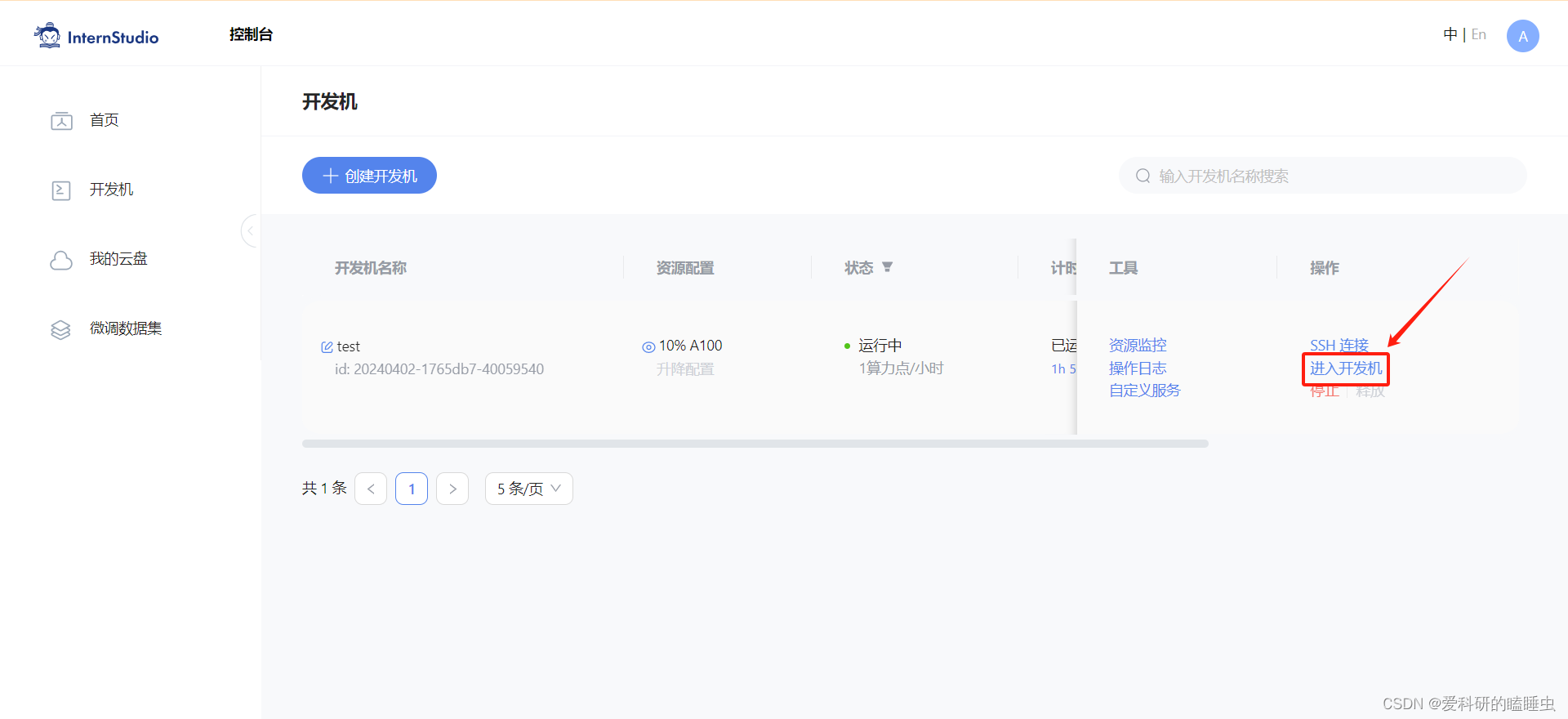

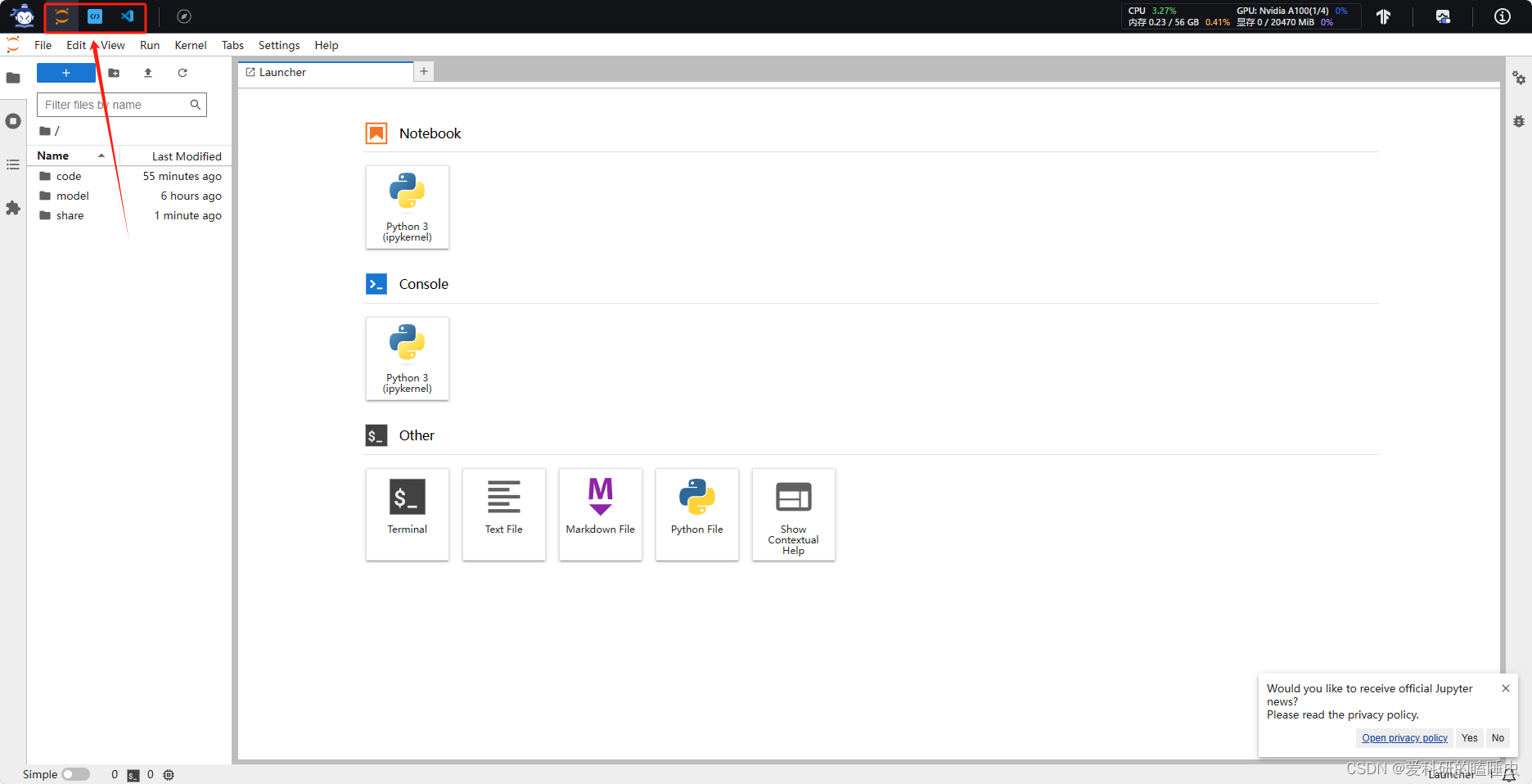

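Open the development machine you just rented. Once inside, you can switch between JupyterLab, the terminal, and VSCode from the top-left corner of the page.

- Configure the development environment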

Create a virtual environment with Python 3.10.13 and PyTorch 2.0.1:

    studio-conda -o internlm-base -t demo
    # Equivalent setup without studio-conda:
    # conda create -n demo python==3.10 -y
    # conda activate demo
    # conda install pytorch==2.0.1 torchvision==0.15.2 torchaudio==2.0.2 pytorch-cuda=11.7 -c pytorch -c nvidia
    # Or clone an existing PyTorch 2.0.1 environment directly:
    # conda create --name demo --clone=/root/share/conda_envs/internlm-base

Activate the demo environment:

    conda activate demo

Install the packages the demo depends on:

    # Upgrade pip
    python -m pip install --upgrade pip

    pip install huggingface-hub==0.17.3
    pip install transformers==4.34
    pip install psutil==5.9.8
    pip install accelerate==0.24.1
    pip install streamlit==1.32.2
    pip install matplotlib==3.8.3
    pip install modelscope==1.9.5
    pip install sentencepiece==0.1.99

Download the Model

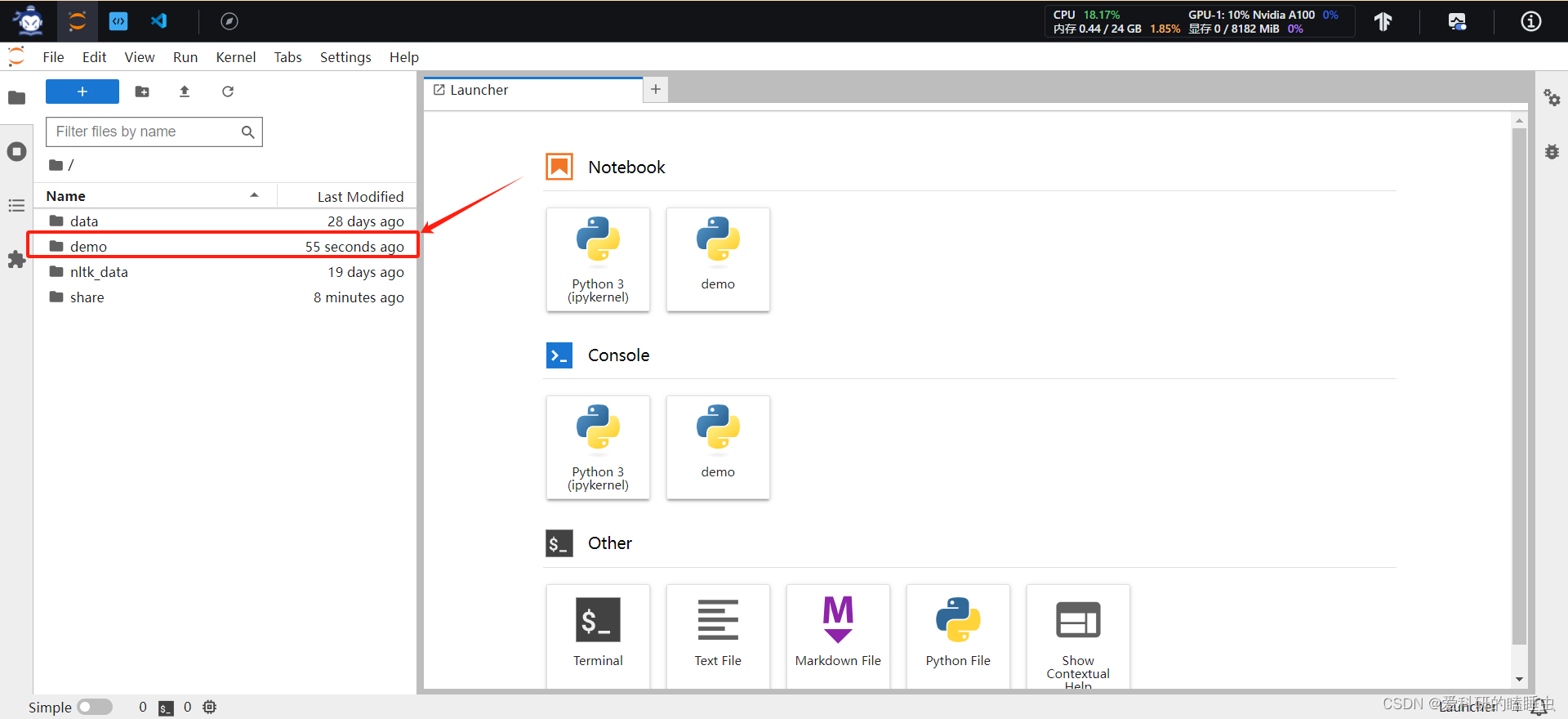

Create the demo folder and its files, then change into that directory:

    mkdir -p /root/demo                  # create the demo folder under /root
    touch /root/demo/cli_demo.py         # create cli_demo.py in the demo folder
    touch /root/demo/download_mini.py    # create download_mini.py in the demo folder
    cd /root/demo                        # change into the demo folder

In the file browser on the left, double-click to open the demo folder.

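Double-click /root/demo/download_mini.py to open it, and paste in the following code:

    import os
    from modelscope.hub.snapshot_download import snapshot_download

    # Create the directory that will hold the model (no error if it already exists)
    os.makedirs("/root/models", exist_ok=True)

    # save_dir is the local directory the model is saved to
    save_dir = "/root/models"

    snapshot_download("Shanghai_AI_Laboratory/internlm2-chat-1_8b",
                      cache_dir=save_dir,
                      revision='v1.1.0')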

Run the script to download the model weights:

    python /root/demo/download_mini.py
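Before moving on, it can help to confirm that the download actually produced files. The sketch below counts the files in the model folder and sums their size. The function name `summarize_model_dir` is hypothetical, and treating the presence of `config.json` as a sign of a usable download is an assumption based on the usual Hugging Face model layout, not something the tutorial states.

```python
from pathlib import Path


def summarize_model_dir(model_dir):
    """Return (file_count, total_bytes, has_config) for a model folder."""
    root = Path(model_dir)
    files = [p for p in root.rglob("*") if p.is_file()]
    total_bytes = sum(p.stat().st_size for p in files)
    # Assumption: a complete download contains config.json at the top level.
    has_config = (root / "config.json").is_file()
    return len(files), total_bytes, has_config


if __name__ == "__main__":
    # Path used later by cli_demo.py in this tutorial.
    model_dir = "/root/models/Shanghai_AI_Laboratory/internlm2-chat-1_8b"
    if Path(model_dir).is_dir():
        count, size, has_config = summarize_model_dir(model_dir)
        print(f"{count} files, {size / 1e9:.2f} GB, config.json present: {has_config}")
    else:
        print(f"{model_dir} does not exist yet")
```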

Run InternLM-Chat-1.8B

Double-click /root/demo/cli_demo.py to open it, and paste in the following code:

    import torch
    from transformers import AutoTokenizer, AutoModelForCausalLM

    model_name_or_path = "/root/models/Shanghai_AI_Laboratory/internlm2-chat-1_8b"

    # Load the tokenizer and model from the local download; bfloat16 reduces GPU memory use
    tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True, device_map='cuda:0')
    model = AutoModelForCausalLM.from_pretrained(model_name_or_path, trust_remote_code=True, torch_dtype=torch.bfloat16, device_map='cuda:0')
    model = model.eval()

    system_prompt = """You are an AI assistant whose name is InternLM (书生·浦语).
    - InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory (上海人工智能实验室). It is designed to be helpful, honest, and harmless.
    - InternLM (书生·浦语) can understand and communicate fluently in the language chosen by the user such as English and 中文.
    """

    # The system prompt is passed to stream_chat as the first entry of the history
    messages = [(system_prompt, '')]

    print("=============Welcome to InternLM chatbot, type 'exit' to exit.=============")

    while True:
        input_text = input("\nUser >>> ")
        # Removes every space, as in the original script; this also joins English words
        input_text = input_text.replace(' ', '')
        if input_text == "exit":
            break

        # Print only the part of the response that has not been shown yet
        length = 0
        for response, _ in model.stream_chat(tokenizer, input_text, messages):
            if response is not None:
                print(response[length:], flush=True, end="")
                length = len(response)
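The streaming loop in cli_demo.py is written as though each value yielded by `stream_chat` holds the whole reply generated so far, which is why it prints `response[length:]` rather than `response`. The sketch below isolates that pattern so it can be tried without a GPU. `fake_stream` is a hypothetical stand-in for the model, and `print_stream` is an illustrative helper, not part of transformers or InternLM.

```python
def print_stream(cumulative_responses, write=print):
    """Print only the new suffix of each cumulative response; return the final text."""
    length = 0
    final = ""
    for response in cumulative_responses:
        if response is None:
            continue
        # Everything before `length` was already printed in an earlier step.
        write(response[length:], end="", flush=True)
        length = len(response)
        final = response
    return final


def fake_stream():
    """Hypothetical stand-in for model.stream_chat: yields the reply so far."""
    yield "Hel"
    yield "Hello"
    yield "Hello, world"


if __name__ == "__main__":
    print_stream(fake_stream())
    print()
```

Printing the full `response` at every step would instead repeat the beginning of the reply each time a new token arrives.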

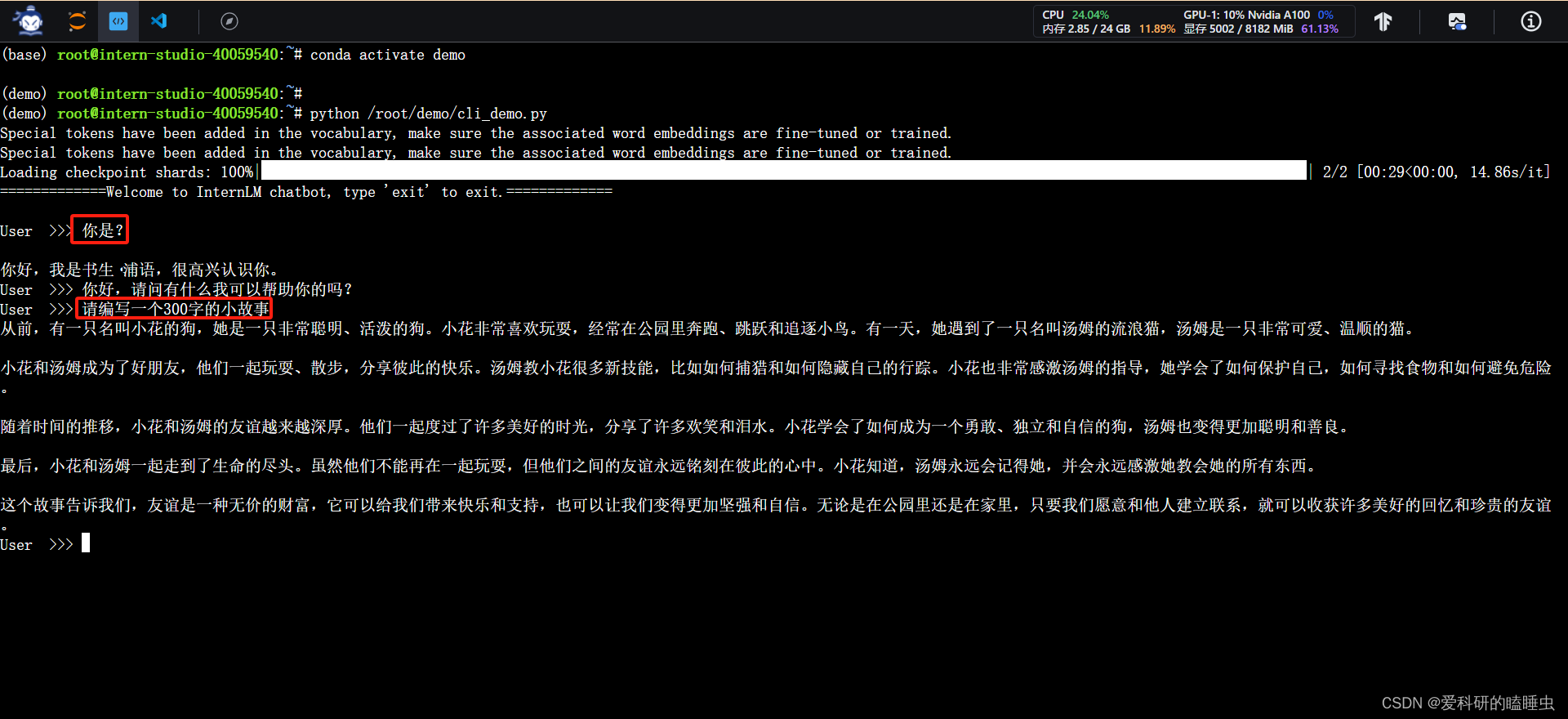

Run the following commands to start the demo:

    conda activate demo
    python /root/demo/cli_demo.py

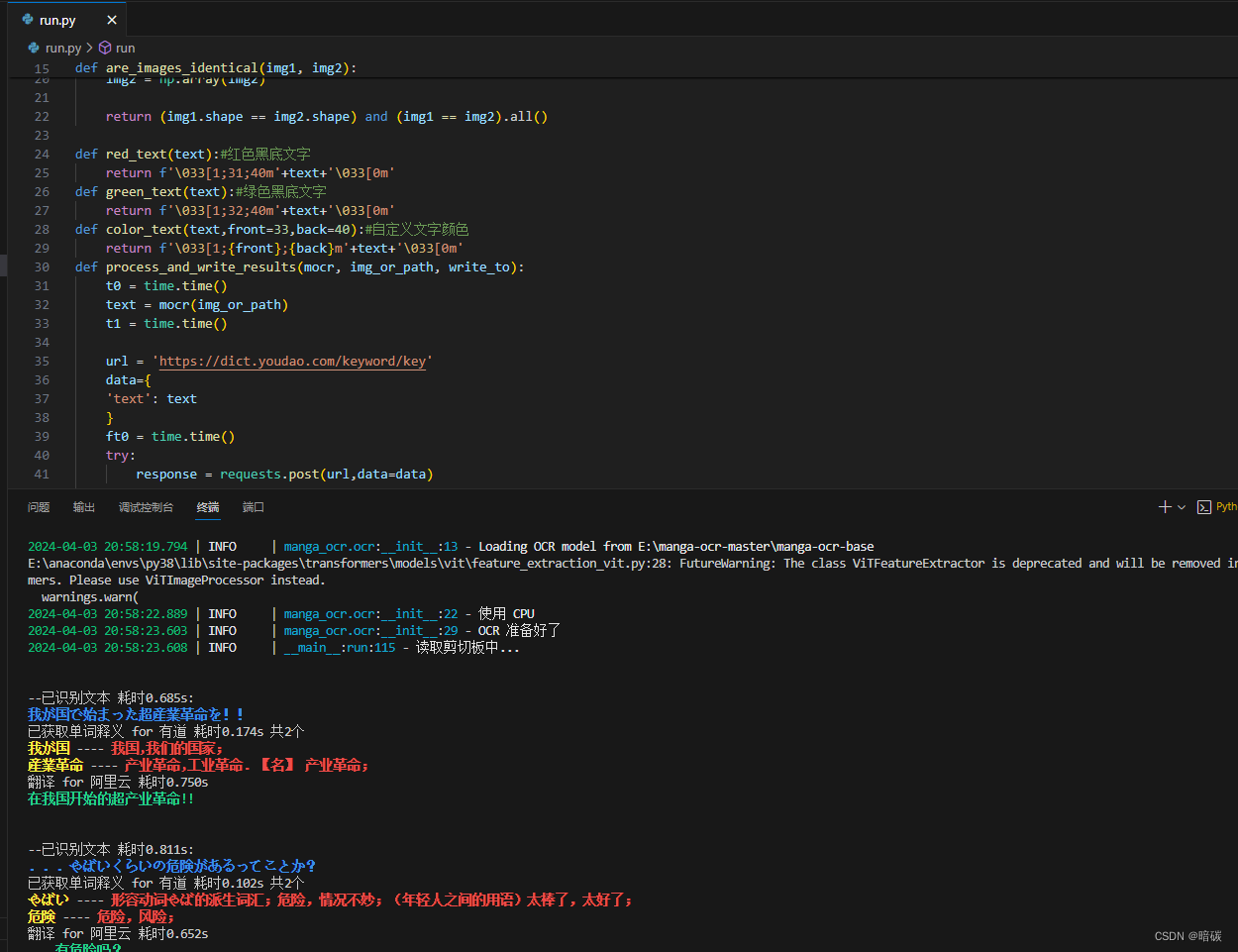

Result of the run

Run the Bajie Web Demo

Download the Model

Use git to fetch the demo files from the repository:

    conda activate demo
    cd /root/
    git clone https://gitee.com/InternLM/Tutorial -b camp2
    # git clone https://github.com/InternLM/Tutorial -b camp2
    cd /root/Tutorial

Run bajie_download.py in the terminal to download the model:

    python /root/Tutorial/helloworld/bajie_download.py

Run the Demo

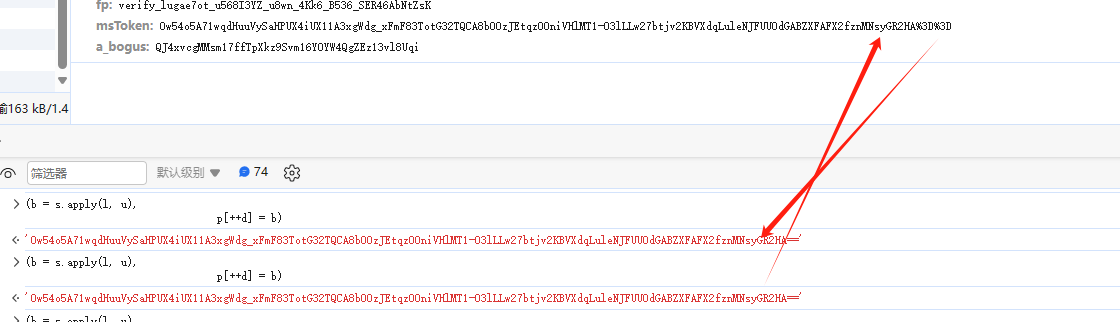

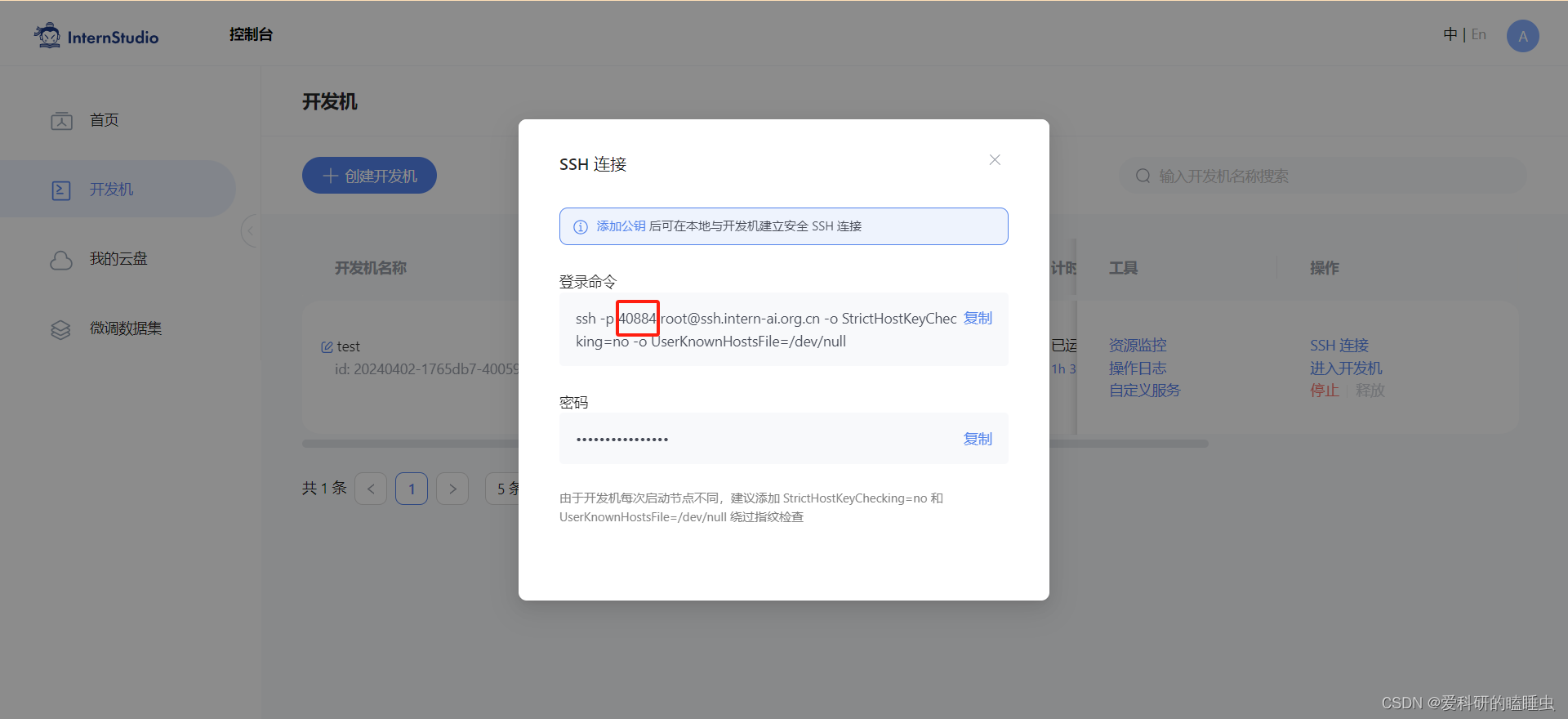

The port must be forwarded to your local machine before the demo can be opened in a local browser.

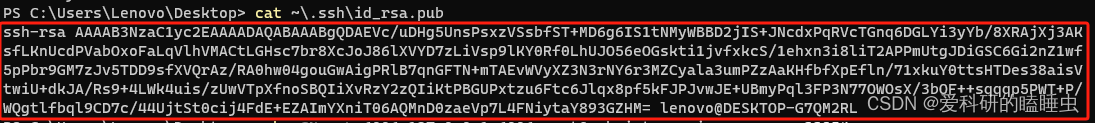

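Open a PowerShell terminal on your local machine. The SSH public key is stored at ~/.ssh/id_rsa.pub by default, and you can view its contents with the built-in cat command.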

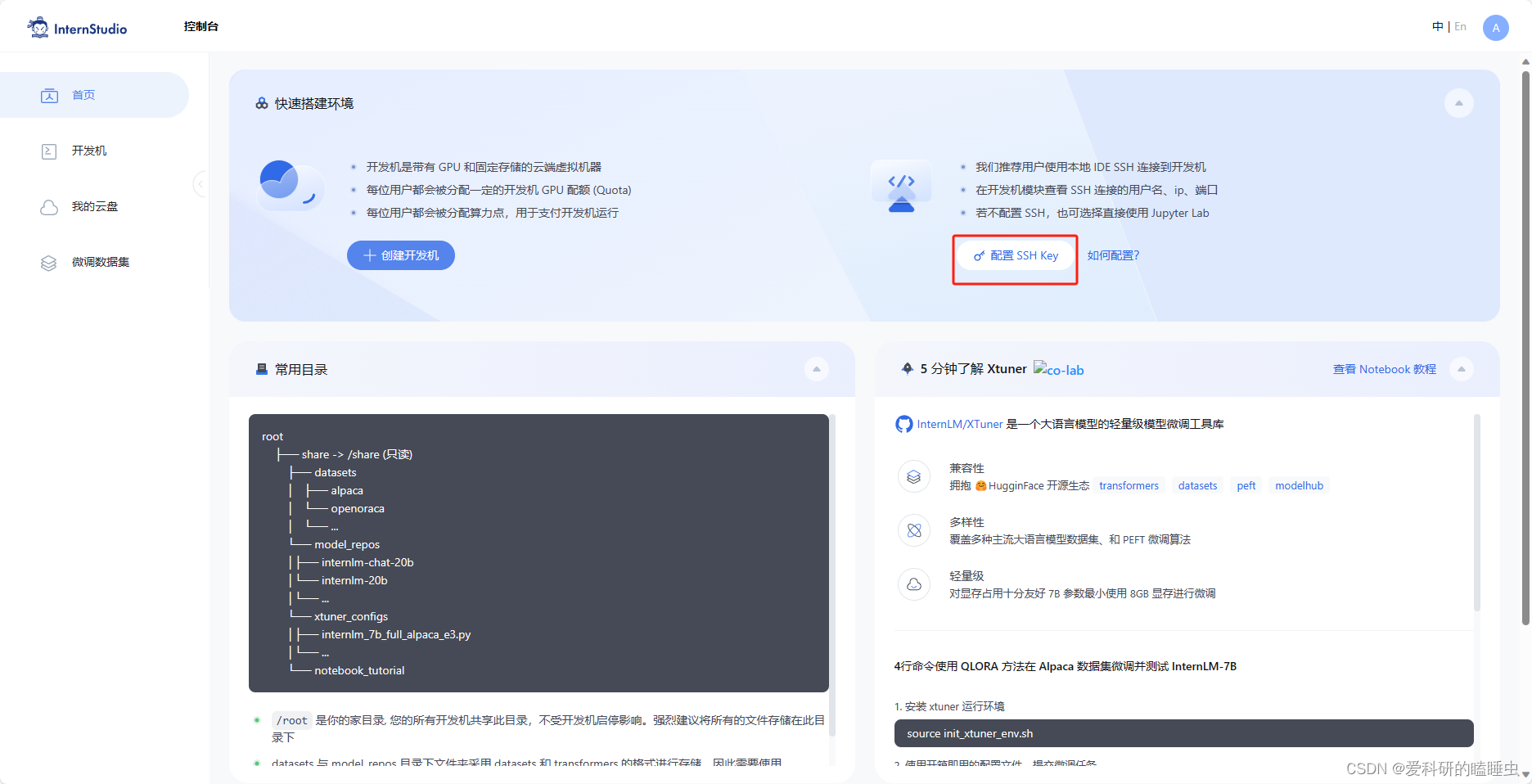

Copy the public key to the clipboard, then return to the InternStudio console and click the option to configure an SSH key.

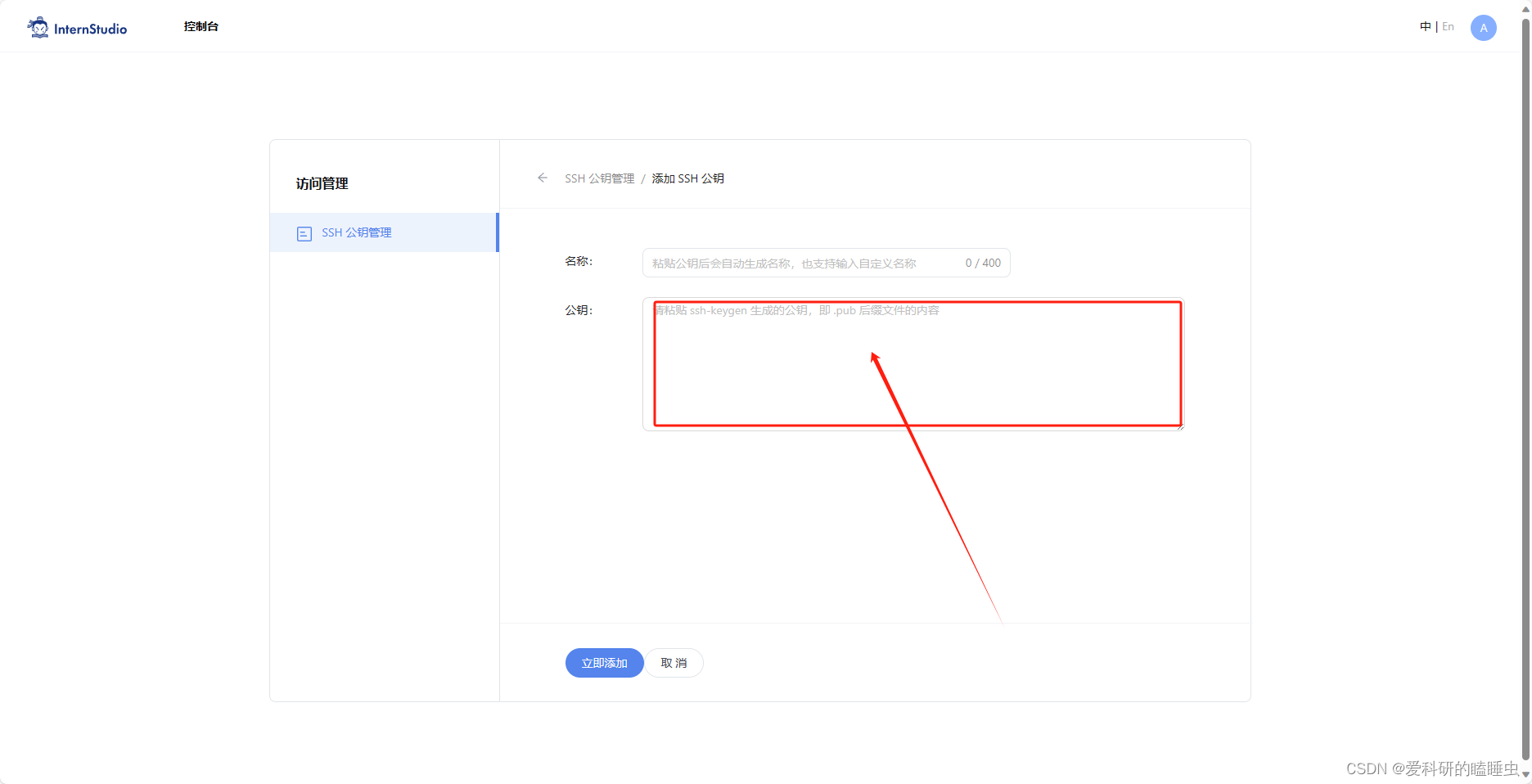

Add the public key you just copied.

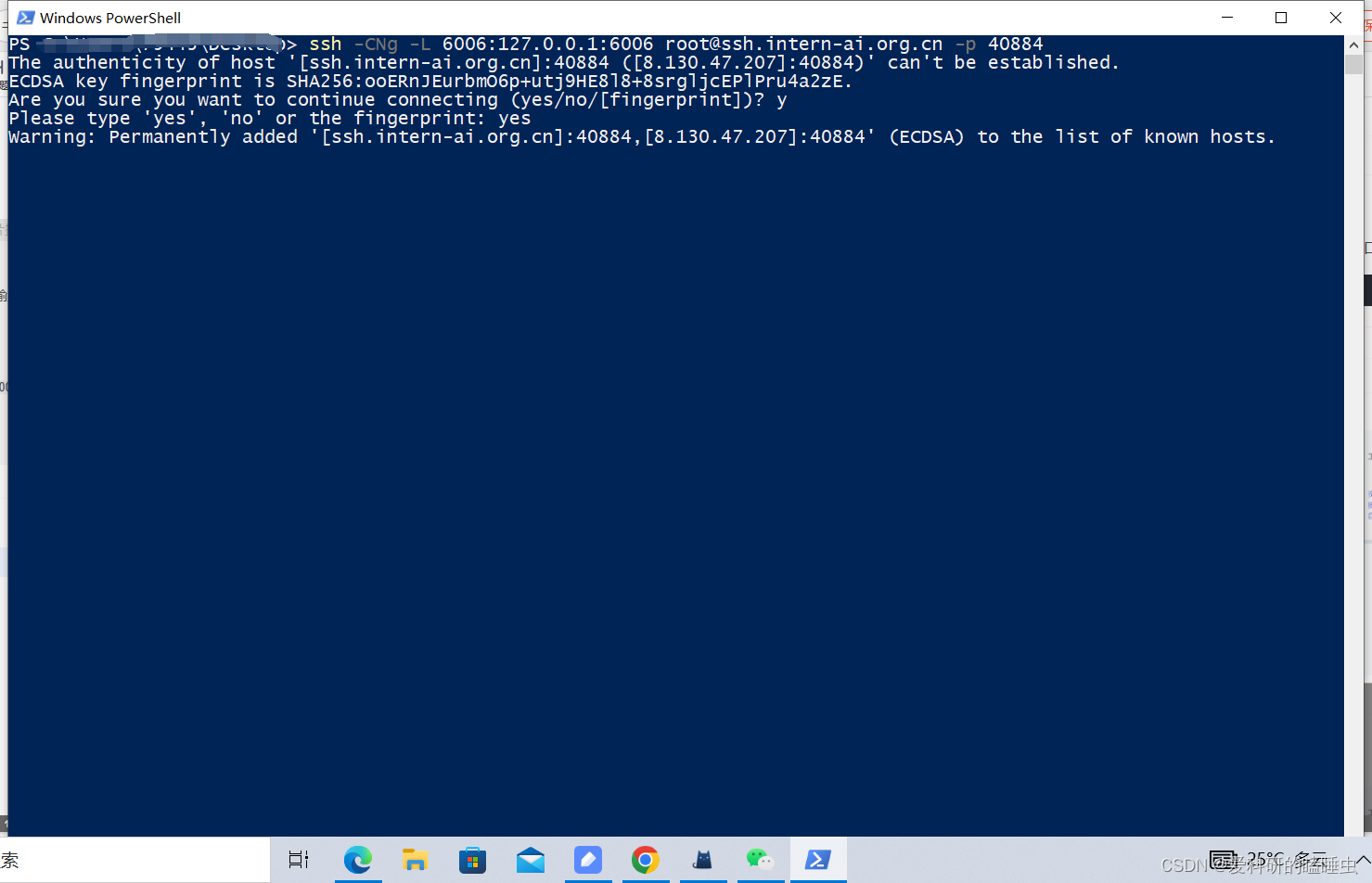

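Run the following command in your local terminal. 6006 is the port opened on the server; the port after -p (33854 in this example) depends on your development machine, so replace it with your own:

    ssh -CNg -L 6006:127.0.0.1:6006 root@ssh.intern-ai.org.cn -p 33854

The port is now forwarded. Because of the -N flag the command runs no remote shell, and it must stay running for as long as you use the demo.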
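Since the ssh command gives little feedback, a small local check can confirm that something is listening on the forwarded port. The sketch below is a minimal example using only the standard library; `port_is_open` is an illustrative helper name, and the 1-second timeout is an arbitrary choice.

```python
import socket


def port_is_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    # 6006 is the local end of the tunnel from the ssh command above.
    if port_is_open("127.0.0.1", 6006):
        print("Local port 6006 is accepting connections")
    else:
        print("Nothing is listening on local port 6006; is the ssh command running?")
```

A successful check only shows that the tunnel is listening locally; the page will still fail to load until the web demo has been started on the development machine.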

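Run the following commands in the InternStudio terminal, then open http://127.0.0.1:6006 in your local browser:

    bash
    conda activate demo  # a new VSCode terminal starts in the base environment, so switch first
    streamlit run /root/Tutorial/helloworld/bajie_chat.py --server.address 127.0.0.1 --server.port 6006

Test result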