引言:上一期我们进行了对Elasticsearch和kibana的部署,今天我们来解决如何使用Logstash搜集日志传输到es集群并使用kibana检测

目录

Logstash部署

1.安装配置Logstash

(1)安装

(2)测试文件

(3)配置

grok

1、手动输入日志数据

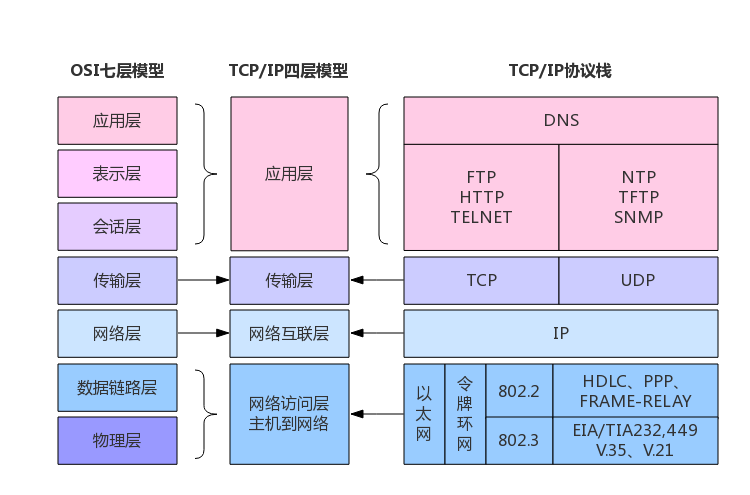

数据链路

2、手动输入数据,并存储到 es

数据链路

3、自定义日志1

数据链路

5、nginx access 日志

数据链路

6、nginx error日志

数据链路

7、filebate 传输给 logstash

filebeat 日志模板

Logstash部署

-

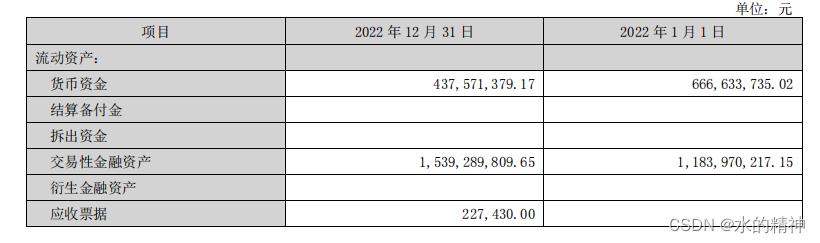

服务器

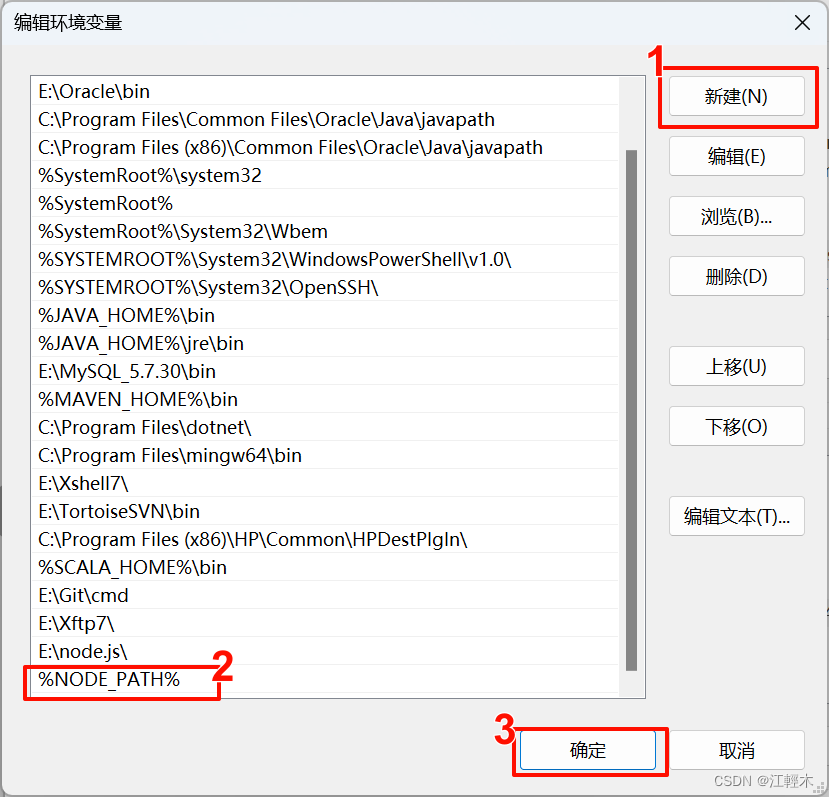

| 安装软件 | 主机名 | IP地址 | 系统版本 | 配置 |

|---|---|---|---|---|

| Logstash | Elk | 10.12.153.71 | centos7.5.1804 | 2核4G |

-

软件版本:logstash-7.13.2.tar.gz

1.安装配置Logstash

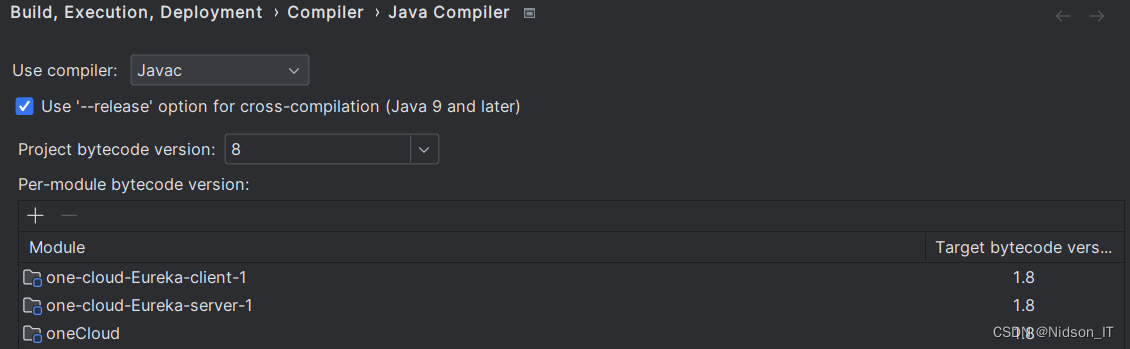

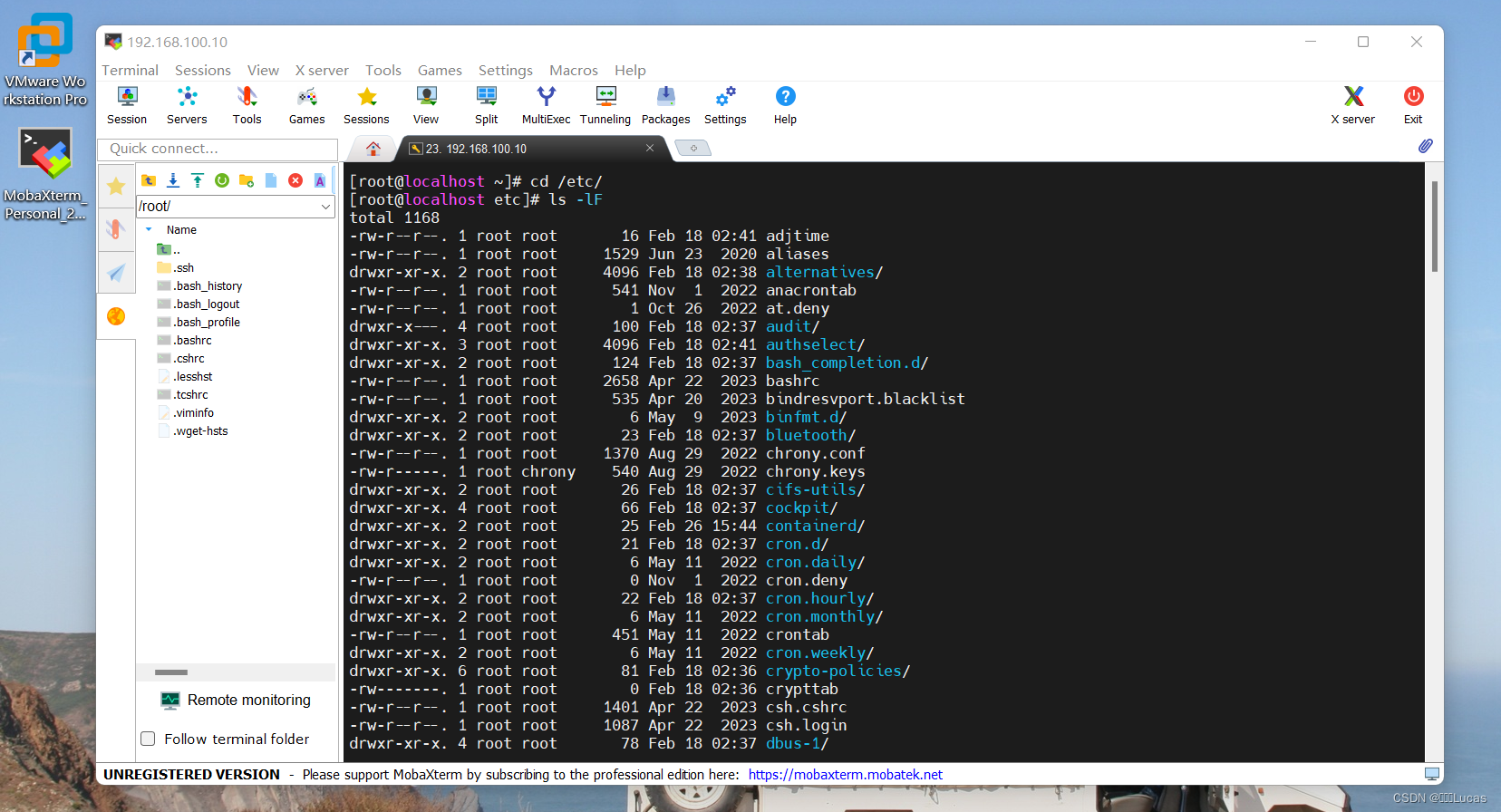

Logstash运行同样依赖jdk,本次为节省资源,故将Logstash安装在了10.12.153.71节点。

(1)安装

tar zxf /usr/local/package/logstash-7.13.2.tar.gz -C /usr/local/(2)测试文件

标准输入=>标准输出

1、启动logstash

2、logstash启动后,直接进行数据输入

3、logstash处理后,直接进行返回

input {

stdin {}

}

output {

stdout {

codec => rubydebug

}

}标准输入=>标准输出及es集群

1、启动logstash

2、启动后直接在终端输入数据

3、数据会由logstash处理后返回并存储到es集群中

input {

stdin {}

}

output {

stdout {

codec => rubydebug

}

elasticsearch {

hosts => ["10.12.153.71","10.12.153.72","10.12.153.133"]

index => 'logstash-debug-%{+YYYY-MM-dd}'

}

}端口输入=>字段匹配=>标准输出及es集群

1、由tcp 的8888端口将日志发送到logstash

2、数据被grok进行正则匹配处理

3、处理后,数据将被打印到终端并存储到es

input {

tcp {

port => 8888

}

}

filter {

grok {

match => {"message" => "%{DATA:key} %{NUMBER:value:int}"}

}

}

output {

stdout {

codec => rubydebug

}

elasticsearch {

hosts => ["10.12.153.71","10.12.153.72","10.12.153.133"]

index => 'logstash-debug-%{+YYYY-MM-dd}'

}

}

# yum install -y nc

# free -m |awk 'NF==2{print $1,$3}' |nc logstash_ip 8888文件输入=>字段匹配及修改时间格式修改=>es集群

1、直接将本地的日志数据拉去到logstash当中

2、将日志进行处理后存储到es

input {

file {

type => "nginx-log"

path => "/var/log/nginx/error.log"

start_position => "beginning" # 此参数表示在第一次读取日志时从头读取

# sincedb_path => "自定义位置" # 此参数记录了读取日志的位置,默认在 data/plugins/inputs/file/.sincedb*

}

}

filter {

grok {

match => { "message" => '%{DATESTAMP:date} [%{WORD:level}] %{DATA:msg} client: %{IPV4:cip},%{DATA}"%{DATA:url}"%{DATA}"%{IPV4:host}"'}

}

date {

match => [ "timestamp" , "dd/MMM/YYYY:HH:mm:ss Z" ]

}

}

output {

if [type] == "nginx-log" {

elasticsearch {

hosts => ["10.12.153.71","10.12.153.72","10.12.153.133"]

index => 'logstash-audit_log-%{+YYYY-MM-dd}'

}

}

}

filebeat => 字段匹配 => 标准输出及es

input {

beats {

port => 5000

}

}

filter {

grok {

match => {"message" => "%{IPV4:cip}"}

}

}

output {

elasticsearch {

hosts => ["192.168.249.139:9200","192.168.249.149:9200","192.168.249.159:9200"]

index => 'test-%{+YYYY-MM-dd}'

}

stdout { codec => rubydebug }

}(3)配置

创建目录,我们将所有input、filter、output配置文件全部放到该目录中。

mkdir -p /usr/local/logstash-7.13.2/etc/conf.d

vim /usr/local/logstash-7.13.2/etc/conf.d/input.conf

input {

kafka {

type => "audit_log"

codec => "json"

topics => "nginx"

decorate_events => true

bootstrap_servers => "10.12.153.71","10.12.153.72","10.12.153.133"

}

}

vim /usr/local/logstash-7.13.2/etc/conf.d/filter.conf

filter {

json { # 如果日志原格式是json的,需要用json插件处理

source => "message"

target => "nginx" # 组名

}

}

vim /usr/local/logstash-7.13.2/etc/conf.d/output.conf

output {

if [type] == "audit_log" {

elasticsearch {

hosts => ["10.12.153.71","10.12.153.72","10.12.153.133"]

index => 'logstash-audit_log-%{+YYYY-MM-dd}'

}

}

}(3)启动

cd /usr/local/logstash-7.13.2

nohup bin/logstash -f etc/conf.d/ --config.reload.automatic &grok

1、手动输入日志数据

一般为debug 方式,检测 ELK 集群是否健康,这种方法在 logstash 启动后可以直接手动数据数据,并将格式化后的数据打印出来。

数据链路

1、启动logstash

2、logstash启动后,直接进行数据输入

3、logstash处理后,直接进行返回

input {

stdin {}

}

output {

stdout {

codec => rubydebug

}

}2、手动输入数据,并存储到 es

数据链路

1、启动logstash

2、启动后直接在终端输入数据

3、数据会由logstash处理后返回并存储到es集群中

input {

stdin {}

}

output {

stdout {

codec => rubydebug

}

elasticsearch {

hosts => ["10.12.153.71","10.12.153.72","10.12.153.133"]

index => 'logstash-debug-%{+YYYY-MM-dd}'

}

}3、自定义日志1

数据链路

1、由tcp 的8888端口将日志发送到logstash

2、数据被grok进行正则匹配处理

3、处理后,数据将被打印到终端并存储到es

input {

tcp {

port => 8888

}

}

filter {

grok {

match => {"message" => "%{DATA:key} %{NUMBER:value:int}"}

}

}

output {

stdout {

codec => rubydebug

}

elasticsearch {

hosts => [""10.12.153.71","10.12.153.72","10.12.153.133""]

index => 'logstash-debug-%{+YYYY-MM-dd}'

}

}

# yum install -y nc

# free -m |awk 'NF==2{print $1,$3}' |nc logstash_ip 8888

4、自定义日志2

数据链路

1、由tcp 的8888端口将日志发送到logstash

2、数据被grok进行正则匹配处理

3、处理后,数据将被打印到终端

input {

tcp {

port => 8888

}

}

filter {

grok {

match => {"message" => "%{WORD:username}\:%{WORD:passwd}\:%{INT:uid}\:%{INT:gid}\:%{DATA:describe}\:%{DATA:home}\:%{GREEDYDATA:shell}"}

}

}

output {

stdout {

codec => rubydebug

}

}

# cat /etc/passwd | nc logstash_ip 88885、nginx access 日志

数据链路

1、在filebeat配置文件中,指定kafka集群ip [output.kafka] 的指定topic当中

2、在logstash配置文件中,input区域内指定kafka接口,并指定集群ip和相应topic

3、logstash 配置filter 对数据进行清洗

4、将数据通过 output 存储到es指定index当中

5、kibana 添加es 索引,展示数据

input {

kafka {

type => "audit_log"

codec => "json"

topics => "haha"

#decorate_events => true

#enable_auto_commit => true

auto_offset_reset => "earliest"

bootstrap_servers => ["192.168.52.129:9092,192.168.52.130:9092,192.168.52.131:9092"]

}

}

filter {

grok {

match => { "message" => "%{COMBINEDAPACHELOG} %{QS:x_forwarded_for}"}

}

date {

match => [ "timestamp" , "dd/MMM/YYYY:HH:mm:ss Z" ]

}

geoip {

source => "lan_ip"

}

}

output {

if [type] == "audit_log" {

stdout {

codec => rubydebug

}

elasticsearch {

hosts => ["192.168.52.129","192.168.52.130","192.168.52.131"]

index => 'tt-%{+YYYY-MM-dd}'

}

}

}

#filebeat 配置

filebeat.prospectors:

- input_type: log

paths:

- /opt/logs/server/nginx.log

json.keys_under_root: true

json.add_error_key: true

json.message_key: log

output.kafka:

hosts: [""10.12.153.71","10.12.153.72","10.12.153.133""]

topic: 'nginx'

# nginx 配置

log_format main '{"user_ip":"$http_x_real_ip","lan_ip":"$remote_addr","log_time":"$time_iso8601","user_req":"$request","http_code":"$status","body_bytes_sents":"$body_bytes_sent","req_time":"$request_time","user_ua":"$http_user_agent"}';

access_log /var/log/nginx/access.log main;

6、nginx error日志

数据链路

1、直接将本地的日志数据拉去到logstash当中

2、将日志进行处理后存储到es

input {

file {

type => "nginx-log"

path => "/var/log/nginx/error.log"

start_position => "beginning"

}

}

filter {

grok {

match => { "message" => '%{DATESTAMP:date} [%{WORD:level}] %{DATA:msg} client: %{IPV4:cip},%{DATA}"%{DATA:url}"%{DATA}"%{IPV4:host}"'}

}

date {

match => [ "timestamp" , "dd/MMM/YYYY:HH:mm:ss Z" ]

}

}

output {

if [type] == "nginx-log" {

elasticsearch {

hosts => [""10.12.153.71:9200","10.12.153.72:9200","10.12.153.133:9200""]

index => 'logstash-audit_log-%{+YYYY-MM-dd}'

}

}

}7、filebate 传输给 logstash

input {

beats {

port => 5000

}

}

filter {

grok {

match => {"message" => "%{IPV4:cip}"}

}

}

output {

elasticsearch {

hosts => ["192.168.249.139:9200","192.168.249.149:9200","192.168.249.159:9200"]

index => 'test-%{+YYYY-MM-dd}'

}

stdout { codec => rubydebug }

}

filebeat.inputs:

- type: log

enabled: true

paths:

- /var/log/nginx/access.log

output.logstash:

hosts: ["192.168.52.134:5000"]filebeat 日志模板

filebeat.inputs:

- type: log

enabled: true

paths:

- /var/log/nginx/access.log

output.kafka:

hosts: ["192.168.52.129:9092","192.168.52.130:9092","192.168.52.131:9092"]

topic: haha

partition.round_robin:

reachable_only: true

required_acks: 1