Reference: https://huggingface.co/google/flan-t5-large

1:

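import os

from huggingface_hub.hf_api import HfFolder

# Never hardcode an access token in notes or source files.
# Assumes the token is exported as the HF_TOKEN environment variable.
# google/flan-t5-large is a public model, so skip this step if you have no token.
HfFolder.save_token(os.environ["HF_TOKEN"])

from transformers import T5Tokenizer, T5ForConditionalGeneration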

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-large")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-large")

input_text = """

Q: Can Geoffrey Hinton have a conversation with George Washington?

Give the rationale before answering.

"""

input_ids = tokenizer(input_text, return_tensors="pt").input_ids

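# max_new_tokens is an upper bound; generation stops earlier at the end-of-sequence token.
outputs = model.generate(input_ids, max_new_tokens=2000)

# skip_special_tokens=True drops markers such as <pad> and </s> from the printed text.
print(tokenizer.decode(outputs[0], skip_special_tokens=True))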

2:

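import os

from huggingface_hub.hf_api import HfFolder

# Assumes the token is exported as the HF_TOKEN environment variable (never hardcode it).
# google/flan-t5-large is a public model, so skip this step if you have no token.
HfFolder.save_token(os.environ["HF_TOKEN"])

from transformers import T5Tokenizer, T5ForConditionalGeneration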

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-large")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-large")

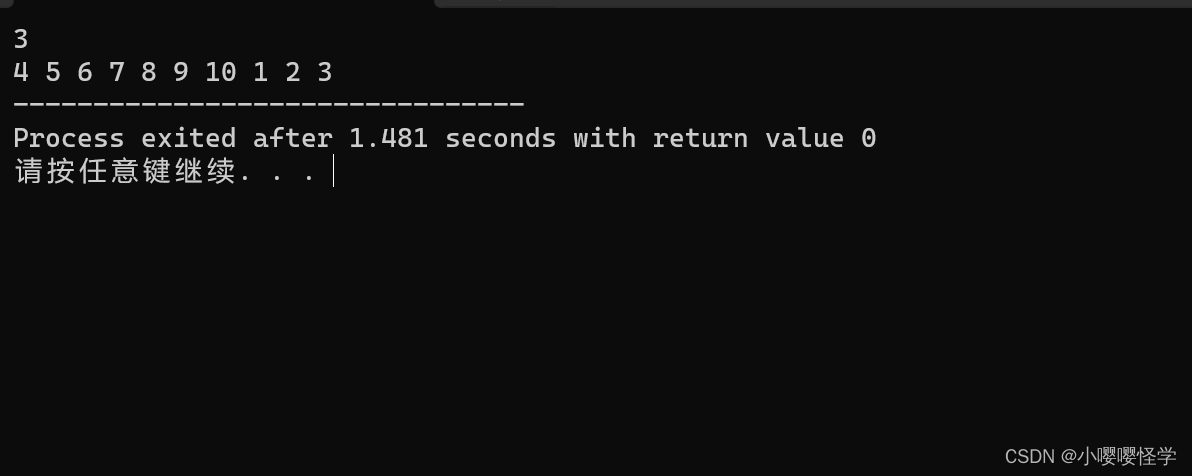

input_text = "answer the result: 1+ 2 ?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids

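outputs = model.generate(input_ids, max_new_tokens=2000)

# skip_special_tokens=True drops markers such as <pad> and </s> from the printed text.
print(tokenizer.decode(outputs[0], skip_special_tokens=True))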
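
3:

Both examples repeat the same tokenize / generate / decode steps, so they could be wrapped in two small helpers. This is only a sketch: `build_prompt` and `ask` are hypothetical names that do not come from the model card, and `max_new_tokens=64` is an arbitrary default. `build_prompt` follows the "Q: ..." layout of example 1.

```python
def build_prompt(question: str, rationale_first: bool = False) -> str:
    """Format a question in the style of example 1 (hypothetical helper)."""
    prompt = f"Q: {question.strip()}"
    if rationale_first:
        # Same instruction as example 1: ask for the reasoning before the answer.
        prompt += "\nGive the rationale before answering."
    return prompt


def ask(tokenizer, model, prompt: str, max_new_tokens: int = 64) -> str:
    """Run one prompt through an already-loaded tokenizer/model pair (hypothetical helper)."""
    # Tokenize to PyTorch tensors, exactly as in examples 1 and 2.
    input_ids = tokenizer(prompt, return_tensors="pt").input_ids
    outputs = model.generate(input_ids, max_new_tokens=max_new_tokens)
    # Drop special tokens such as <pad> and </s> from the decoded text.
    return tokenizer.decode(outputs[0], skip_special_tokens=True)
```

With `tokenizer` and `model` loaded as in example 1, a call would look like `print(ask(tokenizer, model, build_prompt("Can Geoffrey Hinton have a conversation with George Washington?", rationale_first=True)))`.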