Title: 非线性最小二乘问题的数值方法 —— 狗腿法 Powell‘s Dog Leg Method (II, Python 简单实例)

姊妹博文

非线性最小二乘问题的数值方法 —— 狗腿法 Powell‘s Dog Leg Method (I - 原理与算法)

0.前言

本篇博文作为对前述 “非线性最小二乘问题的数值方法 —— 狗腿法 Powell‘s Dog Leg Method (I - 原理与算法)” 的简单实践扩展.

理论部分参见前述博文, 此处不再重复. 这里只是补充一个简单的 Python 实例.

1. 最优问题实例

m i n i m i z e g ( x ) = 1 2 ∥ r ( x ) ∥ 2 2 = 1 2 ∑ i = 1 3 r i ( x ) 2 (I-1) {\rm minimize}\quad {g}(\mathbf{x}) = \frac{1}{2}\|\mathbf{r}(\mathbf{x})\|_2^2 = \frac{1}{2}\sum_{i=1}^{3} r_i(\mathbf{x})^2 \tag{I-1} minimizeg(x)=21∥r(x)∥22=21i=1∑3ri(x)2(I-1)

其中

x = [ x 1 , x 2 ] T \mathbf{x} = \begin{bmatrix} x_1, x_2 \end{bmatrix}^{\small\rm T} x=[x1,x2]T

r ( x ) = [ r 1 ( x ) , r 2 ( x ) , r 3 ( x ) ] T \mathbf{r}(\mathbf{x}) = \begin{bmatrix} r_1(\mathbf{x}), \, r_2(\mathbf{x}) ,\,r_3(\mathbf{x}) \end{bmatrix}^{\small\rm T} r(x)=[r1(x),r2(x),r3(x)]T

r 1 ( x ) = sin x 1 − 0.4 r_1(\mathbf{x}) = \sin x_1 -0.4 r1(x)=sinx1−0.4

r 2 ( x ) = cos x 2 + 0.8 r_2(\mathbf{x}) = \cos x_2 + 0.8 r2(x)=cosx2+0.8

r 3 ( x ) = x 1 2 + x 2 2 − 1 r_3(\mathbf{x}) = \sqrt{x_1^2 +x_2^2} -1 r3(x)=x12+x22−1

可以推得

∂ r ( x ) ∂ x = [ cos x 1 0 0 − sin x 2 x 1 x 1 2 + x 2 2 x 2 x 1 2 + x 2 2 ] \frac{\partial \mathbf{r}(\mathbf{x})}{\partial \mathbf{x}} = \begin{bmatrix}\cos x_1 & 0\\ 0 &-\sin x_2 \\ \frac{x_1}{\sqrt{x_1^2+x_2^2}} & \frac{x_2}{\sqrt{x_1^2+x_2^2}} \end{bmatrix} ∂x∂r(x)= cosx10x12+x22x10−sinx2x12+x22x2

g ( x ) = 1 2 [ ( sin x 1 − 0.4 ) 2 + ( cos x 2 + 0.8 ) 2 + ( x 2 2 + x 1 2 − 1 ) 2 ] g(\mathbf{x})=\frac{1}{2} \left[{ {{\left( \sin{ {x_1} }-0.4\right) }^{2}}+{{\left( \cos{ {x_2} }+0.8\right) }^{2}}+{{\left( \sqrt{{{{x_2}}^{2}}+{{{x_1}}^{2}}}-1\right) }^{2}}}\right] g(x)=21[(sinx1−0.4)2+(cosx2+0.8)2+(x22+x12−1)2]

∇ g ( x ) = [ x 1 ( x 2 2 + x 1 2 − 1 ) x 2 2 + x 1 2 + cos x 1 ( sin x 1 − 0.4 ) x 2 ( x 2 2 + x 1 2 − 1 ) x 2 2 + x 1 2 − sin x 2 ( cos x 2 + 0.8 ) ] \nabla g(\mathbf{x}) = \begin{bmatrix}\frac{{x_1} \left( \sqrt{{{{x_2}}^{2}}+{{{x_1}}^{2}}}-1\right) }{\sqrt{{{{x_2}}^{2}}+{{{x_1}}^{2}}}}+\cos{ {x_1} } \left( \sin{ {x_1} }-0.4\right) \\ \frac{{x_2} \left( \sqrt{{{{x_2}}^{2}}+{{{x_1}}^{2}}}-1\right) }{\sqrt{{{{x_2}}^{2}}+{{{x_1}}^{2}}}}- \sin{ {x_2} } \left( \cos{ {x_2} }+0.8\right) \end{bmatrix} ∇g(x)= x22+x12x1(x22+x12−1)+cosx1(sinx1−0.4)x22+x12x2(x22+x12−1)−sinx2(cosx2+0.8)

H ~ ( x ) = [ x 1 2 x 2 2 + x 1 2 + ( cos x 1 ) 2 x 1 x 2 x 2 2 + x 1 2 x 1 x 2 x 2 2 + x 1 2 ( sin x 2 ) 2 + x 2 2 x 2 2 + x 1 2 ] \widetilde{\mathbf{H}}(\mathbf{x})=\begin{bmatrix}\frac{{{{x_1}}^{2}}}{{{{x_2}}^{2}}+{{{x_1}}^{2}}}+{{(\cos{ {x_1} })}^{2}} & \frac{{x_1} {x_2}}{{{{x_2}}^{2}}+{{{x_1}}^{2}}}\\ \frac{{x_1} {x_2}}{{{{x_2}}^{2}}+{{{x_1}}^{2}}} & {{(\sin{ {x_2} )}}^{2}}+\frac{{{{x_2}}^{2}}}{{{{x_2}}^{2}}+{{{x_1}}^{2}}}\end{bmatrix} H (x)=[x22+x12x12+(cosx1)2x22+x12x1x2x22+x12x1x2(sinx2)2+x22+x12x22]

具体的符号推导参见非线性最小二乘问题的数值方法 —— 从牛顿迭代法到高斯-牛顿法 (实例篇 V).

2. 狗腿法 (Powell‘s Dog Leg Method) Python 实现

基于狗腿法的算法流程实现如下简单 Python Demo:

from mpl_toolkits.mplot3d import axes3d

import matplotlib.pyplot as plt

import numpy as np

from numpy.linalg import inv, det, norm

from math import cos

from math import sin

from math import sqrt

from math import pow

# multiplication of two matrixs

def multiply_matrix(A, B):

if A.shape[1] == B.shape[0]:

C = np.zeros((A.shape[0], B.shape[1]), dtype = float)

[rows, cols] = C.shape

for row in range(rows):

for col in range(cols):

for elt in range(len(B)):

C[row, col] += A[row, elt] * B[elt, col]

return C

else:

return "Cannot multiply A and B. Please check whether the dimensions of the inputs are compatible."

# g(x) = (1/2) ||r(x)||_2^2

def g(x_vector):

x_1 = x_vector[0]

x_2 = x_vector[1]

return ( pow(sin(x_1)-0.4, 2)+ pow(cos(x_2)+0.8, 2) + pow(sqrt(pow(x_2,2)+pow(x_1,2))-1, 2) ) /2

# r(x) = [r_1, r_2, r_3]^{T}

def r(x_vector):

x_1 = x_vector[0]

x_2 = x_vector[1]

return np.array([[sin(x_1)-0.4],

[cos(x_2)+0.8],

[sqrt(pow(x_1,2)+pow(x_2,2))-1]], dtype=object)

# \partial r(x) / \partial x

def dr(x_vector):

x_1 = x_vector[0]

x_2 = x_vector[1]

if sqrt(pow(x_2,2)+pow(x_1,2)) < 1e-3: ## 人为设置

return np.array([[cos(x_1), 0],

[0, -sin(x_2)],

[0, 0]], dtype=object)

else:

return np.array([[cos(x_1), 0],

[0, -sin(x_2)],

[x_1/sqrt(pow(x_2,2)+pow(x_1,2)), x_2/sqrt(pow(x_2,2)+pow(x_1,2))]], dtype=object)

# Simplified Hessian matrix in Gauss-Newton method

# refer to eq. (I-1-2) in blog "非线性最小二乘问题的数值方法 —— 从高斯-牛顿法到列文伯格-马夸尔特法 (I)"

def sH(x_vector):

x_1 = x_vector[0]

x_2 = x_vector[1]

return multiply_matrix(np.transpose(dr(x_1, x_2)), dr(x_1, x_2))

# \nabla g(x_1, x_2)

# refer to eq. (I-1-3) in blog "非线性最小二乘问题的数值方法 —— 从高斯-牛顿法到列文伯格-马夸尔特法 (I)"

def dg(x_vector):

x_1 = x_vector[0]

x_2 = x_vector[1]

return np.array(multiply_matrix(np.transpose(dr(x_1, x_2)), r(x_1, x_2)))

# model for the cost function g based on eq (II-2-2) in "非线性最小二乘问题的数值方法 —— 狗腿法 Powell‘s Dog Leg Method (I - 原理与算法)"

def L_model(h_vector, g_i, dg_i, sH_i):

return g_i + multiply_matrix( dg_i.transpose(), h_vector) + 0.5 * multiply_matrix(multiply_matrix(h_vector.transpose(), sH_i), h_vector)

def dog_leg_method(x_vector, epsilon_1, epsilon_2, epsilon_3, max_iter, trust_region_radius):

# x_1 = x_vector[1]

# # x_2 = x_vector[2]

iter = 0

delta = trust_region_radius # trust-region radius

found = False

# g_i = g(x_vector)

x_current_vector = x_vector

r_i = r(x_current_vector)

dr_i = dr(x_current_vector)

dg_i = multiply_matrix(np.transpose(dr_i), r_i)

g_i = g(x_current_vector)

# if np.max(np.abs(dg_i)) < epsilon_1:

if (norm(r_i, np.inf) < epsilon_3 ) or (norm(dg_i, np.inf) < epsilon_1):

found = True

array_x_1 = []

array_x_2 = []

array_x_3 = []

# x_new_vector = np.array([0,0])

# g_new = np.inf

while (found == False) and (iter < max_iter):

# sH_i = sH(x_vector)

array_x_1.append(x_current_vector[0])

array_x_2.append(x_current_vector[1])

array_x_3.append(g_i)

iter += 1

step_sd_i = - dg_i

Jh = multiply_matrix(dr_i, step_sd_i)

alpha_i = pow(norm(step_sd_i, 2), 2) / pow(norm(Jh, 2), 2)

step_cp_i = alpha_i * step_sd_i ## Steepest descent step

sH_i = multiply_matrix(np.transpose(dr_i), dr_i) ## Simplified Hessian Matrix

inv_sH_i = inv(sH_i)

step_gn_i = - np.array(multiply_matrix(inv_sH_i, dg_i)) ## Gauss-Newton step

rho = -1

while (rho < 0) and (found == False): ## Until step acceptable

if norm(step_gn_i, 2) < delta: ## Case I

step_dl_i = step_gn_i

print("Iterating index [%d], Case I"%iter)

elif norm(step_cp_i, 2) >= delta: ## Case II

step_dl_i = (delta / norm(step_sd_i, 2)) * step_sd_i

print("Iterating index [%d], Case II"%iter)

else: ## Case III

step_gn_cp_i = step_gn_i - step_cp_i

gn_cp_norm_sq = pow(norm(step_gn_cp_i, 2),2)

delta_cp_sq = pow(delta,2) - pow(norm(step_cp_i, 2), 2)

c_matrix = multiply_matrix( np.transpose(step_cp_i), step_gn_cp_i )

c = c_matrix[0][0]

sqrt_discriminant = sqrt( pow(c,2) + gn_cp_norm_sq * delta_cp_sq )

if (c <= 0):

beta = (-c + sqrt_discriminant) / gn_cp_norm_sq

else:

beta = delta_cp_sq / (c + sqrt_discriminant)

step_dl_i = step_cp_i + beta * step_gn_cp_i

print("Iterating index [%d], Case III"%iter)

norm_step_dl = norm(step_dl_i, 2)

if (norm_step_dl <= epsilon_2 * (norm(x_current_vector, 2) + epsilon_2)):

found = True

else:

# print(x_current_vector.shape)

# print(step_dl_i.shape)

x_new_vector = x_current_vector + step_dl_i.flatten()

g_new = g(x_new_vector)

L_0 = g_i

L_h = L_model(step_dl_i, g_i, dg_i, sH_i)

rho = (g_i - g_new) / (L_0 - L_h)

if (rho > 0): ## Step acceptable

x_current_vector = x_new_vector ## New iterating state

r_i = r(x_current_vector)

dr_i = dr(x_current_vector)

dg_i = multiply_matrix(np.transpose(dr_i), r_i)

g_i = g(x_current_vector)

if (norm(r_i, np.inf) < epsilon_3 ) or (norm(dg_i, np.inf) < epsilon_1):

found = True

if (rho > 0.75): ## Expanding trust region

if (delta - 3 * norm_step_dl < 0):

delta = 3 * norm_step_dl

elif (rho < 0.25): ## Shrinking trust region

delta = delta / 2

if (delta < (epsilon_2*(norm(x_current_vector, 2)+epsilon_2))):

found = True

return array_x_1, array_x_2, array_x_3

def result_plot(trajectory, trust_region_radius):

fig = plt.figure()

ax3 = plt.axes(projection='3d')

xx = np.arange(-5,5,0.1)

yy = np.arange(-4,4,0.1)

X, Y = np.meshgrid(xx, yy)

Z = np.zeros((X.shape[0], Y.shape[1]), dtype = float)

for i in range(X.shape[0]):

for j in range(Y.shape[1]):

Z[i,j] = g(np.array([X[0,j], Y[i,0]]))

ax3.plot_surface(X, Y, Z, rstride = 1, cstride = 1, cmap='rainbow', alpha=0.25)

ax3.contour(X, Y, Z, offset=-1, cmap = 'rainbow')

ax3.plot(trajectory[0], trajectory[1], trajectory[2], "r--")

offset_data = -1*np.ones(len(trajectory[0]))

ax3.plot(trajectory[0], trajectory[1], offset_data,'k--')

ax3.set_title('Dog Leg Method \n(Initial point [%.1f, %.1f], Trust-region radius %.2f)' %(trajectory[0][0], trajectory[1][0], trust_region_radius))

ax3.set_xlabel("r_1")

ax3.set_ylabel("r_2")

ax3.set_zlabel("g")

file_name_prefix = "./dog_leg"

file_extension = ".png"

radius= "-r"

file_name = f"{file_name_prefix}_{trajectory[0][0]}_{trajectory[1][0]}{radius}{trust_region_radius}{file_extension}"

print(file_name)

plt.draw()

plt.savefig(file_name)

if __name__ == "__main__":

test_data = np.array([[4.9, 3.9], [-2.9, 1.9], [0.1, -0.1], [-0.1, 0.1], [0,-3.8],[1,2.5]], dtype=object)

trust_region_radius = np.array([0.4, 0.01, 2.0])

for radius in trust_region_radius:

for inital_data in test_data:

print("\nInitial point: [%.1f, %.1f]" %(inital_data[0],inital_data[1]))

print("Trust region radius: %.2f" %radius)

epsilon_1 = 1e-6

epsilon_2 = 1e-6

epsilon_3 = 1e-6

max_iter = 1000

trajectory = dog_leg_method(inital_data, epsilon_1, epsilon_2, epsilon_3, max_iter, radius)

result_plot(trajectory, radius)

3. 测试结果

A. 结果显示

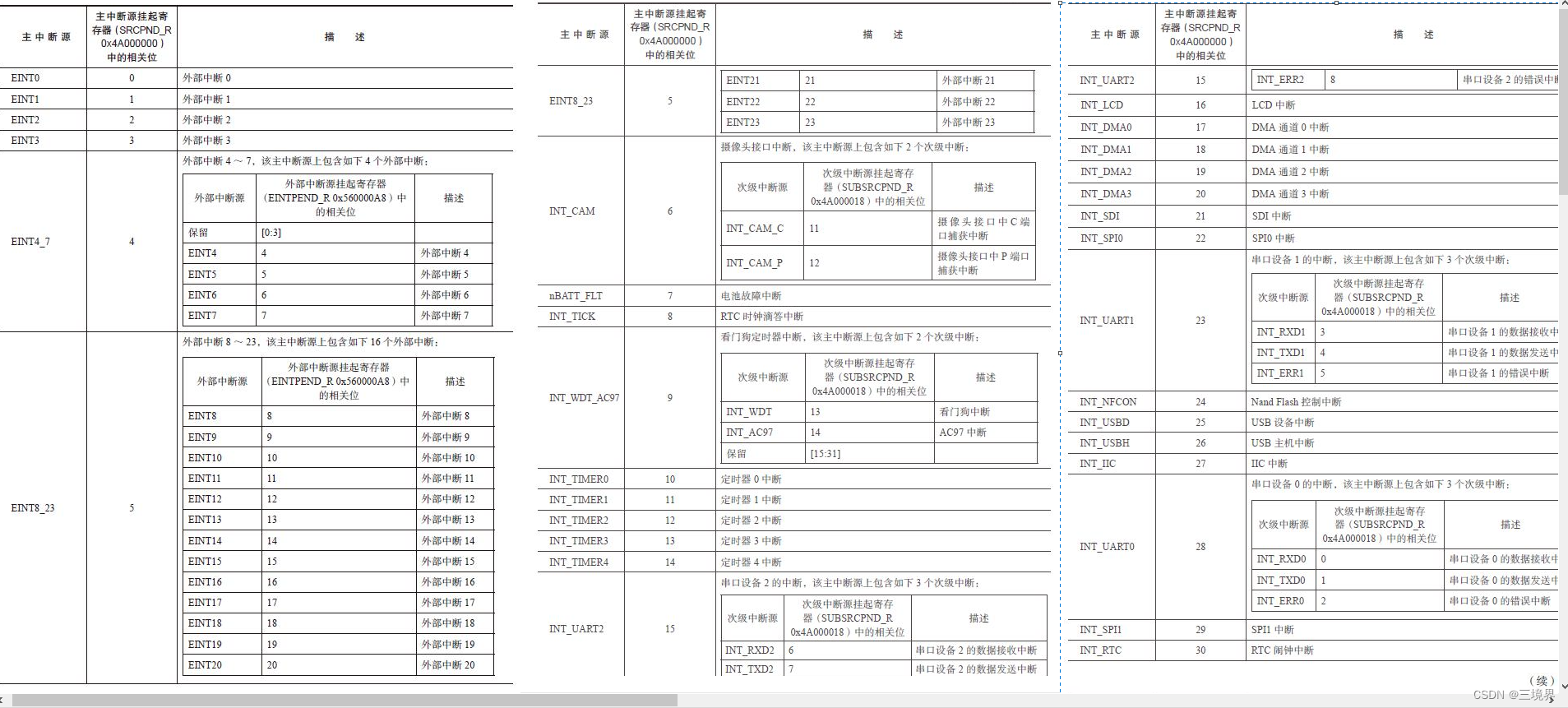

| 测试显示 (初始点 [4.9, 3.9], 信赖域半径分别为 0.01、0.4、2.0) | 测试显示 (初始点 [-2.9, 1.9], 信赖域半径分别为 0.01、0.4、2.0) |

|---|---|

|  |

|  |

|  |

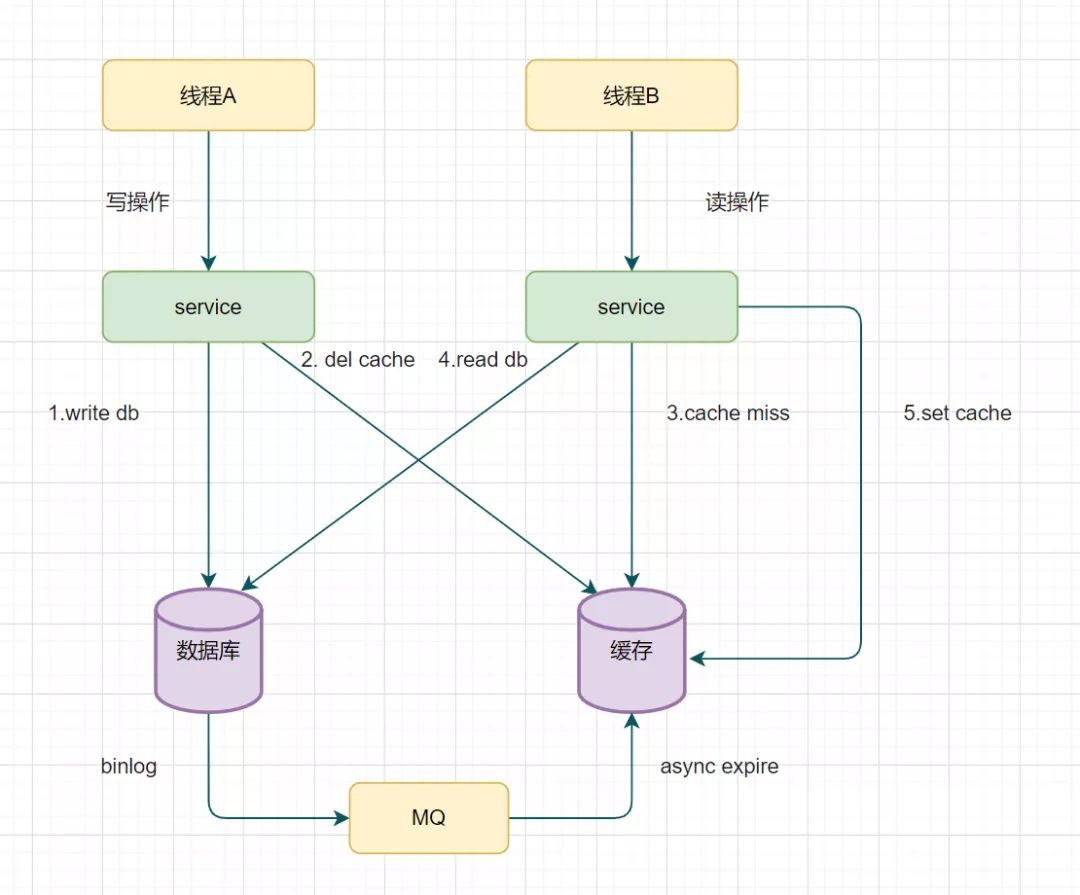

B. 迭代步说明

不同信赖域的迭代步及每一步的类型如下表所示. Case III 代表该步类型为狗腿步, Case II 代表该步类型为柯西步, Case I 代表该步类型为高斯-牛顿步.

越靠近收敛极小值点, 高斯-牛顿步类型出现频率越高, 这样有利于快速收敛.

信赖域半径对计算性能也有影响.

| Trust-region radius = 0.01 | Trust-region radius = 0.4 | Trust-region radius = 2.0 |

|---|---|---|

| Initial point: [4.9, 3.9] Trust region radius: 0.01 Iterating index [1], Case II Iterating index [2], Case II Iterating index [3], Case II Iterating index [4], Case II Iterating index [5], Case II Iterating index [6], Case II Iterating index [7], Case I Iterating index [8], Case I Iterating index [9], Case I Iterating index [10], Case I Iterating index [11], Case I Iterating index [12], Case I Iterating index [13], Case I Iterating index [14], Case I Iterating index [15], Case I Iterating index [16], Case I Iterating index [17], Case I Iterating index [18], Case I Iterating index [19], Case I Iterating index [20], Case I Iterating index [21], Case I Iterating index [22], Case I Iterating index [23], Case I Iterating index [24], Case I Iterating index [25], Case I | Initial point: [4.9, 3.9] Trust region radius: 0.40 Iterating index [1], Case II Iterating index [2], Case II Iterating index [3], Case III Iterating index [4], Case III Iterating index [4], Case III Iterating index [5], Case I Iterating index [5], Case I Iterating index [5], Case III Iterating index [6], Case II Iterating index [7], Case I Iterating index [8], Case I Iterating index [9], Case I Iterating index [10], Case I Iterating index [11], Case I Iterating index [12], Case I Iterating index [13], Case I Iterating index [14], Case I Iterating index [15], Case I Iterating index [16], Case I Iterating index [17], Case I Iterating index [18], Case I Iterating index [19], Case I Iterating index [20], Case I Iterating index [21], Case I Iterating index [22], Case I Iterating index [23], Case I | Initial point: [4.9, 3.9] Trust region radius: 2.00 Iterating index [1], Case II Iterating index [2], Case I Iterating index [3], Case I Iterating index [3], Case I Iterating index [3], Case III Iterating index [4], Case I Iterating index [5], Case I Iterating index [6], Case I Iterating index [7], Case I Iterating index [8], Case I Iterating index [9], Case I Iterating index [10], Case I Iterating index [11], Case I Iterating index [12], Case I Iterating index [13], Case I Iterating index [14], Case I Iterating index [15], Case I Iterating index [16], Case I Iterating index [17], Case I Iterating index [18], Case I Iterating index [19], Case I Iterating index [20], Case I Iterating index [21], Case I Iterating index [22], Case I |

| Initial point: [-2.9, 1.9] Trust region radius: 0.01 Iterating index [1], Case II Iterating index [2], Case II Iterating index [3], Case II Iterating index [4], Case II Iterating index [5], Case I Iterating index [6], Case I Iterating index [7], Case I Iterating index [8], Case III Iterating index [8], Case III Iterating index [9], Case I Iterating index [10], Case I Iterating index [11], Case I Iterating index [12], Case I Iterating index [13], Case I Iterating index [14], Case I Iterating index [15], Case I Iterating index [16], Case I Iterating index [17], Case I Iterating index [18], Case I Iterating index [19], Case I Iterating index [20], Case I Iterating index [21], Case I Iterating index [22], Case I Iterating index [23], Case I Iterating index [24], Case I Iterating index [25], Case I Iterating index [26], Case I | Initial point: [-2.9, 1.9] Trust region radius: 0.40 Iterating index [1], Case II Iterating index [2], Case I Iterating index [3], Case I Iterating index [4], Case I Iterating index [5], Case III Iterating index [5], Case III Iterating index [6], Case I Iterating index [7], Case I Iterating index [8], Case I Iterating index [9], Case I Iterating index [10], Case I Iterating index [11], Case I Iterating index [12], Case I Iterating index [13], Case I Iterating index [14], Case I Iterating index [15], Case I Iterating index [16], Case I Iterating index [17], Case I Iterating index [18], Case I Iterating index [19], Case I Iterating index [20], Case I Iterating index [21], Case I Iterating index [22], Case I Iterating index [23], Case I | Initial point: [-2.9, 1.9] Trust region radius: 2.00 Iterating index [1], Case I Iterating index [2], Case I Iterating index [3], Case III Iterating index [4], Case III Iterating index [4], Case III Iterating index [5], Case I Iterating index [6], Case I Iterating index [7], Case I Iterating index [8], Case I Iterating index [9], Case I Iterating index [10], Case I Iterating index [11], Case I Iterating index [12], Case I Iterating index [13], Case I Iterating index [14], Case I Iterating index [15], Case I Iterating index [16], Case I Iterating index [17], Case I Iterating index [18], Case I Iterating index [19], Case I Iterating index [20], Case I Iterating index [21], Case I Iterating index [22], Case I |

4. 结论

以上仅为一个狗腿法的简单示例, 推导和算法请见 “非线性最小二乘问题的数值方法 —— 狗腿法 Powell‘s Dog Leg Method (I - 原理与算法)”.

如有问题请指出, 谢谢!