Contents

- Preface
- How Pensieve Works
- *Pensieve Retraining References
  - Oboe [SIGCOMM '18]
  - Comyco [MM '19]
  - Fugu [NSDI '20]
- A3C Entropy Weight Decay
  - Approach
  - Implementation

Preface

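Pensieve is one of the most classic ABR algorithms for DASH video-on-demand and a representative learning-based ABR algorithm. It is built on A3C (Asynchronous Advantage Actor-Critic), a deep reinforcement learning (DRL) method, and makes decisions using input features such as the throughput history of past video chunks and the current buffer level. Unlike earlier heuristic or domain-knowledge-based approaches (e.g., FESTIVE, BBA, BOLA, MPC), Pensieve lets a reinforcement learning model learn a policy automatically by exploring in a simulated environment.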

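This article briefly introduces how Pensieve works. Although Pensieve's code is open source, the results of deep reinforcement learning training depend on specific tricks, so this article focuses on how to train a Pensieve model correctly. The code, datasets, models and results for retraining Pensieve are also open-sourced at: https://github.com/GreenLv/pensieve_retrain.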

Pensieve paper: Neural Adaptive Video Streaming with Pensieve - SIGCOMM '17

Pensieve website: Pensieve

Pensieve source code: hongzimao/pensieve: Neural Adaptive Video Streaming with Pensieve (SIGCOMM '17)

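Blog series index:

- Introduction to the DASH Standard & ABR Algorithms
- ABR Logic in dash.js
- Reading Notes on a Survey of ABR Algorithm Research
- Classic ABR Algorithms: FESTIVE (CoNEXT '12) Paper Reading Notes
- Classic ABR Algorithms: BBA (SIGCOMM '14) Design and Code Implementation
- Classic ABR Algorithms: BOLA (INFOCOM '16) Core Algorithm Logic
- Classic ABR Algorithms: BOLA (INFOCOM '16) dash.js Implementation
- Classic ABR Algorithms: Pensieve (SIGCOMM '17) Principles and Training Guide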

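How Pensieve Works

[Note: The A3C algorithm used by Pensieve is an Actor-Critic (AC) reinforcement learning method. For background, see: Study Notes on the Mathematical Foundations of Reinforcement Learning - Actor-Critic. It is not repeated here.]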

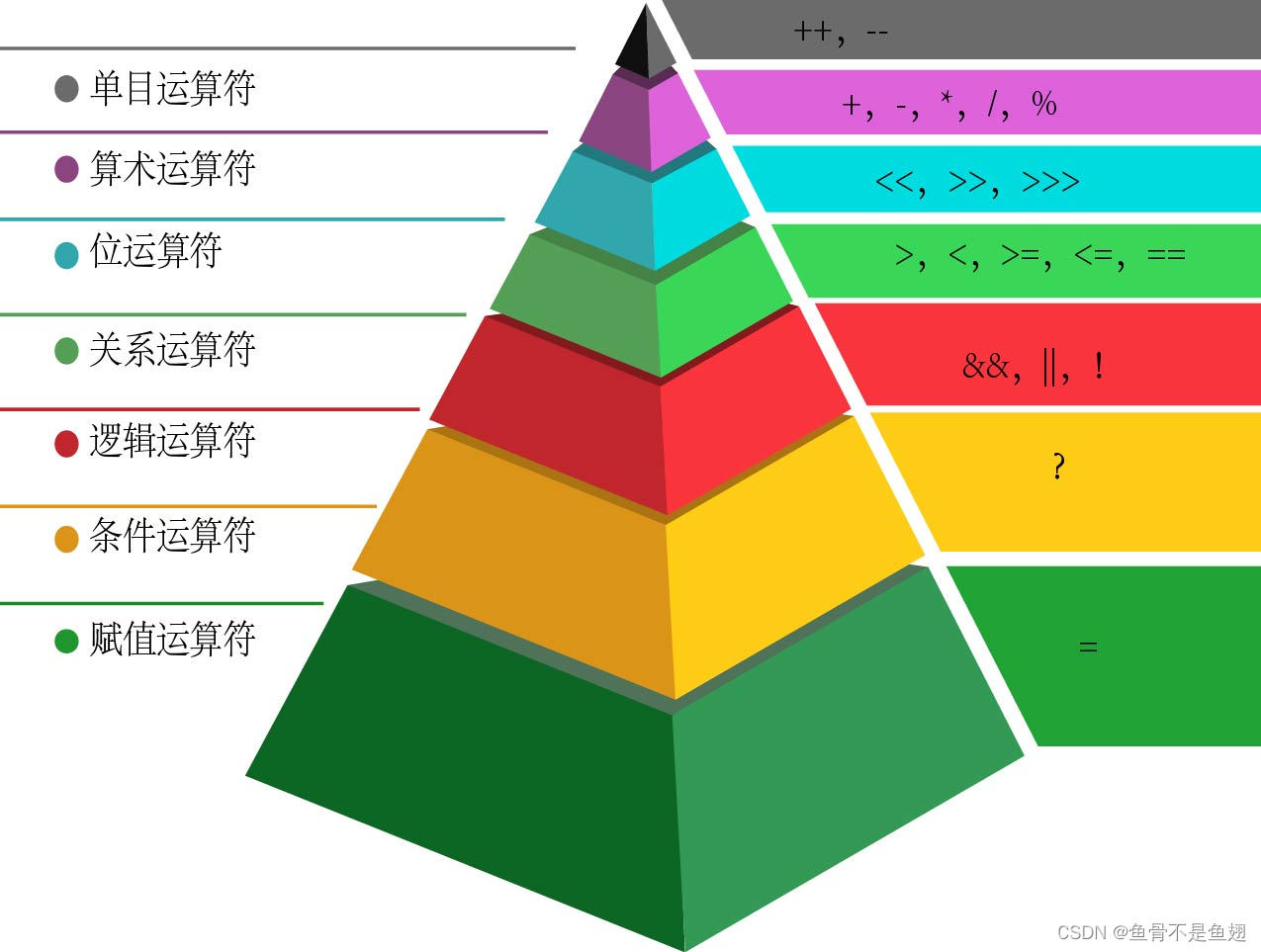

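Actor-Critic principle: it still follows the idea of policy evaluation followed by policy improvement. Its behavior policy is the same as its target policy, so it is an on-policy method.

- Critic network: responsible for policy evaluation; it estimates the state values of the current Actor policy
- Actor network: responsible for policy improvement; it serves as both the behavior policy and the target policy, interacts with the environment to collect experience samples, and keeps updating and improving the target policy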

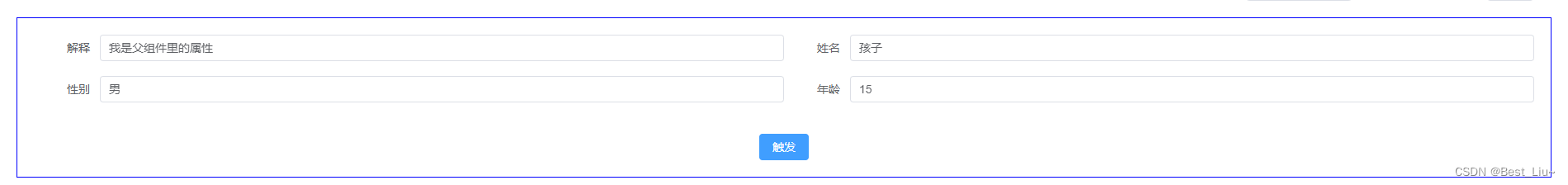

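Pensieve uses two neural networks, one for the Actor and one for the Critic. Their input features and network architecture are shown in the figure below.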

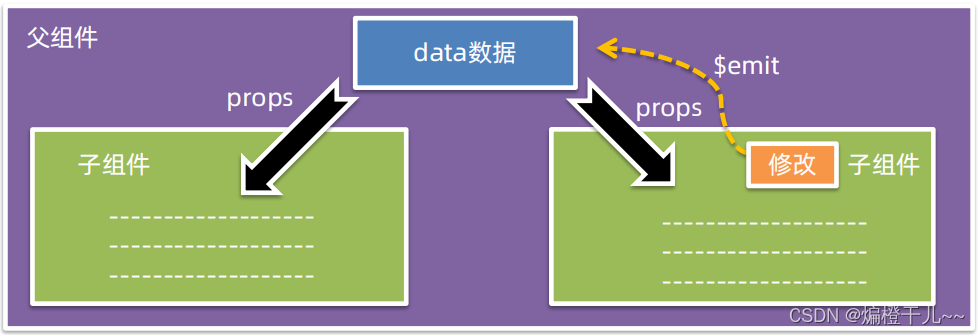

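A3C builds on the classic advantage actor-critic algorithm (A2C); the main difference is that it supports asynchronous training. In particular, Pensieve's A3C trains entirely on CPUs: 16 agents collect experience in parallel, and a central agent aggregates their samples and computes the updates to the neural network parameters. A3C also refines the network structure and the policy evaluation, and adds an extra entropy regularization term to the Actor's training objective.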
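The collect-in-parallel, update-centrally pattern can be illustrated with a toy sketch. It uses Python threads and `queue.Queue` rather than Pensieve's own setup (its `multi_agent.py` runs the agents as separate processes and trains with TensorFlow), and the "experience" is just noisy samples of a hypothetical target value, so `TARGET`, `LEARNING_RATE` and the quadratic loss are assumptions made for illustration only. What it does mirror is the loop structure: the central agent broadcasts parameters, every agent returns a batch computed with those parameters, and the central agent turns each batch into a gradient and applies the gradients one after another.

```python
import queue
import threading

import numpy as np

NUM_AGENTS = 16        # Pensieve also uses 16 experience-collecting agents
LEARNING_RATE = 0.1    # hypothetical step size for this toy problem
TARGET = 3.0           # hypothetical value the toy "policy" parameter should match


def agent(agent_id, param_queue, exp_queue, num_rounds):
    """Toy worker: receive the latest parameters, collect experience, report back."""
    rng = np.random.default_rng(agent_id)
    for _ in range(num_rounds):
        theta = param_queue.get()                       # sync with the central agent
        observations = rng.normal(TARGET, 1.0, size=8)  # stand-in for environment samples
        exp_queue.put(observations - theta)             # experience computed with current params


def central_agent(num_rounds=50):
    theta = 0.0
    param_queues = [queue.Queue(maxsize=1) for _ in range(NUM_AGENTS)]
    exp_queues = [queue.Queue(maxsize=1) for _ in range(NUM_AGENTS)]
    workers = [
        threading.Thread(target=agent, args=(i, param_queues[i], exp_queues[i], num_rounds))
        for i in range(NUM_AGENTS)
    ]
    for w in workers:
        w.start()

    for _ in range(num_rounds):
        for q in param_queues:          # broadcast the current parameters
            q.put(theta)
        gradients = []
        for q in exp_queues:            # gather one batch from every agent
            residuals = q.get()
            # gradient of mean((theta - obs)^2) w.r.t. theta is -2 * mean(obs - theta)
            gradients.append(-2.0 * residuals.mean())
        for g in gradients:             # apply each agent's gradient in turn
            theta -= LEARNING_RATE * g / NUM_AGENTS

    for w in workers:
        w.join()
    return theta


if __name__ == "__main__":
    print(central_agent())  # should end up close to TARGET
```

Because all gradients in a round are computed with the same parameters, applying them sequentially (each scaled by `1 / NUM_AGENTS`) is equivalent here to a single step on their average; that equivalence holds for this plain gradient-descent toy, not necessarily for an adaptive optimizer.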

Pensieve's parameter update rules:

- Critic network: $\theta_\upsilon \leftarrow \theta_\upsilon - \alpha' \sum_t \nabla_{\theta_\upsilon} \left(r_t + \gamma V^{\pi_\theta}(s_{t+1}; \theta_\upsilon) - V^{\pi_\theta}(s_t; \theta_\upsilon)\right)^2$

- Actor network:

  $$\theta \leftarrow \theta + \alpha \sum_t \left[\nabla_\theta \log \pi_\theta(s_t, a_t)\, A(s_t, a_t) + \beta \nabla_\theta H\left(\pi_\theta(\cdot \mid s_t)\right)\right]$$

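  - The update follows the gradient of the expected cumulative reward with respect to the policy parameters $\theta$
  - The advantage $A(s_t, a_t)$ is computed from the Critic network's output (the estimated value function)
  - $\beta \nabla_\theta H(\pi_\theta(\cdot \mid s_t))$ is the entropy regularization term (introduced by A3C)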
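As a numeric illustration of the two update rules, the sketch below evaluates, for a tiny made-up batch, the quantity the Critic update descends (the sum of squared TD errors) and the quantity the Actor update ascends (advantage-weighted log-probabilities plus $\beta$ times the policy entropy). The batch values, the 3-action policy and `BETA = 0.5` are hypothetical; a real implementation differentiates these quantities with respect to the network parameters (e.g., with TensorFlow) instead of only evaluating them.

```python
import numpy as np

GAMMA = 0.99
BETA = 0.5  # hypothetical entropy weight

# Hypothetical batch of T = 3 steps
rewards = np.array([1.0, 0.5, -0.2])
v_s = np.array([2.0, 1.5, 1.0])        # Critic estimates V(s_t)
v_next = np.array([1.5, 1.0, 0.0])     # Critic estimates V(s_{t+1}); 0 for a terminal state
pi = np.array([[0.7, 0.2, 0.1],        # Actor output pi(.|s_t) over 3 bitrates
               [0.3, 0.4, 0.3],
               [0.1, 0.1, 0.8]])
actions = np.array([0, 1, 2])          # actions a_t actually taken

# One-step TD error, used both as the Critic's error and as the advantage estimate
td_error = rewards + GAMMA * v_next - v_s
critic_loss = np.sum(td_error ** 2)    # quantity the Critic update descends

# Actor objective; the advantage is treated as a constant (no gradient flows into the Critic)
log_pi_a = np.log(pi[np.arange(len(actions)), actions])
entropy = -np.sum(pi * np.log(pi), axis=1)      # H(pi(.|s_t)) for each step
actor_objective = np.sum(log_pi_a * td_error + BETA * entropy)

print(td_error, critic_loss, actor_objective)
```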

The advantage function measures how much better taking a specific action $a$ in state $s$ is than the expected (average) return of the policy $\pi_\theta$. Here it is estimated with the one-step temporal-difference (TD) error:

$$A^{\pi_\theta}(s_t, a_t) = r_t + \gamma V^{\pi_\theta}(s_{t+1}; \theta_\upsilon) - V^{\pi_\theta}(s_t; \theta_\upsilon)$$

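- A positive advantage means that action $a$ is better than what the current policy achieves on average, so the model should increase the probability of choosing it in this state
- In that case, the policy parameters $\theta$ should move in the direction that increases the probability that $\pi_\theta$ selects $a$ (i.e., along the gradient)
- Advantage $A^{\pi_\theta}(s, a)$ vs. value function $V^{\pi_\theta}(s)$: the advantage is obtained from the learned estimate of the value function, $V^{\pi_\theta}(s; \theta_\upsilon)$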
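The sign argument can be checked numerically. For a softmax policy over logits $z$ (a hypothetical stand-in for the Actor network's last layer), $\nabla_z \log \pi(a) = \mathbf{1}_a - \pi$, so one gradient-ascent step on $\log \pi(a) \cdot A$ raises $\pi(a)$ when $A > 0$ and lowers it when $A < 0$. The logits, learning rate and advantage values below are made up.

```python
import numpy as np


def softmax(z):
    e = np.exp(z - z.max())   # subtract the max for numerical stability
    return e / e.sum()


def policy_gradient_step(logits, action, advantage, lr=0.1):
    """One ascent step on log pi(action) * advantage with respect to the logits."""
    pi = softmax(logits)
    grad_log_pi = -pi                 # new array; pi itself is not modified
    grad_log_pi[action] += 1.0        # d log pi(a) / dz = onehot(a) - pi
    return logits + lr * advantage * grad_log_pi


logits = np.array([0.5, 0.2, -0.3])
a = 1
p_before = softmax(logits)[a]
p_after_pos = softmax(policy_gradient_step(logits, a, advantage=+2.0))[a]
p_after_neg = softmax(policy_gradient_step(logits, a, advantage=-2.0))[a]
print(p_before, p_after_pos, p_after_neg)   # p_after_pos > p_before > p_after_neg
```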

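Pensieve's hyperparameters:

- $k = 8$: number of past samples in the history
- $\gamma = 0.99$: discount factor, meaning the current action is influenced by rewards over roughly the next 100 steps (effective horizon $1/(1-\gamma) = 100$)
- $\alpha = 10^{-4}$: Actor network learning rate
- $\alpha' = 10^{-3}$: Critic network learning rate
- $\beta$: entropy factor, decayed from $1$ to $0.1$ over $10^5$ iterations [important]
- The 1D CNN uses $128$ filters (size 4, stride 1)
- Training set share: 80%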
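The "100 steps" figure is the usual rule of thumb that a discount factor $\gamma$ gives an effective horizon of about $1/(1-\gamma)$ steps. A quick check of that arithmetic, and of how much weight rewards further in the future still receive:

```python
GAMMA = 0.99

effective_horizon = 1.0 / (1.0 - GAMMA)   # about 100 steps
weight_after_100 = GAMMA ** 100            # about 0.366: a reward 100 steps out still counts ~37%
weight_after_500 = GAMMA ** 500            # about 0.0066: essentially negligible

print(effective_horizon, weight_after_100, weight_after_500)
```

So "100 steps" is a soft horizon rather than a hard cutoff: rewards decay geometrically, and by a few hundred steps their contribution is negligible.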

Pensieve training time:

- Per algorithm: 50,000 iterations at about 300 ms each (16 agents updating the parameters in parallel)
- Total: about 4 hours
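The two figures are consistent with each other, as a one-line check shows:

```python
ITERATIONS = 50_000
SECONDS_PER_ITERATION = 0.3

total_hours = ITERATIONS * SECONDS_PER_ITERATION / 3600   # 15,000 s
print(f"{total_hours:.2f} h")
```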

*Pensieve Retraining References

Pensieve's runtime environment (see: https://github.com/hongzimao/pensieve/issues/12#issuecomment-345060132):

Ubuntu 16.04, Tensorflow v1.1.0, TFLearn v0.3.1 and Selenium v2.39.0

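Several papers have reproduced Pensieve and compared against it, so they can serve as references for retraining Pensieve.

If you are not interested in these details, feel free to skip this part. The main conclusion is that, when retraining Pensieve, you should implement the dynamic entropy weight decay described in the original paper; see the next section for details.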

Oboe [SIGCOMM '18]

Paper: Oboe: auto-tuning video ABR algorithms to network conditions

Pensieve Re-Training and Validation. Before evaluating Pensieve on our dataset, we retrain Pensieve using the source code on the trace dataset provided by the Pensieve authors [11]. This helps us validate our retraining given that deep reinforcement learning results are not easy to reproduce [29].

We experimented with five different initial entropy weights in the author suggested range of 1 to 5, and linearly reduced their values in a gradual fashion using plateaus, with five different decrease rates until the entropy weight eventually reached 0.1. This rate scheduler follows best-practice [55]. From the trained set of models, we then selected the best performing model (an initial entropy weight of 1 reduced every 800 iterations until it reaches 0.1 over 100K iterations) and compared its performance to the pre-trained Pensieve model provided by the authors. Figure 10 shows CDFs of QoE-lin for the pretrained (Original) model and the model trained by us (Retrained). The performance distribution of the two models are almost identical over the test traces provided by the Pensieve authors, thereby validating our retraining methodology.

Having validated our retraining methodology, we trained Pensieve on our dataset with the same complete strategy described above. For this, we pick 1600 traces randomly from our dataset with average throughput in the 0-6 Mbps range. The number of training traces, the number of iterations per trace, and the range of throughput are similar to [39]. We then compare Pensieve and MPC+Oboe over a separate test set of traces also in the range of 0-6 Mbps (§4.2).

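Key points:

- Several entropy-factor settings were tried, but the best model in the end used the parameters from the original Pensieve paper (initial weight 1, decayed to 0.1 over 100K iterations)
- Retraining was validated on Pensieve's original dataset
- On the same test set, the retrained model performed almost identically to the original Pensieve model
- The number of training traces and iterations used for retraining were similar to those of the original Pensieve model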

Comyco [MM '19]

Paper: Comyco: Quality-Aware Adaptive Video Streaming via Imitation Learning

Pensieve Re-training. We retrain Pensieve via our datasets (§6.1), NN architectures (§4.1) and QoE metrics (§5.1). Followed by recent work [6], our experiments use different entropy weights in the range of 5.0 to 1.0 and dynamically decrease the weight every 1000 iterations. Training time takes about 8 hours and we show that Pensieve outperforms RobustMPC, with an overall average QoE improvement of 3.5% across all sessions. Note that same experiments can improve the $QoE_{lin}$ [51] by 10.5%. It indicates that $QoE_v$ cannot be easily improved because the metric reflects the real world MOS score.

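Key points:

- Like Oboe, different entropy-factor values were tried
- However, only Pensieve's A3C training algorithm was reused; both the neural network architecture and the QoE metric were changed
- Training took longer than for the original Pensieve model (about 8 hours vs. about 4)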

Fugu [NSDI '20]

Paper: Learning in situ: a randomized experiment in video streaming

Deploying Pensieve for live streaming. We use the released Pensieve code (written in Python with TensorFlow) directly. When a client is assigned to Pensieve, Puffer spawns a Python subprocess running Pensieve’s multi-video model.

We contacted the Pensieve authors to request advice on deploying the algorithm in a live, multi-video, real-world setting. The authors recommended that we use a longer-running training and that we tune the entropy parameter when training the multi-video neural network. We wrote an automated tool to train 6 different models with various entropy reduction schemes. We tested these manually over a few real networks, then selected the model with the best performance. We modified the Pensieve code (and confirmed with the authors) so that it does not expect the video to end before a user’s session completes. We were not able to modify Pensieve to optimize SSIM; it considers the average bitrate of each Puffer stream. We adjusted the video chunk length to 2.002 seconds and the buffer threshold to 15 seconds to reflect our parameters. For training data, we used the authors’ provided script to generate 1000 simulated videos as training videos, and a combination of the FCC and Norway traces linked to in the Pensieve codebase as training traces.

…

This dataset shift could have harmed the performance of Pensieve, which was trained on the FCC traces. In response to reviewer feedback, we trained a version of Pensieve on throughput traces randomly sampled from real Puffer video sessions.

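Key points:

- Tuned the entropy factor and trained several different models
- Adjusted the video chunk length and the buffer threshold
- Trained on Pensieve's original network traces (FCC, Norway) (*later, Pensieve was also retrained on Puffer's traces, which improved its performance)
- Kept Pensieve's original QoE objective (bitrate) rather than the SSIM used by Fugu
- When retraining, used the multi-video support described in the Pensieve paper (the multi-video model)
- Modified parts of the Pensieve code to deploy it on Puffer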

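A3C Entropy Weight Decay

Approach

In the original Pensieve code, the entropy weight is fixed (`ENTROPY_WEIGHT = 0.5`) instead of decaying gradually from 1 to 0.1 as described in the paper. The relevant code is: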

```python
ENTROPY_WEIGHT = 0.5

...

class ActorNetwork(object):
    """
    Input to the network is the state, output is the distribution
    of all actions.
    """
    def __init__(self, sess, state_dim, action_dim, learning_rate):
        ...

        # Compute the objective (log action_vector and entropy)
        self.obj = tf.reduce_sum(tf.multiply(
                       tf.log(tf.reduce_sum(tf.multiply(self.out, self.acts),
                                            reduction_indices=1, keep_dims=True)),
                       -self.act_grad_weights)) \
                   + ENTROPY_WEIGHT * tf.reduce_sum(tf.multiply(self.out,
                                                               tf.log(self.out + ENTROPY_EPS)))
```
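Note the sign convention in `self.obj`: the first term is the *negated* advantage-weighted log-probability, and `tf.reduce_sum(self.out * tf.log(self.out))` is the *negative* entropy. Assuming, as in the Pensieve repo, that the gradients of `obj` are applied by a standard (descending) TensorFlow optimizer, minimizing `obj` is the same as maximizing $\sum_t \log \pi_\theta(s_t, a_t) A(s_t, a_t) + \beta H$, i.e., the Actor update rule given earlier. A NumPy check with made-up values (`out`, `acts`, `adv` and `beta` are hypothetical stand-ins for the tensors in the excerpt; `ENTROPY_EPS` is omitted):

```python
import numpy as np

beta = 0.5                                   # plays the role of ENTROPY_WEIGHT
out = np.array([[0.6, 0.3, 0.1],             # policy output pi(.|s_t), one row per step
                [0.2, 0.5, 0.3]])
acts = np.array([[1, 0, 0],                  # one-hot encoding of the chosen actions
                 [0, 0, 1]])
adv = np.array([[0.8], [-0.4]])              # advantages (act_grad_weights)

# Same expression as self.obj, written with NumPy
log_pi_a = np.log(np.sum(out * acts, axis=1, keepdims=True))
obj = np.sum(log_pi_a * -adv) + beta * np.sum(out * np.log(out))

# The quantity the Actor update rule ascends
entropy = -np.sum(out * np.log(out))
objective = np.sum(log_pi_a * adv) + beta * entropy

print(obj, -objective)   # identical: minimizing obj maximizes objective
```

This is also why a larger `ENTROPY_WEIGHT` pushes the policy toward higher entropy (more exploration): it scales a term that rewards spreading probability across bitrates.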

The author explicitly recommends training the model with a decaying entropy weight on GitHub; see: https://github.com/hongzimao/pensieve/tree/master/sim

A general strategy to train our system is to first set `ENTROPY_WEIGHT` in `a3c.py` to be a large value (in the scale of 1 to 5) in the beginning, then gradually reduce the value to 0.1 (after at least 100,000 iterations).

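Similar questions have been raised in the GitHub issues; see:

- Why the result is not better than MPC? · Issue #11 · hongzimao/pensieve
  - A very valuable discussion, covering details to watch for when reproducing Pensieve as well as some reproduction results
- Changing ENTROPY WEIGHT with training · Issue #6 · hongzimao/pensieve
  - *This question was asked by Zahaib Akhtar, first author of Oboe [SIGCOMM '18]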

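The author explains that the automated entropy weight decay was not released for two reasons: first, it is fairly simple to implement; second, they want people reproducing the work to observe how model performance improves step by step as the entropy weight decreases.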

[Author] Although we have our internal implementation (we didn’t post it because (1) it’s fairly easy to implement and (2) more importantly we intentionally want others to observe this effect), we would appreciate a lot if someone can reproduce and improve our implementation. Thanks!

Some excerpts from the discussion about automating entropy weight decay:

[Author] Did you load the trained model of previous run when you decay the factor? We (as well as others who reproduced it; some posts on issues already) didn’t do anything fancy, just plain decay once or twice should work.

[Questioner] I should stop the program, load the previous trained model, then re-run the python script. I’ve got good result by this way. But at first, I just set ENTROPY_WEIGHT as a member variable of Class actor, and changed its value during the `while` loop.

[Author] I think any reasonable decay function should work (e.g., linear, step function, etc.). … As for automatically decaying the exploration factor, notice that `ENTROPY_WEIGHT` sets a constant in tensorflow computation graph (e.g., https://github.com/hongzimao/pensieve/blob/master/sim/a3c.py#L47-L52). To make it tunable during execution, you need to specify a tensorflow `placeholder` and set its value each time.

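Key points:

- The most direct approach is to control training manually: when training reaches a certain number of iterations, stop it and save the model, manually change the `ENTROPY_WEIGHT` value in `a3c.py`, and continue training from the previous model
- Automating the decay requires reserving a `placeholder` in the Actor class in `a3c.py` to replace the fixed `ENTROPY_WEIGHT` as the entropy weight variable, and feeding its concrete value (which changes with the iteration count) from the `while` loop in `multi_agent.py`
- Any form of decay (e.g., linear) works; the author says decaying once or twice is enough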

The questioner also shared an entropy weight decay schedule (starting from 5) that worked well:

| iteration | ENTROPY_WEIGHT |
|---|---|
| 0-19999 | 5 |
| 20000-39999 | 4 |
| 40000-59999 | 3 |
| 60000-69999 | 2 |
| 70000-79999 | 1 |
| 80000-89999 | 0.5 |
| 90000-100000 | 0.1 |
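The table is a piecewise-constant schedule, so it can be expressed compactly as a lookup on stage boundaries. This is a sketch; the function and constant names are hypothetical, and iterations beyond 100,000 simply keep the final value 0.1.

```python
import bisect

# Iteration at which each stage of the table starts, and the weight used in that stage
STAGE_STARTS = [0, 20000, 40000, 60000, 70000, 80000, 90000]
STAGE_WEIGHTS = [5, 4, 3, 2, 1, 0.5, 0.1]


def staged_entropy_weight(epoch):
    """Return ENTROPY_WEIGHT for the given iteration according to the staged schedule."""
    stage = bisect.bisect_right(STAGE_STARTS, epoch) - 1
    return STAGE_WEIGHTS[stage]


for epoch in (0, 20000, 65000, 95000):
    print(epoch, staged_entropy_weight(epoch))
```

Keeping the boundaries and values in two lists makes it easy to try other schedules without rewriting an `if`/`elif` chain.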

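Following the original Pensieve paper and the Oboe paper, I chose 1 as the initial value and 0.1 as the final value reached after 100,000 iterations. Alternatively, you can train several models with initial values between 1 and 5 and compare their performance.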

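Implementation

For the full implementation, see https://github.com/GreenLv/pensieve_retrain. Besides entropy weight decay, training Pensieve also requires attention to dataset splitting, normalization of features and rewards, and other issues; see the README of this GitHub repository for details.

Core change (1): in `sim/a3c.py`, replace the fixed entropy weight `ENTROPY_WEIGHT` with a variable `entropy_weight` (a TensorFlow placeholder).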

```python
class ActorNetwork(object):
    """
    Input to the network is the state, output is the distribution
    of all actions.
    """
    def __init__(self, sess, state_dim, action_dim, learning_rate):
        self.sess = sess
        self.s_dim = state_dim
        self.a_dim = action_dim
        self.lr_rate = learning_rate

        ...

        # This gradient will be provided by the critic network
        self.act_grad_weights = tf.placeholder(tf.float32, [None, 1])

        # dynamic entropy weight
        self.entropy_weight = tf.placeholder(tf.float32)

        # Compute the objective (log action_vector and entropy)
        self.obj = tf.reduce_sum(tf.multiply(
                       tf.log(tf.reduce_sum(tf.multiply(self.out, self.acts),
                                            reduction_indices=1, keep_dims=True)),
                       -self.act_grad_weights)) \
                   + self.entropy_weight * tf.reduce_sum(tf.multiply(self.out,
                                                                    tf.log(self.out + ENTROPY_EPS)))

        ...
```

Core change (2): in `sim/a3c.py`, change the interfaces of `compute_gradients` and `ActorNetwork.get_gradients` so that the entropy weight is passed in as an argument.

```python
def compute_gradients(s_batch, a_batch, r_batch, terminal, actor, critic, entropy_weight):
    ...
    actor_gradients = actor.get_gradients(s_batch, a_batch, td_batch, entropy_weight)
    critic_gradients = critic.get_gradients(s_batch, R_batch)

    return actor_gradients, critic_gradients, td_batch


class ActorNetwork(object):
    ...

    def get_gradients(self, inputs, acts, act_grad_weights, entropy_weight):
        return self.sess.run(self.actor_gradients, feed_dict={
            self.inputs: inputs,
            self.acts: acts,
            self.act_grad_weights: act_grad_weights,
            self.entropy_weight: entropy_weight
        })
```

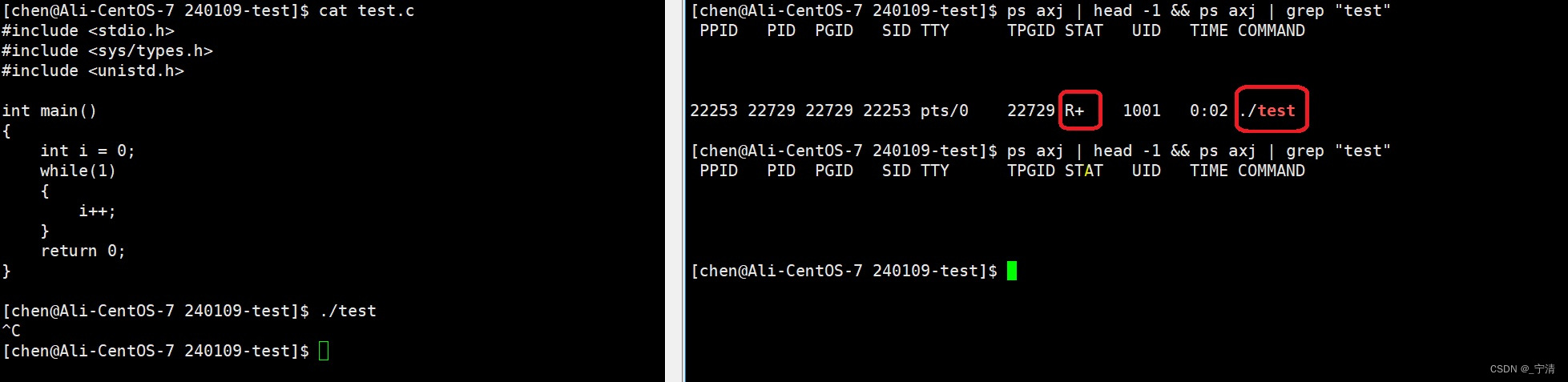

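Core change (3): in `sim/multi_agent.py`, implement the logic that decays the entropy weight over iterations.

Here the initial value is 1, and the weight is reduced by 0.09 every 10,000 iterations until it reaches 0.1. Alternatively, you can start from 5 and decay in stages as discussed in the GitHub issue.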

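```python
def calculate_entropy_weight(epoch):
    # entropy weight decay with iteration

    # Alternative (kept for reference): staged decay starting from 5,
    # following the schedule discussed in the GitHub issue
    # if epoch < 20000:
    #     entropy_weight = 5
    # elif epoch < 40000:
    #     entropy_weight = 4
    # elif epoch < 60000:
    #     entropy_weight = 3
    # elif epoch < 70000:
    #     entropy_weight = 2
    # elif epoch < 80000:
    #     entropy_weight = 1
    # elif epoch < 90000:
    #     entropy_weight = 0.5
    # else:
    #     entropy_weight = 0.1

    # initial entropy weight is 1, then decay to 0.1 in 100000 iterations:
    # subtract 0.09 once every 10000 iterations. Floor division (//) keeps
    # this a step schedule under both Python 2 and Python 3 (with `/`,
    # Python 3 true division would make the decay continuous instead).
    entropy_weight = 1 - (epoch // 10000) * 0.09
    if entropy_weight < 0.1:
        entropy_weight = 0.1

    return entropy_weight
```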

Core change (4): in `sim/multi_agent.py`, compute the entropy weight from the current iteration count and pass it to `a3c` for the gradient computation.

```python
def central_agent(net_params_queues, exp_queues):
    ...
    while True:
        ...
        # assemble experiences from the agents
        actor_gradient_batch = []
        critic_gradient_batch = []

        # decay entropy_weight with iteration (from 1 to 0.1)
        entropy_weight = calculate_entropy_weight(epoch)

        for i in xrange(NUM_AGENTS):
            s_batch, a_batch, r_batch, terminal, info = exp_queues[i].get()

            actor_gradient, critic_gradient, td_batch = \
                a3c.compute_gradients(
                    s_batch=np.stack(s_batch, axis=0),
                    a_batch=np.vstack(a_batch),
                    r_batch=np.vstack(r_batch),
                    terminal=terminal, actor=actor, critic=critic,
                    entropy_weight=entropy_weight)
            ...
```
