Flink Configuration and a Simple Test

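Previous post: https://lizhiyong.blog.csdn.net/article/details/123560865

After replacing the Flink bundled with USDP 2.0 with Flink 1.14, I had not yet updated the configuration. Running with the untouched configuration causes problems, so this post covers the configuration changes and a simple test of the result.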

USDP default configuration

################################################################################

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional information

# regarding copyright ownership. The ASF licenses this file

# to you under the Apache License, Version 2.0 (the

# "License"); you may not use this file except in compliance

# with the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

################################################################################

#==============================================================================

# Common

#==============================================================================

# The external address of the host on which the JobManager runs and can be

# reached by the TaskManagers and any clients which want to connect. This setting

# is only used in Standalone mode and may be overwritten on the JobManager side

# by specifying the --host <hostname> parameter of the bin/jobmanager.sh executable.

# In high availability mode, if you use the bin/start-cluster.sh script and setup

# the conf/masters file, this will be taken care of automatically. Yarn/Mesos

# automatically configure the host name based on the hostname of the node where the

# JobManager runs.

jobmanager.rpc.address: localhost

# The RPC port where the JobManager is reachable.

jobmanager.rpc.port: 6123

# The total process memory size for the JobManager.

#

# Note this accounts for all memory usage within the JobManager process, including JVM metaspace and other overhead.

jobmanager.memory.process.size: 1600m

# The total process memory size for the TaskManager.

#

# Note this accounts for all memory usage within the TaskManager process, including JVM metaspace and other overhead.

taskmanager.memory.process.size: 1728m

# To exclude JVM metaspace and overhead, please, use total Flink memory size instead of 'taskmanager.memory.process.size'.

# It is not recommended to set both 'taskmanager.memory.process.size' and Flink memory.

#

# taskmanager.memory.flink.size: 1280m

# The number of task slots that each TaskManager offers. Each slot runs one parallel pipeline.

taskmanager.numberOfTaskSlots: 1

# The parallelism used for programs that did not specify and other parallelism.

parallelism.default: 1

# The default file system scheme and authority.

#

# By default file paths without scheme are interpreted relative to the local

# root file system 'file:///'. Use this to override the default and interpret

# relative paths relative to a different file system,

# for example 'hdfs://mynamenode:12345'

#

# fs.default-scheme

#==============================================================================

# High Availability

#==============================================================================

# The high-availability mode. Possible options are 'NONE' or 'zookeeper'.

#

# high-availability: zookeeper

# The path where metadata for master recovery is persisted. While ZooKeeper stores

# the small ground truth for checkpoint and leader election, this location stores

# the larger objects, like persisted dataflow graphs.

#

# Must be a durable file system that is accessible from all nodes

# (like HDFS, S3, Ceph, nfs, ...)

#

# high-availability.storageDir: hdfs:///flink/ha/

# The list of ZooKeeper quorum peers that coordinate the high-availability

# setup. This must be a list of the form:

# "host1:clientPort,host2:clientPort,..." (default clientPort: 2181)

#

# high-availability.zookeeper.quorum: localhost:2181

# ACL options are based on https://zookeeper.apache.org/doc/r3.1.2/zookeeperProgrammers.html#sc_BuiltinACLSchemes

# It can be either "creator" (ZOO_CREATE_ALL_ACL) or "open" (ZOO_OPEN_ACL_UNSAFE)

# The default value is "open" and it can be changed to "creator" if ZK security is enabled

#

# high-availability.zookeeper.client.acl: open

#==============================================================================

# Fault tolerance and checkpointing

#==============================================================================

# The backend that will be used to store operator state checkpoints if

# checkpointing is enabled.

#

# Supported backends are 'jobmanager', 'filesystem', 'rocksdb', or the

# <class-name-of-factory>.

#

# state.backend: filesystem

# Directory for checkpoints filesystem, when using any of the default bundled

# state backends.

#

# state.checkpoints.dir: hdfs://namenode-host:port/flink-checkpoints

# Default target directory for savepoints, optional.

#

# state.savepoints.dir: hdfs://namenode-host:port/flink-savepoints

# Flag to enable/disable incremental checkpoints for backends that

# support incremental checkpoints (like the RocksDB state backend).

#

# state.backend.incremental: false

# The failover strategy, i.e., how the job computation recovers from task failures.

# Only restart tasks that may have been affected by the task failure, which typically includes

# downstream tasks and potentially upstream tasks if their produced data is no longer available for consumption.

jobmanager.execution.failover-strategy: region

#==============================================================================

# Rest & web frontend

#==============================================================================

# The port to which the REST client connects to. If rest.bind-port has

# not been specified, then the server will bind to this port as well.

#

#rest.port: 8081

# The address to which the REST client will connect to

#

#rest.address: 0.0.0.0

# Port range for the REST and web server to bind to.

#

#rest.bind-port: 8080-8090

# The address that the REST & web server binds to

#

#rest.bind-address: 0.0.0.0

# Flag to specify whether job submission is enabled from the web-based

# runtime monitor. Uncomment to disable.

#web.submit.enable: false

#==============================================================================

# Advanced

#==============================================================================

# Override the directories for temporary files. If not specified, the

# system-specific Java temporary directory (java.io.tmpdir property) is taken.

#

# For framework setups on Yarn or Mesos, Flink will automatically pick up the

# containers' temp directories without any need for configuration.

#

# Add a delimited list for multiple directories, using the system directory

# delimiter (colon ':' on unix) or a comma, e.g.:

# /data1/tmp:/data2/tmp:/data3/tmp

#

# Note: Each directory entry is read from and written to by a different I/O

# thread. You can include the same directory multiple times in order to create

# multiple I/O threads against that directory. This is for example relevant for

# high-throughput RAIDs.

#

# io.tmp.dirs: /tmp

# The classloading resolve order. Possible values are 'child-first' (Flink's default)

# and 'parent-first' (Java's default).

#

# Child first classloading allows users to use different dependency/library

# versions in their application than those in the classpath. Switching back

# to 'parent-first' may help with debugging dependency issues.

#

# classloader.resolve-order: child-first

# The amount of memory going to the network stack. These numbers usually need

# no tuning. Adjusting them may be necessary in case of an "Insufficient number

# of network buffers" error. The default min is 64MB, the default max is 1GB.

#

# taskmanager.memory.network.fraction: 0.1

# taskmanager.memory.network.min: 64mb

# taskmanager.memory.network.max: 1gb

#==============================================================================

# Flink Cluster Security Configuration

#==============================================================================

# Kerberos authentication for various components - Hadoop, ZooKeeper, and connectors -

# may be enabled in four steps:

# 1. configure the local krb5.conf file

# 2. provide Kerberos credentials (either a keytab or a ticket cache w/ kinit)

# 3. make the credentials available to various JAAS login contexts

# 4. configure the connector to use JAAS/SASL

# The below configure how Kerberos credentials are provided. A keytab will be used instead of

# a ticket cache if the keytab path and principal are set.

# security.kerberos.login.use-ticket-cache: true

# security.kerberos.login.keytab: /path/to/kerberos/keytab

# security.kerberos.login.principal: flink-user

# The configuration below defines which JAAS login contexts

# security.kerberos.login.contexts: Client,KafkaClient

#==============================================================================

# ZK Security Configuration

#==============================================================================

# Below configurations are applicable if ZK ensemble is configured for security

# Override below configuration to provide custom ZK service name if configured

# zookeeper.sasl.service-name: zookeeper

# The configuration below must match one of the values set in "security.kerberos.login.contexts"

# zookeeper.sasl.login-context-name: Client

#==============================================================================

# HistoryServer

#==============================================================================

# The HistoryServer is started and stopped via bin/historyserver.sh (start|stop)

# Directory to upload completed jobs to. Add this directory to the list of

# monitored directories of the HistoryServer as well (see below).

jobmanager.archive.fs.dir: hdfs://zhiyong-1/zhiyong-1/flink-completed-jobs/

# The address under which the web-based HistoryServer listens.

# historyserver.web.address: zhiyong3

# IP address the History Server binds to; 0.0.0.0 allows access from any IP

historyserver.web.address: 0.0.0.0

# Interval in milliseconds at which the History Server scans the archive directory

historyserver.archive.fs.refresh-interval: 10000

# The port under which the web-based HistoryServer listens, default 8082.

historyserver.web.port: 8082

# Comma separated list of directories to monitor for completed jobs.

historyserver.archive.fs.dir: hdfs://zhiyong-1/zhiyong-1/flink-completed-jobs/

# Interval in milliseconds for refreshing the monitored directories, default 10000.

historyserver.archive.fs.refresh-interval: 10000

historyserver.web.tmpdir: /data/udp/2.0.0.0/flink

env.java.opts: -Dlog4j2.formatMsgNoLookups=true

Enabling HA

Editing the YAML

#==============================================================================

# Common

#==============================================================================

# The external address of the host on which the JobManager runs and can be

# reached by the TaskManagers and any clients which want to connect. This setting

# is only used in Standalone mode and may be overwritten on the JobManager side

# by specifying the --host <hostname> parameter of the bin/jobmanager.sh executable.

# In high availability mode, if you use the bin/start-cluster.sh script and setup

# the conf/masters file, this will be taken care of automatically. Yarn/Mesos

# automatically configure the host name based on the hostname of the node where the

# JobManager runs.

jobmanager.rpc.address: zhiyong2

# The RPC port where the JobManager is reachable.

jobmanager.rpc.port: 6123

# The total process memory size for the JobManager.

#

# Note this accounts for all memory usage within the JobManager process, including JVM metaspace and other overhead.

jobmanager.memory.process.size: 1600m

# The total process memory size for the TaskManager.

#

# Note this accounts for all memory usage within the TaskManager process, including JVM metaspace and other overhead.

taskmanager.memory.process.size: 1728m

# To exclude JVM metaspace and overhead, please, use total Flink memory size instead of 'taskmanager.memory.process.size'.

# It is not recommended to set both 'taskmanager.memory.process.size' and Flink memory.

#

# taskmanager.memory.flink.size: 1280m

# The number of task slots that each TaskManager offers. Each slot runs one parallel pipeline.

taskmanager.numberOfTaskSlots: 1

# The parallelism used for programs that did not specify and other parallelism.

parallelism.default: 1

# The default file system scheme and authority.

#

# By default file paths without scheme are interpreted relative to the local

# root file system 'file:///'. Use this to override the default and interpret

# relative paths relative to a different file system,

# for example 'hdfs://mynamenode:12345'

#

# fs.default-scheme

#==============================================================================

# High Availability

#==============================================================================

# The high-availability mode. Possible options are 'NONE' or 'zookeeper'.

#

high-availability: zookeeper

# The path where metadata for master recovery is persisted. While ZooKeeper stores

# the small ground truth for checkpoint and leader election, this location stores

# the larger objects, like persisted dataflow graphs.

#

# Must be a durable file system that is accessible from all nodes

# (like HDFS, S3, Ceph, nfs, ...)

#

high-availability.storageDir: hdfs://zhiyong-1/flink/ha/

# The list of ZooKeeper quorum peers that coordinate the high-availability

# setup. This must be a list of the form:

# "host1:clientPort,host2:clientPort,..." (default clientPort: 2181)

#

high-availability.zookeeper.quorum: zhiyong2:2181,zhiyong3:2181,zhiyong4:2181

# ACL options are based on https://zookeeper.apache.org/doc/r3.1.2/zookeeperProgrammers.html#sc_BuiltinACLSchemes

# It can be either "creator" (ZOO_CREATE_ALL_ACL) or "open" (ZOO_OPEN_ACL_UNSAFE)

# The default value is "open" and it can be changed to "creator" if ZK security is enabled

#

# high-availability.zookeeper.client.acl: open

#==============================================================================

# Fault tolerance and checkpointing

#==============================================================================

# The backend that will be used to store operator state checkpoints if

# checkpointing is enabled.

#

# Supported backends are 'jobmanager', 'filesystem', 'rocksdb', or the

# <class-name-of-factory>.

#

state.backend: filesystem

# Directory for checkpoints filesystem, when using any of the default bundled

# state backends.

#

state.checkpoints.dir: hdfs://zhiyong-1/flink-checkpoints

# Default target directory for savepoints, optional.

#

state.savepoints.dir: hdfs://zhiyong-1/flink-savepoints

# Flag to enable/disable incremental checkpoints for backends that

# support incremental checkpoints (like the RocksDB state backend).

#

# state.backend.incremental: false

# The failover strategy, i.e., how the job computation recovers from task failures.

# Only restart tasks that may have been affected by the task failure, which typically includes

# downstream tasks and potentially upstream tasks if their produced data is no longer available for consumption.

jobmanager.execution.failover-strategy: region

#==============================================================================

# Rest & web frontend

#==============================================================================

# The port to which the REST client connects to. If rest.bind-port has

# not been specified, then the server will bind to this port as well.

#

#rest.port: 8081

# The address to which the REST client will connect to

#

#rest.address: 0.0.0.0

# Port range for the REST and web server to bind to.

#

#rest.bind-port: 8080-8090

# The address that the REST & web server binds to

#

#rest.bind-address: 0.0.0.0

# Flag to specify whether job submission is enabled from the web-based

# runtime monitor. Uncomment to disable.

web.submit.enable: true

#==============================================================================

# Advanced

#==============================================================================

# Override the directories for temporary files. If not specified, the

# system-specific Java temporary directory (java.io.tmpdir property) is taken.

#

# For framework setups on Yarn or Mesos, Flink will automatically pick up the

# containers' temp directories without any need for configuration.

#

# Add a delimited list for multiple directories, using the system directory

# delimiter (colon ':' on unix) or a comma, e.g.:

# /data1/tmp:/data2/tmp:/data3/tmp

#

# Note: Each directory entry is read from and written to by a different I/O

# thread. You can include the same directory multiple times in order to create

# multiple I/O threads against that directory. This is for example relevant for

# high-throughput RAIDs.

#

# io.tmp.dirs: /tmp

# The classloading resolve order. Possible values are 'child-first' (Flink's default)

# and 'parent-first' (Java's default).

#

# Child first classloading allows users to use different dependency/library

# versions in their application than those in the classpath. Switching back

# to 'parent-first' may help with debugging dependency issues.

#

# classloader.resolve-order: child-first

# The amount of memory going to the network stack. These numbers usually need

# no tuning. Adjusting them may be necessary in case of an "Insufficient number

# of network buffers" error. The default min is 64MB, the default max is 1GB.

#

# taskmanager.memory.network.fraction: 0.1

# taskmanager.memory.network.min: 64mb

# taskmanager.memory.network.max: 1gb

#==============================================================================

# Flink Cluster Security Configuration

#==============================================================================

# Kerberos authentication for various components - Hadoop, ZooKeeper, and connectors -

# may be enabled in four steps:

# 1. configure the local krb5.conf file

# 2. provide Kerberos credentials (either a keytab or a ticket cache w/ kinit)

# 3. make the credentials available to various JAAS login contexts

# 4. configure the connector to use JAAS/SASL

# The below configure how Kerberos credentials are provided. A keytab will be used instead of

# a ticket cache if the keytab path and principal are set.

# security.kerberos.login.use-ticket-cache: true

# security.kerberos.login.keytab: /path/to/kerberos/keytab

# security.kerberos.login.principal: flink-user

# The configuration below defines which JAAS login contexts

# security.kerberos.login.contexts: Client,KafkaClient

#==============================================================================

# ZK Security Configuration

#==============================================================================

# Below configurations are applicable if ZK ensemble is configured for security

# Override below configuration to provide custom ZK service name if configured

# zookeeper.sasl.service-name: zookeeper

# The configuration below must match one of the values set in "security.kerberos.login.contexts"

# zookeeper.sasl.login-context-name: Client

#==============================================================================

# HistoryServer

#==============================================================================

# The HistoryServer is started and stopped via bin/historyserver.sh (start|stop)

# Directory to upload completed jobs to. Add this directory to the list of

# monitored directories of the HistoryServer as well (see below).

jobmanager.archive.fs.dir: hdfs://zhiyong-1/zhiyong-1/flink-completed-jobs/

# The address under which the web-based HistoryServer listens.

# historyserver.web.address: zhiyong3

# IP address the History Server binds to; 0.0.0.0 allows access from any IP

historyserver.web.address: 0.0.0.0

# Interval in milliseconds at which the History Server scans the archive directory

historyserver.archive.fs.refresh-interval: 10000

# The port under which the web-based HistoryServer listens, default 8082.

historyserver.web.port: 8082

# Comma separated list of directories to monitor for completed jobs.

historyserver.archive.fs.dir: hdfs://zhiyong-1/zhiyong-1/flink-completed-jobs/

# Interval in milliseconds for refreshing the monitored directories, default 10000.

historyserver.archive.fs.refresh-interval: 10000

historyserver.web.tmpdir: /data/udp/2.0.0.0/flink

env.java.opts: -Dlog4j2.formatMsgNoLookups=true

After editing, confirm the change in USDP; USDP distributes the file to the nodes automatically.
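Because flink-conf.yaml is mostly comments, it is easy to miss which keys are actually active after the edit. A minimal sketch that prints only the uncommented settings; active_settings is a hypothetical helper name, and the path in the usage comment is assumed from the conf directory shown later in this post:

```shell
#!/usr/bin/env bash
# Print only the active (non-comment, non-blank) lines of a Flink config file.
# active_settings is a hypothetical helper name, not part of Flink or USDP.
active_settings() {
  local conf_file="$1"
  grep -Ev '^[[:space:]]*(#|$)' "$conf_file"
}

# Example (path assumed from the conf directory used in this post):
# active_settings /srv/udp/2.0.0.0/flink/conf/flink-conf.yaml | grep -E '^(high-availability|state\.)'
```

Filtering on the high-availability and state prefixes should show the five keys changed here: the HA mode, storage directory, ZooKeeper quorum, state backend, and the checkpoint and savepoint directories.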

Editing the other files

masters

[root@zhiyong2 ~]# cd /srv/udp/2.0.0.0/flink/conf/

[root@zhiyong2 conf]# ll

总用量 60

-rwxrwxrwx 1 hadoop hadoop 10732 4月 1 11:29 flink-conf.yaml

-rwxr-xr-x 1 root root 4469 4月 1 11:29 hive-site.xml

-rwxrwxrwx 1 hadoop hadoop 2917 3月 14 23:15 log4j-cli.properties

-rwxrwxrwx 1 hadoop hadoop 3041 3月 14 23:15 log4j-console.properties

-rwxrwxrwx 1 hadoop hadoop 2694 3月 14 23:15 log4j.properties

-rwxrwxrwx 1 hadoop hadoop 2041 3月 14 23:15 log4j-session.properties

-rwxrwxrwx 1 hadoop hadoop 2740 4月 1 11:29 logback-console.xml

-rwxrwxrwx 1 hadoop hadoop 1550 3月 14 23:15 logback-session.xml

-rwxrwxrwx 1 hadoop hadoop 2327 4月 1 11:29 logback.xml

-rwxrwxrwx 1 hadoop hadoop 15 3月 14 23:15 masters

drwxr-xr-x 2 root root 300 4月 1 11:29 old

-rwxrwxrwx 1 hadoop hadoop 10 3月 14 23:15 workers

-rwxrwxrwx 1 hadoop hadoop 1434 3月 14 23:15 zoo.cfg

[root@zhiyong2 conf]# cat masters

localhost:8081

[root@zhiyong2 conf]# vim masters

[root@zhiyong2 conf]# cat masters

zhiyong3:8081

zhiyong4:8081

zoo.cfg

[root@zhiyong2 conf]# vim zoo.cfg

[root@zhiyong2 conf]# cat zoo.cfg

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial synchronization phase can take

initLimit=10

# The number of ticks that can pass between sending a request and getting an acknowledgement

syncLimit=5

# The directory where the snapshot is stored.

# dataDir=/tmp/zookeeper

# The port at which the clients will connect

clientPort=2181

# ZooKeeper quorum peers

server.1=zhiyong2:2888:3888

server.2=zhiyong3:2888:3888

server.3=zhiyong4:2888:3888

# server.2=host:peer-port:leader-port
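The hosts in these server entries should match the high-availability.zookeeper.quorum value set in flink-conf.yaml. A small sketch of a consistency check that derives the quorum string from a zoo.cfg file; zk_quorum_from_cfg is a hypothetical helper name, and it assumes every peer uses the same clientPort:

```shell
#!/usr/bin/env bash
# Build a "host:clientPort,..." string from the server.N lines of a zoo.cfg file.
# zk_quorum_from_cfg is a hypothetical helper name used only for this sketch.
zk_quorum_from_cfg() {
  local cfg="$1"
  local port
  # Use the active clientPort entry; fall back to ZooKeeper's default 2181.
  port=$(sed -n 's/^clientPort=\([0-9][0-9]*\).*/\1/p' "$cfg" | head -n 1)
  port=${port:-2181}
  # Active server lines look like: server.1=zhiyong2:2888:3888
  # Commented-out lines start with '#', so the ^server anchor skips them.
  sed -n 's/^server\.[0-9][0-9]*=\([^:]*\):.*/\1/p' "$cfg" \
    | awk -v p="$port" '{ printf "%s%s:%s", (NR > 1 ? "," : ""), $1, p } END { if (NR) print "" }'
}

# Example: zk_quorum_from_cfg /srv/udp/2.0.0.0/flink/conf/zoo.cfg
```

For the file above, the output can be compared directly with the quorum line in flink-conf.yaml.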

workers

[root@zhiyong2 conf]# cat workers

localhost

[root@zhiyong2 conf]# vim workers

[root@zhiyong2 conf]# cat workers

zhiyong2

zhiyong3

zhiyong4

Distributing the files

[root@zhiyong2 conf]# pwd

/srv/udp/2.0.0.0/flink/conf

[root@zhiyong2 conf]# scp ./masters root@zhiyong3:$PWD

masters 100% 28 11.5KB/s 00:00

[root@zhiyong2 conf]# scp ./masters root@zhiyong4:$PWD

masters 100% 28 9.0KB/s 00:00

[root@zhiyong2 conf]# scp ./zoo.cfg root@zhiyong3:$PWD

zoo.cfg 100% 1489 759.7KB/s 00:00

[root@zhiyong2 conf]# scp ./zoo.cfg root@zhiyong4:$PWD

zoo.cfg 100% 1489 530.4KB/s 00:00

[root@zhiyong2 conf]# scp ./workers root@zhiyong3:$PWD

workers 100% 27 7.0KB/s 00:00

[root@zhiyong2 conf]# scp ./workers root@zhiyong4:$PWD

workers 100% 27 8.9KB/s 00:00

[root@zhiyong2 conf]#
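The six scp calls above follow one pattern, so they can be folded into a loop. A minimal sketch, assuming the same conf path and target hosts as in the transcript; distribute_conf is a hypothetical helper name, and DRY_RUN=1 only prints the commands so they can be reviewed first:

```shell
#!/usr/bin/env bash
# Copy the hand-edited files to the other nodes in one loop.
# Set DRY_RUN=1 to print the scp commands instead of running them.
# distribute_conf is a hypothetical helper name; hosts and path come from this post.
distribute_conf() {
  local conf_dir="$1"; shift
  local host file
  for host in "$@"; do
    for file in masters workers zoo.cfg; do
      if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "scp ${conf_dir}/${file} root@${host}:${conf_dir}/"
      else
        scp "${conf_dir}/${file}" "root@${host}:${conf_dir}/"
      fi
    done
  done
}

# Example: DRY_RUN=1 distribute_conf /srv/udp/2.0.0.0/flink/conf zhiyong3 zhiyong4
```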

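Testing on YARN

With YARN as the resource manager, there are three main deployment modes: Session mode, Per-Job mode, and Application mode.

Session mode suits small, frequently submitted interactive jobs (for example, using sql-client for ad hoc queries). It is fine for experimenting but is not a good fit for production.

Per-Job mode gives thorough resource isolation; the downside is that resource utilization may be low, so CPU capacity is not fully used.

Application mode was added later. In it, the application's main() method runs on the JobManager inside the cluster instead of on the client.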

The official documentation (release 1.16, the latest at the time of writing): https://nightlies.apache.org/flink/flink-docs-release-1.16/docs/deployment/resource-providers/yarn/#per-job-mode-deprecated

states in plain terms:

Per-job mode is only supported by YARN and has been deprecated in Flink 1.15. It will be dropped in FLINK-26000. Please consider application mode to launch a dedicated cluster per-job on YARN.

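Per-Job mode is deprecated as of Flink 1.15, so newer Flink versions should gradually switch to Application mode. Where per-job behavior is still needed, launch a dedicated cluster per job in Application mode to get the same effect.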

Session mode

Submit a job to a running session:

./bin/flink run -t yarn-session \
  -Dyarn.application.id=application_XXXX_YY \
  ./examples/streaming/TopSpeedWindowing.jar

Or re-attach to a running YARN session by its application ID:

./bin/yarn-session.sh -id application_XXXX_YY

Per-Job mode

This is the deployment mode we use in prod; usage is simple:

./bin/flink run -t yarn-per-job --detached ./examples/streaming/TopSpeedWindowing.jar

Listing and cancelling jobs is just as simple:

# List running job on the cluster

./bin/flink list -t yarn-per-job -Dyarn.application.id=application_XXXX_YY

# Cancel running job

./bin/flink cancel -t yarn-per-job -Dyarn.application.id=application_XXXX_YY <jobId>

Application mode

Submit a job:

./bin/flink run-application -t yarn-application ./examples/streaming/TopSpeedWindowing.jar

List and cancel jobs:

# List running job on the cluster

./bin/flink list -t yarn-application -Dyarn.application.id=application_XXXX_YY

# Cancel running job

./bin/flink cancel -t yarn-application -Dyarn.application.id=application_XXXX_YY <jobId>

Dependency JARs can also be uploaded to HDFS in advance:

./bin/flink run-application -t yarn-application \
  -Dyarn.provided.lib.dirs="hdfs://myhdfs/my-remote-flink-dist-dir" \
  hdfs://myhdfs/jars/my-application.jar

This reduces the files shipped on each submission and so speeds up deployment.
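The three submission forms above differ only in the subcommand and the -t target. A minimal sketch that prints the matching command line for a given mode; flink_submit_cmd is a hypothetical helper name, and application_XXXX_YY is the same placeholder used in the examples above:

```shell
#!/usr/bin/env bash
# Print the flink CLI invocation for one of the three YARN deployment modes.
# flink_submit_cmd is a hypothetical helper; it only prints, it does not submit.
flink_submit_cmd() {
  local mode="$1" jar="$2"
  case "$mode" in
    session)
      # Needs the ID of an already running YARN session (placeholder by default).
      echo "./bin/flink run -t yarn-session -Dyarn.application.id=${YARN_APP_ID:-application_XXXX_YY} ${jar}" ;;
    per-job)
      echo "./bin/flink run -t yarn-per-job --detached ${jar}" ;;
    application)
      echo "./bin/flink run-application -t yarn-application ${jar}" ;;
    *)
      echo "unknown mode: ${mode}" >&2
      return 1 ;;
  esac
}

# Example: flink_submit_cmd application ./examples/streaming/TopSpeedWindowing.jar
```

Printing rather than executing keeps the sketch safe to try on a machine without a cluster; pipe the output to a shell only after checking it.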

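Simple test

A batch job stops on its own when it finishes, so when only a single batch job is started the modes differ little. For simplicity the test uses flink run -m yarn-cluster, which starts a dedicated per-job cluster rather than a session.

Current processes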

[root@zhiyong2 ~]# jps

14048 NameNode

13729 DFSZKFailoverController

377220 NodeManager

10854 udp-agent-1.0.0.jar

16326 HMaster

13130 HttpFSServerWebServer

17325 RunJar

15790 HRegionServer

379882 ResourceManager

988575 Jps

988190 RunJar

14323 JournalNode

11156 QuorumPeerMain

13492 DataNode

17305 RunJar

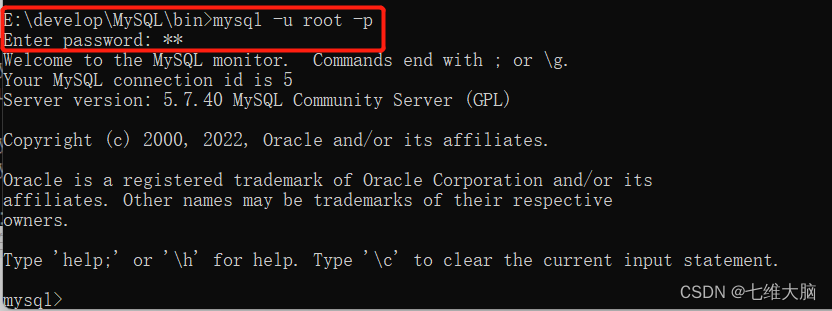

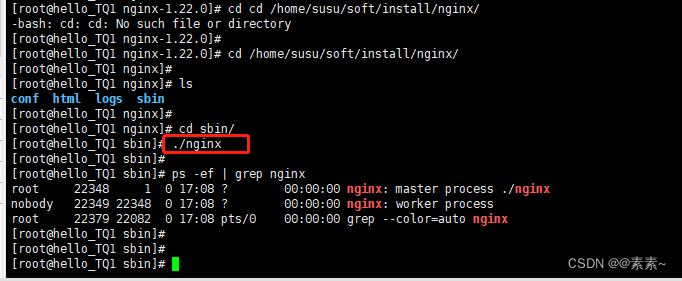

Starting the job

[root@zhiyong2 ~]# flink run -m yarn-cluster -yjm 1024 -ytm 1024 /srv/udp/2.0.0.0/flink/examples/batch/WordCount.jar

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/usdp-srv/srv/udp/2.0.0.0/flink/lib/log4j-slf4j-impl-2.17.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/usdp-srv/srv/udp/2.0.0.0/yarn/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Executing WordCount example with default input data set.

Use --input to specify file input.

Printing result to stdout. Use --output to specify output path.

2022-04-01 12:48:35,994 WARN org.apache.flink.yarn.configuration.YarnLogConfigUtil [] - The configuration directory ('/opt/usdp-srv/srv/udp/2.0.0.0/flink/conf') already contains a LOG4J config file.If you want to use logback, then please delete or rename the log configuration file.

2022-04-01 12:48:36,424 INFO org.apache.hadoop.yarn.client.AHSProxy [] - Connecting to Application History server at zhiyong3/192.168.88.102:10201

2022-04-01 12:48:36,432 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - No path for the flink jar passed. Using the location of class org.apache.flink.yarn.YarnClusterDescriptor to locate the jar

2022-04-01 12:48:36,526 INFO org.apache.hadoop.yarn.client.ConfiguredRMFailoverProxyProvider [] - Failing over to rm2

2022-04-01 12:48:36,582 INFO org.apache.hadoop.conf.Configuration [] - resource-types.xml not found

2022-04-01 12:48:36,582 INFO org.apache.hadoop.yarn.util.resource.ResourceUtils [] - Unable to find 'resource-types.xml'.

2022-04-01 12:48:36,630 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - Cluster specification: ClusterSpecification{masterMemoryMB=1024, taskManagerMemoryMB=1024, slotsPerTaskManager=1}

2022-04-01 12:48:41,392 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - Submitting application master application_1648782295643_0004

2022-04-01 12:48:41,666 INFO org.apache.hadoop.yarn.client.api.impl.YarnClientImpl [] - Submitted application application_1648782295643_0004

2022-04-01 12:48:41,667 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - Waiting for the cluster to be allocated

2022-04-01 12:48:41,670 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - Deploying cluster, current state ACCEPTED

2022-04-01 12:48:50,387 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - YARN application has been deployed successfully.

2022-04-01 12:48:50,388 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - Found Web Interface zhiyong2:40000 of application 'application_1648782295643_0004'.

Job has been submitted with JobID 8ed8908de03e30fface549af0a3c2180

Program execution finished

Job with JobID 8ed8908de03e30fface549af0a3c2180 has finished.

Job Runtime: 14446 ms

Accumulator Results:

- 780a8818b15c7abfa776bae8c4db75b8 (java.util.ArrayList) [170 elements]
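The YARN application ID in this log is what the list and cancel commands expect in -Dyarn.application.id. A small sketch that pulls the first application ID out of client output read from standard input; extract_yarn_app_id is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Read flink client output on stdin and print the first YARN application ID found.
# extract_yarn_app_id is a hypothetical helper name used only for this sketch.
extract_yarn_app_id() {
  grep -oE 'application_[0-9]+_[0-9]+' | head -n 1
}

# Example (submit.log is a hypothetical file holding the saved client output):
# app_id=$(extract_yarn_app_id < submit.log)
# yarn application -status "$app_id"
```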

Current processes

[root@zhiyong2 ~]# jps

14048 NameNode

13729 DFSZKFailoverController

377220 NodeManager

988845 CliFrontend

990315 YarnJobClusterEntrypoint

10854 udp-agent-1.0.0.jar

16326 HMaster

13130 HttpFSServerWebServer

17325 RunJar

15790 HRegionServer

379882 ResourceManager

14323 JournalNode

11156 QuorumPeerMain

13492 DataNode

990330 Jps

17305 RunJar

989424 RunJar

Result:

[root@zhiyong2 ~]# flink run -m yarn-cluster -yjm 1024 -ytm 1024 /srv/udp/2.0.0.0/flink/examples/batch/WordCount.jar

... (intermediate output omitted)

(wrong,1)

(you,1)

Once the batch job finishes, its processes exit automatically.

History

http://zhiyong3:8088/cluster

The YARN web UI shows:

The job was submitted to YARN successfully.

Starting the History Server

[root@zhiyong2 ~]# which historyserver.sh

/srv/udp/2.0.0.0/flink/bin/historyserver.sh

[root@zhiyong2 ~]# historyserver.sh start

Starting historyserver daemon on host zhiyong2.

[root@zhiyong2 ~]# netstat -lntp |grep 8082

[root@zhiyong2 ~]# historyserver.sh stop

No historyserver daemon (pid: 853461) is running anymore on zhiyong2.

[root@zhiyong2 ~]# historyserver.sh stop

No historyserver daemon (pid: 688346) is running anymore on zhiyong2.

The startup clearly failed: nothing is listening on port 8082.

Inspect the script:
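Before reading the script, the History Server's own log is usually the quickest place to find the reason. A minimal sketch that finds the newest History Server log file in a log directory; latest_historyserver_log is a hypothetical helper name, and both the log directory and the flink-*-historyserver-*.log naming are assumptions based on how Flink's daemon script names its logs:

```shell
#!/usr/bin/env bash
# Print the most recently modified History Server log file in a directory.
# latest_historyserver_log is a hypothetical helper; the file name pattern is assumed.
latest_historyserver_log() {
  local log_dir="$1"
  # ls -t sorts newest first; errors for a missing directory or no match are hidden.
  ls -t "$log_dir"/flink-*-historyserver-*.log 2>/dev/null | head -n 1
}

# Example (log directory assumed to sit next to conf/ and bin/):
# tail -n 50 "$(latest_historyserver_log /srv/udp/2.0.0.0/flink/log)"
```

If no .log file exists, the matching .out file in the same directory may hold the JVM's startup error instead.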

[root@zhiyong2 ~]# cat /srv/udp/2.0.0.0/flink/bin/historyserver.sh

#!/usr/bin/env bash

# Start/stop a Flink HistoryServer

USAGE="Usage: historyserver.sh (start|start-foreground|stop)"

STARTSTOP=$1

bin=`dirname "$0"`

bin=`cd "$bin"; pwd`

. "$bin"/config.sh

if [[ $STARTSTOP == "start" ]] || [[ $STARTSTOP == "start-foreground" ]]; then

export FLINK_HISTORYSERVER_JMX_OPTS="-Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false -Dcom.sun.management.jmxremote.local.only=false -Dcom.sun.management.jmxremote.port=7930 -javaagent:/srv/udp/2.0.0.0/flink/jmx_exporter/jmx_exporter.jar=7931:/srv/udp/2.0.0.0/flink/jmx_exporter/jmx_config_flink_history_server.yml"

export FLINK_ENV_JAVA_OPTS="${FLINK_ENV_JAVA_OPTS} ${FLINK_ENV_JAVA_OPTS_HS} ${FLINK_HISTORYSERVER_JMX_OPTS}"

args=("--configDir" "${FLINK_CONF_DIR}")

fi

if [[ $STARTSTOP == "start-foreground" ]]; then

exec "${FLINK_BIN_DIR}"/flink-console.sh historyserver "${args[@]}"

else

"${FLINK_BIN_DIR}"/flink-daemon.sh $STARTSTOP historyserver "${args[@]}"

fi

The Apache version looks like this:

[root@zhiyong2 ~]# cd /export/server/

[root@zhiyong2 server]# ll

总用量 0

drwxrwxrwx 10 hadoop hadoop 156 3月 14 23:14 flink

drwxr-xr-x 10 501 games 156 1月 11 07:45 flink-1.14.3

[root@zhiyong2 server]# cd flink-1.14.3/

[root@zhiyong2 flink-1.14.3]# cd bin/

[root@zhiyong2 bin]# ll

总用量 2348

-rw-r--r-- 1 501 games 2290643 1月 11 07:45 bash-java-utils.jar

-rwxr-xr-x 1 501 games 20576 11月 30 01:53 config.sh

-rwxr-xr-x 1 501 games 1318 9月 14 2020 find-flink-home.sh

-rwxr-xr-x 1 501 games 2381 8月 20 2021 flink

-rwxr-xr-x 1 501 games 4247 10月 29 04:32 flink-console.sh

-rwxr-xr-x 1 501 games 6584 8月 20 2021 flink-daemon.sh

-rwxr-xr-x 1 501 games 1564 9月 14 2020 historyserver.sh

-rwxr-xr-x 1 501 games 2295 1月 8 2021 jobmanager.sh

-rwxr-xr-x 1 501 games 1650 8月 20 2021 kubernetes-jobmanager.sh

-rwxr-xr-x 1 501 games 1717 8月 20 2021 kubernetes-session.sh

-rwxr-xr-x 1 501 games 1770 8月 20 2021 kubernetes-taskmanager.sh

-rwxr-xr-x 1 501 games 2994 8月 20 2021 pyflink-shell.sh

-rwxr-xr-x 1 501 games 3742 8月 20 2021 sql-client.sh

-rwxr-xr-x 1 501 games 2006 1月 28 2021 standalone-job.sh

-rwxr-xr-x 1 501 games 1837 9月 14 2020 start-cluster.sh

-rwxr-xr-x 1 501 games 1854 9月 14 2020 start-zookeeper-quorum.sh

-rwxr-xr-x 1 501 games 1617 9月 14 2020 stop-cluster.sh

-rwxr-xr-x 1 501 games 1845 9月 14 2020 stop-zookeeper-quorum.sh

-rwxr-xr-x 1 501 games 2960 8月 20 2021 taskmanager.sh

-rwxr-xr-x 1 501 games 1725 8月 20 2021 yarn-session.sh

-rwxr-xr-x 1 501 games 2405 1月 8 2021 zookeeper.sh

[root@zhiyong2 bin]# cat historyserver.sh

#!/usr/bin/env bash

################################################################################

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional information

# regarding copyright ownership. The ASF licenses this file

# to you under the Apache License, Version 2.0 (the

# "License"); you may not use this file except in compliance

# with the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

################################################################################

# Start/stop a Flink HistoryServer

USAGE="Usage: historyserver.sh (start|start-foreground|stop)"

STARTSTOP=$1

bin=`dirname "$0"`

bin=`cd "$bin"; pwd`

. "$bin"/config.sh

if [[ $STARTSTOP == "start" ]] || [[ $STARTSTOP == "start-foreground" ]]; then

export FLINK_ENV_JAVA_OPTS="${FLINK_ENV_JAVA_OPTS} ${FLINK_ENV_JAVA_OPTS_HS}"

args=("--configDir" "${FLINK_CONF_DIR}")

fi

if [[ $STARTSTOP == "start-foreground" ]]; then

exec "${FLINK_BIN_DIR}"/flink-console.sh historyserver "${args[@]}"

else

"${FLINK_BIN_DIR}"/flink-daemon.sh $STARTSTOP historyserver "${args[@]}"

fi

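The USDP build of Flink clearly differs from the Apache one: its historyserver.sh additionally exports FLINK_HISTORYSERVER_JMX_OPTS, which attaches a jmx_exporter Java agent from /srv/udp/2.0.0.0/flink/jmx_exporter/.

Trying the Apache Flink History Server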

[root@zhiyong2 conf]# cp flink-conf.yaml flink-conf.yaml-beifen

[root@zhiyong2 conf]# ll

总用量 64

-rw-r--r-- 1 501 games 11123 10月 29 04:32 flink-conf.yaml

-rw-r--r-- 1 root root 11123 4月 1 15:35 flink-conf.yaml-beifen

-rw-r--r-- 1 501 games 2917 10月 29 04:32 log4j-cli.properties

-rw-r--r-- 1 501 games 3041 10月 29 04:32 log4j-console.properties

-rw-r--r-- 1 501 games 2694 10月 29 04:32 log4j.properties

-rw-r--r-- 1 501 games 2041 10月 29 04:32 log4j-session.properties

-rw-r--r-- 1 501 games 2711 10月 29 04:32 logback-console.xml

-rw-r--r-- 1 501 games 1550 9月 14 2020 logback-session.xml

-rw-r--r-- 1 501 games 2302 10月 29 04:32 logback.xml

-rw-r--r-- 1 501 games 15 9月 14 2020 masters

-rw-r--r-- 1 501 games 10 9月 14 2020 workers

-rw-r--r-- 1 501 games 1434 9月 14 2020 zoo.cfg

[root@zhiyong2 conf]# cat masters

localhost:8081

[root@zhiyong2 conf]# cp /srv/udp/2.0.0.0/flink/conf/flink-conf.yaml /export/server/flink/conf

cp:是否覆盖"/export/server/flink/conf/flink-conf.yaml"? y

[root@zhiyong2 conf]# cd ..

[root@zhiyong2 flink-1.14.3]# cd bin/

[root@zhiyong2 bin]# ll

总用量 2348

-rw-r--r-- 1 501 games 2290643 1月 11 07:45 bash-java-utils.jar

-rwxr-xr-x 1 501 games 20576 11月 30 01:53 config.sh

-rwxr-xr-x 1 501 games 1318 9月 14 2020 find-flink-home.sh

-rwxr-xr-x 1 501 games 2381 8月 20 2021 flink

-rwxr-xr-x 1 501 games 4247 10月 29 04:32 flink-console.sh

-rwxr-xr-x 1 501 games 6584 8月 20 2021 flink-daemon.sh

-rwxr-xr-x 1 501 games 1564 9月 14 2020 historyserver.sh

-rwxr-xr-x 1 501 games 2295 1月 8 2021 jobmanager.sh

-rwxr-xr-x 1 501 games 1650 8月 20 2021 kubernetes-jobmanager.sh

-rwxr-xr-x 1 501 games 1717 8月 20 2021 kubernetes-session.sh

-rwxr-xr-x 1 501 games 1770 8月 20 2021 kubernetes-taskmanager.sh

-rwxr-xr-x 1 501 games 2994 8月 20 2021 pyflink-shell.sh

-rwxr-xr-x 1 501 games 3742 8月 20 2021 sql-client.sh

-rwxr-xr-x 1 501 games 2006 1月 28 2021 standalone-job.sh

-rwxr-xr-x 1 501 games 1837 9月 14 2020 start-cluster.sh

-rwxr-xr-x 1 501 games 1854 9月 14 2020 start-zookeeper-quorum.sh

-rwxr-xr-x 1 501 games 1617 9月 14 2020 stop-cluster.sh

-rwxr-xr-x 1 501 games 1845 9月 14 2020 stop-zookeeper-quorum.sh

-rwxr-xr-x 1 501 games 2960 8月 20 2021 taskmanager.sh

-rwxr-xr-x 1 501 games 1725 8月 20 2021 yarn-session.sh

-rwxr-xr-x 1 501 games 2405 1月 8 2021 zookeeper.sh

[root@zhiyong2 bin]# sh historyserver.sh start

/export/server/flink-1.14.3/bin/config.sh:行32: 未预期的符号 `<' 附近有语法错误

/export/server/flink-1.14.3/bin/config.sh:行32: ` done < <(find "$FLINK_LIB_DIR" ! -type d -name '*.jar' -print0 | sort -z)'

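This looks like a bug in Apache Flink itself, but the more likely cause is how the script was invoked. It was run as `sh historyserver.sh start`, while the scripts declare `#!/usr/bin/env bash` and config.sh uses process substitution (`< <(...)`), a bash feature that older bash versions reject when started as `sh` in POSIX mode. Running `./historyserver.sh start` or `bash historyserver.sh start` should avoid this syntax error. For personal experiments, Standalone and K8S both work anyway (K8S looks set to replace Yarn), so not being able to start this History Server is not a big problem.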
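One way to avoid running a bash script under the wrong shell is to read the interpreter from its shebang line instead of hard-coding `sh`. The sketch below is illustrative only; `shebang_interpreter` is a hypothetical helper name, and it handles only the two common forms `#!/path/to/shell` and `#!/usr/bin/env shell`.

```shell
# Hypothetical helper: print the interpreter named on the shebang line of script $1.
# Returns non-zero if the first line is not a shebang.
shebang_interpreter() {
    first_line=$(head -n 1 "$1")
    case "$first_line" in
        '#!'*) ;;
        *) return 1 ;;
    esac
    interp=${first_line#'#!'}
    # Split the rest of the line into words: the path, then optional arguments.
    set -- $interp
    if [ "${1##*/}" = "env" ] && [ -n "${2:-}" ]; then
        echo "$2"            # "#!/usr/bin/env bash" -> bash
    else
        echo "${1##*/}"      # "#!/bin/sh" -> sh
    fi
}
```

For the historyserver.sh shown above this prints `bash`, so the script would be started with `bash historyserver.sh start`, or simply executed directly so that the kernel honours the shebang.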

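Using the History Server bundled with USDP

The USDP version evidently bundles some content that the Apache version does not have.

SSH to zhiyong5 and copy the USDP-packaged files across machines: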

[root@zhiyong5 flink]# pwd

/srv/udp/2.0.0.0/flink

[root@zhiyong5 flink]# ll

总用量 28

drwxr-xr-x. 2 hadoop hadoop 4096 4月 1 15:59 bin

drwxr-xr-x. 2 hadoop hadoop 284 3月 1 23:35 conf

drwxr-xr-x. 7 hadoop hadoop 76 10月 9 17:49 examples

drwxr-xr-x. 2 hadoop hadoop 73 3月 1 23:35 jmx_exporter

drwxr-xr-x. 2 hadoop hadoop 4096 10月 23 16:14 lib

-rwxr-xr-x. 1 hadoop hadoop 11357 7月 23 2021 LICENSE

drwxr-xr-x. 2 hadoop hadoop 6 7月 23 2021 log

drwxr-xr-x. 3 hadoop hadoop 4096 10月 9 17:49 opt

drwxr-xr-x. 10 hadoop hadoop 210 10月 9 17:49 plugins

-rwxr-xr-x. 1 hadoop hadoop 1309 7月 23 2021 README.txt

drwxrwxrwx. 2 hadoop hadoop 6 4月 1 14:46 run

[root@zhiyong5 flink]# scp -r ./jmx_exporter/ root@zhiyong2:$PWD

jmx_exporter.jar 100% 366KB 19.1MB/s 00:00

jmx_config_flink_history_server.yml 100% 152 102.3KB/s 00:00

[root@zhiyong5 flink]# scp -r ./jmx_exporter/ root@zhiyong3:$PWD

jmx_exporter.jar 100% 366KB 23.8MB/s 00:00

jmx_config_flink_history_server.yml 100% 152 87.6KB/s 00:00

[root@zhiyong5 flink]# scp -r ./jmx_exporter/ root@zhiyong4:$PWD

jmx_exporter.jar 100% 366KB 23.7MB/s 00:00

jmx_config_flink_history_server.yml 100% 152 77.3KB/s 00:00

The script that USDP modified also needs to be copied with scp:

[root@zhiyong5 bin]# pwd

/srv/udp/2.0.0.0/flink/bin

[root@zhiyong5 bin]# scp ./config.sh root@zhiyong2:$PWD

config.sh 100% 20KB 3.5MB/s 00:00

[root@zhiyong5 bin]# scp ./config.sh root@zhiyong3:$PWD

Warning: Permanently added 'zhiyong3' (ECDSA) to the list of known hosts.

config.sh 100% 20KB 10.9MB/s 00:00

[root@zhiyong5 bin]# scp ./config.sh root@zhiyong4:$PWD

Warning: Permanently added 'zhiyong4' (ECDSA) to the list of known hosts.

config.sh 100% 20KB 8.9MB/s 00:00
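The repeated scp commands above can be expressed as a loop over the target hosts. The following is a sketch under stated assumptions: `distribute` and the `DRY_RUN` switch are hypothetical names, and the target directory is the current directory, as with the `$PWD` used in the commands above. By default it only prints what it would run.

```shell
# Hypothetical helper: print (dry run) or execute one scp per target host.
# Usage: distribute <path> <host>...   Set DRY_RUN=0 to actually copy.
distribute() {
    src=$1
    shift
    for host in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "scp -r $src root@$host:$PWD"
        else
            scp -r "$src" "root@$host:$PWD"
        fi
    done
}
```

Run from /srv/udp/2.0.0.0/flink/bin, `distribute ./config.sh zhiyong2 zhiyong3 zhiyong4` prints the three scp commands used above, and `DRY_RUN=0 distribute ...` executes them.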

Modify flink-conf.yaml for the zhiyong-1 cluster:

historyserver.web.address: zhiyong3

After clicking OK, the change is distributed automatically.

Then start the History Server:

[root@zhiyong2 flink]# historyserver.sh start

Starting historyserver daemon on host zhiyong2.

[root@zhiyong2 flink]# netstat -lntp |grep 8082

[root@zhiyong3 ~]# netstat -lntp |grep 8082

tcp6 0 0 192.168.88.102:8082 :::* LISTEN 412060/java

Open the page:

http://zhiyong3:8082/#/overview

It shows:

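The page is the Web UI of the replaced Flink 1.14.3, but it offers no way to submit jobs, even though the following is set:

web.submit.enable: true

This is expected rather than a misconfiguration: web.submit.enable applies to the JobManager's Web UI, whereas the History Server is a read-only view of jobs that have already finished and been archived. It also discovers new archives only by polling the archive directory periodically, which is why it seems slow to react. It is fine for reviewing batch history but not for watching running streaming jobs, so for now make do with the Standalone Web UI.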
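To see what the History Server is actually configured to do, it helps to read the relevant keys from flink-conf.yaml: `historyserver.web.address`, `historyserver.web.port`, `jobmanager.archive.fs.dir`, `historyserver.archive.fs.dir` and `historyserver.archive.fs.refresh-interval`. The sketch below is a simplified reader, not Flink's own parser: `flink_conf_value` is a hypothetical name, it only understands flat `key: value` lines, and it does not strip inline comments.

```shell
# Hypothetical helper: print the value of a flat "key: value" entry in a
# flink-conf.yaml-style file. Comment lines are skipped; if the key appears
# more than once, the last occurrence is printed.
flink_conf_value() {
    awk -v key="$1" '
        /^[[:space:]]*#/ { next }
        index($0, key ":") == 1 {
            value = substr($0, length(key) + 2)
            sub(/^[[:space:]]+/, "", value)
            sub(/[[:space:]]+$/, "", value)
            found = value
        }
        END { if (found != "") print found }
    ' "$2"
}
```

For example, `flink_conf_value historyserver.web.address /srv/udp/2.0.0.0/flink/conf/flink-conf.yaml` shows which host the History Server binds to, which explains why port 8082 was open on zhiyong3 and not on zhiyong2.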

Stream processing test

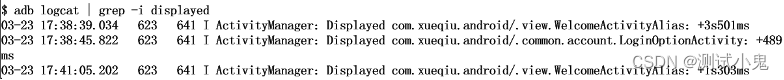

[root@zhiyong2 ~]# nc -lk 9999

-bash: nc: 未找到命令

[root@zhiyong2 ~]# yum install -y nc

Submit to Yarn:

[root@zhiyong2 streaming]# flink run -m yarn-cluster -yjm 1024 -ytm 1024 /srv/udp/2.0.0.0/flink/examples/streaming/SocketWindowWordCount.jar --hostname zhiyong2 --port 9998

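Everything still works fairly normally. Note that the --port passed to the job has to match the port that nc is listening on.

Write a quick test class: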

package com.zhiyong.start;

import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.source.SourceFunction;

import java.util.ArrayList;
import java.util.Random;

/**
 * @program: bigdataStudy
 * @description: Automatic wordCount to test whether the Flink environment works
 * @author: zhiyong
 * @create: 2022-04-01 19:50
 **/
public class WordCountAuto {
    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> data = env.addSource(new source());
        data.print();
        env.execute("测试");
    }

    // Custom source: emits one random word per second until cancelled
    private static class source implements SourceFunction<String> {
        // volatile because cancel() is called from a different thread than run()
        volatile boolean needRun = true;

        @Override
        public void run(SourceContext<String> ctx) throws Exception {
            while (needRun) {
                // Build the candidate words zhiyong0 .. zhiyong19
                ArrayList<String> result = new ArrayList<>();
                for (int i = 0; i < 20; i++) {
                    result.add("zhiyong" + i);
                }
                // Emit one of them at random, then wait a second
                ctx.collect(result.get(new Random().nextInt(20)));
                Thread.sleep(1000);
            }
        }

        @Override
        public void cancel() {
            needRun = false;
        }
    }
}

Submitting in Standalone mode at this point fails with an error:

[root@zhiyong2 ~]# flink run --class com.zhiyong.start.WordCountAuto /root/jars/flinkStudy-1.0.0.jar

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/usdp-srv/srv/udp/2.0.0.0/flink/lib/log4j-slf4j-impl-2.17.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/usdp-srv/srv/udp/2.0.0.0/yarn/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

2022-04-01 20:22:45,449 INFO org.apache.flink.yarn.cli.FlinkYarnSessionCli [] - Found Yarn properties file under /tmp/.yarn-properties-root.

2022-04-01 20:22:45,449 INFO org.apache.flink.yarn.cli.FlinkYarnSessionCli [] - Found Yarn properties file under /tmp/.yarn-properties-root.

2022-04-01 20:22:46,165 WARN org.apache.flink.yarn.configuration.YarnLogConfigUtil [] - The configuration directory ('/opt/usdp-srv/srv/udp/2.0.0.0/flink/conf') already contains a LOG4J config file.If you want to use logback, then please delete or rename the log configuration file.

2022-04-01 20:22:46,655 INFO org.apache.hadoop.yarn.client.AHSProxy [] - Connecting to Application History server at zhiyong3/192.168.88.102:10201

2022-04-01 20:22:46,666 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - No path for the flink jar passed. Using the location of class org.apache.flink.yarn.YarnClusterDescriptor to locate the jar

2022-04-01 20:22:46,734 INFO org.apache.hadoop.yarn.client.ConfiguredRMFailoverProxyProvider [] - Failing over to rm2

2022-04-01 20:22:46,797 ERROR org.apache.flink.yarn.YarnClusterDescriptor [] - The application application_1648782295643_0010 doesn't run anymore. It has previously completed with final status: KILLED

------------------------------------------------------------

The program finished with the following exception:

org.apache.flink.client.program.ProgramInvocationException: The main method caused an error: Couldn't retrieve Yarn cluster

at org.apache.flink.client.program.PackagedProgram.callMainMethod(PackagedProgram.java:372)

at org.apache.flink.client.program.PackagedProgram.invokeInteractiveModeForExecution(PackagedProgram.java:222)

at org.apache.flink.client.ClientUtils.executeProgram(ClientUtils.java:114)

at org.apache.flink.client.cli.CliFrontend.executeProgram(CliFrontend.java:812)

at org.apache.flink.client.cli.CliFrontend.run(CliFrontend.java:246)

at org.apache.flink.client.cli.CliFrontend.parseAndRun(CliFrontend.java:1054)

at org.apache.flink.client.cli.CliFrontend.lambda$main$10(CliFrontend.java:1132)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1729)

at org.apache.flink.runtime.security.contexts.HadoopSecurityContext.runSecured(HadoopSecurityContext.java:41)

at org.apache.flink.client.cli.CliFrontend.main(CliFrontend.java:1132)

Caused by: org.apache.flink.client.deployment.ClusterRetrieveException: Couldn't retrieve Yarn cluster

at org.apache.flink.yarn.YarnClusterDescriptor.retrieve(YarnClusterDescriptor.java:411)

at org.apache.flink.yarn.YarnClusterDescriptor.retrieve(YarnClusterDescriptor.java:128)

at org.apache.flink.client.deployment.executors.AbstractSessionClusterExecutor.execute(AbstractSessionClusterExecutor.java:75)

at org.apache.flink.streaming.api.environment.StreamExecutionEnvironment.executeAsync(StreamExecutionEnvironment.java:2042)

at org.apache.flink.client.program.StreamContextEnvironment.executeAsync(StreamContextEnvironment.java:137)

at org.apache.flink.client.program.StreamContextEnvironment.execute(StreamContextEnvironment.java:76)

at org.apache.flink.streaming.api.environment.StreamExecutionEnvironment.execute(StreamExecutionEnvironment.java:1916)

at com.zhiyong.start.WordCountAuto.main(WordCountAuto.java:22)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.flink.client.program.PackagedProgram.callMainMethod(PackagedProgram.java:355)

... 11 more

Caused by: java.lang.RuntimeException: The Yarn application application_1648782295643_0010 doesn't run anymore.

at org.apache.flink.yarn.YarnClusterDescriptor.retrieve(YarnClusterDescriptor.java:397)

... 23 more

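This happens because Yarn session mode was used earlier and left a stale file behind. As the log shows, the CLI finds /tmp/.yarn-properties-root and tries to attach to the session application recorded in it, which has already been killed. The file needs to be removed: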

[root@zhiyong2 tmp]# cd /tmp/

[root@zhiyong2 tmp]# cat .yarn-properties-root

#Generated YARN properties file

#Fri Apr 01 18:20:14 CST 2022

dynamicPropertiesString=

applicationID=application_1648782295643_0010

[root@zhiyong2 tmp]# rm -rf .yarn-properties-root
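Before deleting the properties file, it can be worth checking which application it points at. A minimal sketch, assuming the file keeps the `applicationID=<id>` line format shown above; `yarn_properties_app_id` is a hypothetical helper name:

```shell
# Hypothetical helper: print the applicationID recorded in a Yarn properties file.
# Prints nothing if the file has no applicationID line.
yarn_properties_app_id() {
    sed -n 's/^applicationID=//p' "$1"
}
```

The result can be passed to `yarn application -status` to confirm that the session really is gone, for example `yarn application -status "$(yarn_properties_app_id /tmp/.yarn-properties-root)"`, and the file removed only afterwards.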

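Run the job again and you can see:

The job can also be stopped manually:

But at this point the USDP Flink Web UI still shows nothing:

It takes a while before the execution result appears:

Open-source components: one pitfall at every step. You are either filling a pit or on the way to fill one.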

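Starting with Flink 1.15, Java 8 support is deprecated and Java 11 is the recommended runtime, so although JDK 1.8 still runs, Flink 1.14 is the last release where it is not deprecated. Perhaps newer collectors such as ZGC (still experimental in JDK 11) are part of the appeal? Times always move forward.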

Please credit the source when reposting: https://lizhiyong.blog.csdn.net/article/details/128474711
