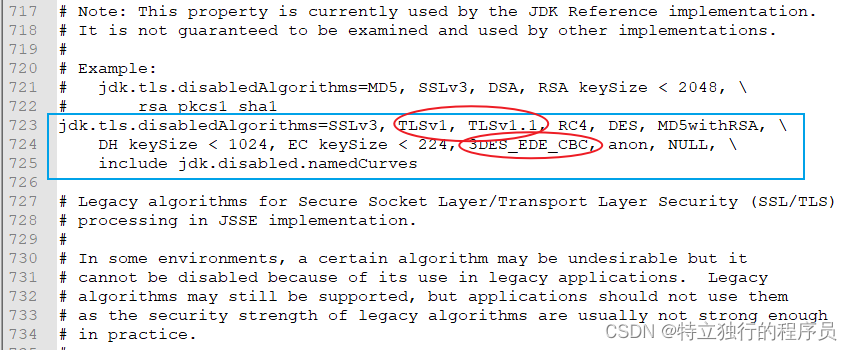

一、现象描述

使用Mac电脑本地启动spring-kakfa消费不到Kafka的消息,监控消费组的消息偏移量发现存在Lag的消息,但是本地客户端就是拉取不到,通过部署到公司k8s容器上消息却能正常消费!

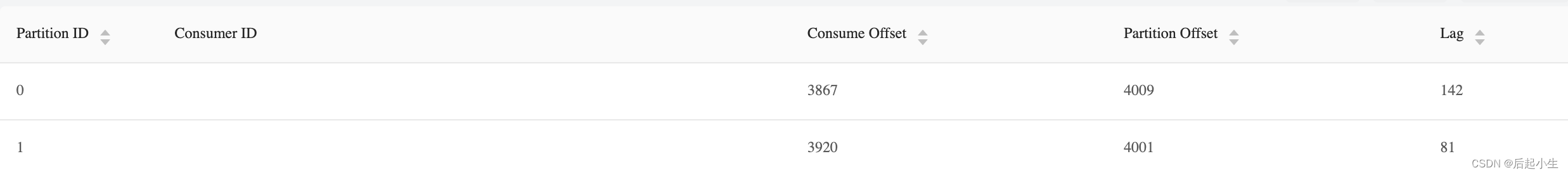

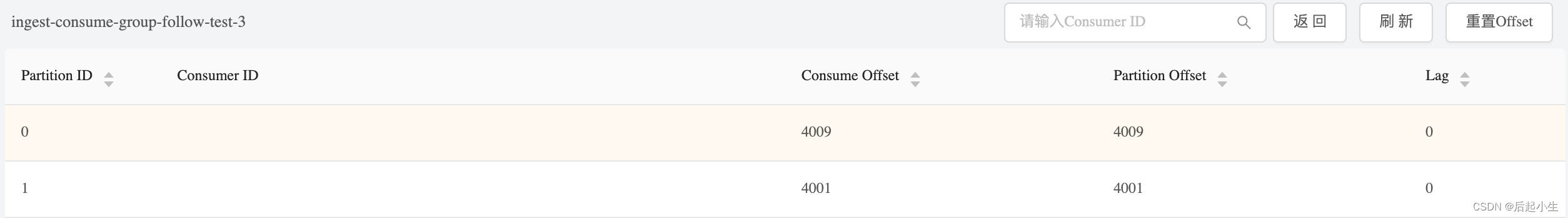

本地启动的服务消费组监控

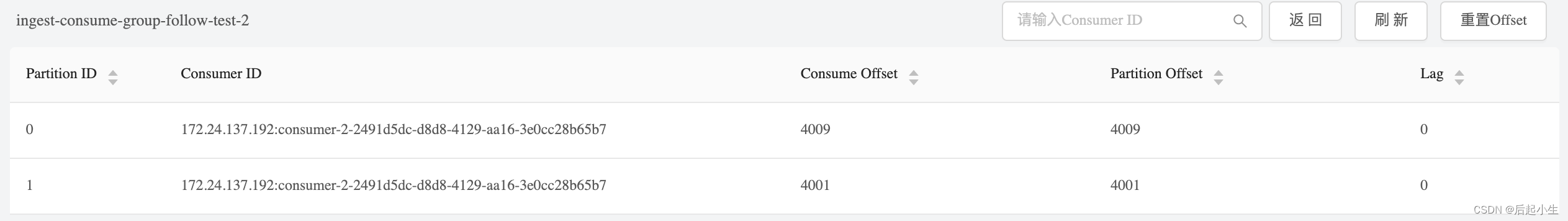

公司k8s容器服务消费组监控

二、环境信息

Spring Kafka版本: 2.1.13.RELEASE

Kafka Client版本: 1.0.2

Local JDK版本: Zulu 8.60.0.21-CA-macos-aarch64

K8s JDK版本: Oracle 1.8.0_202-b08

三、排查过程

-

猜测是JDK版本或者JDK 对 Apple Silicon芯片兼容问题

-

Debug跟踪了KafkaConsumer poll过程,并没有发现任何异常,轮询拉取的线程正常循环执行,只是每次都拉取到 records 为0条。

-

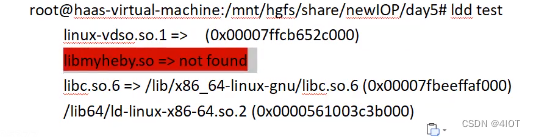

决定调整kafka 日志级别看下心跳是否正常,居然发现了有异常抛出,看到是snappy相关类NotClassFound

SLF4J: Failed toString() invocation on an object of type [org.apache.kafka.common.protocol.types.Struct]

Reported exception:

java.lang.NoClassDefFoundError: Could not initialize class org.xerial.snappy.Snappy

at org.xerial.snappy.SnappyInputStream.hasNextChunk(SnappyInputStream.java:435)

at org.xerial.snappy.SnappyInputStream.read(SnappyInputStream.java:466)

at java.io.DataInputStream.readByte(DataInputStream.java:265)

at org.apache.kafka.common.utils.ByteUtils.readVarint(ByteUtils.java:168)

at org.apache.kafka.common.record.DefaultRecord.readFrom(DefaultRecord.java:292)

at org.apache.kafka.common.record.DefaultRecordBatch$1.readNext(DefaultRecordBatch.java:264)

at org.apache.kafka.common.record.DefaultRecordBatch$RecordIterator.next(DefaultRecordBatch.java:563)

at org.apache.kafka.common.record.DefaultRecordBatch$RecordIterator.next(DefaultRecordBatch.java:532)

at org.apache.kafka.common.record.MemoryRecords.toString(MemoryRecords.java:292)

at java.lang.String.valueOf(String.java:2994)

at java.lang.StringBuilder.append(StringBuilder.java:136)

at org.apache.kafka.common.protocol.types.Struct.toString(Struct.java:390)

at java.lang.String.valueOf(String.java:2994)

at java.lang.StringBuilder.append(StringBuilder.java:136)

at org.apache.kafka.common.protocol.types.Struct.toString(Struct.java:384)

at java.lang.String.valueOf(String.java:2994)

at java.lang.StringBuilder.append(StringBuilder.java:136)

at org.apache.kafka.common.protocol.types.Struct.toString(Struct.java:384)

at org.slf4j.helpers.MessageFormatter.safeObjectAppend(MessageFormatter.java:299)

at org.slf4j.helpers.MessageFormatter.deeplyAppendParameter(MessageFormatter.java:271)

at org.slf4j.helpers.MessageFormatter.arrayFormat(MessageFormatter.java:233)

at org.slf4j.helpers.MessageFormatter.arrayFormat(MessageFormatter.java:173)

at ch.qos.logback.classic.spi.LoggingEvent.getFormattedMessage(LoggingEvent.java:293)

at ch.qos.logback.classic.spi.LoggingEvent.prepareForDeferredProcessing(LoggingEvent.java:206)

at ch.qos.logback.core.OutputStreamAppender.subAppend(OutputStreamAppender.java:223)

at ch.qos.logback.core.OutputStreamAppender.append(OutputStreamAppender.java:102)

at ch.qos.logback.core.UnsynchronizedAppenderBase.doAppend(UnsynchronizedAppenderBase.java:84)

at ch.qos.logback.core.spi.AppenderAttachableImpl.appendLoopOnAppenders(AppenderAttachableImpl.java:51)

at ch.qos.logback.classic.Logger.appendLoopOnAppenders(Logger.java:270)

at ch.qos.logback.classic.Logger.callAppenders(Logger.java:257)

at ch.qos.logback.classic.Logger.buildLoggingEventAndAppend(Logger.java:421)

at ch.qos.logback.classic.Logger.filterAndLog_0_Or3Plus(Logger.java:383)

at ch.qos.logback.classic.Logger.trace(Logger.java:437)

at org.apache.kafka.common.utils.LogContext$KafkaLogger.trace(LogContext.java:135)

at org.apache.kafka.clients.NetworkClient.handleCompletedReceives(NetworkClient.java:689)

at org.apache.kafka.clients.NetworkClient.poll(NetworkClient.java:469)

at org.apache.kafka.clients.consumer.internals.ConsumerNetworkClient.poll(ConsumerNetworkClient.java:258)

at org.apache.kafka.clients.consumer.internals.ConsumerNetworkClient.pollNoWakeup(ConsumerNetworkClient.java:297)

at org.apache.kafka.clients.consumer.internals.AbstractCoordinator$HeartbeatThread.run(AbstractCoordinator.java:948)

[2023-09-15 14:02:27.248]^^A[TID: N/A]^^A[kafka-coordinator-heartbeat-thread | ingest-consume-group-follow-test-4]^^ATRACE^^Aorg.apache.kafka.clients.NetworkClient^^A[Consumer clientId=consumer-1, groupId=ingest-consume-group-follow-test-4] Completed receive from node 1 for FETCH with correlation id 15, received [FAILED toString()]

-

如果了解 snappy-java这个依赖包的话,到这里就对拉取不到消息原因猜测的八九不离十了,因为 Kafka 服务端使用 snappy对息做了压缩并序列化为二进制进行传输,如果客户端在对消息的解压与反序列化过程中抛出异常,那么自然就拉取不到消息。

-

接着,解决一下snappy-java包的兼容问题,通过验证升级版本可以解决此问题。

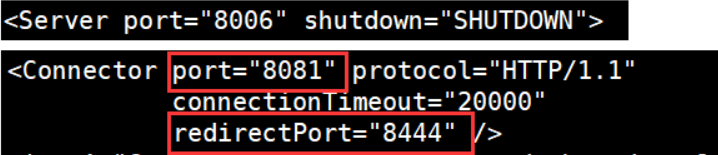

排除kafka-client包中 snappy-java v1.1.4版本依赖

<!-- spring-kafka -->

<dependency>

<groupId>org.springframework.kafka</groupId>

<artifactId>spring-kafka</artifactId>

<exclusions>

<!-- 排除 snappy-java 1.1.4 版本 -->

<exclusion>

<groupId>org.xerial.snappy</groupId>

<artifactId>snappy-java</artifactId>

</exclusion>

</exclusions>

</dependency>

- 再引入高版本v1.1.8.4的依赖包

<dependency>

<groupId>org.xerial.snappy</groupId>

<artifactId>snappy-java</artifactId>

<version>1.1.8.4</version>

<scope>compile</scope>

</dependency>

- 重新编译启动spring kafka客户端程序,消费问题解决~

四、疑问解答

- 为什么Kafka Consumer poll消息过程没有异常抛出且可以正常运行?

答:待补充 - 为什么调整日志级别为Trace才看到异常日志抛出?

答:待补充

![[maven] scopes 管理 profile 测试覆盖率](https://img-blog.csdnimg.cn/3379879de5154a1da1e6033ad9eb8f42.png)